-

Install Pi-hole on your Mac in 5 minutes

Adblockers: I assume a majority of my audience uses them, such as uBlock Origin, 1Blocker, Ghostery, and so on. The problem is that these rely on browser extensions, so how do I block ads on my Sony TV or make sure my Hue lights aren't spying on me?

Well, we have an answer: Pi-hole. As the name implies, Pi-hole is a network-wide ad blocker originally designed to run on a Raspberry Pi, although we can run it almost anywhere. I'll cover setting it up on a Mac first and then on a few other devices, and it's really easy to set up. Like, we are talking about 5 minutes.

Pi-hole blocks pesky advertisements and data harvesting by replacing your domain name server with your own self-hosted one that intercepts DNS requests before passing them on to another DNS server. To break that down into plain speak: when you type in "YouTube.com," a domain name server works like a phone book, where you look up a name and get back a number. Then again, I now realize my younger audience probably hasn't ever seen a phone book... I think I have a better analogy: it's like a digital map. Ask it where Jacksonville, Oregon is, and it returns the latitude and longitude from a database of all the cities in the world. DNS works the same way: it maps every domain name to the IP addresses associated with it.

Pi-hole maintains lists of known advertising and tracking domains. If a request asks for a domain on the blocklist, it returns a null or fake address, which prevents the advertisement or tracking script from loading.
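To make the blocking step concrete, here's a minimal Python sketch of the decision a DNS sinkhole makes for each query. The blocklist entries, the `fake_upstream` resolver, and the 203.0.113.10 address (a reserved documentation IP) are hypothetical stand-ins. Real Pi-hole also handles caching, regex and wildcard rules, and IPv6; this sketch only models exact-match blocklist entries.

```python
from typing import Callable

SINKHOLE_IPV4 = "0.0.0.0"  # the "null" answer a blocked domain receives


def resolve(domain: str, blocklist: set[str], upstream: Callable[[str], str]) -> str:
    """Answer a DNS A query the way a sinkhole would (exact-match blocklist only)."""
    name = domain.lower().rstrip(".")  # DNS names are case-insensitive; drop the trailing root dot
    if name in blocklist:
        return SINKHOLE_IPV4  # blocked: the ad or tracker has nowhere to load from
    return upstream(name)  # allowed: pass the question on to the upstream DNS server


# Hypothetical blocklist and upstream, for illustration only.
BLOCKLIST = {"ads.example.com", "tracker.example.net"}
FAKE_RECORDS = {"www.example.com": "203.0.113.10"}


def fake_upstream(name: str) -> str:
    return FAKE_RECORDS[name]


if __name__ == "__main__":
    print(resolve("Ads.Example.com.", BLOCKLIST, fake_upstream))  # 0.0.0.0
    print(resolve("www.example.com", BLOCKLIST, fake_upstream))   # 203.0.113.10
```

In a real setup, the upstream would be a resolver such as Cloudflare's 1.1.1.1 from the Docker command later in this post.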

This approach is awesome because it's platform agnostic. The catch is that you have to manually configure your devices, or your home network, to use the Pi-hole instead of a regular DNS server.

Pi-hole has a nice, easy-to-use interface that also makes it easy to whitelist sites that get blocked by mistake.

The Tutorial

I have a MacBook 2017, and like all Intel Macs, it'll soon be unable to run modern macOS, even with OpenCore. The MacBook 2017 is an oddball model. I love this machine, but it's pretty underpowered, so there isn't a huge use case for it. It does have one thing that makes it exceptionally attractive for this job: low power draw. This guy can only draw 29W max and generally draws less than 10W, even with the display on. Plus, its CPU is positively monstrous compared to the CPUs found in Raspberry Pis.

If you don't have an old Mac, I suggest getting a Raspberry Pi Zero 2 W, as they're under $25, and the official Raspberry Pi website has an excellent tutorial.

Step 1: We need Docker. Grab it from the official site. Docker is a utility that lets you run containers; think of them as micro virtual machines. Download it and install it. Docker has Linux and Windows versions as well, and I'll touch on Linux later using two different NAS systems.

Step 2: Run the following command. It's also available as a GitHub Gist, embedded at the bottom.

```bash
docker run -d --name pihole \
  -e TZ=America/Los_Angeles \
  -e FTLCONF_webserver_api_password=MakeSureYouChangeThis \
  -e FTLCONF_dns_upstreams='1.1.1.1;1.0.0.1' \
  -e FTLCONF_dns_listeningMode=all \
  -p 80:80 -p 53:53/tcp -p 53:53/udp -p 443:443 \
  -v ~/pihole/:/etc/pihole/ \
  --dns=127.0.0.1 --dns=1.1.1.1 \
  --cap-add=NET_ADMIN \
  --restart=unless-stopped \
  pihole/pihole:latest
```

What each Docker setting does:

- -d: Runs the container in detached mode (in the background)

- --name pihole: Names the container "pihole" for easy reference

- -e TZ=America/Los_Angeles: Sets the timezone. Other examples: America/New_York, Europe/London, Asia/Tokyo, Australia/Sydney. Find your timezone on Wikipedia's TZ database list

- -e FTLCONF_webserver_api_password=MakeSureYouChangeThis: Sets the admin password for the web interface (change this!)

- -e FTLCONF_dns_upstreams='1.1.1.1;1.0.0.1': Sets upstream DNS servers (Cloudflare in this case)

- -e FTLCONF_dns_listeningMode=all: Allows Pi-hole to listen on all network interfaces

- -p 80:80 -p 53:53/tcp -p 53:53/udp -p 443:443: Maps ports from host to container (web interface on 80/443, DNS on 53)

- -v ~/pihole/:/etc/pihole/: Mounts a local directory to store Pi-hole configuration and data

- --dns=127.0.0.1 --dns=1.1.1.1: Sets DNS servers for the container itself

- --cap-add=NET_ADMIN: Gives the container network administration capabilities

- --restart=unless-stopped: Automatically restarts the container unless manually stopped

- pihole/pihole:latest: The Docker image to use (Pi-hole's official image)
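If you'd rather script Step 2 than paste a long backslash-continued command, here's a hedged Python sketch that assembles the same docker run arguments as a list and hands them to subprocess. The helper names `pihole_run_args` and `launch` are my own, hypothetical choices; the flags are copied from the command above, and it assumes Docker is installed and on your PATH. Passing a list skips the shell entirely, so the semicolon in the upstream list no longer needs quotes, but the `~` has to be expanded by hand.

```python
import os
import subprocess


def pihole_run_args(password: str,
                    tz: str = "America/Los_Angeles",
                    upstreams: tuple[str, ...] = ("1.1.1.1", "1.0.0.1"),
                    data_dir: str = "~/pihole/") -> list[str]:
    """Build the Step 2 `docker run` command as an argv list (hypothetical helper)."""
    host_dir = os.path.expanduser(data_dir)  # a shell expands ~ for you; subprocess does not
    return [
        "docker", "run", "-d", "--name", "pihole",
        "-e", f"TZ={tz}",
        "-e", f"FTLCONF_webserver_api_password={password}",
        # No quoting needed: without a shell, ';' is just part of the value
        "-e", "FTLCONF_dns_upstreams=" + ";".join(upstreams),
        "-e", "FTLCONF_dns_listeningMode=all",
        "-p", "80:80", "-p", "53:53/tcp", "-p", "53:53/udp", "-p", "443:443",
        "-v", f"{host_dir}:/etc/pihole/",
        "--dns=127.0.0.1", "--dns=1.1.1.1",
        "--cap-add=NET_ADMIN",
        "--restart=unless-stopped",
        "pihole/pihole:latest",
    ]


def launch(password: str) -> None:
    """Start the container; raises CalledProcessError if docker exits non-zero."""
    subprocess.run(pihole_run_args(password), check=True)
```

For example, `launch(os.environ["PIHOLE_PASSWORD"])` reads the password from an environment variable of your choosing (that variable name is hypothetical) instead of hard-coding it.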

This will go fast, as this project is very lean.

Step 3: Go to http://127.0.0.1/ and log in with your password to confirm it's working. You should also see the pihole container running in Docker.

Step 4: On your Mac, change your DNS to 127.0.0.1.

This is done in System Settings (System Preferences on older releases). Apple's documentation covers how to do it on everything from High Sierra to the current version.
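To check from code that the Pi-hole is actually answering on port 53, here's a minimal Python sketch that uses only the standard library. It hand-encodes a DNS A-record query in the RFC 1035 wire format, sends it over UDP to 127.0.0.1, and returns the first IPv4 address in the reply. It assumes Pi-hole's default behavior of answering blocked domains with 0.0.0.0 and ignores TCP fallback and truncated replies; the function names are my own. dig or nslookup in the Terminal will do the same job.

```python
import random
import socket
import struct
from typing import Optional


def build_query(domain: str, qid: int) -> bytes:
    """Encode a DNS query for an A record (RFC 1035 wire format)."""
    # Header: ID, flags (0x0100 = recursion desired), 1 question, 0 other records
    header = struct.pack(">HHHHHH", qid, 0x0100, 1, 0, 0, 0)
    # QNAME: each label prefixed by its length, terminated by a zero byte
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in domain.rstrip(".").split(".")
    ) + b"\x00"
    return header + qname + struct.pack(">HH", 1, 1)  # QTYPE=A, QCLASS=IN


def _skip_name(msg: bytes, offset: int) -> int:
    """Return the offset just past a (possibly compressed) domain name."""
    while True:
        length = msg[offset]
        if length == 0:
            return offset + 1
        if length & 0xC0 == 0xC0:  # compression pointer occupies two bytes
            return offset + 2
        offset += 1 + length


def first_a_record(response: bytes) -> Optional[str]:
    """Return the first IPv4 address in the answer section, or None."""
    _, _, qdcount, ancount, _, _ = struct.unpack(">HHHHHH", response[:12])
    offset = 12
    for _ in range(qdcount):  # skip the echoed question(s): name + QTYPE + QCLASS
        offset = _skip_name(response, offset) + 4
    for _ in range(ancount):
        offset = _skip_name(response, offset)
        rtype, _, _, rdlength = struct.unpack(">HHIH", response[offset:offset + 10])
        offset += 10
        if rtype == 1 and rdlength == 4:  # an A record
            return socket.inet_ntoa(response[offset:offset + 4])
        offset += rdlength  # skip CNAMEs and anything else
    return None


def lookup(domain: str, server: str = "127.0.0.1", port: int = 53,
           timeout: float = 2.0) -> Optional[str]:
    """Send one UDP query to the Pi-hole and return the first A record it answers with."""
    qid = random.randint(0, 0xFFFF)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(build_query(domain, qid), (server, port))
        response, _ = sock.recvfrom(512)
    if struct.unpack(">H", response[:2])[0] != qid:
        raise ValueError("response ID does not match the query")
    return first_a_record(response)
```

With the container running, `lookup("www.example.com")` should return a normal address, while a domain on one of your blocklists should come back as "0.0.0.0".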

Step 5: Get your Mac's local IP. In your network settings, select your network and click Details, then click TCP/IP and make a note of your IP address. Alternatively, you can grab it via the Terminal. On most Mac laptops, en0 is the Wi-Fi interface:

```bash
ipconfig getifaddr en0
```

However, if you have both wired internet and Wi-Fi, your active connection could be on a different interface. Use the following and make note of the inet addresses:

```bash
ifconfig | grep "inet " | grep -v 127.0.0.1
```

You can assign this IP as the DNS server on any device on your internal network, be it a Roku, a smart appliance, or another computer. Better yet, if you have a router, you can set its DNS server to your Mac's IP address. This way, all devices on your network will use the Pi-hole as their DNS server.
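If you'd rather not guess which interface is active, this small Python sketch asks the OS which local address it would use to reach the outside world, which is usually the right answer when both Wi-Fi and Ethernet are connected. It's a common trick rather than an official API: connecting a UDP socket sends no packets, and 192.0.2.1 is a reserved documentation address chosen precisely because nothing needs to be listening there. The function name is my own.

```python
import ipaddress
import socket


def local_ipv4(probe: str = "192.0.2.1") -> str:
    """Best-guess LAN IPv4 address of this machine.

    Connecting a UDP socket sends nothing; it only makes the OS pick the
    local address it would route through to reach `probe`.
    """
    try:
        with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
            sock.connect((probe, 9))
            return sock.getsockname()[0]
    except OSError:
        return "127.0.0.1"  # no route at all (e.g. offline): fall back to loopback


if __name__ == "__main__":
    addr = ipaddress.IPv4Address(local_ipv4())
    print(addr, "(private)" if addr.is_private else "(public)")
```

The address it prints should match what System Settings shows under TCP/IP for your active connection.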

From my router, I can configure my DNS to use the Pi-hole: just point the DNS setting at the Mac. Now, if you're on DHCP, which almost everyone is, your router likely has a setting to reserve an IP for a device. DHCP leases out IPs, so they can change. That means a computer might be 192.168.10.105 one day and, after reconnecting or a router reboot, be assigned a different IP, such as 192.168.10.124. If your DNS is set to the old address, that's a problem, as you won't have a working DNS server until it's manually changed. Reserving an IP prevents a device from ever getting a different IP on the local network.
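Here's a toy Python model of why the reservation matters. It is not how any particular router implements DHCP (real servers renew leases, honor requested addresses, and expire leases on timers); the `ToyDhcpPool` class and its hand-out-the-first-free-address policy are hypothetical simplifications. When devices reconnect in a different order after a reboot, unreserved devices can swap addresses, but a reserved MAC address always gets the same IP.

```python
import ipaddress
from typing import Optional


class ToyDhcpPool:
    """Toy DHCP allocator (hypothetical): reserved MACs get a fixed IP, others the next free one."""

    def __init__(self, first: str, last: str, reservations: Optional[dict[str, str]] = None):
        self.reservations = {mac: ipaddress.IPv4Address(ip)
                             for mac, ip in (reservations or {}).items()}
        reserved = set(self.reservations.values())
        start, end = int(ipaddress.IPv4Address(first)), int(ipaddress.IPv4Address(last))
        # Reserved addresses are never handed out dynamically.
        self.free = [ipaddress.IPv4Address(n) for n in range(start, end + 1)
                     if ipaddress.IPv4Address(n) not in reserved]
        self.leases: dict[str, ipaddress.IPv4Address] = {}

    def request(self, mac: str) -> ipaddress.IPv4Address:
        if mac in self.reservations:
            return self.reservations[mac]  # reservation wins every time
        if mac not in self.leases:
            if not self.free:
                raise RuntimeError("address pool exhausted")
            self.leases[mac] = self.free.pop(0)  # first come, first served
        return self.leases[mac]


def boot_order(pool: ToyDhcpPool, macs: list[str]) -> dict[str, str]:
    """Simulate devices reconnecting, in the given order, after a router reboot."""
    return {mac: str(pool.request(mac)) for mac in macs}
```

Running `boot_order` twice on fresh pools with the devices in swapped order shows an unreserved Mac moving from .100 to .101, while a Mac reserved at 192.168.10.105 stays put.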

By pointing your home network at your Mac’s Pi-hole, you’ll enjoy ad-free browsing on any device. Go ahead—reserve that Mac’s IP in your router, and reclaim your bandwidth today.

You don't need a Mac

Pi-hole can run almost anywhere thanks to its lightweight nature, having been designed for a Raspberry Pi. This means you can set it up on any Linux machine, Windows, a NAS, or even in a virtual machine on your existing hardware.

For example, if you have a Synology or Ugreen NAS, you can run Pi-hole in Docker. The process is similar to the Mac setup, but you'll use your NAS's Docker interface (Container Manager on Synology) to create and manage your containers.

Instead of using the docker command, you'll want to create a container using a docker-compose file. This is a YAML file that defines the services, networks, and volumes for your application. Here's an example of a docker-compose file for Pi-hole:

```yaml
version: '3.8'
services:
  pihole:
    container_name: pihole
    image: pihole/pihole:latest
    ports:
      # DNS ports (using alternative ports to avoid conflicts)
      - "1053:53/tcp"
      - "1053:53/udp"
      # HTTP port (using alternative port to avoid DSM conflict)
      - "8080:80/tcp"
      # HTTPS port (using alternative port to avoid DSM conflict)
      - "8443:443/tcp"
    environment:
      # Timezone
      TZ: 'America/Los_Angeles'
      # Web interface password from your original command
      FTLCONF_webserver_api_password: 'MakeSureYouChangeThis'
      # DNS upstreams (DNS servers you'd like to use)
      FTLCONF_dns_upstreams: '1.1.1.1;1.0.0.1'
      # DNS listening mode
      FTLCONF_dns_listeningMode: 'all'
    volumes:
      # Volume mapping, using the full NAS path
      - '/volume1/docker/pihole:/etc/pihole'
    dns:
      # DNS settings from your original command
      - 127.0.0.1
      - 1.1.1.1
    cap_add:
      # Capabilities for network administration
      - NET_ADMIN
    restart: unless-stopped
```

This is a basic example, and you may need to adjust the configuration based on your specific setup and requirements. In particular, routers and most devices only send DNS queries to port 53, so with DNS mapped to 1053 they won't be able to use the Pi-hole as-is; if nothing else on your NAS is using port 53, map "53:53/tcp" and "53:53/udp" instead. Once your docker-compose file is ready, you can use the Docker interface on your NAS to deploy the Pi-hole container. As a pro tip, AI is excellent for interpreting and diagnosing issues, be it Claude, ChatGPT, or Gemini.

GitHub Gist versions

-

Moving away from the Mac Pro: Sonnet Echo II DV Review

In the evolving landscape of professional computing, the traditional workstation is radically transforming. For years, the Mac Pro represented the pinnacle of expandability in Apple's ecosystem—a tower of power where professionals could add specialized PCIe cards for everything from video capture to audio processing. Yet Apple Silicon has rewritten the rules, delivering astonishing performance in smaller packages while leaving professional users with a critical question: what about expansion?

For the past several years, I've dedicated much of my content to the Mac Pro lineup, writing guides and producing videos. However, after my 2019 Mac Pro, I'm likely not buying another Mac Pro. The single Mac Pro entry in the Apple Silicon era has been woefully underwhelming, offering PCIe slots for a $3,000 premium over an equivalent M2 Ultra Mac Studio, slots that can only be used for expansion cards. YouTuber Luke Miani aptly described it as "Expandable, not upgradeable."

But what if there was a way to bring true PCIe expansion to any Mac? Thunderbolt 5 now enables PCIe 4.0 x4 speeds over an external connection. For professionals in video production, audio engineering, and scientific computing who rely on specialized PCIe hardware, this opens intriguing possibilities: could a Mac Studio or even a Mac mini paired with the right expansion chassis replace a Mac Pro?

This is where the Sonnet Echo II DV enters the picture: a dual-slot Thunderbolt PCIe expansion chassis with a unique twist. Each PCIe slot gets its own dedicated Thunderbolt bus, an approach that promises to eliminate the bandwidth bottlenecks that have historically plagued external expansion solutions. This design could be a game-changer for professionals who need to run bandwidth-intensive cards like the Blackmagic DeckLink 8K Pro or AJA KONA 5 capture cards alongside high-speed storage.

Sonnet Echo II DV

Disclosure: Sonnet provided a review unit, but I was not compensated or sponsored, and I maintain complete editorial control.

Feature list

- Dual PCIe slots with dedicated Thunderbolt buses

- Each slot supports Thunderbolt pass-through for daisy-chaining

- No power switch needed—it powers up/down automatically when Thunderbolt cables are connected

- Built-in 400-watt power supply with two auxiliary power cables (75 watts each)

- Can charge power-hungry laptops like my 16-inch MacBook Pro (M4 Pro) via Thunderbolt

- Features dual Noctua fans—the top shelf of PC cooling—rated at just 17 dBA

- All-metal construction with no cheap plastic feel

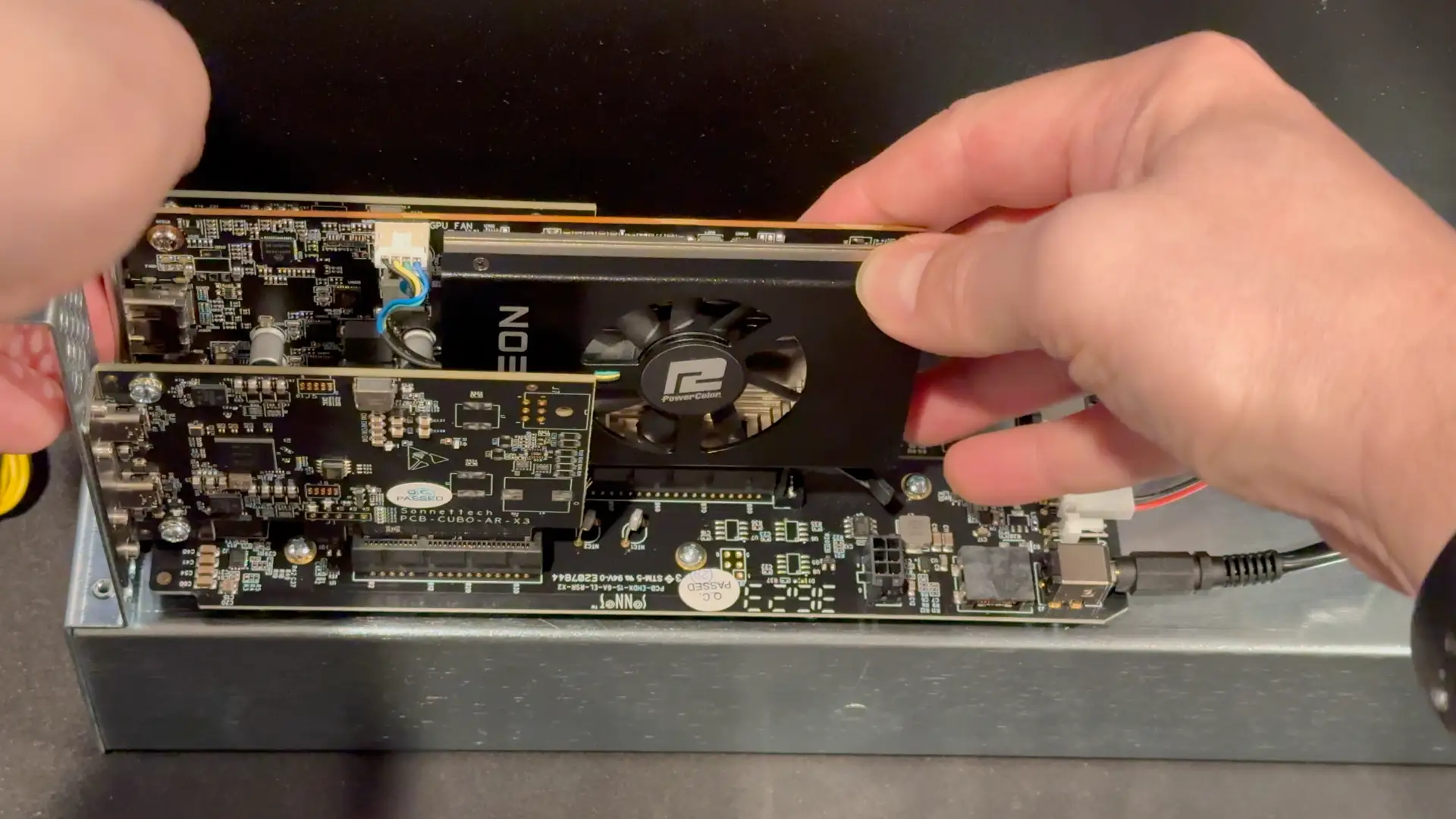

The Sonnet Echo II DV is a dual PCIe Thunderbolt enclosure with a unique advantage: each PCIe slot has its own dedicated Thunderbolt port. This means each card gets the full bandwidth of a complete Thunderbolt channel. Despite Sonnet explicitly stating that this case is not for GPUs, I still managed to wedge a single-slot GPU into it. It's not pretty, but it worked. You can also daisy-chain the two PCIe slots if you'd like a single-cable experience, and it can deliver 100W to charge a laptop. Alternatively, you can connect two computers to the Echo II DV, allowing each computer to access a single PCIe slot.

Thunderbolt technology offers PCIe connectivity over a cable, but with limitations. A single Thunderbolt 4 connection provides approximately 2,880 MB/s of PCIe bandwidth. When multiple PCIe cards share a single Thunderbolt bus (as in most expansion chassis), they must compete for this bandwidth, potentially creating bottlenecks.

By providing each slot with its own dedicated Thunderbolt bus, the Echo II DV ensures that both cards can simultaneously utilize full bandwidth—provided your computer has Thunderbolt ports on separate buses. All Apple Silicon Macs meet this requirement, with each Thunderbolt port getting its own PCIe lanes.
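The difference between a shared and a dedicated bus is easy to put in numbers. This Python sketch reuses the approximate 2,880 MB/s Thunderbolt figure from above and assumes, as a simplification, that a shared link is split in proportion to what each card asks for (real PCIe arbitration is more complex). The 2,500 MB/s per-card demand in the usage lines is a hypothetical example, not a measurement, and the function name is my own.

```python
LINK_MBPS = 2880  # approx. PCIe bandwidth of one Thunderbolt 3/4 link, per the figure above


def per_card_mbps(demands: list[float], dedicated: bool) -> list[float]:
    """Throughput each card gets when all of them run flat out at once.

    dedicated=True: every card has its own Thunderbolt link (Echo II DV style).
    dedicated=False: all cards share one link, split in proportion to demand
    (a simplifying assumption).
    """
    if dedicated:
        return [min(d, LINK_MBPS) for d in demands]
    total = sum(demands)
    if total <= LINK_MBPS:
        return list(demands)  # everything fits, so there's no contention
    return [d * LINK_MBPS / total for d in demands]


if __name__ == "__main__":
    two_ssds = [2500, 2500]  # hypothetical: two NVMe cards, each wanting 2,500 MB/s
    print("shared:   ", per_card_mbps(two_ssds, dedicated=False))  # [1440.0, 1440.0]
    print("dedicated:", per_card_mbps(two_ssds, dedicated=True))   # [2500, 2500]
```

With only one busy card, both layouts give the same result; the dedicated design only pays off when both slots are pushed at the same time.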

The Echo II DV is Thunderbolt 3/4 and not 5. However, it does feature a modular Thunderbolt design, which represents perhaps its most forward-thinking feature. The Thunderbolt interfaces are implemented as daughtercards, which Sonnet refers to as "Thunderbolt upgrade cards" on their website. When I contacted Sonnet about potential Thunderbolt 5 upgrades, they confirmed that it would indeed be possible to swap the card and avoid buying a whole new enclosure. Ironically, this makes the Thunderbolt enclosure more upgradeable than Apple Silicon Macs—a critical consideration for professionals making long-term investment decisions.

Inside is a modular design with two PCIe slots plus Cubo AR X3 Thunderbolt daughtercards.

Thunderbolt 5 tunnels PCIe 4.0, not PCIe 5.0, so a single Thunderbolt 5 port is roughly equivalent to a PCIe 4.0 x4 slot. While PCIe 5.0 offers double the theoretical bandwidth of PCIe 4.0, real-world storage gains tend to be much smaller than headline numbers suggest: according to Tom's Hardware metrics, the Samsung 990 Pro shows only about a 17% improvement in IOPS over the 980 Pro (47,419 vs. 40,580), and both of those are PCIe 4.0 drives. Of course, NVMe storage will improve and saturate PCIe 5.0 more over time, but as of writing, there are few practical applications where PCIe 4.0 speeds are prohibitive, let alone 3.0. All of this is a very roundabout way to say I don't see Thunderbolt 4 speeds as much of an issue. I hope a Thunderbolt 5 upgrade is around the corner, but for most users, it won't be a game changer.
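The generational math behind "roughly an x4 slot" and "double the bandwidth" is easy to check. This Python sketch computes theoretical x4 bandwidth from each generation's per-lane rate (8, 16, and 32 GT/s, all with 128b/130b encoding) and reproduces the roughly 17% IOPS figure quoted above. It ignores protocol overhead, so real-world throughput will be lower; the function name is my own.

```python
# Per-lane transfer rate in GT/s for each PCIe generation (one direction).
GT_PER_SEC = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING = 128 / 130  # PCIe 3.0 through 5.0 use 128b/130b line encoding


def pcie_gbytes(gen: int, lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s, before protocol overhead."""
    return GT_PER_SEC[gen] * ENCODING / 8 * lanes  # bits -> bytes


if __name__ == "__main__":
    for gen in (3, 4, 5):
        print(f"PCIe {gen}.0 x4: {pcie_gbytes(gen, 4):.2f} GB/s")
    # Tom's Hardware IOPS figures quoted above (990 Pro vs. 980 Pro)
    print(f"IOPS gain: {47_419 / 40_580 - 1:.1%}")
```

This prints about 3.94, 7.88, and 15.75 GB/s for x4 links, and an IOPS gain of 16.9%.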

Thunderbolt 3 vs. Thunderbolt 4

Thunderbolt 3 and Thunderbolt 4 are both high-speed connectivity standards developed by Intel, but they have some key differences. Thunderbolt 3 was introduced in 2015 and supports data transfer speeds of up to 40 Gbps, while Thunderbolt 4, introduced in 2020, maintains the same maximum speed but adds several enhancements.

One of the main differences is that Thunderbolt 4 requires USB4 support, which means it can work with a wider range of devices and peripherals. Thunderbolt 4 also adds support for hubs and docks with up to four Thunderbolt ports, while Thunderbolt 3 accessories were generally limited to daisy-chaining. Additionally, Thunderbolt 4 requires at least one computer port to support charging at up to 100W, something that was optional under Thunderbolt 3.

| Feature | Thunderbolt 3 | Thunderbolt 4 |
|---|---|---|
| Maximum Bandwidth | 40 Gbps | 40 Gbps |
| Display Support | Minimum: single 4K display; maximum: dual 4K @ 60Hz or single 5K | Minimum: dual 4K displays @ 60Hz or single 8K @ 30Hz |
| PCIe Data Transfer | 16 Gbps minimum | 32 Gbps minimum |
| Power Delivery | Optional 100W charging | Required 100W charging on at least one computer port |
| Security | DMA protection optional | Intel VT-d-based DMA protection required |
| Wake from Sleep | Not guaranteed | Required (PC can wake from sleep via connected Thunderbolt accessories) |
| Dock/Hub Support | Limited downstream ports | Support for docks with up to 4 Thunderbolt ports |
| USB4 Compatibility | Compatible | Compatible |
| Cable Length | Limited certified length options | Universal 40Gbps cables up to 2 meters |
| Connector Type | USB-C | USB-C |

For all intents and purposes, the Sonnet Echo II DV is a Thunderbolt 4 device, as most of the action for Thunderbolt 4 exists on the controller side of things. It supports daisy chaining and power delivery.

Performance: Real-World Testing

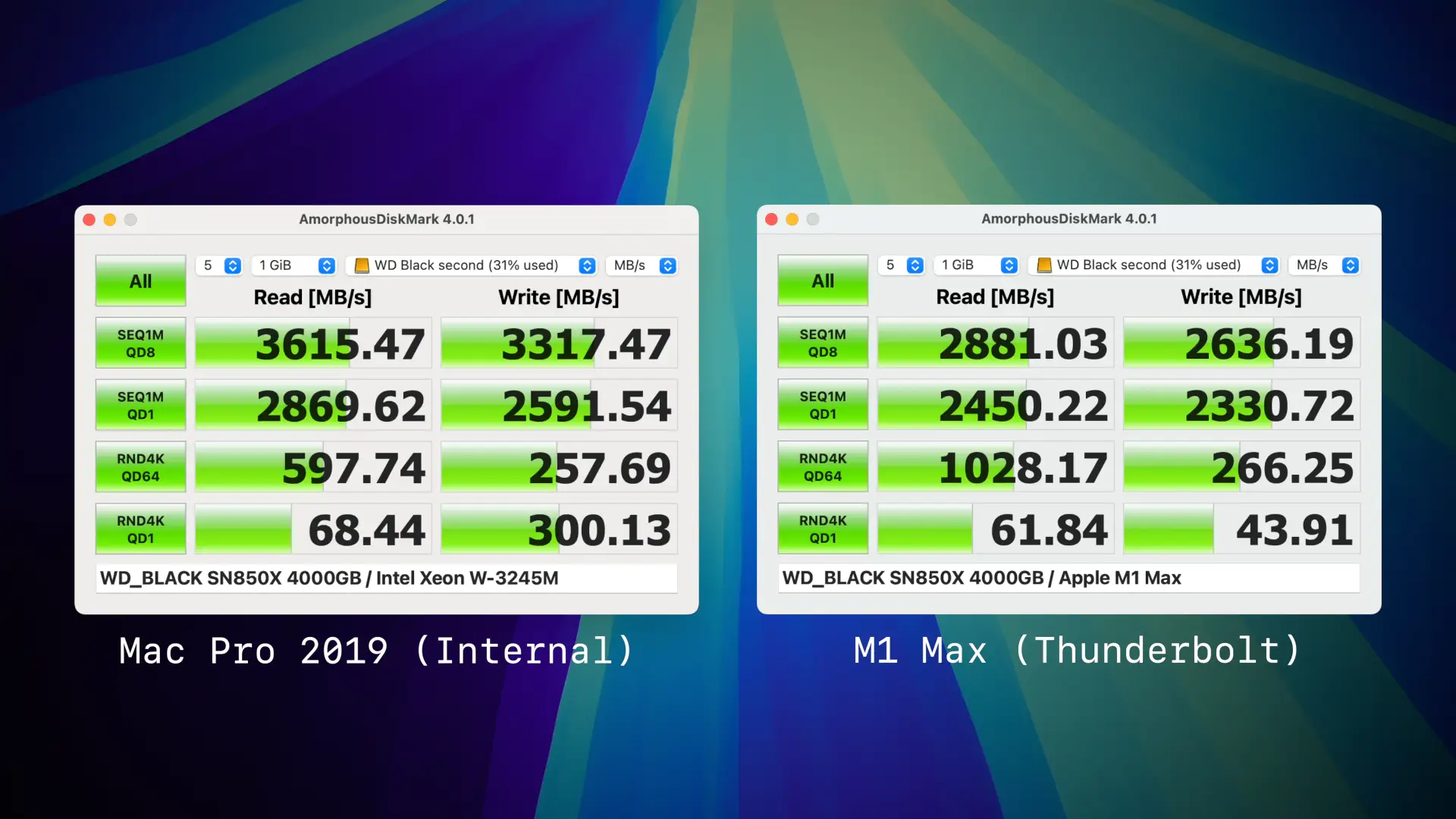

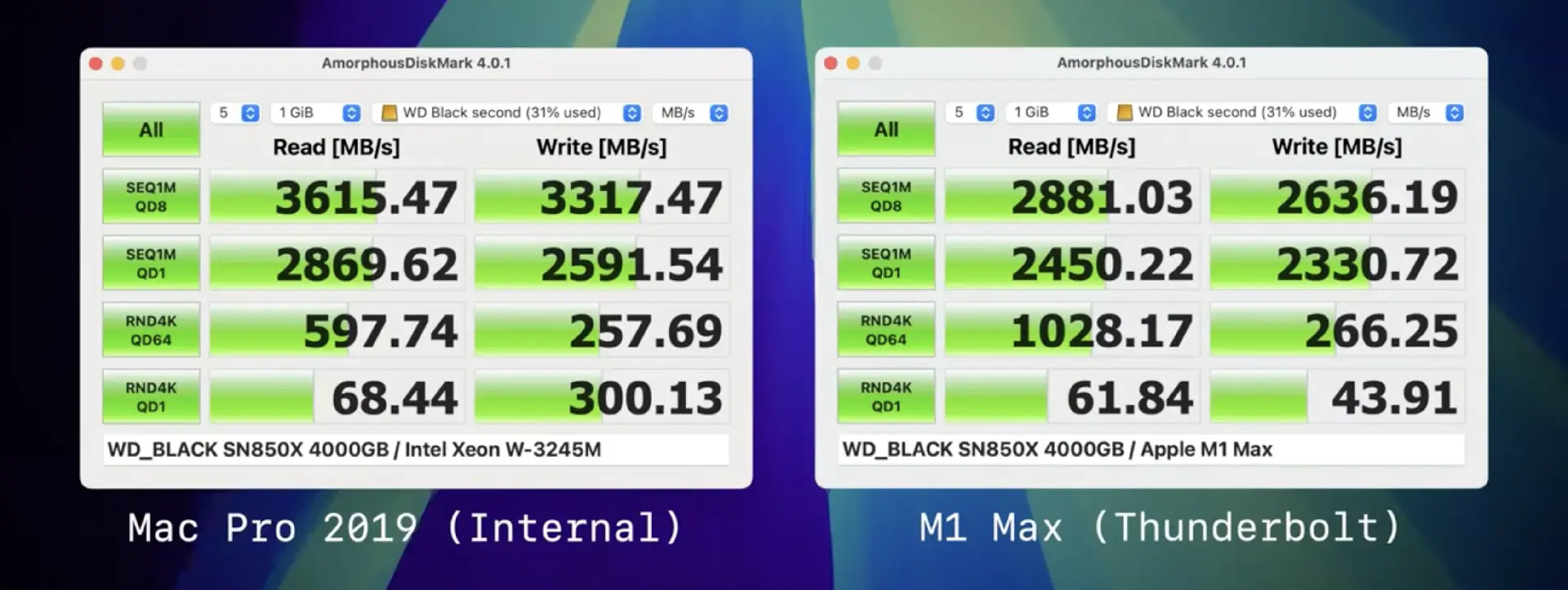

There's not a massive story when it comes to performance. Internal is faster than external, and that should not come as a surprise. Thunderbolt has higher protocol overhead: there's signal conversion between PCIe and Thunderbolt, plus added latency from the longer signal path. That said, it's in the ballpark of "close enough."

Comparing the Thunderbolt 5 M4 Pro against the Thunderbolt 4 M1 Max yielded virtually no performance difference, with the M1 Max performing fractionally better. AmorphousDiskMark tends to deliver inconsistent random results, so treat those numbers as a rough estimate rather than exact figures.

Being a Thunderbolt device, it's plug-and-play like USB. When I plugged it into my M1 Max, System Preferences showed six PCIe devices: four NVMe drives, two USB ports, and Ethernet from the McFiver card. For a second real-world test, over 10 Gigabit Ethernet, I was able to achieve 450 MB/s reads/writes to my Synology DS923+ via the McFiver, identical to my Mac Pro 2019. The limitation is the NAS and not the card: 10GbE tops out at 1,250 MB/s, something that was achievable even on Thunderbolt 2.

As previously mentioned, one of the wackier use cases the Echo II DV supports is connecting two computers. This works because each PCIe slot is literally an independent Thunderbolt device, complete with its own Thunderbolt controller, so each computer gets access to one PCIe slot. I don't think many people will use this feature, but it does work, and it could be useful for certain workflows.

One minor complaint is that the external cabling doesn't include a fused cable like the one on the Ivanky Thunderbolt dock, which makes connecting and disconnecting easier since it's as simple as plugging in a single cable. That said, Apple doesn't keep the orientation and spacing of its Thunderbolt ports uniform between laptops and desktops. It's an insignificant gripe, but as a laptop user, I've become pretty spoiled.

Not an eGPU case

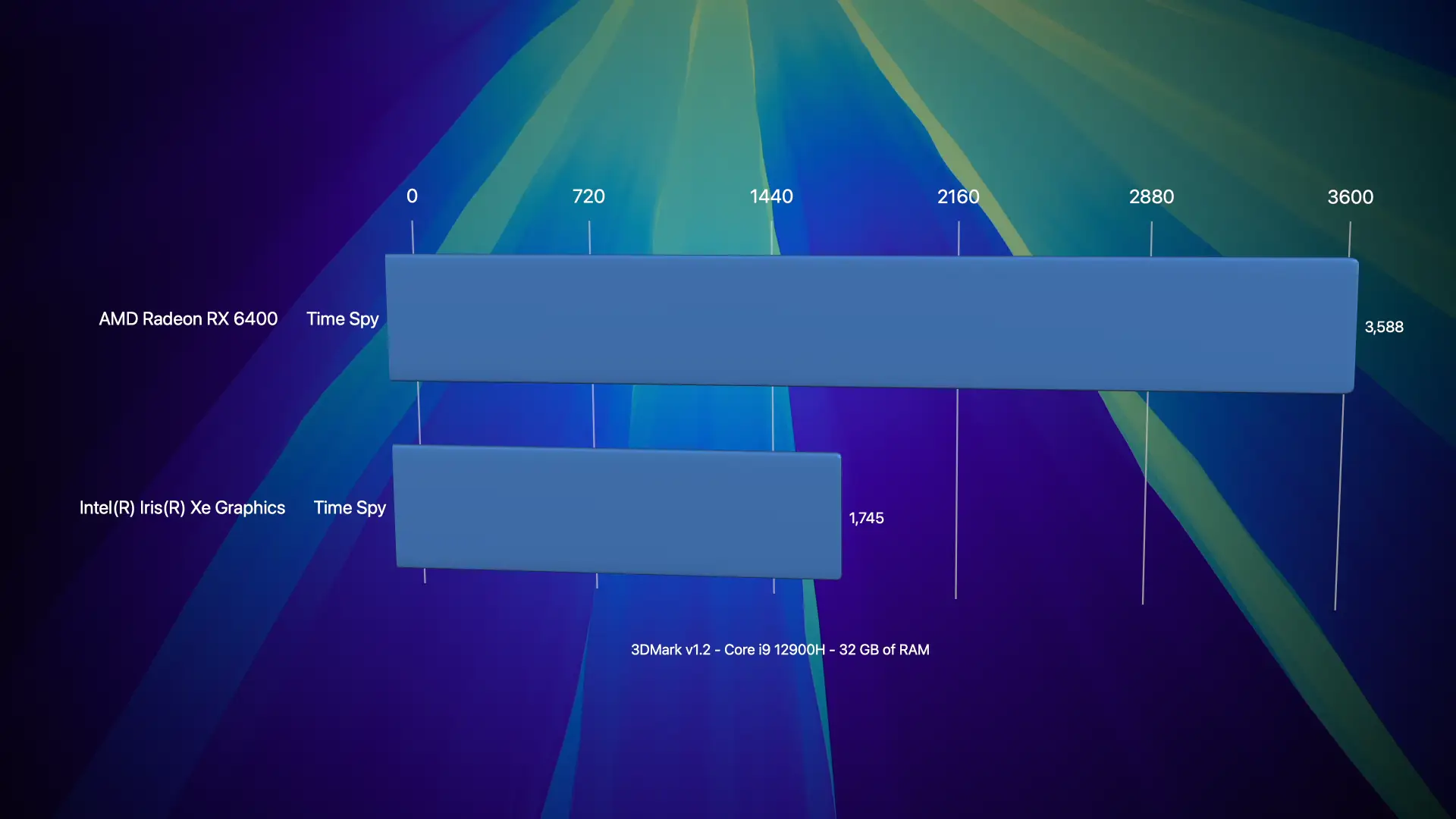

It should be abundantly clear that the II DV is not a GPU case, given its single-slot design, limited power capability, and cooling focused on low noise instead of maximum heat dissipation. However, this didn't stop me from trying. I was able to find a modern-ish single-slot GPU in the form of the AMD Radeon RX 6400. The card's specs are pretty abysmal: only 4 GB of VRAM, based on RDNA 2 with the processor and RAM clocked at around 2 GHz, a tiny 64-bit bus, and 7 TFLOPS of FP16, making it roughly as fast as a GeForce GTX 770 from 2013. However, it is bus-powered and single-slot.

macOS does not have drivers for this GPU, so I was limited to Windows testing. It performed moderately better than my Geekom Mini PC's very poor Intel Iris Xe.

So yes, you can jam a very shoddy GPU into this enclosure, but the sort of GPUs you can use are pretty abysmal. I wouldn't recommend it.

Market Alternatives: How the Echo II DV Compares

With several Thunderbolt expansion options available, it's important to understand how the Sonnet Echo II DV stacks up against alternatives. Here's a comparison of available solutions.

Sonnet's Own Product Line:

The Echo II DV positions itself as a premium offering in Sonnet's lineup. The price jump from the Echo Express III-D to the Echo II DV ($700 to $900) buys you dedicated Thunderbolt buses for each slot—a substantial advantage for bandwidth-intensive applications even though you get one fewer slot.

Sonnet Technologies

- Sonnet Echo Express III-D (three slots on a shared bus): $700

- Advantages: Three PCIe slots, lower price point

- Disadvantages: All slots share a single Thunderbolt bus, limiting combined bandwidth

- Sonnet Echo Express SEL (single PCIe slot): $300

- Advantages: Single slot

- Disadvantages: Single slot

OWC Solutions:

OWC's offerings tend to focus on storage expansion, with PCIe capabilities added as a secondary feature. None offer the dual Thunderbolt bus architecture that sets the Echo II DV apart.

- OWC Mercury Helios 3S ($399)

- Advantages: Lower price, includes a PCIe slot plus storage expansion bay

- Disadvantages: Single Thunderbolt bus

- OWC Flex 8 ($649)

- Advantages: Combines PCIe expansion with 8 storage bays

- Disadvantages: Single Thunderbolt bus, shared bandwidth

Razer

- Razer Core X ($399)

- Advantages: Designed for GPUs, includes 650W power supply

- Disadvantages: Single Thunderbolt bus, optimized for GPUs

This is by no means a comprehensive list, but alternatives exist to meet consumers' needs at various price points. The Echo II DV sits in the upper echelon of external enclosures; it's not a cheap solution by any stretch. Its stiffest competition comes from Sonnet itself: for the majority of users, I imagine the Echo Express III-D represents the best value if they're looking to port over as much flexibility as possible. It adds a third PCIe slot but relies on a shared bus. Most users rarely push more than one PCIe device at a time, and probably the only time they'd feel the limits is when transferring large files between SSDs (assuming both are in the enclosure).

Apple Mac Pro

Apple's Mac Pro starts at $6,999 and includes seven PCIe slots. However, these advantages come with significant caveats:

- The Mac Pro only comes with the M2 Ultra, which is now outdated compared to the M4 Pro/Max and M3 Ultra

- Internal slots don't support user-upgradable GPUs or CPU upgrades

- Total cost is significantly higher than a Mac Studio ($3,999) plus Echo II DV ($900)

Beyond the cost savings, the Mac Studio + Echo II DV route offers greater flexibility, as you can take your expansion cards to a future computer—something impossible with the Mac Pro's internal slots once the system is obsolete.

As of writing, due to Apple's very odd market positioning of the M4 Max and M3 Ultra, the M4 Max Mac Studio represents the better value for many users, as its CPU is faster in single-threaded and lightly threaded tasks. In fact, the two chips are only about 15% apart in CPU performance; granted, the M3 Ultra offers a much more powerful GPU and the ability to toss gobs of RAM into the system.

The $2k price jump probably isn't worth it for the majority of buyers, and the extra money could be spent on accessories like the Echo II DV to expand the storage well beyond Apple's meager offerings.

Conclusion: Rethinking Professional Mac Setups

After extensively testing the Sonnet Echo II DV, I've come to a conclusion that might have seemed heretical just a few years ago: for many professionals, the Mac Pro is no longer necessary.

The combination of Apple Silicon performance and external PCIe expansion creates a compelling alternative to the traditional workstation model. Pair a Mac Studio, MacBook Pro, or even a Mac mini or MacBook Air with the Echo II DV, and you get most of the benefits of the 2023 Apple Silicon Mac Pro at a steep discount. Sadly, outside of a few exotic PCIe cards, there isn't much point to PCIe now that Apple has sunset dGPUs. Apple's switch to unified memory has yielded some impressive results for applications like AI, as memory can be dynamically assigned as VRAM. However, in terms of raw GPU performance, the payoff hasn't materialized, and it plays deeply into Apple's hands when it comes to planned obsolescence.

-

Blog Updates

For the record, I like to keep a running log of the few under-the-hood updates I make to this site, on the rare chance someone actually is a regular reader. This time, I added JSON-LD and limited the index pages to 15 posts, as I have a habit of writing VERY long blog posts that can be extremely brutal on the homepage.

removing jQuery, stupid spam bot solution, complaining about AI, adding dark mode, or general changes

-

It's time to jailbreak your Audible library

Libation: Free Your Audiobooks from DRM Protection

If you're an audiobook enthusiast who uses Audible, you've likely run into the frustration of being unable to access your purchased content outside of Audible's ecosystem, or have even had content removed. While Audible offers a convenient service, its DRM protection limits how and where you can enjoy your audiobooks. Enter Libation, a powerful, open-source tool that lets you download and decrypt your Audible library, giving you true ownership of your purchases.

What is Libation?

Libation is a free, open-source application that helps you manage your Audible library and liberate your audiobooks from DRM restrictions. With Libation, you can download, decrypt, and convert your Audible content into standard formats like MP3 or M4B, allowing you to listen on any device or player of your choice.

Key Features of Libation

- Complete Library Management: View and organize your entire Audible library

- Batch Processing: Download and decrypt multiple books at once

- Format Options: Convert to MP3 or M4B with chapter information preserved

- Metadata Handling: Maintain all book details including cover art

- Cross-Platform: Available for Windows, macOS, and Linux

Installation Guide

Getting started with Libation is straightforward:

- Visit the Libation GitHub repository

- Download the latest release for your operating system

- Extract the downloaded file to a location of your choice

- Run the Libation executable

Note: Libation does not require installation in the traditional sense. It's a portable application that runs directly from the extracted folder.

Setting Up Libation

When you first launch Libation, you'll need to authenticate with your Audible account:

- Click on the "Login" button

- Enter your Audible/Amazon credentials

- Complete any verification steps if prompted

- Upon successful login, Libation will scan and display your Audible library

Privacy Note: Libation connects directly to Audible's servers using your credentials. Your login information is stored locally and securely on your device.

Using Libation to Liberate Your Audiobooks

Basic Usage

Once your library is loaded, you can:

- Select one or multiple books from your library

- Right-click and choose "Download and Decrypt" or click the dedicated button in the toolbar

- Specify your preferred output format (MP3 or M4B)

- Choose the destination folder for your decrypted audiobooks

- Click "Start" to begin the process

Advanced Options

Libation offers several customization options to fine-tune your experience:

- Audio Quality: Adjust bitrate settings for the output files

- Metadata Customization: Edit book details before conversion

- File Naming: Configure naming patterns for your decrypted files

- Chapter Handling: Choose how chapter information is preserved

To access these settings, navigate to the "Settings" tab within Libation.

Managing Your Liberated Collection

After decrypting your audiobooks, you can:

- Play them with any standard audio player (VLC, iTunes, Windows Media Player, etc.)

- Transfer them to portable devices, including MP3 players, smartphones, and tablets

- Create backups to ensure you never lose access to your purchases

- Use them with audiobook-specific apps like Listen Audiobook Player (Android) or Bookmobile (iOS)

Troubleshooting Common Issues

Authentication Problems

If you encounter difficulties logging in:

- Ensure your Audible/Amazon credentials are correct

- Try clearing cached credentials in Libation (Settings → Account → Clear Saved Credentials)

- Check if your account requires two-factor authentication

Download Failures

If downloads are failing:

- Verify your internet connection is stable

- Ensure you have sufficient disk space

- Try downloading one book at a time

- Check if your antivirus or firewall is blocking Libation

Conversion Issues

For problems with the decryption or conversion process:

- Update to the latest version of Libation

- Try a different output format

- Check the application logs for specific error messages

- Visit the Libation community forum for assistance

Alternatives

While not covered in this post, there are other tools for managing your Audible library, such as OpenAudible, which is also available for macOS, Windows, and Linux.

Legal Considerations

It's important to understand that Libation is designed for personal use with audiobooks you've legally purchased. The tool allows you to exercise your fair use rights by removing DRM for personal backup and format-shifting purposes. Always respect copyright law and use your decrypted audiobooks responsibly.

Recommended Audiobook Players

macOS

- Books - macOS comes bundled with Books, which plays audiobooks, supports chapters, and resumes where you last left off.

- BookPlayer - A wonderful pay-optional application; the core features are all free to use.

iOS

- Books - iOS comes bundled with Books, which plays audiobooks, supports chapters, and resumes where you last left off.

- BookPlayer - A wonderful pay-optional application; the core features are all free to use.

Android

- Smart Audiobook Player - A very loved and popular audiobook application

- Voice - A free and open-source audiobook player that supports chapters and bookmarks.

Windows

- Windows Media Player - A built-in media player that can handle various audio formats, including MP3 and M4B.

- Audiobookshelf - Open-source, self-hosted audiobook server with companion apps for Android and iOS.

- VLC Media Player - A versatile media player that can handle various audio formats, including MP3 and M4B.

Linux

- Cozy - Modern, dedicated audiobook player for Linux

- VLC Media Player - A versatile media player that can handle various audio formats, including MP3 and M4B.

Conclusion

Libation empowers you to take full control of your audiobook collection, freeing you from the limitations of Audible's ecosystem. By decrypting your purchases, you ensure long-term access to content you've paid for, regardless of changes to Audible's policies or services.

Whether you're concerned about preserving your library, want the flexibility to use different playback devices, or simply prefer to have true ownership of your purchases, Libation provides a straightforward solution that respects both your rights as a consumer and the intellectual property of content creators.

-

Fake Legal Threats for SEO Backlinking scam

I get a lot of spam, as I have a public email address on my blog. I ignore 99.99% of it, but here's one that has landed in my inbox a few times and ticks me off. The first time it happened, I took the time to actually look at the image in question. Within seconds, I realized it was an absolute farce, as the image they linked was AI slop. I figured I should sound the alarm on this scam, as my blog has solid domain authority and gets a surprising amount of traffic. Another blogger, Mark Carrigan, has seen this exact template, as has jabardasti on Twitter, and variations have turned up on Reddit.

Here's how it works:

- They find a blog post with an image, claim the image is theirs, and point to a similar-ish AI slop image. It always seems to be hosted on Imgur rather than on another website. I imagine in time they'll use bots to steal your actual image and upload it to Imgur as 'proof.' Don't fall for it.

- They send a legal threat to the blog owner, demanding a backlink to their site.

- If you don't comply, they threaten legal action.

Pictured: the slop image I received, which I stole (so I'm now actually committing IP theft) and defaced.

How can I confidently say it's a scam? I've ignored this at least three times now, and it's been over a year. Absolutely nothing has happened. It's a variation of the old "You have a broken link on your blog; you should link X instead" backlinking scheme.

Here's the email I received, complete with spelling errors and grammar mistakes:

James Harris | Citi Legal Services <james@clexperts.org>

3:45 AM (5 hours ago)

Dear owner of https://blog.greggant.com/posts/2021/09/24/mac-osx-snow-leopard-nature-desktop-backgrounds-in-5k.html,

We're reaching out on behalf of the Intellectual Property Division of a notable entity in relation to an image connected to our associated client: Big Cat Snow Leopard.

Image Reference: https://i.imgur.com/wid2Pil.png

Image Placement: https://blog.greggant.com/posts/2021/09/24/mac-osx-snow-leopard-nature-desktop-backgrounds-in-5k.html

We've observed the above image being used at the above specified placement. We are emailing you to insist our client is correctly credited. A visible link to [fake link removed some big cat facts website, I don't even want to acknowledge them in print as I don't want to give them any benefit] is necessary, placed either below the image or in the page's footer. The anchor text should be "Big Cat Snow Leapard". This needs to be addressed within the next five business days.

We're sure you recognize the urgency of this request. Kindly understand that simply removing the image does not rectify the issue. Should we not see appropriate action within the given timeframe, we will reference case No. 82831 and implement legal proceedings as outlined in DMCA Section 512(c).

For your convenience, past usage records can be reviewed using the Wayback Machine at https://web.archive.org, the main recognized digital web archive.

Take this communication as a formal notice. We value your swift action and expect your cooperation.

Regards

James Harris

Trademark Attorney

Citi Legal Services

1 Beacon St 12th floor

Boston, MA 02108

james@clexperts.org

www.clexperts.org

James, I assume, is not a real person. Impressively, the website has multiple pages, a few blog posts, and even a phone number and office location. That said, I know multiple real-life lawyers, and real estate lawyers would not be dabbling in intellectual property law on the side.

If you receive emails from clexperts.org, clexperts.site, clexperts.info, etc., it's a bullshit law firm. Ignore it. Move on. Don't take my word for it; google what real DMCA takedown notices look like.

-

You probably don't want to stick an Intel GPU into a Mac Pro 2019...

I tend to do silly stuff with my Mac Pros. This time, I jammed an Intel Sparkle OC ROC A770 into my Mac Pro 2019. It worked well enough in Windows until it caused issues with macOS. The video contains the entire adventure.

As expected, the Arc A770 wouldn't work in macOS - the system recognized it only as a generic display adapter, unable to output even unaccelerated video. This isn't surprising, since Apple hasn't allowed third-party GPU drivers since macOS 10.13 High Sierra, the last release to support NVIDIA's web drivers.

The card performed reasonably well in Windows 10, though benchmarks showed it lagging about 70% behind my Radeon 6900 XT. That performance gap makes sense given the price difference, but the Arc does have some impressive encoding capabilities that make it interesting for certain workflows.

Things went south when the A770 would enter full leaf blower mode whenever Windows went to sleep. Even worse, after my Windows testing, the Mac wouldn't boot into macOS at all. I don't know if the Intel Arc drivers somehow corrupted my EFI partitions, but when iBoot attempted to load, it failed completely.

Another thing I learned from this experiment is that, out of the box, the Mac Pro 2019 does not support ReBAR (Resizable BAR). Resizable BAR (Base Address Register) is a PCIe feature that changes how CPUs access GPU memory, allowing them to see the entire VRAM at once instead of in limited 256MB chunks. This enables faster data transfers between the CPU and GPU, potentially improving gaming and graphics performance, especially in memory-intensive tasks. AMD markets its implementation as Smart Access Memory (SAM), while Intel and NVIDIA simply call it Resizable BAR, but all of them work similarly by removing a memory-addressing limitation of the PCIe interface. There might be ways to enable it, but that's a battle for another day.
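To put that 256MB limit in perspective, here's some quick arithmetic as a Python sketch. It assumes a 16 GB A770 (the card also came in an 8 GB version) and simplifies the non-ReBAR case to a single fixed 256 MiB window that has to be shuffled around to reach the rest of VRAM; real drivers are more nuanced than this, so treat it as back-of-the-envelope math rather than a model of how the driver works.

```python
LEGACY_BAR = 256 * 1024**2  # the fixed 256 MiB window the CPU gets without ReBAR


def windows_to_cover(vram_bytes: int, window_bytes: int = LEGACY_BAR) -> int:
    """Number of 256 MiB views needed to reach every byte of VRAM (integer ceiling)."""
    return (vram_bytes + window_bytes - 1) // window_bytes


if __name__ == "__main__":
    # Assumption: a 16 GB Arc A770; the 8 GB variant is shown for comparison.
    for name, gib in [("Arc A770 16GB", 16), ("Arc A770 8GB", 8)]:
        n = windows_to_cover(gib * 1024**3)
        print(f"{name}: {n} windows without ReBAR, 1 with ReBAR")
```

With ReBAR enabled, the window simply grows to cover all of VRAM, so the count drops to one.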

-

LTT Magnetic Cable Management System Review: Taming My Cable Chaos

Introduction: The Cable Challenge

About six months ago, LTT (Linus Tech Tips) generously sent me their Magnetic Cable Management system (MCM), but I've been avoiding the inevitable pain of reorganizing my entire setup.

Let's be honest - if you're anything like me, organization isn't your strongest point. So today, I'm putting the LTT magnetic cable management system to the test on my mess of cables.

The Setup Challenge

My setup isn't extreme, but it's definitely complex. Here's what needs cable management:

- Five computer speakers

- Home theater receiver

- Two displays

- Three MIDI controllers

- Two audio interfaces

- Five external drives

- USB hubs and various devices

- Ethernet connections

- Power banks

- Hue Lights

- And many more components...

The centerpiece is a steel McDowell & Craig Tanker desk from the 1950s that belonged to my grandfather. While I'm not usually sentimental about objects, this desk does hold special meaning. The fact that it's steel works perfectly with the MCM's magnetic design, though I'll be testing the system on wood surfaces as well.

Product Overview: What's in the Box

The MCM system is straightforward but impressively engineered. Here's what LTT sent me:

The Arches

These come in four sizes, and I received the small, medium, and large. The magnets are surprisingly powerful - I can actually open my desk drawers just by attaching these magnetic arches.

Strength Test: The smallest arch can hold my Cloud Lifter preamp (11.3 oz/320g) with just one magnet. The larger arches could likely hold several pounds. They're so strong that prying them off a surface takes real effort - definitely a good feature.

Power Bar Keys

These might be the secret weapon of the entire system. Power bars/surge protectors typically come with screw mounting options, and these keys simply take advantage of what's already there. With a simple twisting motion, you can attach your power strip to any magnetic surface.

Mounting Plates

LTT also sent various mounting plates, which are essential if you want to attach the system to non-metal surfaces like wood. Each plate has an adhesive back (though I opted to bolt mine for extra security).

The Organization Process

My strategy was to:

- Consolidate my mess by relocating my home theater receiver

- Attach one power strip to the bottom of my IKEA stand

- Organize cables using only what LTT provided

Step 1: Starting Fresh

To give my past self credit, I had made some attempts at organization with cable ties, but it was still a mess. Clearing my desk gave me the opportunity to vacuum and clean - keeping your space clean is just part of being a responsible adult.

Step 2: Tackling Ethernet

I started with my ethernet situation, tucking the cable between the bevel and garage floor lip, securing it with painter's tape for now (though I'll revisit this for a cleaner solution later).

Step 3: Power Management

Next came attaching the power bar keys to my surge protectors:

- My trusty 10-15 year old Belkin surge protector attached with a simple twist-and-slide motion

- My ancient (25+ year old) Surge Master with phone line protection (now apparently "rare" according to some optimistic eBay sellers)

I attached the larger surge protector to the back of my desk and the smaller one to the bottom of my IKEA furniture using the mounting plates. For the wood surface, I bolted the plate down rather than relying solely on the adhesive.

Step 4: Cable Routing

One cautionary note: these magnets can scratch metal surfaces! I tried using felt pads to prevent scratching, but they were too thick and reduced the magnetic strength significantly. A product suggestion for LTT would be integrating silicone grips directly into the arches.

The Results

I kept my goals realistic, knowing I'd never be able to hide all cables in a setup this complex. When viewed from most angles, the cable mess is considerably reduced. I prioritized functionality over perfect presentation since this setup serves as both my work-from-home station and my creative outlet for YouTube and music.

One area that turned out particularly well was my Power Mac G4 setup, which is located several feet away from my other electronics. It was already fairly organized, but the MCM made it even better.

Final Verdict

LTT drove a money truck to my house for this review. (No, they didn't pay me anything.) Joking aside, I'm genuinely impressed with the MCM system.

Yes, it's somewhat expensive, but I'll be buying more components with my own money for future projects. It's simply the best cable management system I've found so far.

If you're on a budget, start with the power bar keys - they're likely to be the most impactful first step in organizing your setup. Then you can gradually add more components as your budget allows.

Disclaimer: LTT provided the products for this review but did not compensate me otherwise. All opinions are my own.

-

I just got a PowerPC Mac! ... now what?

So... the retro computing bug just bit you, and you had to get that iBook. Or maybe it was an iMac G3, a sleek 12" PowerBook G4, or a PowerMac G5? In any case, this guide is here to help you make the most of your new-but-old computer.

Upgrades!... Okay, now what?

There are too many models and upgrades to cover, and finding information on upgrades is actually the easy part, between LowEndMac, EveryMac, the MacRumors Forums, archived XLR8yourMac articles and posts, Action Retro, Mac1984, This Does Not Compute, and so on. Therefore, I'm going to pass on upgrades and hardware recommendations. CPU upgrades for these old computers are rare, RAM can be maxed out, HDDs can be replaced with SSDs, and video cards can be swapped. There's a joy to modular computing, and for some, the hunt for rare CPU upgrades or flashing video cards is part of the fun. Even the less-modular Macs of the day are much more hackable than today's computers.

Instead, this is a map of recommended software and, hopefully, an inspiration board for making use of your old hardware. PowerPC computers are too dated and power-hungry to have much practical value, seeing as a Raspberry Pi has more raw processing power than almost anything in the PowerPC sphere.

Modern Software

Believe it or not, there are still people developing software for old PowerPC Macs, including Discord clients, web browsers, and even ChatGPT, because who doesn't want to be gaslit, even in the world of retro computing? The video contains a deeper breakdown of the software listed below.

- Discord Lite - A lightweight Discord client for PowerPC Macs

- Legacy AI - A modern AI for PowerPC Macs

- PPCAppStore - An app store for PowerPC Macs

- Newsstand - A news reader for PowerPC Macs, OS 9 only

- TigerBrew - A package manager for PowerPC Macs

- TenFourFox - A semi-modern web browser for PowerPC Macs

- InterWebPPC - A fork of TenFourFox

- AquaFox - A fork of TenFourFox, incorporating tweaks from InterWebPPC and TenFourFoxPEP

There's always Linux...

Adelie Linux is a Linux distribution that supports PowerPC Macs and is still being developed. Popular YouTubers like Action Retro have made videos demoing Adelie on PowerPC Macs, but you bought a Mac to be a Mac.

Okay.... but really, what now? Project ideas!

Gaming

Gaming is an obvious first stop for any retro computer enthusiast looking to experience games as they were. The Mac used to pull in major releases, with notable exceptions like the time Valve canceled the Half-Life port.

I've compiled a list of 100+ games worth checking out on your PowerPC Mac. This list is not exhaustive, but it's a good starting point. I've tried to include a mix of genres, with a focus on games that are still fun to play today. It's a topic unto itself.

Check out The best games for late PowerPC G4/G5 Macs - OS 9 and OS X (100+ games)

Music creation

This is one I'm particularly fond of, as there's a lot of quality retro software, be it Logic, Ableton, or Cubase for a DAW, oddball apps like Reason, and plenty of plugins to boot. If you're a digital musician, you can make studio-quality music on your Mac on the extreme cheap, and it doesn't take a maxed-out PowerMac G5 to create quality tunes.

OS X supports low-latency USB MIDI out of the box, and it's a compelling way to get into digital audio, as you can score hardware cheaply, and the limitations can be a blessing. There are musicians (although dwindling in number) still using PowerPC Macs for audio, building multitrack Pro Tools setups on the cheap. Unlike, say, video, where technology becomes a huge limiter, audio is much freer of restrictions (provided you embrace freeze tracks and RAM caps).

Reason 4.0 is intuitive and also a great learning tool for understanding routing and wiring. If you've never really messed with music creation, or you're new to digital audio and MIDI, Reason is a brilliantly beautiful "my first audio app."

Digital Photography Workflow

As of late, early digital photography has had a boom in popularity, partly for the aesthetics and partly for the art. A modern iPhone performs thousands of pre-baked calculations to tune, balance, color correct, and otherwise sweeten a photograph. Old digital cameras do radically less, as they mostly take data from a CCD or CMOS sensor and record it to a file. For some, this also means eschewing modern digital darkroom solutions for a more "pure" digital photography experience.

Programming / Scripting

Retro software development isn't easier than modern development, as the barrier to software creation has dropped drastically with the rise of frameworks and open-source projects that can turn a full-stack web developer into an app developer. For the hardcore, you can take a crack at learning Objective-C or Carbon for classic Mac development.

Retro Web Development

Web development might not be the first thing on your mind with a retro computer, but the web of that era was much simpler and much easier to understand. Creating a simple web page was a rite of passage in the days before social media. If you're feeling particularly inclined, you could:

- Set up a local web server and build sites with period-appropriate tools

- Create a retro webpage or website within the limitations of the early 2000s, using CSS 1.0 / JavaScript ES3

- Embrace building websites with Dreamweaver MX or GoLive

Retrocomputing Documentation and communities

A bit of ironic self-awareness for this suggestion. One of the big draws is to map out what's feasible and possible. There's an entire world of YouTube, blogs, forums, and Reddit groups dedicated to the pursuits of retro computing that even result in expos.

Vintage Software

There's a lot of classic software that's worth checking out. I've compiled a list of some of the best software for PowerPC Macs. This list is not exhaustive, but it's a good starting point. I've tried to include a mix of genres and software. I've also tried to include software that is still useful today.

Games

There's so much to cover in Mac gaming (no, really, I'm serious) that it demands an entire article of its own: The best games for late PowerPC G4/G5 Macs - OS 9 and OS X (100+ games)

Audio

- Ableton Live 5 - Ableton still had some growing to do, but PowerPC Macs can enjoy Ableton.

- Apple Logic Pro 7 - Logic Pro 7 is a full-fledged DAW that's still very capable today. Entire albums were recorded on it. It is, however, a bit of a resource hog.

- Audion - Audion was a popular MP3 player that was ahead of its time and offered the most amazing skins of any software. Beautiful, and still a masterclass in Mac software.

- Cubase LE 1.08/1.04 - Cubase is a powerful DAW that's still used today. The light version caps projects at 48 audio tracks and 64 MIDI tracks.

- Propellerhead Recycle 2.0 - Recycle is a loop editor that allows you to slice up audio for use in other audio applications, allowing you to sample in a unique way. Recycle "REX" loops are still used to this day. It has a short learning curve.

- Propellerhead Reason 4 - It runs on relatively modest hardware very nicely and features a beautiful skeuomorphic design, allowing you to construct and wire virtual instrument racks. It's a blast and a good way to get into digital music. Highly recommended.

- Propellerhead Rebirth - ReBirth emulates two Roland TB-303 synthesizers, a Roland TR-808, and a Roland TR-909 drum machine, and paved the way to Reason.

- Sound Studio 3 - Sound Studio is a simple two-track audio editor that's still very capable today. It's fast, stupidly easy to use, and a good complement to any audio suite.

Audio Plugins

- Absynth 2.x - a granular synthesizer that allows you to create a wide variety of sounds.

- CamelPhatFree VST - a distortion and filter plugin that allows you to add warmth and character to your sounds.

- CamelCrusher VST/AU/RTAS - a distortion and filter plugin that adds color to your sound.

- Native Instruments B4 - A Hammond B3 emulator

- Native Instruments Guitar Rig 2.2 - a guitar amp emulator that allows you to play guitar through your Mac

- Native Instruments Kompakt v1.0 VSTi - sample library loader that supports a wide variety of sample formats

- Native Instruments Massive 1.0.1 VSTi/AU/RTAS - a loved and powerful synth

- Novation Bass Station and V-Station VST/AU - a collection of classic synth emulations

- Ohmicide VST/AU/RTAS - multiband distortion plugin

- OhMyGod VST - a distortion plugin that allows you to add grit and character to your sounds

- TAL-BassLine-101 VST/AU - a classic analog bass synth emulation targeted for EDM, and useful for trap and acid.

- TAL-Elek7ro VST - a virtual analog synth.

- Native Instruments Reaktor Session One Carbon Futuremusic Edition VST/AU - a synth emulator that lets you build your own synths.

- Voxengo Freeware Audio Plugins PPC/x86 VST/AU - a collection of free audio plugins that includes a variety of EQ/effects.

- ZebraCM VST/AU - a powerful synth that allows you to create a wide variety of sounds.

Emulation / Virtualization

Emulation was very popular in OS 9 and OS X, and a wide range of emulators were ported to both. One of the most impressive is Virtual PC, although it requires a beefier Mac, as it emulates an x86 CPU. Virtual PC is surprisingly usable on late-gen PowerPC G4s and G5s and will also make you thankful for modern virtualization software like Parallels. Connectix VGS is super impressive, as even iMac G3s can join in on PlayStation gaming with near-flawless emulation for most titles. It's actually a viable way to play PSX games to this day. I highly recommend trying it out.

- Boycott - A Gameboy / GameBoy Color emulator for OS 9.

- Boycott Advance - A Gameboy Advance emulator for OS 8 - OS X.

- bsnes - A SNES emulator for OS X, only for the PowerMac G5.

- Connectix Virtual Game Station - Play PlayStation games on your PowerPC Mac in OS 9. It kicked off a major lawsuit by Sony that established emulators as legal.

- DGen and Dgen OS 9 - A later emulator for Sega Genesis / Megadrive games on your PowerPC Mac in OS X.

- GenEm - Play Sega Genesis / Megadrive games on your PowerPC Mac in OS 9.

- Genesis Plus - A Sega Genesis / MegaDrive emulator for OS 8 - OS X.

- Generator - A Sega Genesis / MegaDrive emulator for OS 8 - OS X.

- GrayBox - A NES emulator built for System 7 - OS 9.

- Handy - An Atari Lynx emulator for OS 8 and OS X.

- iNES - iNES is an NES emulator that allows you to play NES games on your PowerPC Mac in OS 9.

- KiGB - Gameboy emulator for OS 8 - OS X.

- Mini vMac - Mini vMac is a Mac Plus emulator that allows you to run classic Mac software on your PowerPC Mac.

- M1 multi-platform arcade music emulator - play music from videogame ROMS for OS 8 - OS X.

- MAME OS X - MAME is an arcade emulator that allows you to play arcade games on your PowerPC Mac in OS X.

- MasterGear - A Sega Master System / Game Gear emulator for OS 7 to OS 9.

- Mupen64 - A Nintendo 64 emulator for OS X, limited compatibility.

- NeoPocott - A Neo Geo Pocket Color emulator for OS 9 and OS X.

- Nestopia - A NES emulator for OS X.

- PCSX - The PowerPC version of the popular PlayStation emulator

- RockNes - OS 8 to OS X NES emulator

- SMSPlus - A Sega Master System / Game Gear emulator for OS 9 and OS X.

- Snes9x - A powerful SNES emulator that worked on relatively modest hardware.

- QuickNES - An NES emulator that works both on OS X and OS 9.

- Virtual PC 7 + Windows XP - Virtual PC is virtualization software that opened up the world of emulating Windows on a Mac.

- VisualBoyAdvance - A Gameboy Advance emulator for OS X.

- Blitter Library - visual enhancements for many popular OS 9 emulators

- Emulation Enhancer - visual enhancements for many popular OS X emulators

Graphic Design

In the world of graphic design software, we've come a long way and yet haven't gone terribly far, as Photoshop 9 (CS2) is still more than capable today, even if it lacks ML/AI features such as upscaling, content-aware replacement, and focus correction. If you get good with Photoshop 9, you're basically ready to go on the current version of Photoshop CC.

- Adobe Creative Suite 1 & Adobe Creative Suite 2 - The suites were much smaller, consisting of Adobe Acrobat Professional, GoLive, Illustrator, ImageReady, InDesign, & Photoshop. Even today, Illustrator and Photoshop 9 remain capable, featuring HDR support, warping tools, and smart objects (still lacking from virtually all competitors). Photoshop 9 is a beast and, while intensive, is surprisingly nimble compared to its bloated modern versions. Most everything you'd ever need is in this version.

- Aperture - Apple's professional photo editing software that developed a small cult following.

- Cinema 4D R9 XL - Cinema 4D is a 3D modeling and animation software. It's robust for its time.

- Macromedia Studio MX - Macromedia Studio MX is a suite of software that includes Dreamweaver, Fireworks, Flash, and FreeHand. This is mostly a nostalgia binge, but FreeHand was, in my opinion, the much easier-to-use vector illustration app.

Productivity

It's unlikely you'll be using your PowerPC Mac for writing the next great American novel, but there are still a few productivity software packages you may want to experience.

- Microsoft Office 2004 - Yep. It's Microsoft Office, and that means Word, Excel, PowerPoint but also Virtual PC 7

- OmniGraffle - If you're after some 2000s-era diagramming and flowcharting in a native Mac-only experience, OmniGroup has you covered.

Utilities & Enhancements

OS X was infinitely more hackable than modern macOS due to less OS security. OS customizations were popular and varied. It's one of the more senseless and fun things to explore for early OS X.

- DiskWarrior 3.x - Absolutely a must. HFS+ is pretty awful and prone to a lot of errors. DiskWarrior saved my ass many a time back in the day and makes retro computing more enjoyable, as it fixes far more disk corruption problems than anything else, and does so much better than Apple's own Disk Utility.

- DragThing - A dock alternative that predates OS X

- Drop Drawers - A lesser-known but useful utility that allowed for spring-loaded folders on any side of the screen and could house links, application aliases, text, etc

- FruitMenu - customize the Apple menu

- Kaleidoscope - An OS 9 theming application

- Onyx - Onyx is an OS X system maintenance tool that allows you to clean up your system, repair permissions, and optimize your system. It's a good way to keep your system running smoothly. Depending on the OS X version, it also lets you modify the dock's appearance and default behaviors.

- Roxio Toast 6 Titanium - Toast is a CD/DVD burning software that allows you to burn CDs and DVDs and mount disk images. Highly recommended.

- OmniDiskSweeper - quickly find and delete large files.

- Shapeshifter - early OS X was wildly themeable, and Shapeshifter offered awesome theming capabilities. It only worked until 10.4 Tiger.

- QuickSilver - application launcher

- TinkerTool - exposes hidden system customization

- Window Shade X - Brings collapsible windows, a staple of OS 9, to OS X

Development & Programming

Many developers have fond memories of coding on PowerPC Macs, and some might want to relive that experience. Modern devs might want to take a swing at writing a new app with the challenges of retro software.

- Xcode - Apple's IDE for writing software for those looking to make retro software.

- BBEdit - Legendary text editor with PowerPC support.

- CodeWarrior 10 / CodeWarrior 9 - Essential development environment for classic Mac

- FileMaker Pro 6 - Database software

- MacPerl/MacPython - Scripting languages for Mac

Networking & Internet

Less "fun" and more practical, as FTP is a surefire way to transfer software, especially to OS 9 computers.

- Fetch / Transmit / CyberDuck - FTP clients

- Colloquy - IRC client

- Eudora / Entourage / Outlook Express / Sunbird - Email clients

- NetNewsWire - RSS reader

Video and Media

PowerPC Macs were the go-to for video editing and media production, although they struggled during the high-definition transition, as even the mightiest G5s were somewhat ill-equipped for 1080p editing. If you have an old DV camera, they'll chew through 480i and 480p video.

- Final Cut Studio - Apple's professional video editing suite, including Final Cut Pro, DVD Studio Pro, Motion, Live Type, Compressor, Cinema Tools, and Soundtrack.

- iLife - iLife is a suite of software that includes iPhoto, iMovie, iDVD, and GarageBand that makes any Mac user a multimedia wizard.

- iMovie HD - Who doesn't love editing 1080i video?

- VLC - The legendary open source media player

-

What is even happening?

You wake up one day to find yourself with unfathomable wealth. You're not sure how it happened, but you're not going to question it. You have enough riches that your children's children's children will live a life of utter opulence and decadence. You're not sure what to do with all this money; it's unearned. You were born into incredible wealth, so you were free to take risks. Even if you failed, you'd still be in the 0.1%. However, through mostly blind luck, you invested and made a few gambles, and they paid out. Sure, you had to lie, cheat, and steal a bit, but you're not going to think about that. The world is your oyster. People flock to you. They swarm, looking to you for guidance as one of the richest people who ever lived. People hang on your every word. You start to believe that maybe, after all, you are chosen. Your humility and humanity start to leave as you choose flattery over honest feedback. Sycophantic, desperate men idolize you, as you are an avatar for their own mediocrity and lack of acuity. Whether they or you realize it or not, your own mediocrity represents the escape fantasy. A line gets crossed; maybe it's the psychedelics, lack of sleep, and alcohol, or maybe it's the isolation and sadness as you start to stare down your own mortality, but your id takes control, impulse over consideration. Rather than self-actualization, you choose self-infantilization. You buy your way into a despotic government run by a clueless old man whom you can easily woo with flattery and fame. You can reform this world in your own image.... and you lie about a video game and cry publicly about a beef with a Twitch streamer.

What is even happening?

Even this mental exercise of trying to explain the world I lived in to my past self in 2015 is too stupid to even take seriously. This goddamn timeline.... I swear...

Elon sucks. Also, 🇺🇦🇺🇦🇺🇦

-

Blog Updates

Not that I have regular readers, but in keeping with the tradition of announcing changes (like my stupid spam bot solution, complaining about AI, adding dark mode, or general changes), I finally removed jQuery. That's a 70k JS payload down to 7k. The only reason it existed was FitVids.js, which I converted to vanilla JS.

Also, my slogan now rotates through well over 100 phrases; some are a bit spicy... well, only if you're the sort of low-acuity person who still thinks Elon is cool. It's okay, I'm just a mean guy who doesn't use Twitter. My opinions don't count.

I'll probably look at integrating this blog into ActivityPub to continue supporting the open internet.

-

Setting up a Synology VPN for your Mac

I know why you're here. It's because you want to connect to your local network over the internet, but Synology's guides are incomplete and a bit out of date, right? I don't know why I'm asking, as you can't respond. Anyhow, let's get to it.

Step 1: Setting up your Router

In your router, you'll need to set up port forwarding (sometimes labeled "Virtual Server" or "NAT Settings") for the following:

- UDP port 500 (IKE)

- UDP port 1701 (L2TP)

- UDP port 4500 (IPSec NAT-Traversal)

These must be pointed to your Synology's internal IP address, like 192.168.x.x or 10.10.x.x. You also may need to unblock these in your Firewall.
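If you want a sanity check before fiddling with your router, the port list is small enough to encode as data. The Python sketch below is my own illustration, not a Synology or router tool: `rules` is a hypothetical list you'd transcribe by hand from your router's port-forwarding page, and 192.168.50.10 is a made-up NAS address.

```python
# UDP ports the Synology L2TP/IPSec VPN needs forwarded to the NAS.
REQUIRED_UDP_PORTS = {500: "IKE", 1701: "L2TP", 4500: "IPSec NAT-Traversal"}


def missing_forwards(rules, nas_ip):
    """Return the required forwards that `rules` doesn't cover.

    `rules` is a hypothetical list of (protocol, external_port, internal_ip)
    tuples copied by hand from your router's port-forwarding page.
    """
    covered = {
        port
        for proto, port, target in rules
        if proto.upper() == "UDP" and target == nas_ip
    }
    return [
        f"UDP {port} ({name})"
        for port, name in sorted(REQUIRED_UDP_PORTS.items())
        if port not in covered
    ]


if __name__ == "__main__":
    nas = "192.168.50.10"  # hypothetical internal address of the Synology
    # Example mistake: 1701 forwarded as TCP instead of UDP.
    router_rules = [("UDP", 500, nas), ("TCP", 1701, nas), ("UDP", 4500, nas)]
    for problem in missing_forwards(router_rules, nas):
        print("Missing:", problem)  # prints: Missing: UDP 1701 (L2TP)
```

An empty result means every required port points at the NAS; you may still need to open them in your firewall.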

Step 2: Setting up the VPN Server

If you haven't installed the VPN Server package from Synology on your NAS, you'll need to do this.

You have three main options for setting up a VPN, only two of which are real options: OpenVPN and L2TP over IPSec. The path of least resistance is L2TP over IPSec. You'll only need to configure the IP address range for VPN clients, which should keep the first two octets of your network but must not overlap with it. My network is 192.168.50.x, so to avoid any IP conflicts, I just add 1 to the third octet, and my VPNed devices get 192.168.51.x. The second piece is the pre-shared key. Set this to whatever you'd like, but you will need this password later.
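Here's that +1 rule as a small Python sketch using the standard library's `ipaddress` module. It assumes a typical /24 home network (subnet mask 255.255.255.0), bumps the third octet, and then checks that the result is still a private range that doesn't overlap your LAN. The function name is my own; Synology doesn't provide anything like it.

```python
import ipaddress


def vpn_range_for(lan_cidr: str) -> ipaddress.IPv4Network:
    """Suggest a VPN client range by adding 1 to the third octet of a /24 LAN."""
    lan = ipaddress.ip_network(lan_cidr)
    if lan.version != 4 or lan.prefixlen != 24:
        raise ValueError("this sketch assumes a /24 (255.255.255.0) IPv4 network")
    # Adding 256 to the network address moves to the next /24 (third octet + 1).
    candidate = ipaddress.ip_network(f"{lan.network_address + 256}/24")
    # Defensive checks: no overlap with the LAN, and still a private range.
    if candidate.overlaps(lan) or not candidate.is_private:
        raise ValueError(f"{candidate} isn't a safe private range; pick one by hand")
    return candidate


if __name__ == "__main__":
    lan = "192.168.50.0/24"  # the 192.168.50.x network from the example above
    vpn = vpn_range_for(lan)
    print(f"LAN {lan} -> VPN clients {vpn}")  # LAN 192.168.50.0/24 -> VPN clients 192.168.51.0/24
```

The same logic works for a 10.10.x.x network; if your third octet is already 255, it refuses and you'll have to pick a range by hand.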

Step 3: Setting up your Mac

Locate VPN in System Settings; in modern macOS, search "VPN," and it'll appear in the sidebar. Click Add VPN Configuration and select L2TP over IPSec.

You'll need to configure the following:

- Display name: This can be anything

- Server address: This is your network's external IP. I couldn't get the QuickConnect URL to work, but there is probably a way. The easiest way to determine your IP is to do a "What's my IP" search in a web browser while connected to the same network as your Synology.

- Account name: This must be a Synology user on your NAS. You can create an account just for VPN or use an existing account.

- Password: This is your password for the Synology user.

- Shared Secret: This is the pre-shared key you set up in the Synology VPN server.
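Because the Server address has to be reachable from outside your home, an easy mistake is entering the Synology's internal 192.168.x.x or 10.x.x.x address, which only works while you're already on your home network. A small Python sketch using the standard library's `ipaddress` module can flag that; the function name is hypothetical, and 8.8.8.8 is just a stand-in for a public IP.

```python
import ipaddress


def server_address_problem(addr: str):
    """Return a warning string if `addr` isn't publicly reachable, else None."""
    ip = ipaddress.ip_address(addr)
    if not ip.is_global:
        return f"{ip} is a private or reserved address; use your external IP instead"
    return None


if __name__ == "__main__":
    # 192.168.50.10 stands in for a NAS's internal address; 8.8.8.8 for a public IP.
    for candidate in ["192.168.50.10", "8.8.8.8"]:
        print(candidate, "->", server_address_problem(candidate) or "looks public")
```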

To test the connection, you'll need to use an external network; if you have an iPhone, a quick and easy way is to connect your Mac to your iPhone's hotspot and test the VPN connection.

If you have issues connecting to anything on your network, click Options in your VPN configuration and select "Send all traffic over VPN connection". This is a brute-force method that does exactly what it says: your Mac essentially exists on the same network as the rest of your devices.


-

The Definitive Guide to iOS/iPadOS emulation

As of April 2024, Apple allows emulators in the App Store as long as they don't use JIT. This has opened the floodgates to a technology that virtually every other modern platform in existence allows. Emulation can be used for many things, but gaming is the most popular use case for average users, so this guide focuses entirely on gaming.

This guide is a living guide and is in the process of being built out. The goal is to demystify iOS emulation and make it accessible. Thanks to the r/EmulationOniOS community.

Also available in video!

If you prefer video, I've made a version of this guide that covers everything you need to get started with iOS emulation.

- Glossary

- Getting Started / Requirements

- File Management

- Legal Considerations for ROMs and BIOS Files

- iOS vs Android

- Emulators

- Sideloading: Interpretation vs JIT

- Controllers and iOS

- Dumping Your Own ROMs... on an iPhone?

- Creating Your Own PlayStation / Sega CD / NeoGeo ISOs

- Communities

- Version History

- macOS Emulation Guides

Does this guide seem familiar? Perhaps you've seen my The Definitive Classic Mac Pro (2006-2012) Upgrade Guide, The Definitive Trashcan Mac Pro 6.1 (Late 2013) Upgrade Guide, or The Definitive Mac Pro 2019 7,1 Upgrade Guide. These are all labors of love, free of charge and free of advertisements and annoying trackers. You can find me on YouTube and Patreon.

Glossary

Emulation has a lot of jargon that comes with it. As a quick refresher, here's a list of terms that will be used throughout this guide.

- BIOS (Basic Input/Output System) – A small program essential for some emulators to replicate the original hardware's startup process and functionality. Required for systems like PlayStation and Game Boy Advance.

- Core – A specific emulator module within a front-end system (like RetroArch) designed to emulate a particular console. It is an application within an application.

- Emulator - A software or hardware system that mimics the behavior of another system, allowing one device or platform to run software or applications designed for a different environment. It replicates the original system's functionality, including hardware and software interactions, without requiring the original hardware.

- Firmware – Low-level software stored in a device's ROM or flash memory that controls hardware functions. Some consoles require firmware files for proper emulation.

- Frame Skip – A feature that skips rendering some frames to improve performance, at the cost of a lower displayed frame rate (frames per second, or FPS). This was a common technique on underpowered hardware. Modern iOS/iPadOS devices almost never need to make this compromise; poor performance is generally caused by other factors, such as enabling too many game enhancements.

- Front-End – A graphical user interface that simplifies the process of using multiple emulators and managing game libraries. RetroArch is a popular front-end for multiple emulators.

- IPA (iOS App Store Package) – A file format used for iOS apps that can be sideloaded onto devices using tools like AltStore or Cydia Impactor.

- ISO (named after the International Organization for Standardization) – Files with a .iso suffix typically follow the ISO 9660 disc file system standard. An ISO is a disc image file that contains a complete copy of a CD or DVD game, commonly used for PlayStation, Dreamcast, and other disc-based consoles.

- JIT (Just-In-Time compilation) – A method that compiles code dynamically during execution. It greatly improves emulation performance but is restricted by Apple's policies.

- libretro – An open-source development interface that makes it easy to create emulators, games, and multimedia applications that plug straight into any libretro-compatible front-end. RetroArch and Provenance are front-ends that use libretro cores. Libretro cores have been ported to an incredibly diverse set of operating systems like Linux, Windows, macOS, Android, and iOS/iPadOS/tvOS/visionOS, and to CPU architectures like x86 (Intel/AMD), ARM, RISC-V, and even PowerPC. Almost all iOS/iPadOS emulators are based on libretro, making it the backbone of iOS emulation.

- Native Resolution – The original display resolution of the emulated console.

- ROM (Read-Only Memory) – A type of non-volatile memory that stores firmware or software permanently and cannot easily be modified or erased. In emulation, a ROM refers to a digital copy of a game's software extracted from a physical cartridge or disc, allowing it to be played on an emulator.

- Save State – A snapshot of a game's current state that can be saved and loaded at any time, allowing players to resume gameplay from that point.

- Sideload – The process of installing apps on iOS/iPadOS devices from sources other than the official App Store, typically with tools like AltStore. Sideloading allows access to emulators that use JIT compilation and other features restricted by Apple's App Store policies.
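To make the ISO entry in the glossary a little more concrete: an ISO 9660 image reserves its first 16 logical sectors (2,048 bytes each), and the first volume descriptor, at byte offset 32,768, carries the standard identifier "CD001". The Python sketch below checks for that signature, which can be a quick sanity check on an image before you copy it to your device. It is an illustration, not a full validator; the function name `looks_like_iso9660` is hypothetical, and raw BIN/CUE dumps (2,352-byte sectors) or compressed formats such as CHD will return False even when they are perfectly good.

```python
SECTOR_SIZE = 2048
# ISO 9660 leaves sectors 0-15 as a system area; the volume
# descriptor set starts at logical sector 16 (byte offset 32768).
DESCRIPTOR_OFFSET = 16 * SECTOR_SIZE


def looks_like_iso9660(path: str) -> bool:
    """Return True if the file has an ISO 9660 volume descriptor signature.

    Assumes a "cooked" image with 2048-byte sectors, like a typical .iso.
    """
    with open(path, "rb") as f:
        f.seek(DESCRIPTOR_OFFSET)
        header = f.read(6)
    # Byte 0 is the descriptor type; bytes 1-5 are the identifier "CD001".
    # A file shorter than the offset yields fewer than 6 bytes here.
    return len(header) == 6 and header[1:6] == b"CD001"
```

For example, `looks_like_iso9660("game.iso")` should return True for a standard ISO 9660 disc image and False for a file that merely has a .iso extension.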

Getting Started / Requirements

An emulator is software that mimics another device's hardware and software environment, allowing your iOS device to run games and applications originally designed for different systems like the Nintendo Game Boy, PlayStation, or Sega Genesis.

Requirements:

- A device running iOS 17 or iPadOS 17 or later

- Free space on your device

- Optional but recommended: A gamepad

- A bit of patience

Modern iPhones and iPads are powerful machines; in raw CPU performance, the iPhone 16 Pro bests an 8-core Mac Pro 2019. Any device capable of running modern iOS has enough processing power to emulate many different platforms. In the late 1990s, a 233 MHz iMac G3 could emulate the NES and handle SNES emulation reasonably well. The biggest constraint for most devices will be storage: games for 32-bit era consoles like the Sony PlayStation and Sega Saturn can easily take up 600 MB each, and PSP games can exceed 1 GB.

An emulator cannot understand interactions the original console was never programmed for, such as tapping menu items in an SNES game. Emulators do offer on-screen touch controls, but these simply map to button presses, and because console games were designed specifically for controllers, a gamepad is highly recommended. Some inputs, such as analog triggers, are very difficult or impossible to reproduce with touch controls.

Legal Considerations for ROMs and BIOS Files

The video above is one I made about the story of Connectix VGS and how it helped enshrine emulators as legal.

Understanding the Legal Landscape

The legal status of game ROMs and console BIOS files exists in a complex gray area that varies by country and jurisdiction. While emulators themselves are generally legal software, the content they run often raises copyright concerns.

ROM Files

ROMs (Read-Only Memory) are digital copies of game cartridges, discs, or other media. From a legal standpoint:

- Personal Backups: In many jurisdictions, making personal backup copies of games you legitimately own is considered legal under fair use doctrines.

- Downloaded ROMs: Downloading ROMs of games you don't physically own is generally considered copyright infringement, even if you previously owned the game but no longer do.

- Time Limitations: There is a common misconception that games become "abandonware" after a certain period. However, copyright protection typically lasts for decades (in the US, copyright extends 95 years for corporate works), and most classic games are still under copyright protection.

Of course, these files are often distributed openly on the internet and are easy to find via search engines.

See the Dumping Your Own ROMs and Creating Your Own ISOs sections for how to make legal backups of your game library.

BIOS Files

BIOS files are even more legally sensitive than ROMs:

- Copyright Protection: Console BIOS files are protected by copyright and are generally not intended for distribution.

- No Abandonment Provisions: Even for discontinued consoles, the BIOS copyright remains in effect.

- Reverse-Engineered Alternatives: This is why many emulators (like those mentioned in this guide) offer reverse-engineered open-source BIOS alternatives that don't infringe on copyrights.

Best Practices

To stay on the safer side of the legal spectrum:

- Only create backups of games you legally own.

- Don't distribute ROMs or BIOS files to others.

- Support developers by purchasing games when they're available on modern platforms.

- Consider using legal alternatives like official re-releases or subscription services that offer classic games.

Legal Alternatives

Many companies now offer legal ways to play classic games:

- Nintendo Switch Online (NES, SNES, N64, Game Boy games)

- PlayStation Plus (PlayStation classics)

- Virtual Console and classic collections

- GOG.com and other digital stores that sell classic games

This guide is not intended to encourage copyright infringement. The technical information provided is for educational purposes and for those who wish to play games they legally own on modern devices.

Note: This section provides general information, not legal advice. Laws vary by location and interpretation. When in doubt, consult legal resources specific to your region.

iOS/iPadOS vs Android

This guide will likely never feature a comprehensive comparison of iOS and Android, but it's worth saying up front: Android has a considerable advantage.

Thanks to its more open nature, Android is ahead in several ways. While iOS/iPadOS emulation dates back to the jailbreaking era, Android app stores have allowed emulators from virtually the beginning, so Android's emulators are more numerous and more mature. Android also places fewer restrictions on emulation, so emulators exist for more modern consoles like the Sony PlayStation 2, Sega Dreamcast, GameCube, Wii, and even the Switch.

The diversity of the Android ecosystem has spawned full-blown console-like Android devices such as the Odin 2, a high-end handheld with a built-in gamepad, akin to a portable video game console. Devices like the Odin 2 also accept memory cards, offering a relatively inexpensive way to store game collections.

Android is also more flexible about mapping third-party controllers, while iOS is much more limited in this regard. This gives Android an accessibility edge, since less conventional layouts and input devices can be mapped to suit the user's preferences.

Mainstream iOS/iPadOS emulation is relatively young by comparison, but it still offers a great experience. Apple's hardware is second to none; few devices can match an M3 iPad in raw performance. In my experience, Android doesn't make setup any easier; it simply offers many more options. This guide will help you get the most out of your iOS or iPadOS device.

Android is the superior option if emulation is your primary concern.

File Management

iOS has a very locked-down file system, but it does provide multiple ways to transfer data to and from your device. The most common methods are:

- USB File Transfer - The most reliable and recommended method, but it requires a computer.

- iCloud Drive - Allows for dynamic file management but requires a paid subscription for more than 5 GB of storage.

- AirDrop - The most convenient method, but limited to Apple devices.

Transferring via USB

Transferring files via USB, as stated, is the preferred method due to speed, reliability, and accessibility.

- Connect your iPhone or iPad to your computer via cable. You may need to authorize the device on your computer and/or device.

- Open Finder on your Mac, or the Apple Devices app (or iTunes) on your Windows PC.

- Your device should appear in the Finder sidebar (or in the Apple Devices app on Windows). Click it to bring up the iPhone pane.

- Click the Files tab. You should see a list of installed apps that support file sharing, including your emulators. Due to Apple's limitations, you cannot access files inside an app's folders from here.

- Drag and drop files onto the app's icon to copy them to your device. You can drag and drop entire folders as well.

Regardless of how files are transferred, file management is handled almost entirely on the device using the Files app. For detailed instructions, see Organize files and folders in Files on iPhone. Apps can access files stored by other apps, which is very useful for sharing ROM libraries between emulators such as RetroArch and Delta. Both can emulate a subset of the same consoles (NES, SNES, Game Boy, Game Boy Advance, DS, and N64), so they can share one set of files rather than storing duplicate copies of the same game.

To select all files, tap a file, then tap Select All in the lower-left corner.

To move a file or folder, long-press it and then choose Move.

Third Party File Management

Due to the arbitrary limitations Apple places on file management, there is a cottage industry of phone management apps, the most prominent being iMazing. These apps let you view and edit the contents of directories on an iPhone. They unfortunately cost money, but they are easier to use than Apple's Files app.

Adding Games to Emulators

Once games have been transferred, adding games to the emulator in question is relatively easy.

Nearly every emulator follows the same pattern for adding ROMs to its library: tap a + or Add Games button, then either locate the files and select them all or point a scan function at the directory. A few emulators, like PPSSPP, have default locations that they scan automatically. Only DolphiniOS requires ROMs to be located in a specific directory.

Emulators

Emulators on iOS fall into two camps: App Store and sideloaded (see the lists below). iOS's emulator selection is slim, but fortunately almost all of the major consoles up to the 32-bit era are covered. Here is the list of supported consoles, all of which have RetroArch support. Emulators like Delta use the same cores that are found in RetroArch.

- Amstrad - CPC

- Arcade - MAME / NeoGeo / CPS 1-2-3

- Atari - 2600, 5200, 7800, Jaguar, Lynx

- Bandai - WonderSwan

- ColecoVision

- Commodore - C64, C128, Plus/4, VIC-20, Amiga

- DOS - DOSBox

- GCE - Vectrex

- Magnavox - Odyssey 2 / Philips Videopac+ (O2EM)

- Microsoft - MSX+

- NEC - PC Engine / TurboGrafx-16 / CD, PC-98, PC-FX

- Nintendo - NES, SNES, N64, DS, Game Boy, Game Boy Color, Game Boy Advance, Virtual Boy, 3DS

- Palm OS

- Sega - Master System, Game Gear, SG-1000, Genesis / Mega Drive, Saturn

- Sharp - X68000

- Sinclair - ZX81, ZX Spectrum

- SNK - NeoGeo Pocket / Color

- Sony - PlayStation, PSP

- 3DO

- Thomson - MO/TO

- Uzebox

App Store Emulators

Several Apple-sanctioned emulators are available on the App Store. They are limited in scope and generally focus on older consoles.