-

Install your Windows Steam games on Apple Silicon Macs Using Whisky (A free GPTK Front-End), a tutorial

This tutorial won't be ultra-comprehensive; rather, it's a quick start guide designed to get you up and running Windows games as fast as possible. The video version of this includes a bit more info and demos of games running.

You've probably heard about Apple's Game Porting Toolkit, also known as GPTK, a utility designed for porting DirectX-based games to the Mac, and it happens to have the ability to run Windows games on the Mac. This process originally required installing a bunch of tools via the command line, and it wasn't streamlined. Now it is, thanks to apps like Whisky. This app is 100% free, and its caveat is that it was designed only to work with DirectX 11 and 12.

If you're looking for a geekier solution, I now have a guide: "Transform Your Apple Silicon Mac into a Steam Deck with Asahi Linux, A Tutorial".

Requirements

- Apple Silicon Mac running at the very minimum macOS 13 Ventura, but macOS 14 Sonoma is strongly recommended (check the Whisky GitHub page for the latest info). Intel Mac users can use Boot Camp.

- Whisky (Link to the Releases)

- Steam for Windows (download the Windows version by clicking the Windows logo to download the .exe)

Installation

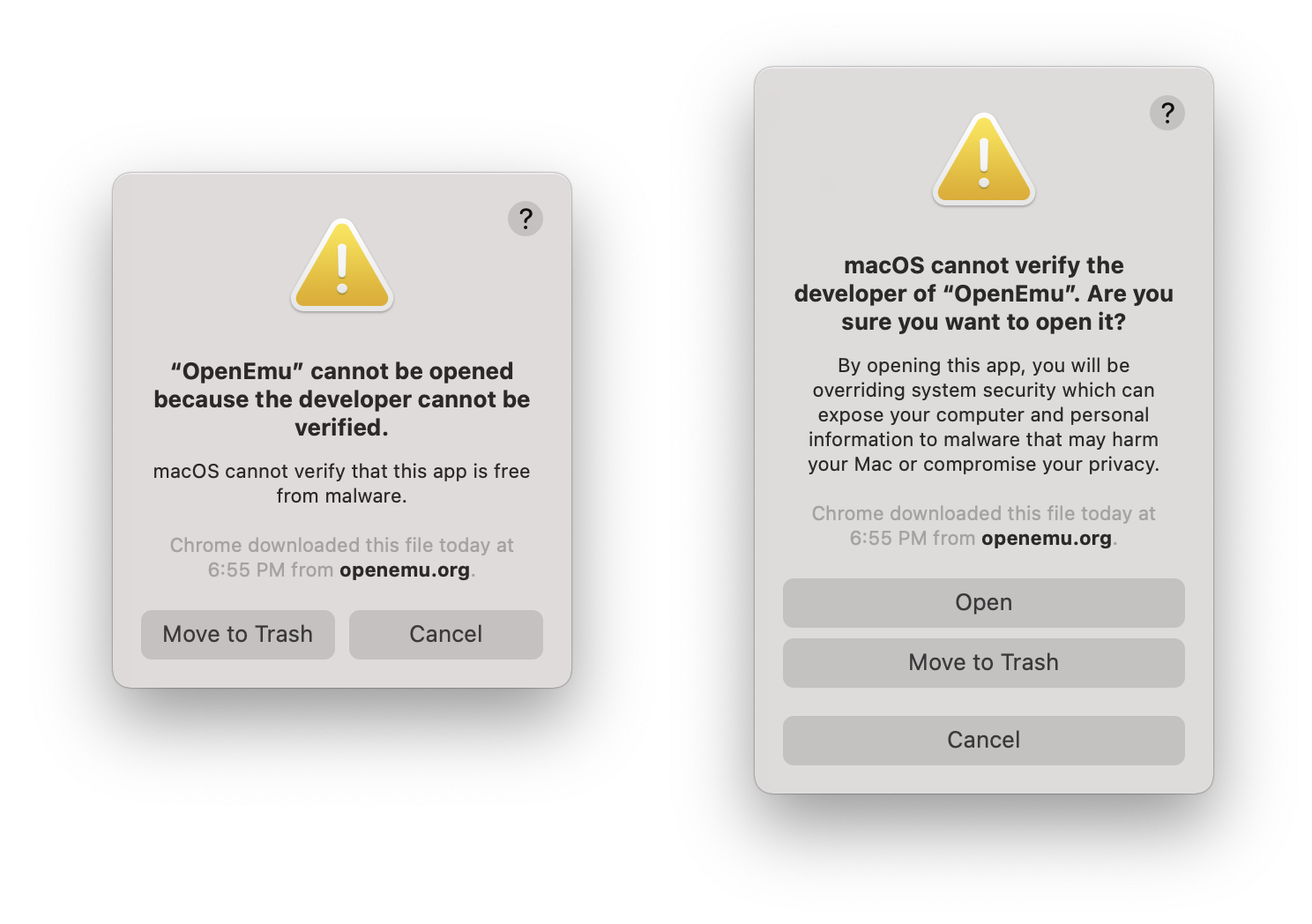

After downloading Whisky, double-click it, and it will prompt you to install Rosetta 2 (a translation technology by Apple that allows Macs with Apple Silicon processors to run software designed for x86) and GPTK (Game Porting Toolkit). The total download will be over 400 MB.

Click Create New Bottle. Just for context, GPTK uses WINE, and WINE bottles are self-contained environments that allow Windows applications to run on non-Windows operating systems. You can choose where the WINE bottle will be installed on your Mac; this is where the Windows games will be installed, so if you want to use an external drive, you can.

From the Whisky interface, click open C drive, then drag SteamSetup.exe into the drive_c folder.

Now go back to Whisky, click Run, and select SteamSetup.exe. You'll step through the Steam setup as if it were a normal PC.

From here, you can install games as you normally would. Just be aware that important dialog boxes can sometimes be hidden behind the Steam application.

Game Compatibility

It's important to understand that many older titles are unlikely to work for multiple reasons, such as:

- The game is not DirectX 11 or DirectX 12

- AVX instructions, which Rosetta 2 does not support

- Anti-cheat Software

- Unusual copy protection

- Certain types of online features

There isn't a comprehensive list of compatible titles; the best place to check is AppleGamingWiki, under GPTK compatibility. Whisky also has a small Game Support Wiki for running particular titles.

-

Scenes from the Columbia Gorge Blizzard - 01/13/24

When the winter weather gets terrible, instead of seeing it as an excuse to curl up indoors, I find myself grabbing my gear and heading into the storm. At this point in my life, I've logged enough hours driving in pretty abysmal conditions of just about every imaginable stripe. As a PNWer, I've rarely experienced blizzards in my home state, so I saw a 16°F day with 65+ MPH wind gusts as an opportunity.

Driving conditions started off not terrible; I-84 was mostly clear of snow thanks to the winds. On the old Columbia Highway, I cleared a downed branch, and on the way up Multnomah Falls, I moved several logs off the trail.

The visibility dropped during my hike (as seen in the video at the end), and rather than keep venturing further, I decided to drive back, as visibility was so poor. It was comparable to the extreme fog often found in the Redwoods on 101, where 25 mph seems like a big ask. I ended up driving back into town with my emergency blinkers on, taking the advice of TLC even though I wanted to check out Horsetail Falls.

On my drive back, I started seeing more evidence of the storm. Large swathes of Portland were without power, and trees were down. Each day during the snowpocalypse/icepocalypse, I made my way out into Portland. I saw multiple trees on houses, downed power lines, and houses without power. My neighborhood was relatively well off, despite lots of large branches and a few trees falling.

-

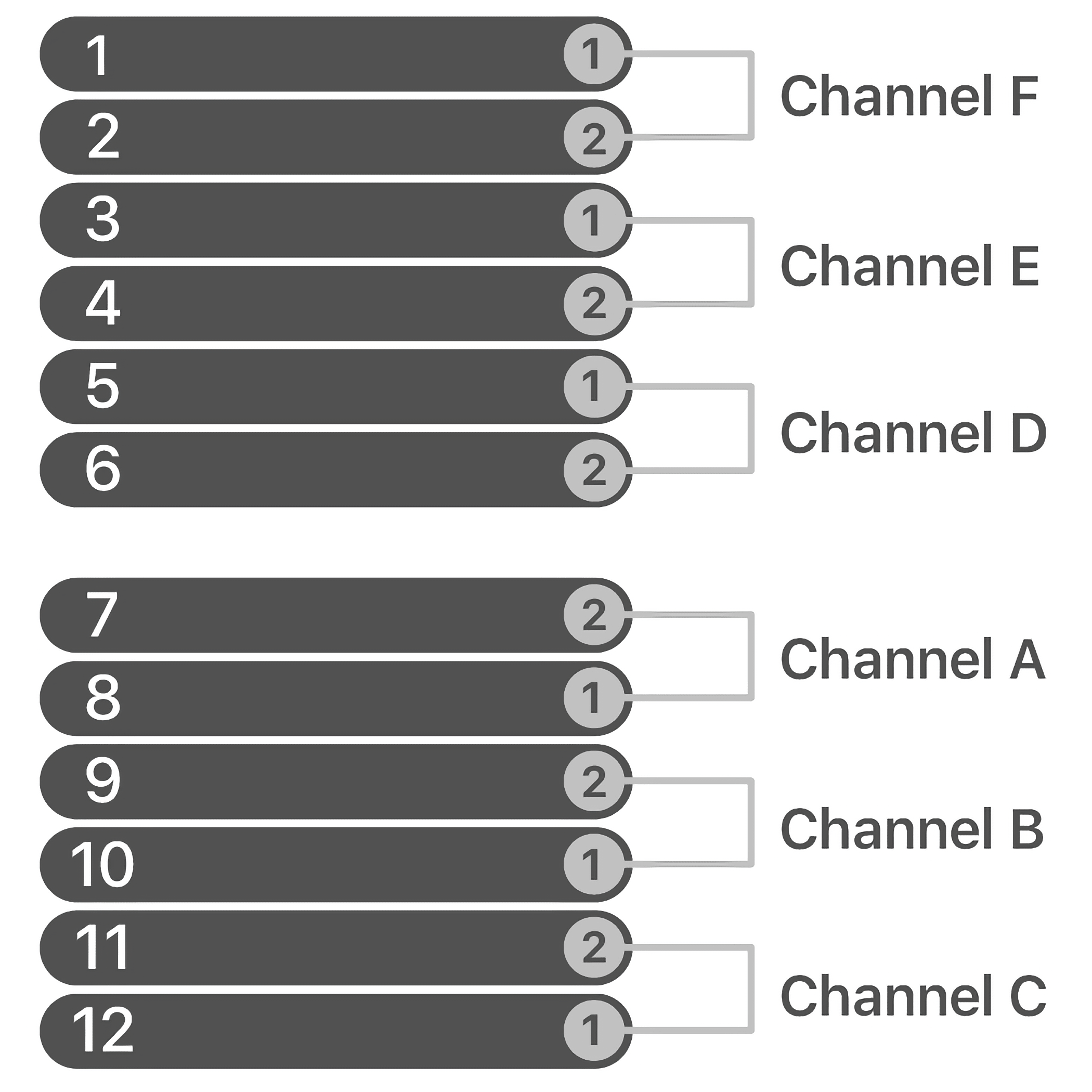

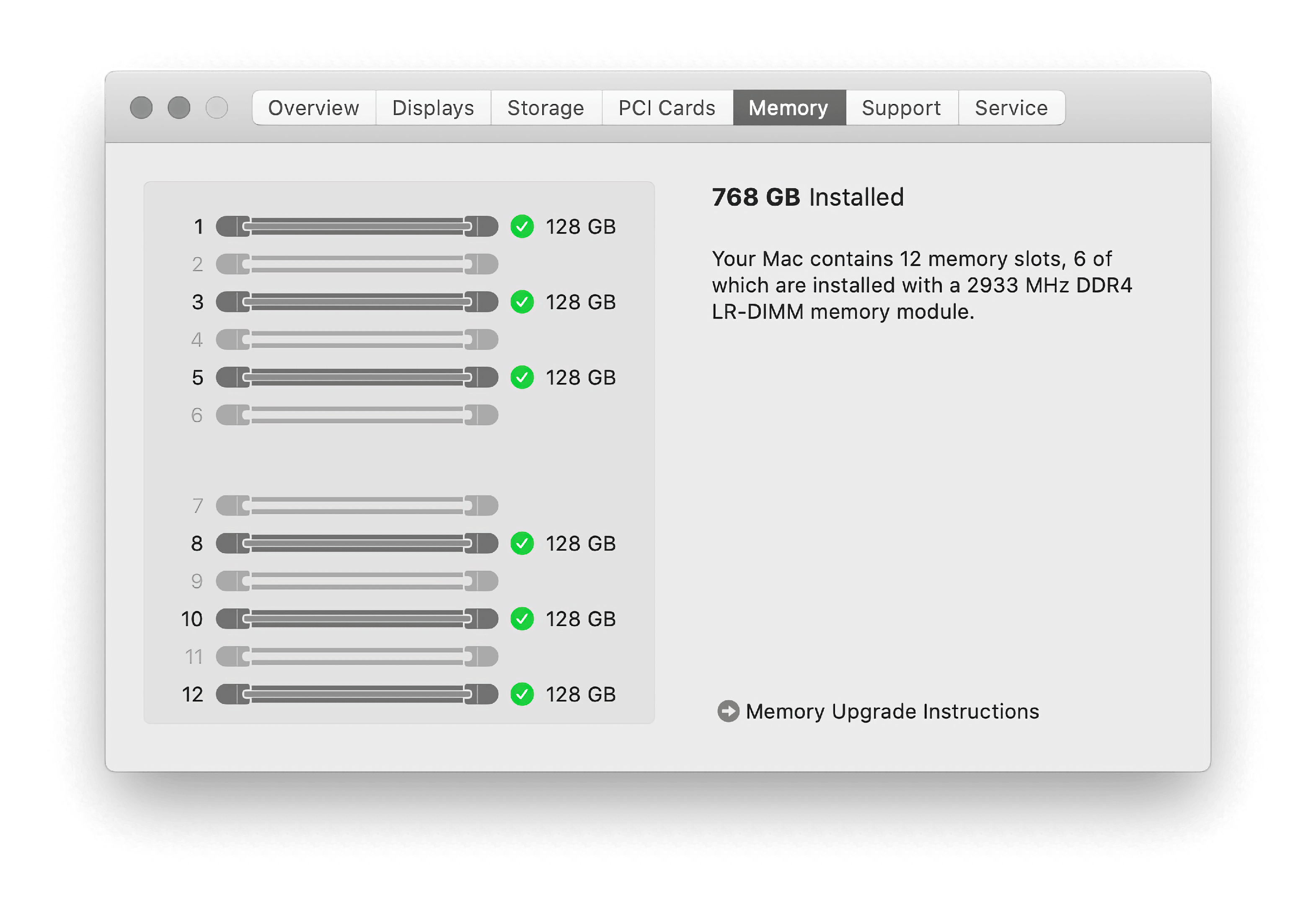

Apple designed a modular computer that they do not want you to service

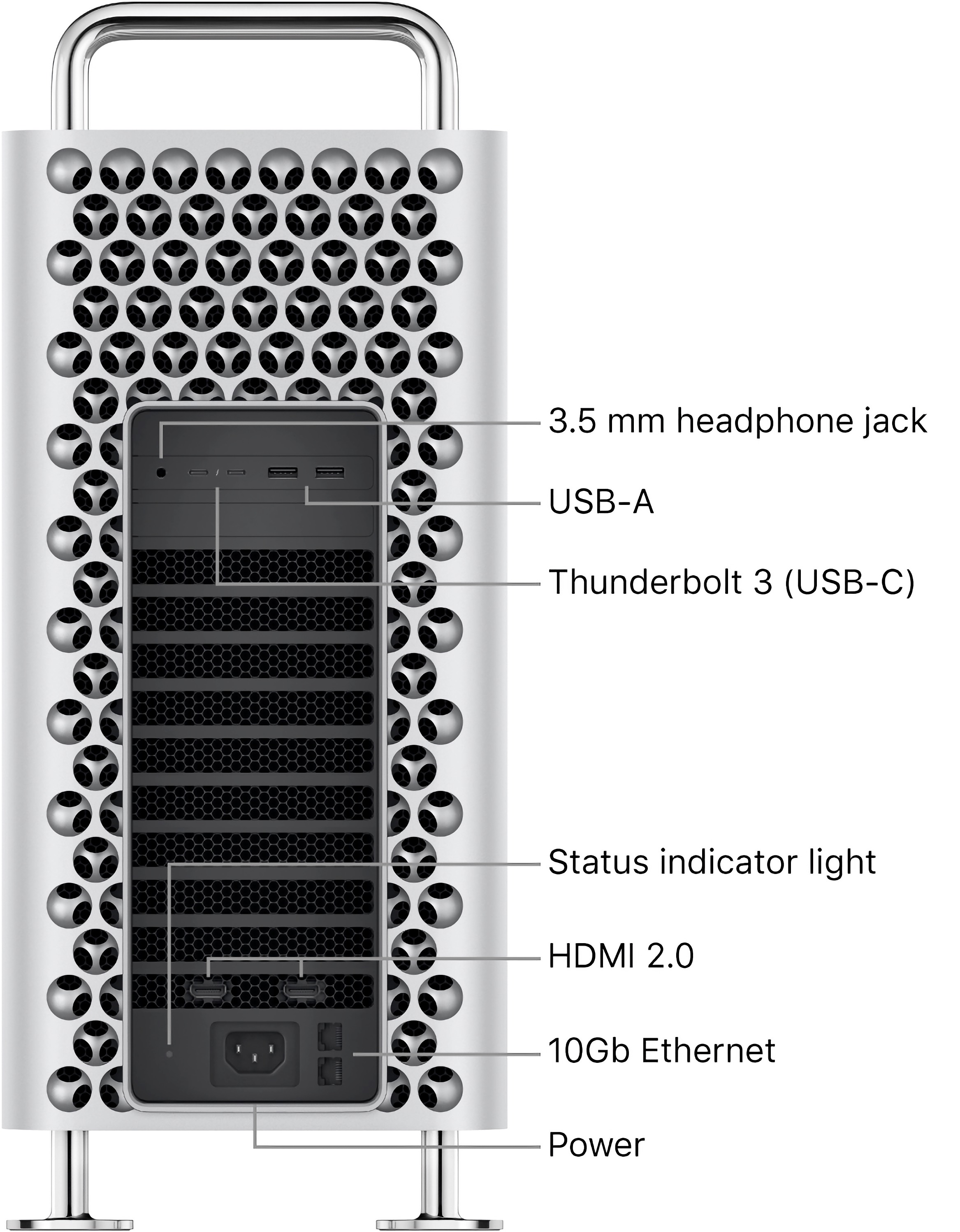

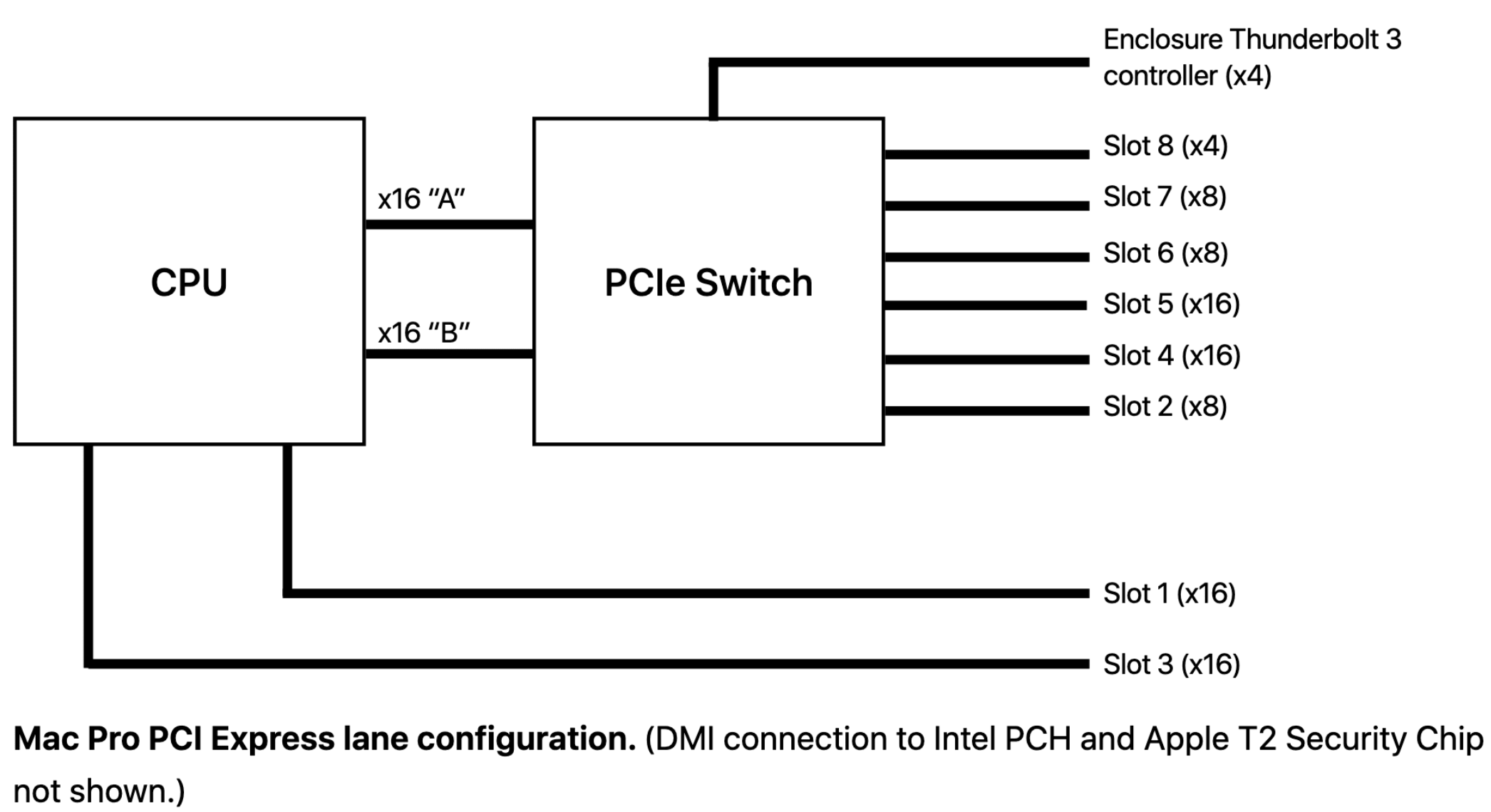

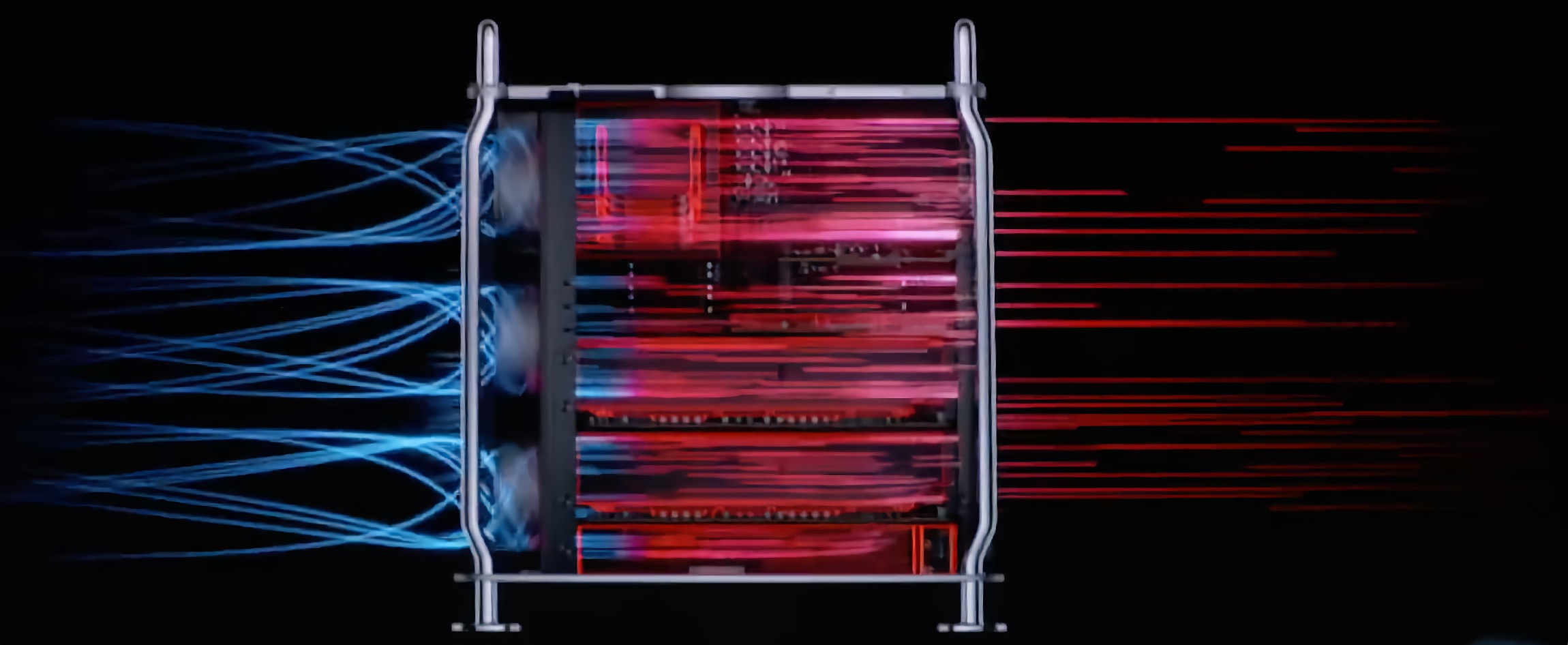

Recently, Apple announced some pretty killer features for Final Cut Pro X, Motion, and Compressor, but they are Apple Silicon only. The updates include machine-learning-based object tracking and faster HEVC and H.264 exports by processing video segments simultaneously across the available media engines. These aren't magical features that should be limited to Apple Silicon, considering the GPU compute power the Mac Pro 2019 offers and its ability to host multiple GPUs.

Video version of this post is attached above.

Apple wants to vector away from Intel Macs, which isn't news, but they're leaving the most dedicated Mac users or professionals in the lurch.

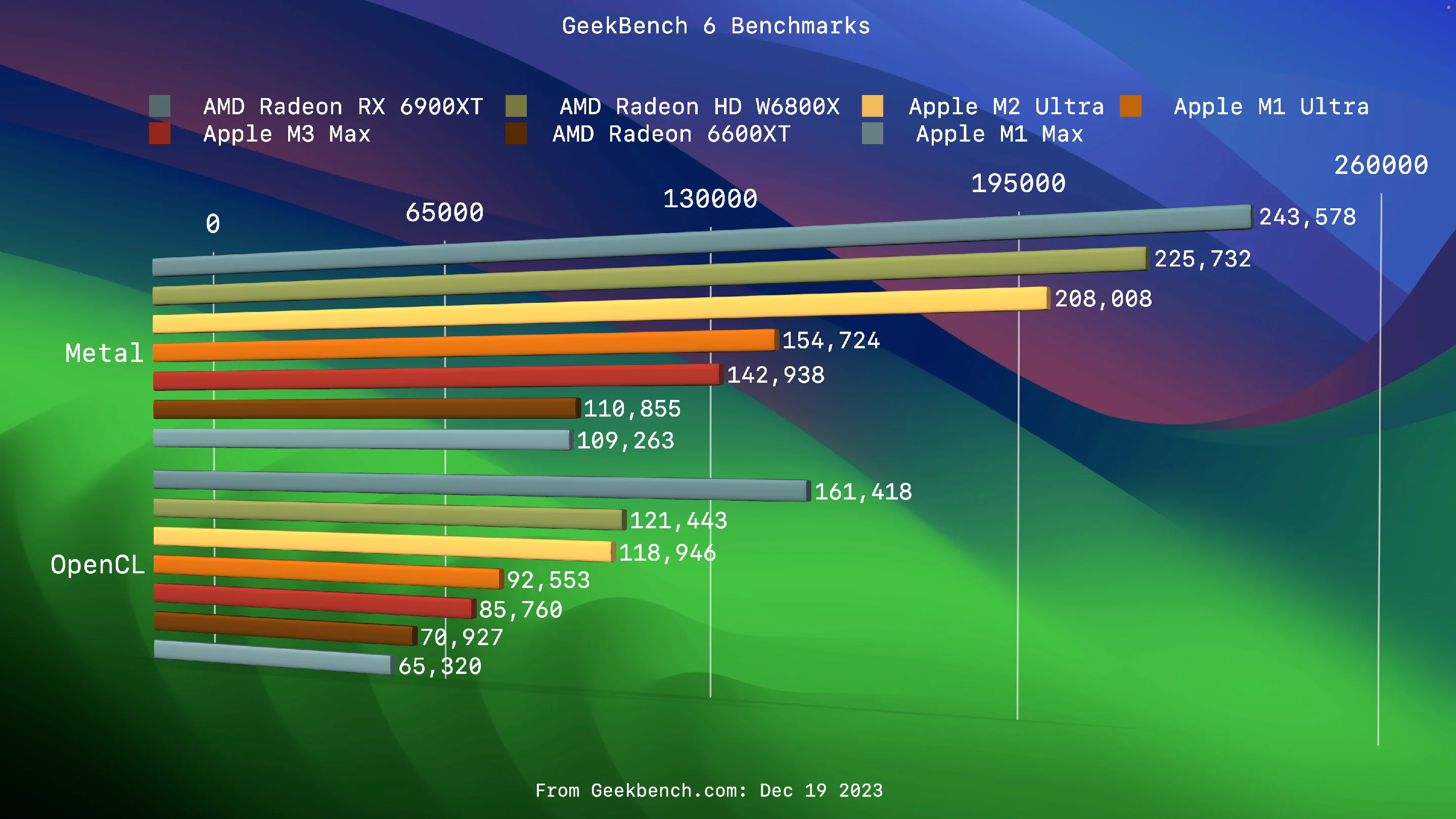

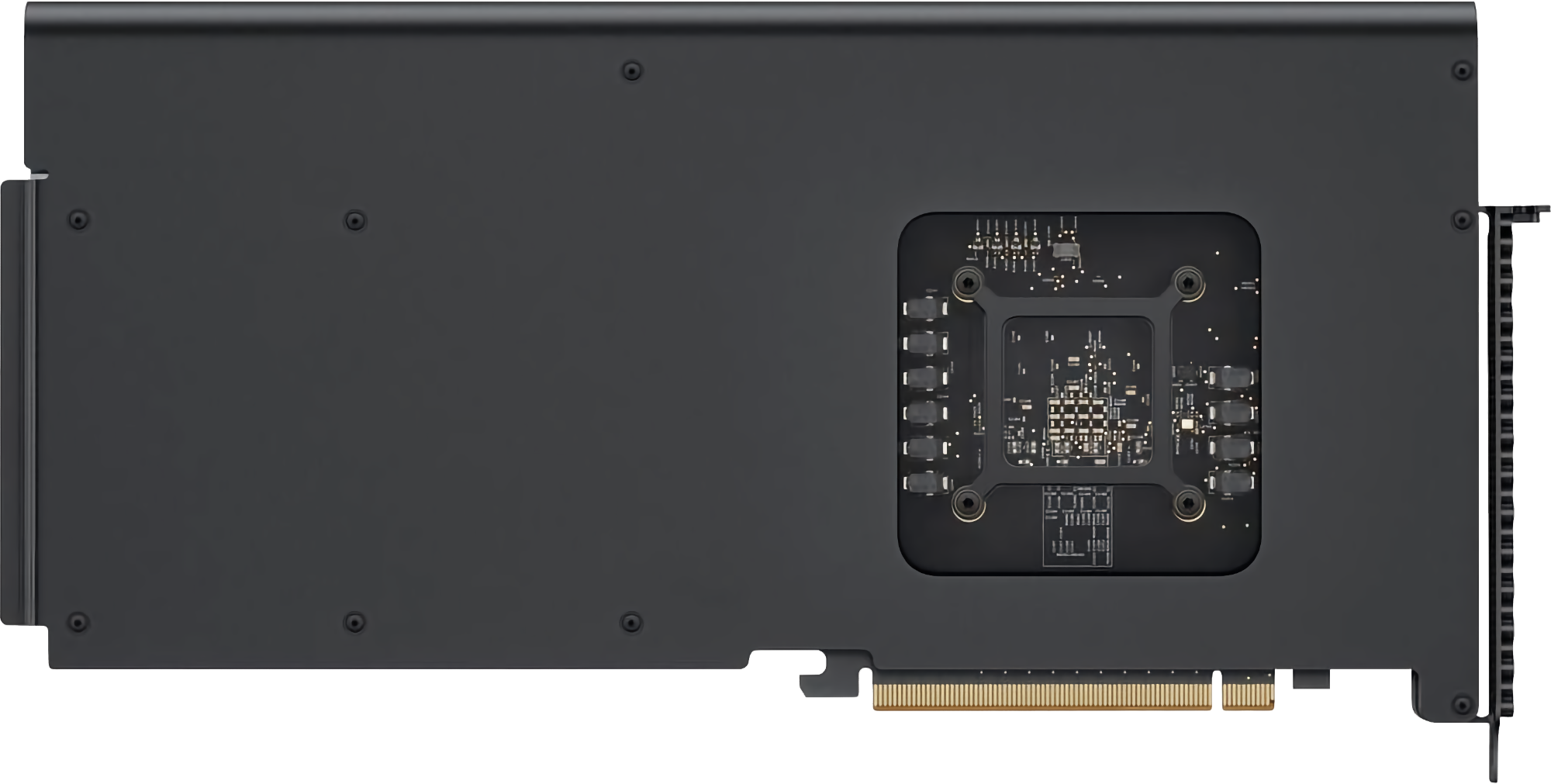

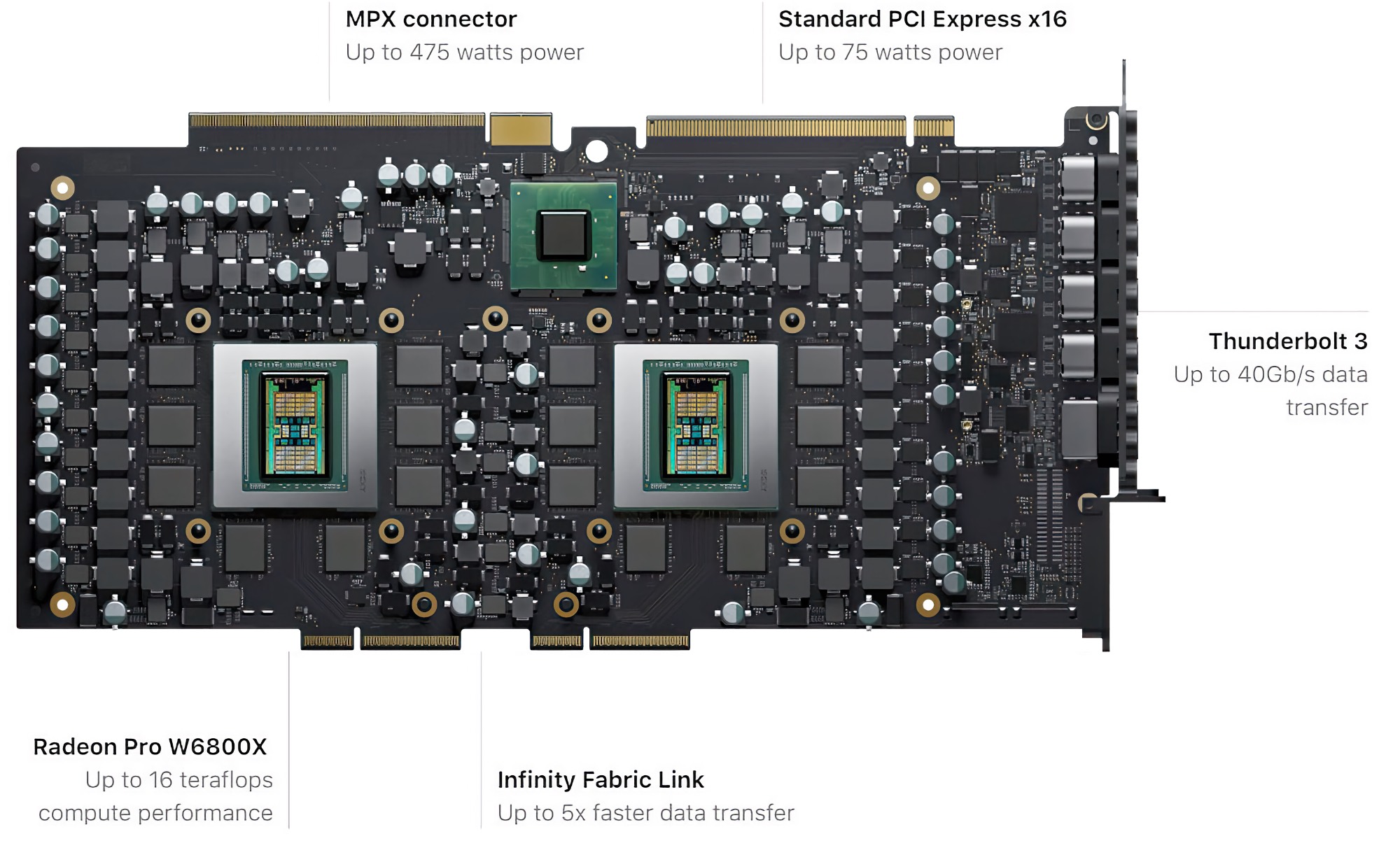

The Mac Pro 2019 represents an inconvenient truth about Apple Silicon. To this day, Apple has not produced a GPU that competes with AMD's highest-tier offerings, and the highest-end GPU supported by macOS is still from the previous generation: the 6900 XT.

Apple’s GPUs lag in comparison to the power-sipping dedicated GPU market…

While Geekbench 6's Metal benchmarks are not the only way to gauge GPU performance, the 6900 XT is oodles above the current lineup of integrated GPUs in sheer compute and will almost certainly outperform the M3 Ultra's GPU. Apple Silicon integrated GPUs have incredibly low TDPs (thermal design power), which is fantastic for laptops but matters much less in the desktop space, where higher TDPs can be handled with bigger power supplies and arrays of fans.

Apple thus far has opted not to support the AMD 7000 series GPUs, aka Navi 31 (released December 13th, 2022). While AMD is lagging behind Nvidia, they managed to make the transition to a 5 nm Chiplet technology.

My wild speculation is Apple does not want to support these, as it'd be an embarrassment for the Apple Silicon GPUs, and Apple would like to move away from all things x86. For reference, the monstrous 7900 XTX is roughly 45-50% faster than the 6900 XT, and it supports hardware AV1 encoding.

This in itself isn't worth a blog post, as Apple's lack of extended support is disappointing but neither surprising nor novel, considering Apple's long history of abandoning computers fast. Think of the poor souls who bought Power Mac G5s with 2x dual-core CPUs or, worse, the earliest adopters of the Intel Macs with Core Duos, each getting 3-4 years of support before Apple abandoned them.

My absurdist Apple Store experience

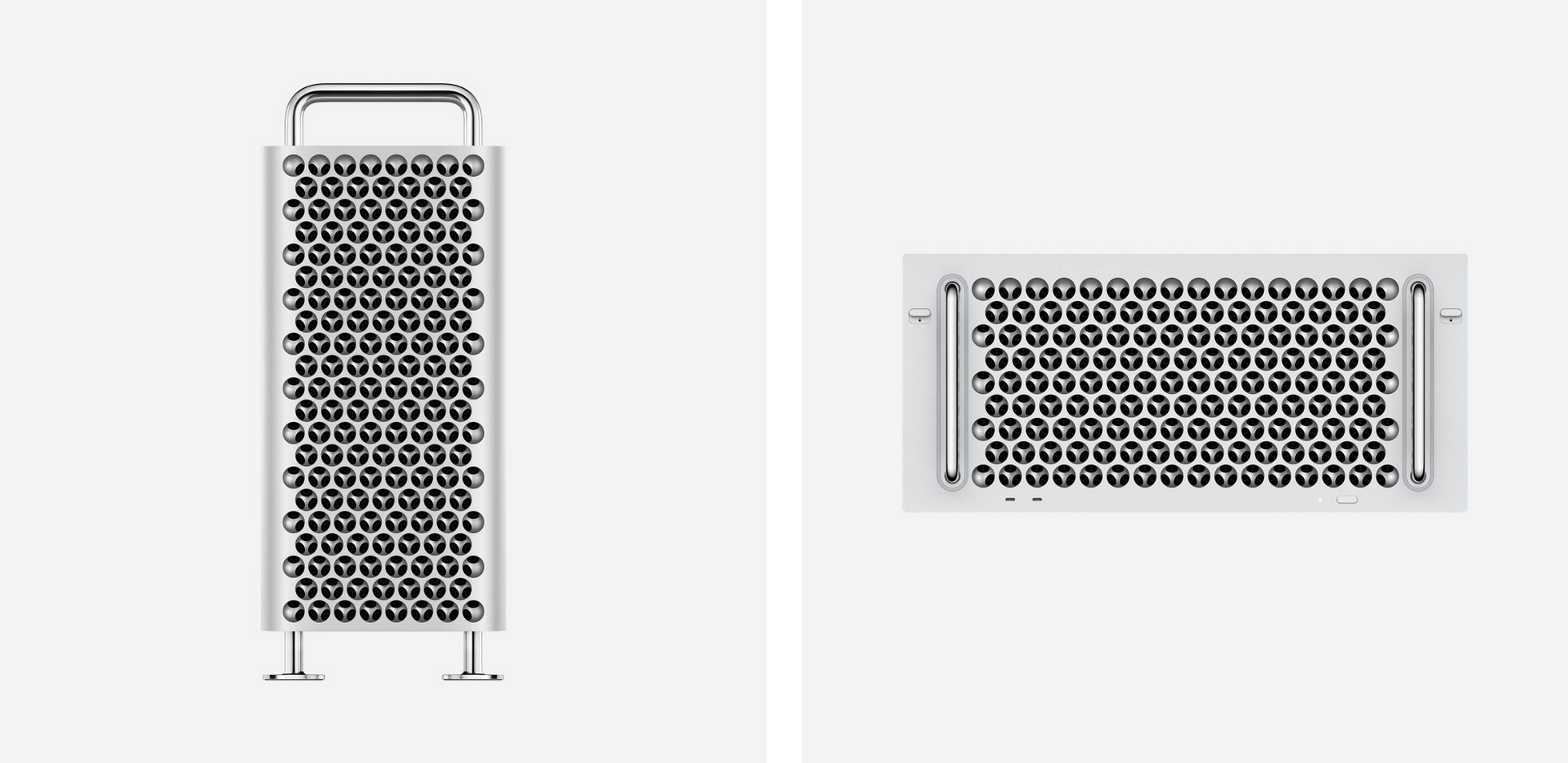

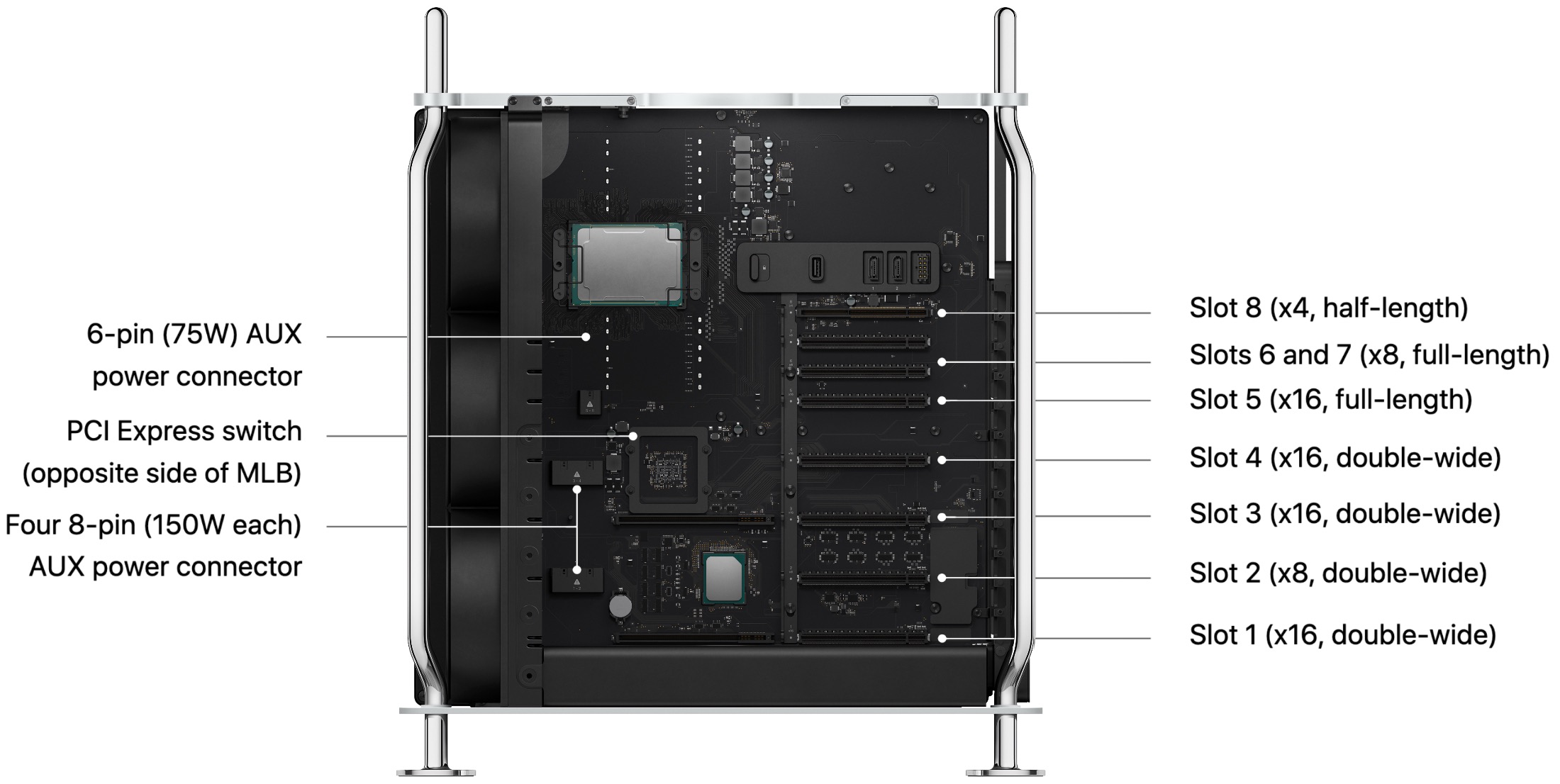

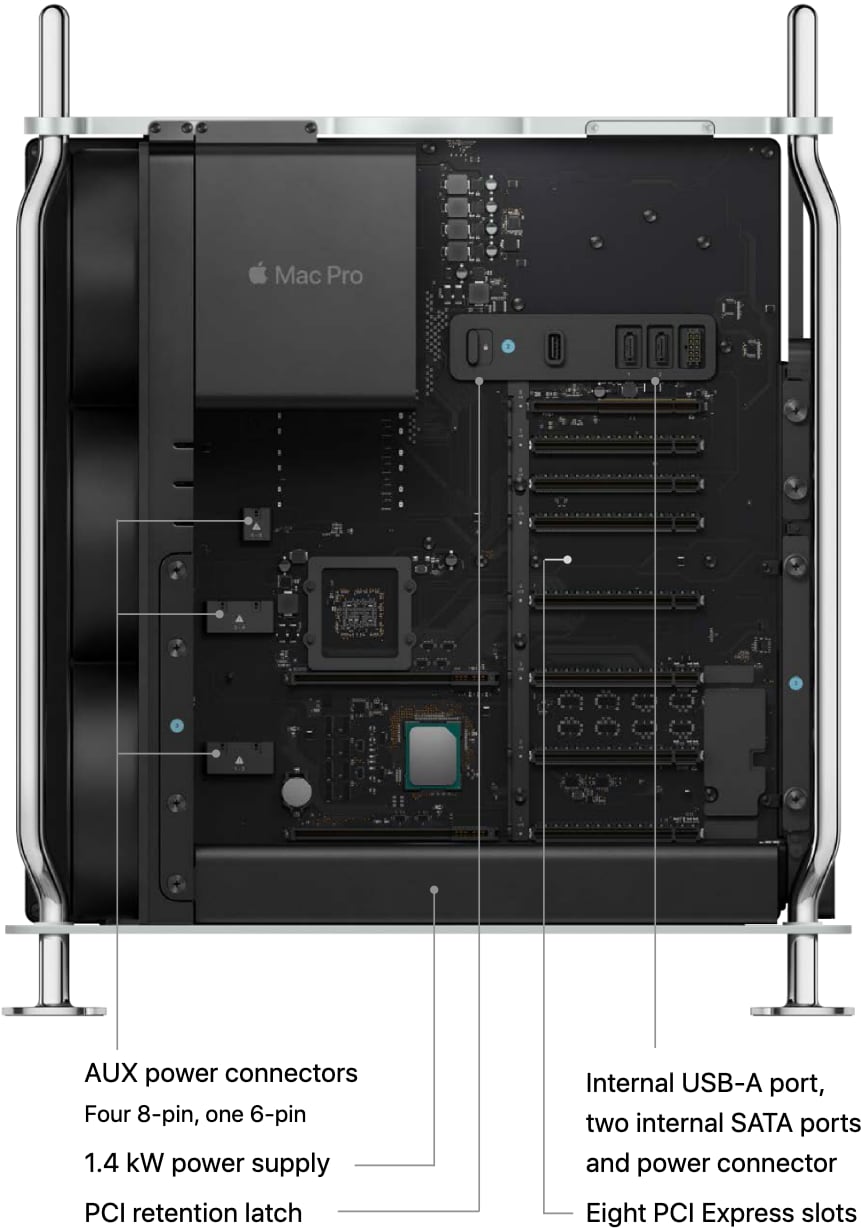

The Mac Pro 2019 is completely modular, but it doesn’t matter.

The most curious thing about the Mac Pro 2019 is how much time and effort Apple spent making a modular computer, one that you cannot repair yourself. Replacing the PSU (power supply unit) is a 5-minute affair, no more difficult than replacing an MPX GPU. This is wonderful... assuming you can actually buy one.

The only way to service a Mac Pro 2019 is via an Apple Store. I discovered this after my Mac Pro 2019 reported a fan issue in diagnostic mode. After I trekked to a local Apple Store lugging my 50+ pound computer, it took Apple roughly a week to reach the same conclusion I had: diagnostic mode was reporting a fan error, and Apple would need to service my Mac Pro. I learned several things:

- Apple will not sell you parts directly.

- Apple requires a technician to install the part even if you're not covered by AppleCare.

- Any parts removed become property of Apple. Under no circumstance will Apple give you your non-functioning part.

- Apple will not replace missing parts.

This is absolutely bonkers, considering the time and effort Apple took to make the Mac Pro 2019 serviceable by a novice, earning it a 9 out of 10 from iFixit, as it requires only Phillips and Torx screwdrivers. I was extra miffed that I couldn't keep my faulty fan array. It's functioning properly but may have a bad sensor, and I wanted to see if I could fix it myself.

Apple may have backed a right-to-repair bill, but Apple itself is rotten to its core.

-

Encrypting USB Drives / External Media / External SSDs, a pictorial guide + troubleshooting

I'm sure there are many tutorials on the web, but I was a bit surprised how a simple UI quirk makes this a lot more confusing than it needs to be. Encrypting external media like USB drives (thumb drives/USB sticks), hard drives, and SSDs can be a bit cumbersome in macOS. This tutorial will walk through the steps needed to create encrypted APFS external media.

Warning! This process will reformat the drive, erasing all of its contents. Be sure to back up any data on the drive you intend to encrypt.

Step 1: Launch Disk Utility

By default, Disk Utility doesn't present the options we need to properly reformat a drive to use encryption.

Step 2: Select Show All Devices

Show All Devices displays the whole physical device, not just its volumes.

Step 3: Highlight the drive you wish to format and click Erase

Step 4: Set the scheme to "GUID partition map"

In the Scheme menu, make sure GUID Partition Map is selected.

Step 5: Select an encryption option from the Format option

Select APFS (Encrypted) or APFS (Case-sensitive, Encrypted). I personally recommend case-sensitive. By default, macOS resolves paths to files while ignoring the casing of the names. In a case-insensitive context, /path/to/file is the same as /PATH/To/File. With case-sensitive pathing, these would lead to different files. Apple recommends using case sensitive.

Step 6: Select password

Be sure to remember this password, as you will not have the option to recover the password. You can save your password in your Apple Keychain so every time the drive is plugged in, you will not be prompted for a password. You can also look up the password in the keychain.
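To make the case-sensitivity choice from Step 5 concrete, here is a small Python sketch that models how two paths compare under each setting. The function name is hypothetical, and it only simulates the lookup rule with string comparison: it never touches a real volume, and real file systems also apply Unicode normalization rules that this ignores.

```python
def same_file(path_a: str, path_b: str, case_sensitive: bool) -> bool:
    """Return True if both paths would name the same file under the given rule.

    Hypothetical helper: it compares strings only and does not query the disk.
    """
    if case_sensitive:
        # Case-sensitive volumes treat every difference in letter case as a new name.
        return path_a == path_b
    # Case-insensitive volumes ignore letter case; casefold() is a stricter
    # lower() that also handles non-ASCII case rules.
    return path_a.casefold() == path_b.casefold()


print(same_file("/path/to/file", "/PATH/To/File", case_sensitive=False))  # True
print(same_file("/path/to/file", "/PATH/To/File", case_sensitive=True))   # False
```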

Troubleshooting!

You may have problems formatting some drives, with Disk Utility reporting the following:

Mounting disk

Creating a new empty APFS Container

Unmounting Volumes

Couldn't unmount disk. : (-69888)

Fixing this requires manually unmounting the volume before formatting by clicking the eject button next to the volume of the drive you're formatting (not the parent).

Unmount, and repeat from Step 3.

-

Gruber back on his BS

If Apple says they support California’s SB 244, it probably just means they actually support it. - John Gruber, Daringfireball

I've fallen out of reading Daring Fireball daily (hence the delayed hot take), or even weekly, when for years it was a must-read, and I think these sorts of defensive takes are probably why. When I penned the first line on my personal blog, I think I was trying to imitate Gruber's wit and quippiness. I even have a Daring Fireball shirt.

That said, my desire to hear a defense of Apple has shrunk as Apple's clownery around the right to repair has grown more and more egregious. These days I'm more Louis Rossmann than John Gruber, for better or worse.

Also, I need to post more....

-

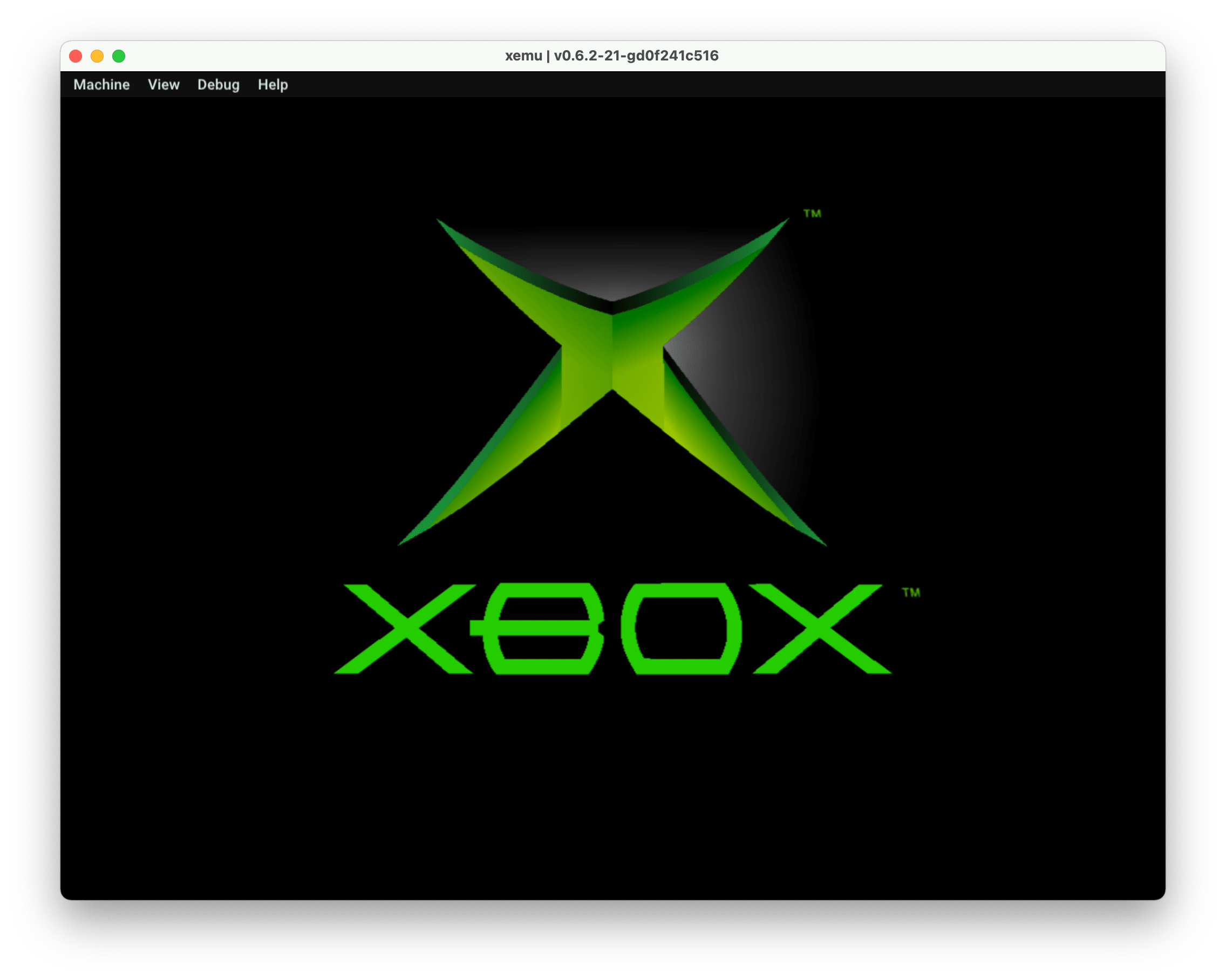

Getting XEMU to work on macOS (Intel / Apple Silicon Xbox emulation)

Getting XEMU running on macOS isn't super difficult, but running games is, as directly ripped Xbox ISOs will not work with XEMU. I've updated this guide with an XEMU video tutorial that dives deeper into Xbox emulation, and I recommend using it in tandem with this guide. Terminal-savvy users can probably follow the written guide, but I'd recommend checking out the video if you encounter issues, as there are a few quirks with the emulator.

- Homebrew (it is possible to do this without Homebrew, but for sanity's sake I will be using it)

- Git. There are multiple ways to install Git, but I'd recommend using `xcode-select --install`

- The XEMU emulator

- System support files

- extract-xiso to make converted ISOs

First, you need to download XEMU. It's updated frequently. Grab it from the official website here. It's a universal binary, so it runs natively on both Apple Silicon and Intel Macs.

After that, you need a few files. These are, legally speaking, the copyrighted parts the emulator needs. I stumbled across them on Reddit. I own an Xbox, so I'll just say I extracted them myself. Please do not ask me where to get these files or games; I'll ignore your request.

The files are:

- Flash (Bios) - Complex_4627v1.03.bin

- MCPX Boot Rom File - mcpx_1.0.bin

- Hard disk Image File - xbox_hdd.qcow2

And the EEPROM, which will be created automatically. Leave the RAM at 64 MB.

You'll have to go to settings and manually assign each of these files. For whatever reason, placing them in the same directory as the emulator worked best for me; it got confused when I didn't. Also, be sure to quit, as you'll need to restart the emulator for the changes to take effect.

Next up is running games. Games are generally in the ISO format. It's up to you to determine how your ethics work on this, and please do not ask me for ISOs. There are places where people back up the games they own, like Archive.org.

This is where Xbox emulation gets tricky. You cannot just play ISOs. You first need to repack them into an ISO format that XEMU will understand.

For that, we have extract-xiso, a command-line utility used to convert ISOs into playable ISOs.

First, we need to download CMake so we can compile extract-xiso to run on our Mac. You'll need Git and Homebrew installed for this to work.

Open up a terminal window and do the following:

Step 1: Dependencies

Run the following to update Homebrew and then install CMake, a utility that generates the files needed to build/compile the application.

```shell
brew update
brew install cmake
```

Step 2: Clone The Repo

From the terminal, navigate to (or create) the directory where you'd like extract-xiso to live, as by default the terminal opens in ~/ (your user directory).

```shell
git clone https://github.com/XboxDev/extract-xiso.git
```

Step 3: Go into the directory

Now we enter the directory where extract-xiso was cloned to.

```shell
cd extract-xiso
```

Step 4: Create a build directory

Next, we need to create a build folder, as per the extract-xiso instructions, and run the cmake/make commands from this directory.

```shell
mkdir build
cd build
```

Step 5: Building the app

Next, we're going to run cmake and, after it completes and creates the makefiles, run make.

```shell
cmake ..
make
```

Now we're ready to prep Xbox ISOs.

Using extract-xiso

From the build folder, we can run the CLI utility.

The utility has the ability to unpack Xbox ISOs and repack them into usable ISOs for XEMU.

There are two ways to go about converting the ISOs. The easier method, which I had mixed success with, is to use:

```shell
./extract-xiso -r path/to/.iso
```

This will convert the ISO into the correct format. It'll rename the original ISO to .iso.old and place the converted ISO in the build folder.

The other is a two-step process.

Step 1: extract the game contents

```shell
./extract-xiso -x /path/to/iso
```

Step 2: repack the game contents

```shell
./extract-xiso -c /path/to/extracted-files
```

A few tips:

XEMU is a fickle beast; quitting it and reopening it after changing settings is best. If you try an ISO that does not work, you must quit and reopen the app with a working ISO. Don't expect perfect emulation, as Xemu is still actively being developed. I found NBA Street Vol. 2 playable, but there are annoying crackles in the audio.
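If you have a pile of ISOs to convert, a small Python wrapper can loop the -r method over a folder. This is my own sketch, not part of extract-xiso: it assumes the script runs from the build folder (so the binary is ./extract-xiso), that the ISOs end in lowercase .iso, and it only uses the -r flag shown above.

```python
import subprocess
import sys
from pathlib import Path


def build_rewrite_commands(iso_dir: Path, tool: str = "./extract-xiso") -> list[list[str]]:
    """Build one `extract-xiso -r` command per .iso file in iso_dir, sorted by name.

    Files already renamed to .iso.old do not match "*.iso", so they are skipped.
    """
    return [[tool, "-r", str(iso)] for iso in sorted(iso_dir.glob("*.iso"))]


def main() -> None:
    # Assumption: the folder of ISOs is the first argument (defaults to the current folder).
    iso_dir = Path(sys.argv[1]) if len(sys.argv) > 1 else Path(".")
    for cmd in build_rewrite_commands(iso_dir):
        print("Running:", " ".join(cmd))
        # check=False so one failing ISO doesn't stop the rest of the batch.
        result = subprocess.run(cmd, check=False)
        if result.returncode != 0:
            print(f"  failed with exit code {result.returncode}")


if __name__ == "__main__":
    main()
```

You'd run it with something like python3 convert_all.py /path/to/isos (the script name is hypothetical). Keeping command building separate from execution makes it easy to print or check the commands before anything touches your files.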

Other Emulation Articles I've written

-

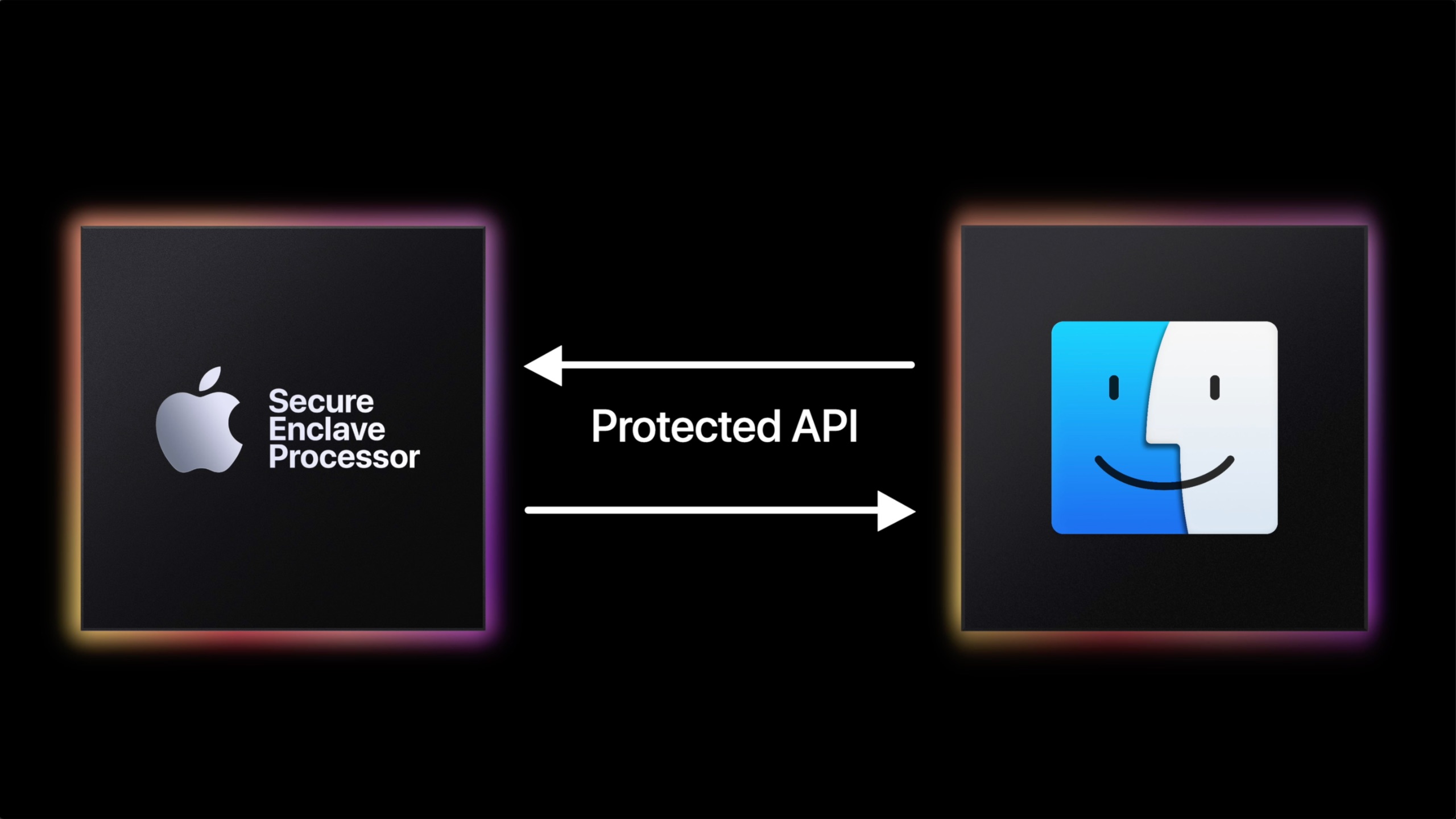

Apple's secret OS and the Secure Enclave Processor

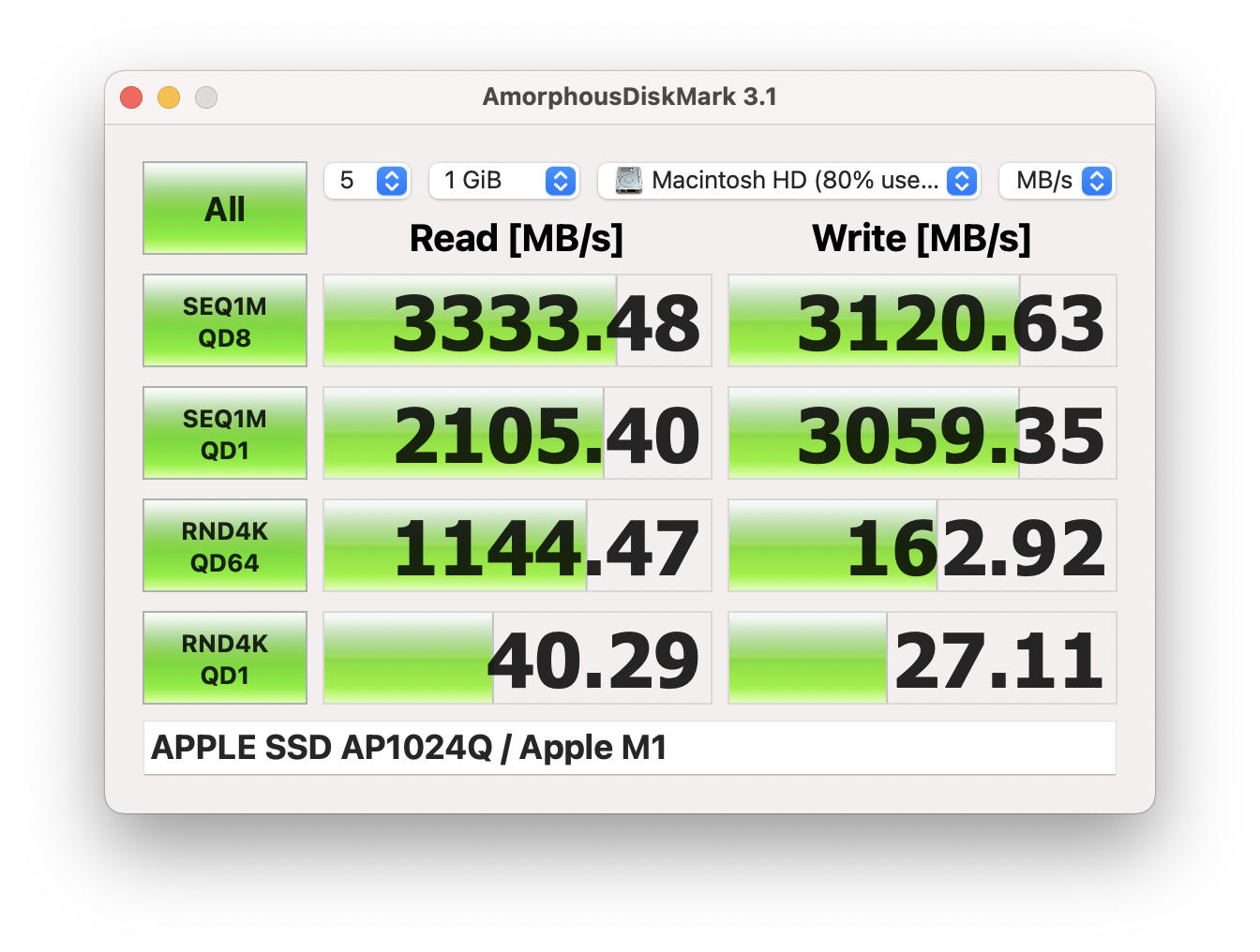

Did you know Apple Silicon Macs run more than one operating system at once in order to function? And this secretive secondary operating system is why you can't upgrade the SSDs on Apple Silicon Macs. But that's not the whole story.

Apple Silicon Macs, as well as T2-equipped Macs, iPhones, iPads, and even the Apple Watch, use a dedicated hardware component known as the Secure Enclave, and it's more than just marketing.

The secure enclave is a separate processor explicitly designed to handle sensitive operations related to security and privacy.

One of the main jobs of the Secure Enclave is to generate and store encryption keys and biometric data like Touch ID, and it needs to protect this data from attacks like physical tampering and side-channel attacks. To do this, it has its own memory and storage and is isolated from the rest of the system.

To do all this, it also runs its own stripped-down operating system, known as Secure Enclave OS or SEPOS, and the rest of the computer can only access it via a few protected APIs.

When a user's password is set up on an Apple Silicon Mac, the password is passed through a one-way hashing algorithm that produces a key used to encrypt the Secure enclave's key. This means that even if someone has access to the password, they cannot access the encryption keys stored in the Secure enclave without the Secure enclave's cooperation.

This is important. This means any encrypted data must pass through the Secure enclave. The operating system and user never get to see this encryption key and can only use APIs to interact with the Secure Enclave.

It also uses a root cryptographic key, called the Secure Enclave ID, which is unique to each device. This key is fused into the Secure Enclave during manufacturing, and not even Apple can access it. This ensures that the encryption keys stored in the Secure Enclave can only be used on the device they were generated on.

So if you stole the physical NAND memory modules out of a MacBook and even had the encryption keys, it would not work, because you would still need the keys to match that Mac's Secure Enclave ID.
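To illustrate why the same password is useless on another device, here is a conceptual Python sketch. It is not Apple's algorithm: PBKDF2 plus an HMAC stand in for the Secure Enclave's internal key derivation, and device_uid is a random stand-in for the fused hardware key, which in reality software can never read.

```python
import hashlib
import hmac
import os


def derive_unlock_key(password: str, device_uid: bytes, salt: bytes) -> bytes:
    """Derive a 256-bit key from the password, entangled with a per-device secret.

    Conceptual stand-in only; the real derivation happens inside the Secure Enclave.
    """
    # Slow, one-way stretch of the password (makes brute-forcing expensive).
    stretched = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)
    # Mix in the device secret so the result is only reproducible on this device.
    return hmac.new(device_uid, stretched, hashlib.sha256).digest()


salt = os.urandom(16)
mac_a_uid = os.urandom(32)  # hypothetical per-device secret for "Mac A"
mac_b_uid = os.urandom(32)  # a different device, e.g. where stolen NAND ends up

key_on_a = derive_unlock_key("correct horse", mac_a_uid, salt)
key_on_b = derive_unlock_key("correct horse", mac_b_uid, salt)

print(key_on_a == derive_unlock_key("correct horse", mac_a_uid, salt))  # True: same device, same password
print(key_on_a == key_on_b)  # False: same password, different device
```

The password alone never becomes the key; it only becomes useful once it is combined with a secret that stays inside one specific device.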

It also helps thwart DMA attacks, where an attacker uses a device with direct memory access, like a Thunderbolt device. Thunderbolt carries PCIe, and one of the main selling points of PCIe is direct memory access. The Secure Enclave's memory is encrypted, and an I/O processor manages communication between the main processor and the Secure Enclave. Because that memory is encrypted and decrypted on the fly, any device trying to attack it will only get encrypted data. Apple refers to this as the Memory Protection Engine.

Handling these tasks is SEPOS. SEPOS is designed to be resistant to attacks, including physical tampering, and it has been certified under the Common Criteria security standard. It's based on the L4 microkernel, which is popular for secure embedded systems, as it has a minimal set of services and runs in a highly privileged mode isolated from user-level code. This starts to get abstract, but the point is that there's a well-defined interface and the kernel is small and focused. Thus, it is easy for security analysts to analyze and verify, and its design allows for specialized, isolated subsystems. Apple took this operating system and modified it for use in the Secure Enclave.

This isn't everything that the secure enclave does, as it does quite a bit, like true random number generation, Secure Neural Engine, AES Engine, Secure Enclave Boot ROM, Secure Enclave Boot Monitor, and so on. I really suggest reading the Apple document on this. It's what I used to make this video.

The end result is if you buy a used Apple Silicon Mac, and the user doesn't provide the firmware password, then there's no way for you to reset it.

SSDs and the Secure Enclave

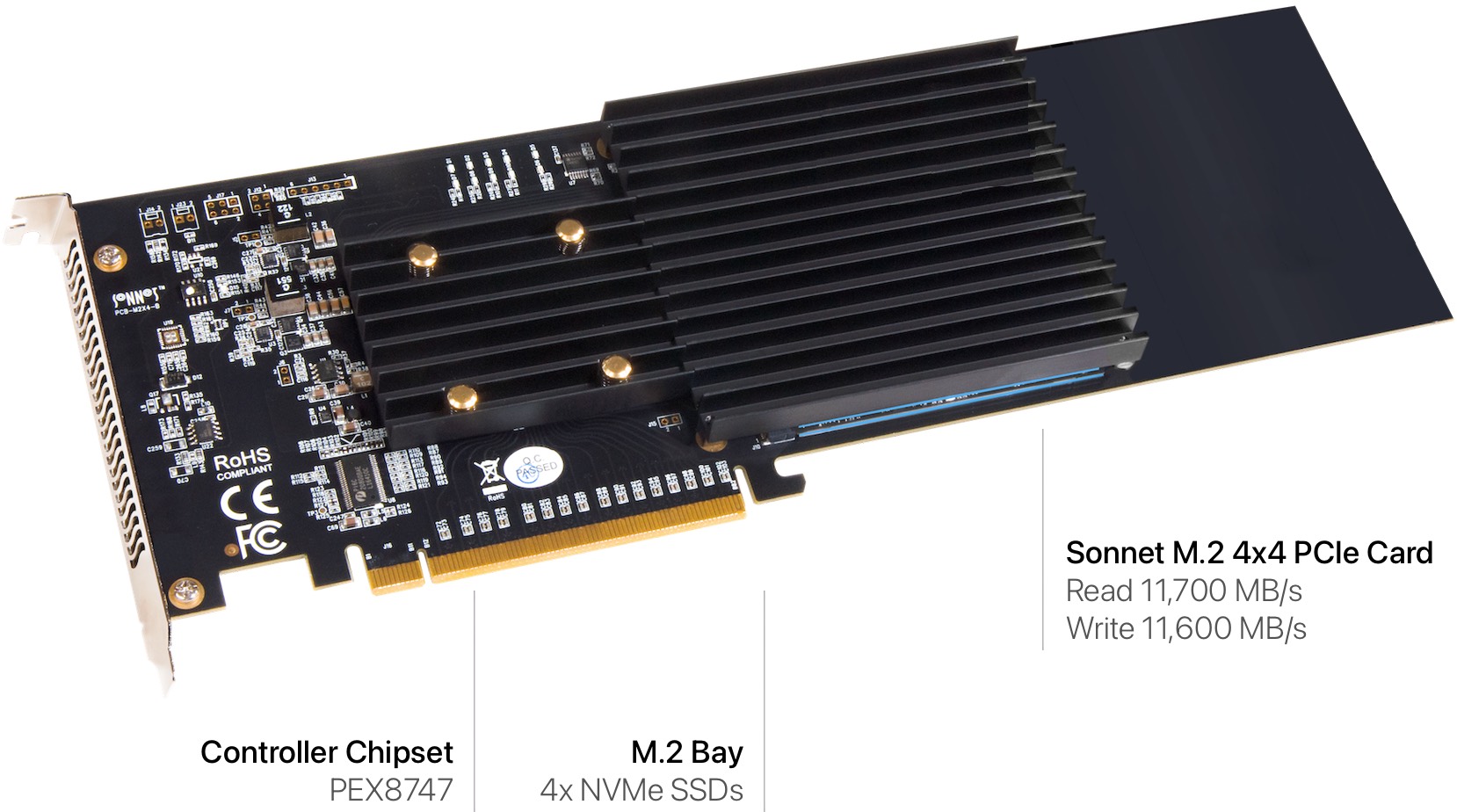

SSDs generally consist of a controller, NAND memory modules, DRAM cache (found on quality SSDs), and an interface.

Apple's Secure Enclave is tightly integrated into Apple's hardware, and the SSD controller itself resides within Apple Silicon. As we previously discussed, the Secure Enclave generates a hardware encryption key, which is used to encrypt the contents of the NAND memory (storage). The key is stored in the Secure Enclave, and the keys are derived from a combination of the Secure Enclave ID and characteristics of the NAND. When a new SSD is installed, it would have to generate a new key. An attacker might then be able to determine the original key by comparing the new key to the old key and identifying the differences between the two; if the new key had different characteristics than the old key, this could potentially reveal information about the old key and compromise security. Apple also uses its own storage implementation over PCIe rather than the standard NVMe protocol, so Apple would also have to harden its security for NVMe.

Now, I'm confident Apple could arrive at a solution, as the Secure Enclave has gone through multiple iterations, with roughly 16 versions at the time of making this video. Apple could give users the ability to swap NVMe SSDs by requiring reduced security settings, or perhaps with an unlock that warns the user about potential encryption key exposure.

Secure Enclave is extremely powerful when it comes to security. In my OpenCore Explained video, I broke down Apple's many security innovations on the operating system side.

I consider myself an informed user, and I would gladly accept any risk that comes with removable storage over being locked into zero upgrades, as the NAND memory that makes up an SSD has a finite lifespan. A memory cell on an SSD can only be written and overwritten so many times before it fails. SSD controllers identify and retire bad blocks, but eventually these hit a critical mass and the drive fails. Apple preventing anyone from swapping these means that every Apple Silicon Mac has a time bomb built into it, and there's nothing end users can do to fix it.

Despite the greenwash marketing, Apple has no qualms about generating e-waste. Also, Apple ships bottom-tier Macs in RAM-starved configurations with laughably small SSDs. When the RAM fills up, the OS swaps memory to the SSD, which happens more often on these machines, and a very small SSD like 256 GB has fewer cells to spread those writes across. This also shortens the NAND's lifespan.
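A bit of back-of-the-envelope arithmetic shows why drive size matters for wear. The sketch below assumes perfect wear leveling (writes spread evenly over every cell) and ignores write amplification, so real numbers would be worse; the 100 TB lifetime workload is a made-up figure, not a measurement.

```python
def full_drive_writes(total_written_tb: float, capacity_tb: float) -> float:
    """Average write cycles per cell, assuming perfect wear leveling."""
    return total_written_tb / capacity_tb


# Hypothetical workload: 100 TB written over the machine's life (swap plus everyday use).
written_tb = 100.0
for capacity_gb in (256, 512, 1024):
    cycles = full_drive_writes(written_tb, capacity_gb / 1000)
    print(f"{capacity_gb} GB drive: ~{cycles:.0f} writes per cell")
```

The same workload costs a 256 GB drive roughly four times as many cycles per cell as a 1 TB drive, and a RAM-starved Mac adds swap writes on top of that.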

Apple chooses not to tackle this on any front, as it knows it makes money no matter how this plays out: a user has to pay the Apple tax upfront on overpriced upgrades and also has to deal with planned obsolescence baked into the hardware and software. Let's not forget Apple will stop supporting its Macs at some point. It gets to hide behind security as a smokescreen.

So when you see right to repair legislation pop up, please support it. Apple makes wonderful products marred by their disdain for the users who use them.

-

OpenCore and OpenCore Legacy Patcher Explained

You're most likely aware of OpenCore and OpenCore Legacy Patcher. OpenCore is a boot loader, whatever that means (which we will get to in depth), and OpenCore Legacy Patcher lets you run macOS on old Macs that are no longer supported by Apple. This blog post and video are a high-level overview so you can understand how OpenCore works and what OpenCore Legacy Patcher is.

Let's step back in time a few years. When users wanted to run macOS on unsupported Macs, they'd turn to modifying the operating system, most commonly with preconfigured patchers like DOSDude1's. These weren't perfect, as you generally had to reapply them each time you updated the OS, no matter how small the update. Even a security update could render your Mac unbootable until repatched. It was simple until it wasn't. Here's what happened:

Over time, macOS has evolved to be more closed at the system level. This started when Apple followed the industry trend toward signed code. Code signing arrived in Mac OS X 10.5 Leopard, and Gatekeeper, introduced with OS X 10.8 Mountain Lion in 2012, began blocking unsigned apps downloaded from outside the Mac App Store by default. Signed code allows the OS to verify the identity of the software developer and ensures that the application has not been tampered with or modified since it was signed.

This evolved in many ways, but the most important is System Integrity Protection (SIP), introduced in OS X 10.11 El Capitan, which restricts the actions the root user and privileged processes can perform on critical system files and folders. Translation: a rogue app will have a much tougher time hacking your OS, as it doesn't have permission to do so.

Integrity protection also exists in the file system itself. APFS, introduced in macOS 10.13 High Sierra, checksums its metadata, which helps catch corruption and tampering. Starting with macOS 10.15 Catalina, the system also lives on a separate volume within the APFS container that is read-only during normal operation, and macOS 11 Big Sur went further by cryptographically sealing that volume. All of this makes macOS a lot less likely to be infected with OS-level malware.
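The tamper-detection half of code signing boils down to "fingerprint the bytes, then check the fingerprint later." Real code signing uses public-key signatures: the developer's private key signs the fingerprint, so an attacker can't simply recompute and replace it. Python's standard library has no signature primitives, so this simplified sketch substitutes a plain SHA-256 digest and shows only the comparison step.

```python
import hashlib
import hmac


def fingerprint(data: bytes) -> str:
    """SHA-256 hex digest of some bytes (stand-in for the hash inside a signature)."""
    return hashlib.sha256(data).hexdigest()


def is_untampered(data: bytes, recorded: str) -> bool:
    """Compare current contents against the fingerprint recorded at 'signing' time."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(fingerprint(data), recorded)


app_bytes = b"pretend these bytes are an app binary"
recorded_at_signing = fingerprint(app_bytes)

print(is_untampered(app_bytes, recorded_at_signing))         # True
print(is_untampered(app_bytes + b"!", recorded_at_signing))  # False: one byte appended
```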

Apple, in even more recent releases, has deprecated Kexts, small modules of code that are designed to extend the functionality of the macOS kernel and other system components, such as device drivers or filesystems. Kexts or kernel extensions are very powerful. Thus, they are a potential vector for malware.

Of course, the focus on security has complicated modifying macOS by 3rd parties; however, some very smart programmers and hackers devised impressive solutions.

On the Hackintosh side, users who wanted to run macOS on PC hardware had created a thriving software scene. Clover became the preferred and essential method of installing macOS on unsupported hardware. Clover was a boot loader and could inject Kexts into macOS.

A bootloader is a piece of software that is responsible for loading the operating system kernel and initializing the hardware devices during the boot process. We'll dig into this more in a minute.

Clover was essential but had shortcomings regarding security, compatibility, configuration and generally required additional patching. Hackintosh users and owners of unsupported Macs faced a similar problem when macOS was on unsupported hardware. A system update could break the entire setup until certain hacks and patches were reapplied.

OpenCore was developed to fix these issues for both unsupported Macs and Hackintoshes, relying on its ability to inject changes during the boot process rather than modifying the OS itself. The advantage is that the OS is left intact, without needing to alter most security settings or patch/hack the OS.

OpenCore and Kexts

OpenCore uses a feature called kext injection. When OpenCore boots the macOS kernel, it injects the kexts listed in its configuration (the Kernel > Add section of config.plist, pointing at kexts stored in OpenCore's EFI/OC/Kexts folder) into the kernel. This allows users to add support for hardware devices that are not natively supported by macOS or to modify system behavior in various ways.
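OpenCore reads the kexts to inject from the Kernel > Add section of its config.plist, an ordered array of entries with keys such as BundlePath and Enabled. The Python sketch below uses a trimmed, hypothetical config fragment (real entries also carry ExecutablePath, PlistPath, MinKernel, MaxKernel, and more) and lists which kexts would be injected. It only reads the plist; it is not how OpenCore itself is implemented.

```python
import plistlib

# A trimmed, hypothetical config.plist fragment; only BundlePath and Enabled are used.
SAMPLE_CONFIG = plistlib.dumps({
    "Kernel": {
        "Add": [
            {"BundlePath": "Lilu.kext", "Enabled": True},
            {"BundlePath": "WhateverGreen.kext", "Enabled": True},
            {"BundlePath": "OldDriver.kext", "Enabled": False},
        ]
    }
})


def kexts_to_inject(config_bytes: bytes) -> list[str]:
    """Return the BundlePath of every enabled Kernel > Add entry, preserving order."""
    config = plistlib.loads(config_bytes)
    entries = config.get("Kernel", {}).get("Add", [])
    return [entry["BundlePath"] for entry in entries if entry.get("Enabled", False)]


print(kexts_to_inject(SAMPLE_CONFIG))  # ['Lilu.kext', 'WhateverGreen.kext']
```

To inspect a real config, you could pass in the bytes of your own EFI/OC/config.plist instead of the sample.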

OpenCore also uses the concept of "Kext Patches" to modify the behavior of existing kexts or to patch the macOS kernel itself. This wasn't unique to OpenCore, as Clover offered kext patching too, but OpenCore's methods are improved. Kext patches are small code snippets that are applied to kexts or the kernel at boot time, which can be used to modify system behavior or to add support for additional hardware components.
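At their core, these patches are find-and-replace operations on bytes. The sketch below models the idea in Python on a copy of the data, mirroring the point that OpenCore patches the kernel in memory at boot rather than editing files on disk. It is a simplification: real OpenCore patch entries also support masks, skip counts, and identifiers that target specific kexts, and the count=0 "every occurrence" rule here is modeled loosely on OpenCore's Count field.

```python
def apply_patch(image: bytes, find: bytes, replace: bytes, count: int = 0) -> bytes:
    """Return a patched copy of image; the original bytes are left untouched.

    count=0 replaces every occurrence; otherwise at most `count` occurrences.
    """
    if len(find) != len(replace):
        raise ValueError("find and replace must be the same length")
    patched = bytearray(image)  # work on a copy, like patching in memory at boot
    start = 0
    done = 0
    while count == 0 or done < count:
        idx = patched.find(find, start)
        if idx == -1:
            break
        patched[idx:idx + len(find)] = replace
        start = idx + len(replace)
        done += 1
    return bytes(patched)


on_disk = b"\x00\x01\xaa\xbb\x02\xaa\xbb"
in_memory = apply_patch(on_disk, b"\xaa\xbb", b"\x90\x90", count=1)
print(on_disk.hex())    # 0001aabb02aabb  (unchanged)
print(in_memory.hex())  # 0001909002aabb  (first match patched)
```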

When the computer boots, OpenCore acts as middleware between the computer's UEFI/EFI firmware (the firmware standard Macs use in place of a traditional BIOS) and macOS. It loads its own EFI drivers and presents the user with a boot picker for selecting the OS. If the user boots macOS, OpenCore performs pre-checks, prepares the necessary modifications, loads the macOS kernel into memory, applies the kernel patches and modifications, and injects kexts for additional hardware support or system modifications. Once done, OpenCore hands control over to the OS, and booting proceeds.

To summarize, each time you boot macOS with OpenCore, it modifies macOS on the fly, meaning you can update your operating system without worrying about losing patches or lowering security settings.

The Case for OpenCore Legacy Patcher

OpenCore is fairly complicated to configure, so users would often share their configs for various hardware setups. For example, a very popular configuration for classic Mac Pro users was Martin Lo's OpenCore configuration. This worked well for users whose hardware matched or resembled the hardware the preconfig targeted, as it created a template for other users to follow and edit.

While this worked, it required a fair amount of technical know-how, reading, and research, especially if your hardware is different in a significant way, such as a different GPU or Network interface. OpenCore Legacy Patcher aimed to make this a point-and-click experience.

OpenCore Legacy Patcher is a community-driven project based on OpenCore, designed with old Macs specifically in mind. It provides a graphical user interface that automates installing OpenCore on Macs that Apple no longer supports.

Unlike PCs, which come in an exceptionally wide range of configurations, Apple's product line is small. This makes it predictable enough for OpenCore Legacy Patcher developers to create configurations based on the hardware it detects, rather than having users modify the OpenCore configuration themselves. Power users can still modify OpenCore manually after using OpenCore Legacy Patcher.

With a few short steps, a user can install OpenCore on an old Mac, allowing them to run recent versions of macOS on hardware that Apple has elected to no longer support. Apple does not make money on old hardware and thus habitually drops support even if the hardware is quite capable of providing a pleasant experience.

Apple's yearly OS updates have also slowly required more and more developer support for Apple's security features and have deprecated older technologies at a fast clip. The end result is that an older copy of macOS may not support the latest and greatest software, even something as crucial as a web browser that works with modern web standards. In contrast, Windows has a much longer support window with its less frequent overhauls.

It makes one realize the value of a paid OS update model, which seems to be what buys Windows its longer support.

OpenCore is the backbone of providing support to older computers. OpenCore Legacy Patcher is a utility used to configure and install OpenCore in a very user-friendly way.

OpenCore is a boot loader designed specifically to work with Apple's current security paradigm and avoids modifying the OS stored on the boot volume. It instead applies the patches on the fly during the boot sequence.

Looking for info on how to install OpenCore?

I've made a separate blog post, The 10 Step Guide to OpenCore Legacy Patcher (with pictures and video), or you can check out the video below.

Happy OpenCore-ing

-

The brute force Drupal 9 content query

While this might be apparent to many, here is a brute force method to search a Drupal 9 site's Body field for any matches of a string. I am not a Drupal expert, so finding the table and field was a bit of a chore.

```sql
SELECT * FROM node__body WHERE body_value LIKE '%string%';
```

The SQL query selects all columns (`*`) from every row of the `node__body` table, where the post content lives, whose `body_value` matches the pattern. The `%` symbol is a wildcard operator in SQL that matches any number of characters before or after the specified pattern, so be sure to surround your search string with `%`. For example, if searching for "Hello World!", it would be `%Hello World!%`. You can search for HTML within the individual fields as well. Of course, you can easily search for multiple strings at once by using AND.
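If the string you are searching for itself contains `%` or `_` (both of which LIKE treats as wildcards), the pattern will match more than intended. The sketch below is a hedged illustration rather than part of the original workflow: it uses Python's built-in `sqlite3` module with an in-memory table standing in for `node__body` (only three of its columns, with made-up rows), escapes the wildcards, and passes the pattern as a bound parameter instead of pasting it into the SQL. The helper name `like_pattern` is hypothetical. The `ESCAPE '\'` clause is written for SQLite; on MySQL/MariaDB, backslash is already the default LIKE escape character.

```python
import sqlite3

def like_pattern(term: str, escape: str = "\\") -> str:
    """Wrap a literal search term in % wildcards, escaping any %, _ or
    escape characters inside the term so they match literally."""
    escaped = (
        term.replace(escape, escape + escape)
        .replace("%", escape + "%")
        .replace("_", escape + "_")
    )
    return f"%{escaped}%"

# Hypothetical stand-in for Drupal's node__body table (a few columns only).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE node__body (entity_id INTEGER, bundle TEXT, body_value TEXT)")
conn.executemany(
    "INSERT INTO node__body VALUES (?, ?, ?)",
    [
        (1, "article", "<p>Hello World!</p>"),
        (2, "page", "Discount: 100% off"),
        (3, "page", "Discount: 100 percent off"),
    ],
)

# Naive pattern: the % in "100%" acts as a wildcard, so rows 2 and 3 match.
naive = conn.execute(
    "SELECT entity_id FROM node__body WHERE body_value LIKE ? ORDER BY entity_id",
    ("%100%%",),
).fetchall()

# Escaped pattern passed as a bound parameter: only the literal "100%" matches.
rows = conn.execute(
    "SELECT entity_id FROM node__body WHERE body_value LIKE ? ESCAPE '\\' ORDER BY entity_id",
    (like_pattern("100%"),),
).fetchall()

print(naive)  # [(2,), (3,)]
print(rows)   # [(2,)]
```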

```sql
SELECT * FROM node__body WHERE body_value LIKE '%string%' AND body_value LIKE '%string2%';
```

Using a GUI like Sequel Ace, you should be able to see which bundle each match is in (if you've set up different page types) and its `entity_id`, which can, of course, be accessed via PHP or simply by going to sitename.com/node/entity_id, or sitename.com/node/entity_id/edit to get directly to the admin "edit" panel for the node.
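If you run these searches regularly, they are easy to script. A minimal sketch, assuming Python and again using an in-memory SQLite table as a stand-in for `node__body` (on a real Drupal 9 site you would connect to its MySQL/MariaDB database with a driver of your choice, many of which use `%s` placeholders instead of `?`): it builds one `body_value LIKE ?` condition per search term joined with AND, then turns each matching node ID (the `entity_id` column) into a `/node/<id>/edit` link. `find_nodes`, `edit_urls`, the sample rows, and the `https://sitename.com` base URL are placeholders for illustration, and terms containing `%` or `_` are not escaped here.

```python
import sqlite3

def find_nodes(conn, terms):
    """Return (entity_id, bundle) for nodes whose body contains every term."""
    if not terms:
        raise ValueError("need at least one search term")
    # One LIKE condition per term; all of them must match (AND).
    where = " AND ".join("body_value LIKE ?" for _ in terms)
    sql = f"SELECT DISTINCT entity_id, bundle FROM node__body WHERE {where} ORDER BY entity_id"
    return conn.execute(sql, [f"%{term}%" for term in terms]).fetchall()

def edit_urls(base_url, rows):
    """Turn matching node IDs into links to each node's admin edit form."""
    base = base_url.rstrip("/")
    return [f"{base}/node/{entity_id}/edit" for entity_id, _bundle in rows]

# Hypothetical sample data standing in for a real node__body table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE node__body (entity_id INTEGER, bundle TEXT, body_value TEXT)")
conn.executemany(
    "INSERT INTO node__body VALUES (?, ?, ?)",
    [(1, "article", "<p>Hello World!</p>"), (2, "page", "Hello there"), (3, "page", "World news")],
)

matches = find_nodes(conn, ["Hello", "World"])
print(matches)                                      # [(1, 'article')]
print(edit_urls("https://sitename.com/", matches))  # ['https://sitename.com/node/1/edit']
```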

-

The 10 Step Guide to OpenCore Legacy Patcher (with pictures and video)

OpenCore Legacy Patcher recently hit version 0.6.x, an important milestone. It finally added support for Non-Metal Graphics Acceleration, older wireless network chipsets, UHCI/OHCI USB 1.1 Controllers, and AMD Vega Graphics on pre-Haswell Macs (Mac Pro 2008-2012). It continues to be updated, with recent advances to support macOS 13.3.

The goal of this guide is to provide a very granular step-by-step guide to installing OpenCore Legacy Patcher on a supported Mac for users who are new to the world of OpenCore.

As a quick primer, OpenCore is a boot loader. OpenCore functions as middleware between the firmware and macOS. This allows changes to be injected without modifying the OS. Through these modifications, discontinued hardware can be supported. OpenCore was designed to replace Clover and other Hackintosh solutions as a way to avoid repeatedly patching after minor OS changes. However, OpenCore proved useful not only for Hackintosh owners but for Mac owners as well.

OpenCore Legacy Patcher (OCLP) is a utility that automates the installation of OpenCore on older Macs that Apple no longer supports and has matured to a point-and-click utility. Users do not have to understand esoteric software configuration in OpenCore and instead can rely on a community to test the latest developments from the OpenCore community and fold them into a package.

If you're looking for a detailed explanation of OpenCore, I wrote a separate article explaining OpenCore and OCLP or you can watch the video below.

OCLP's accomplishments are nothing short of amazing but also contradictory, as it really should not need to exist. OCLP shows that Apple's decision to drop older Macs has little to do with user experience and everything to do with planned obsolescence, since older Macs are more than capable of running modern macOS versions and even flourish. For a company that loves to talk about its efforts to be "Green", the best thing it could do to keep eWaste out of dumpsters is to continue supporting hardware for as long as possible. Regardless of where you live, please support right-to-repair in your city, state, province, or country.

This guide also exists in video format as "How to install OpenCore Legacy Patcher in 5 minutes".

Requirements:

- 16 GB+ USB flash drive

- Supported Mac (see OCLP's website)

Before you install OpenCore Legacy Patcher on your Mac, go to the OCLP website and confirm your old Intel Mac is supported. Some Macs may not support the latest version of macOS. OCLP's team is chipping away at support for older Macs. Most post-2010 Macs are well supported, but with caveats such as non-functioning Bluetooth. Compatibility also differs between OS versions, generally with the latest OS having the least support, so users will likely have better results running a semi-recent OS rather than the most recent one.

Next, you will need a 16 GB or larger USB flash drive. Not all USB flash drives are bootable, but most are. I used a SanDisk 128 GB Ultra Fit USB 3.1, which can often be bought for $15 (the 64 GB version is generally $10), and while it's not the fastest drive, it's made by a reputable company and will be faster than most $10 USB flash drives.

Lastly, always check OpenCore's website before updating to the very latest OS release. I highly recommend waiting a few weeks after point releases that are major revisions, like 13.3 (not 13.3.1), so the OpenCore community can test and vet the OS update with various hardware configurations.

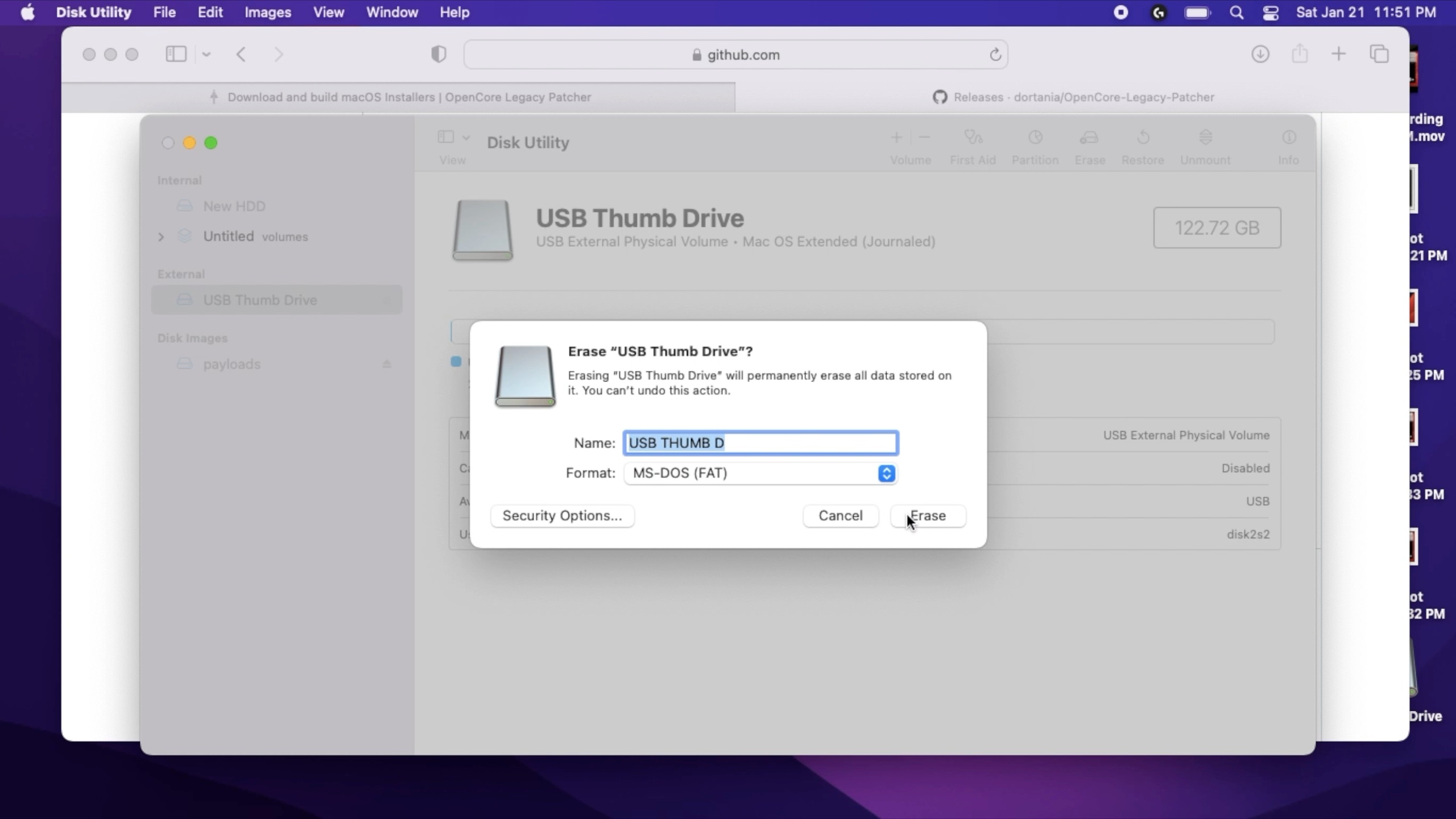

Step 1) Format Drive

Plug your USB flash drive into your Mac (or a PC). Open Disk Utility on your Mac. It is located in the Utilities folder in your Applications folder, or is easily found using Spotlight. Highlight your USB thumb drive and select Erase. Format your flash drive as FAT32, which macOS lists as MS-DOS (FAT). As a friendly reminder, formatting will erase all the contents of your flash drive, so if it holds any important information, back it up before performing this step. This step is required so the OCLP utility will be able to recognize the drive; OCLP will format it again later.
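If you prefer Terminal over Disk Utility, macOS's `diskutil` command can perform the same erase. The hedged Python sketch below only builds the command and, by default, prints it instead of running it, because erasing the wrong disk is destructive. It assumes you have already identified the flash drive's whole-disk identifier (for example `/dev/disk4`) with `diskutil list`. The `OCLP` volume name, the device, and the helper names are placeholders, and the uppercase, 11-character name check is a conservative choice for FAT32 labels made by this sketch, not a documented `diskutil` rule.

```python
import re
import subprocess

def erase_command(device: str, name: str = "OCLP") -> list:
    """Build a diskutil command that erases a whole disk as FAT32 with an MBR partition map."""
    # Refuse partition identifiers such as /dev/disk4s1; eraseDisk works on whole disks.
    if not re.fullmatch(r"/dev/disk\d+", device):
        raise ValueError(f"expected a whole-disk identifier like /dev/disk4, got {device!r}")
    # Conservative FAT32 label: 1-11 uppercase letters, digits, or underscores.
    if not re.fullmatch(r"[A-Z0-9_]{1,11}", name):
        raise ValueError("use a volume name of 1-11 uppercase letters, digits, or underscores")
    return ["diskutil", "eraseDisk", "FAT32", name, "MBRFormat", device]

def erase_usb(device: str, name: str = "OCLP", dry_run: bool = True) -> None:
    """Print the command (default) or, with dry_run=False, actually erase the disk."""
    cmd = erase_command(device, name)
    if dry_run:
        print("Would run:", " ".join(cmd))
    else:
        subprocess.run(cmd, check=True)  # ERASES everything on `device`

# Hypothetical device identifier; this only prints the command.
erase_usb("/dev/disk4")
```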

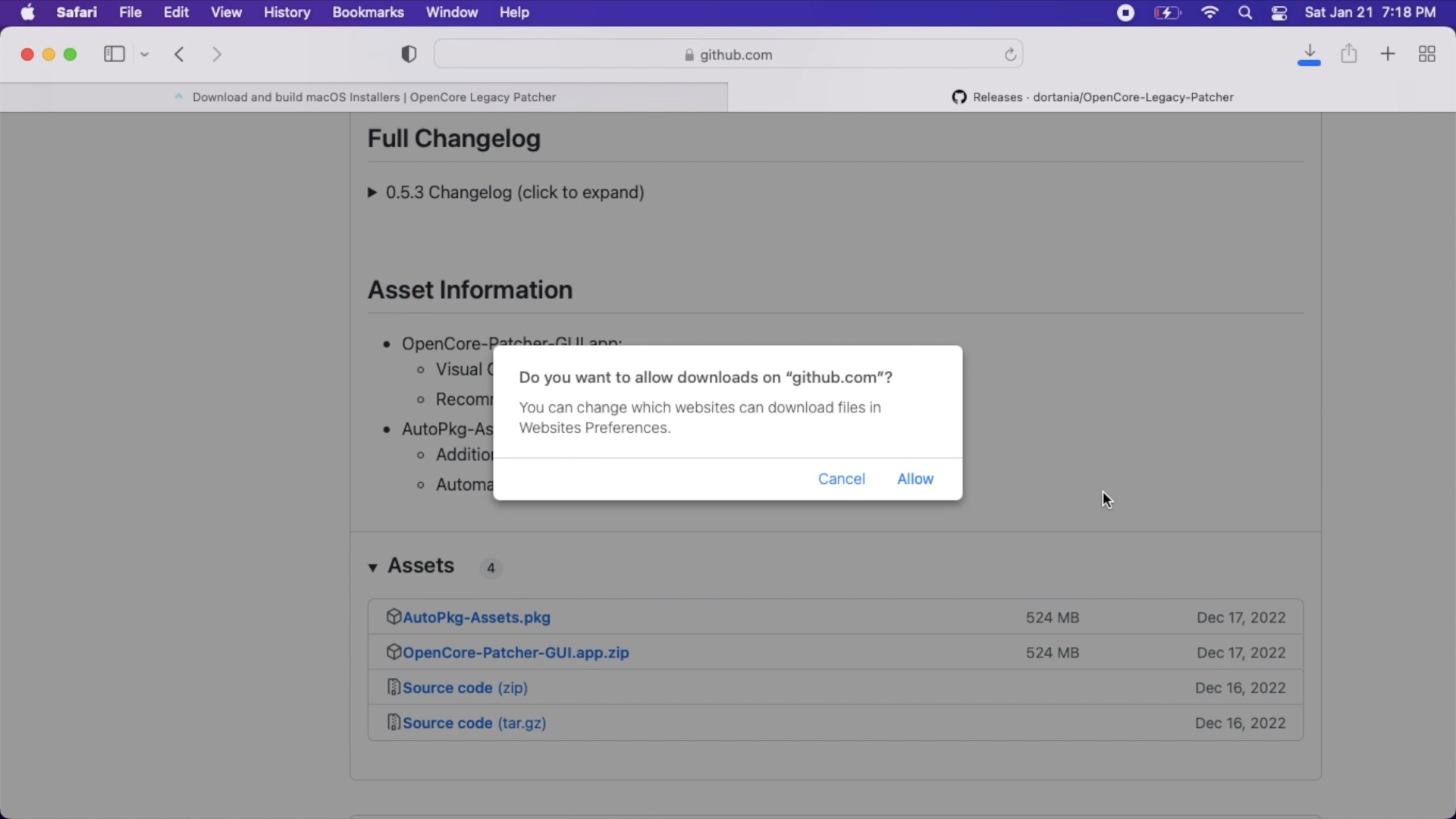

Step 2) Download OCLP

Confirm support for your Mac at OCLP's website, then download OpenCore Legacy Patcher from the OCLP GitHub page. As a general rule, you should download the latest version of OCLP. OCLP receives semi-regular updates that may improve your Mac, so if you are already using OCLP, you can upgrade it in the future.

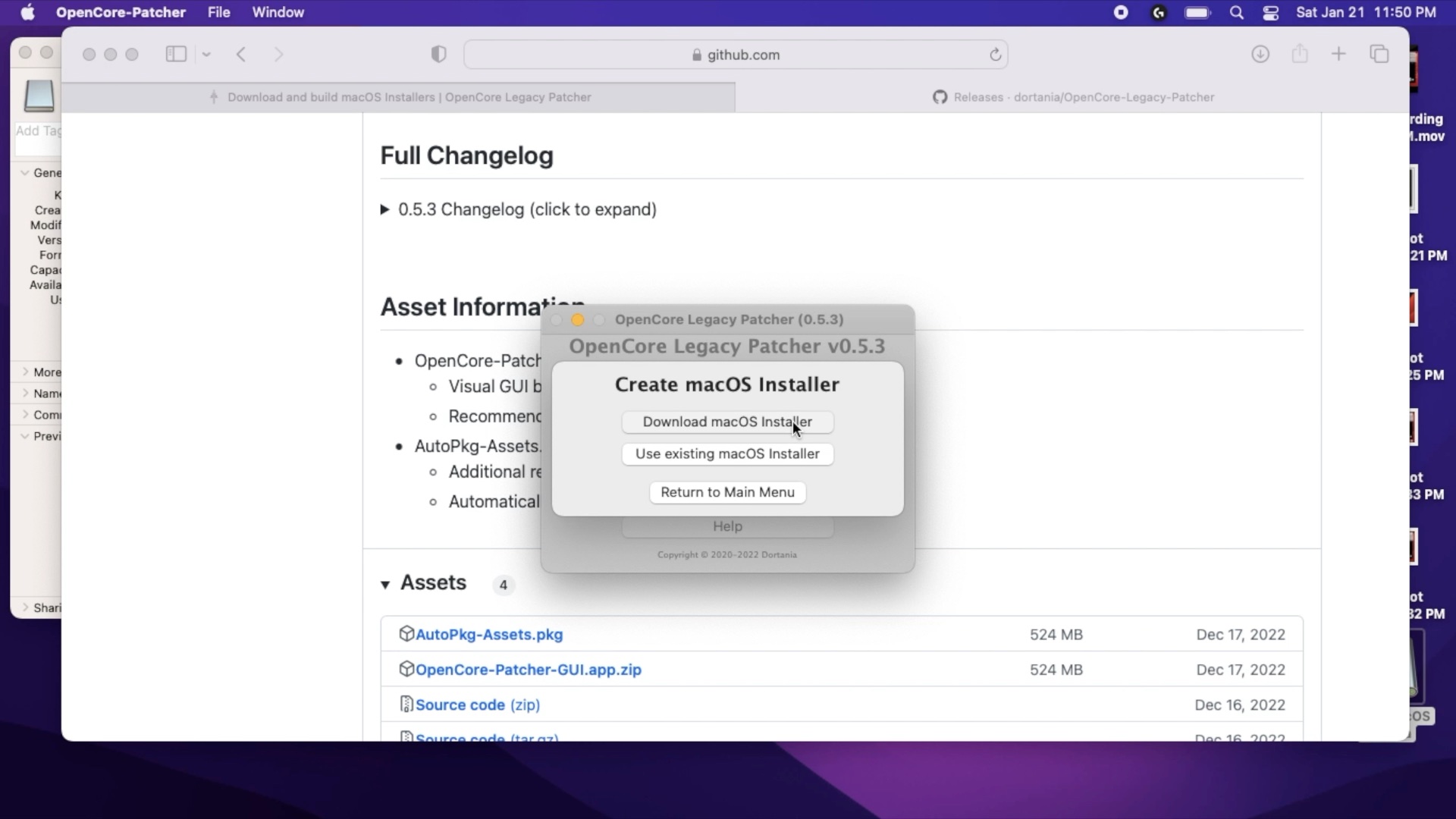

Step 3) Launch OCLP

Depending on your OS/Browser settings you may need to decompress the zip file by double clicking it. Launch OCLP and select Create macOS installer.

Step 4) Download macOS

On the "Create macOS installer" screen, select Download macOS if you do not already have a version downloaded on your Mac, and then select the version of macOS you'd like to download. Downloading will take some time as the installer is 12 GB.

Step 5) Select your OS

The utility should forward you to the Select macOS screen; select your downloaded OS. If OCLP does not automatically take you to this screen, return to OCLP's first screen, select Create macOS Installer, and choose your existing macOS installer.

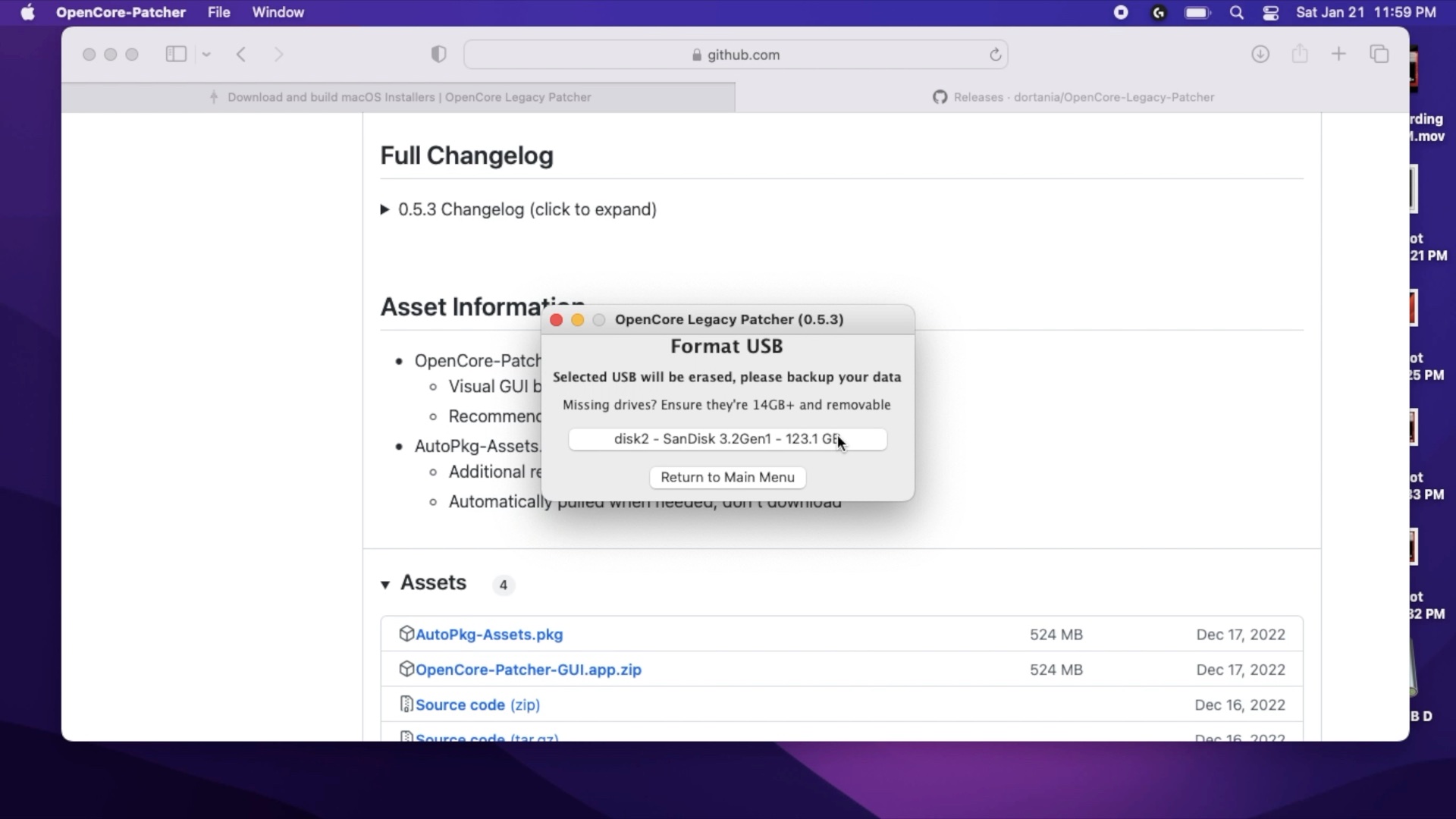

Step 6) Install to USB drive

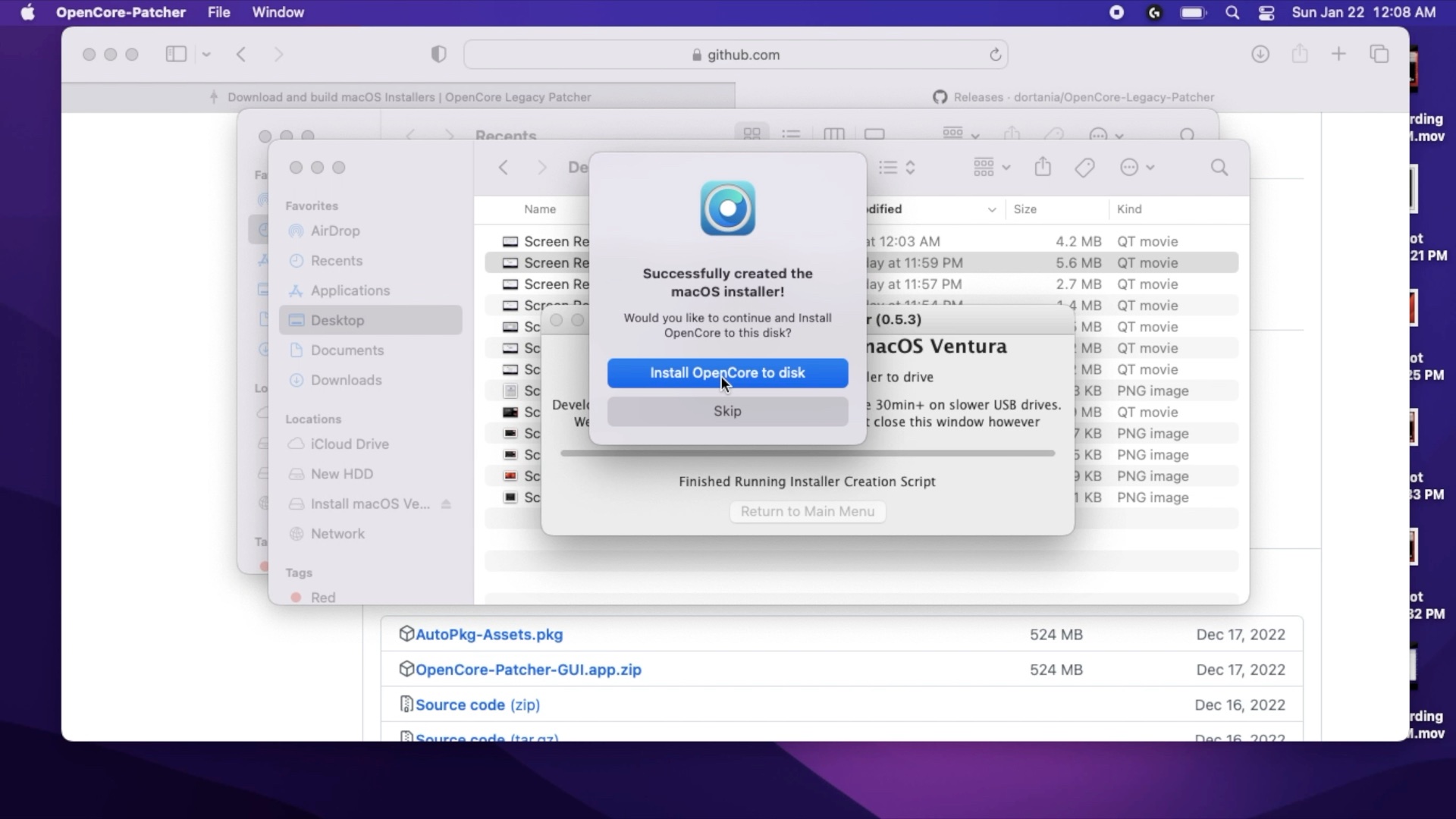

On the Format USB screen, select your USB drive. This will likely require your admin password; enter it. This will take a significant amount of time, depending on your flash drive's speed and your Mac. It is a two-stage process: after the files are copied over, OCLP verifies them. You can leave this process running in the background while you perform other tasks, or leave your computer unattended while it completes. The installer will warn that it will take roughly half an hour, although I found it took less time.

Step 7) Install OpenCore To Disk

Once OCLP has copied the installer to the USB drive, it should ask whether you'd like to install OpenCore to disk. Select Install OpenCore to disk. It'll build the OpenCore settings for your Mac. Select install to disk again, and choose your USB drive.

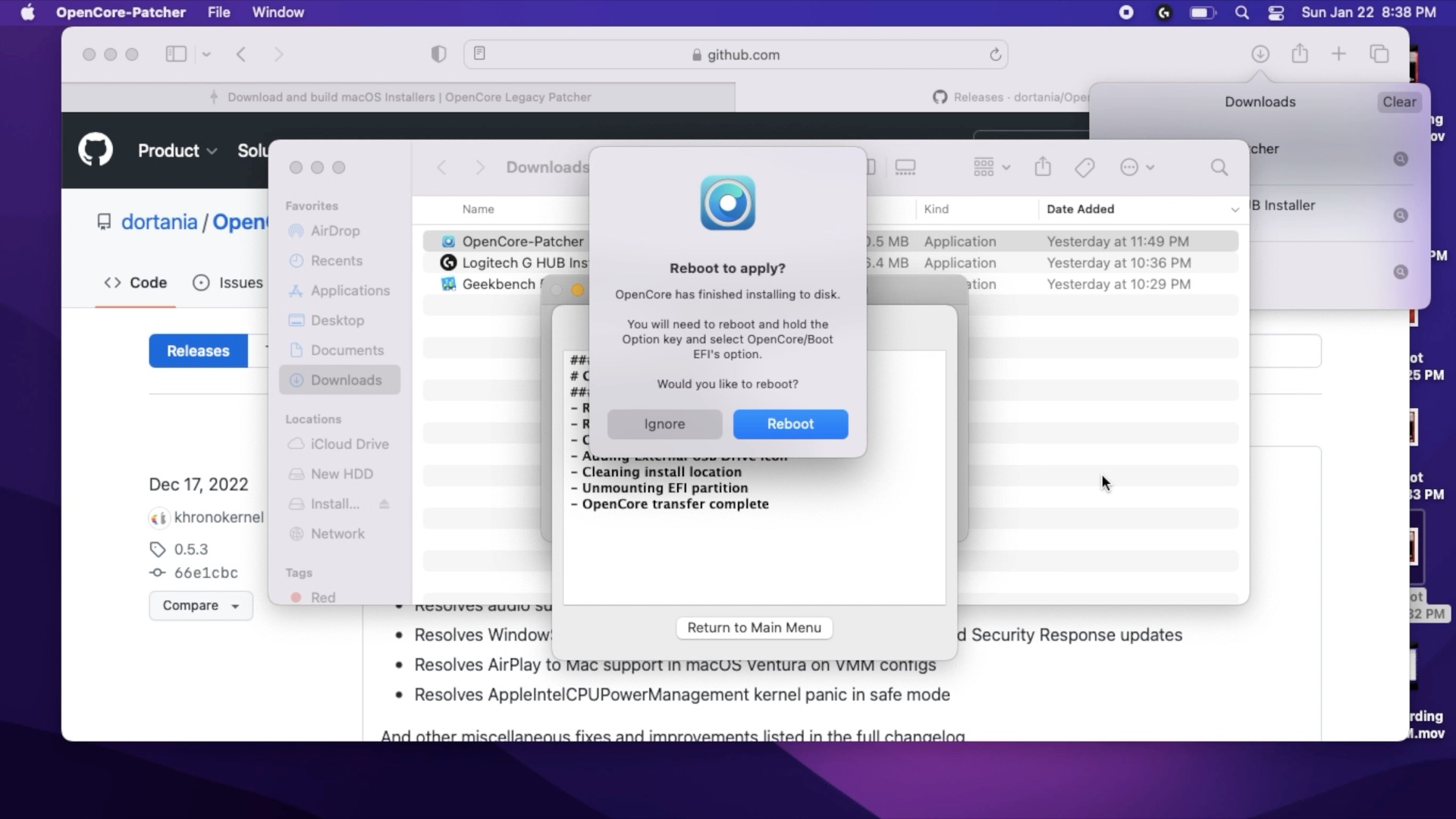

Step 8) Reboot

Once the installer has finished, it should ask you if you'd like to reboot. It should display the following: "You will need to reboot and hold the Option key and select OpenCore/Boot EFI's Option". Holding down the option key while your Mac is booting will cause it to boot into the boot picker.

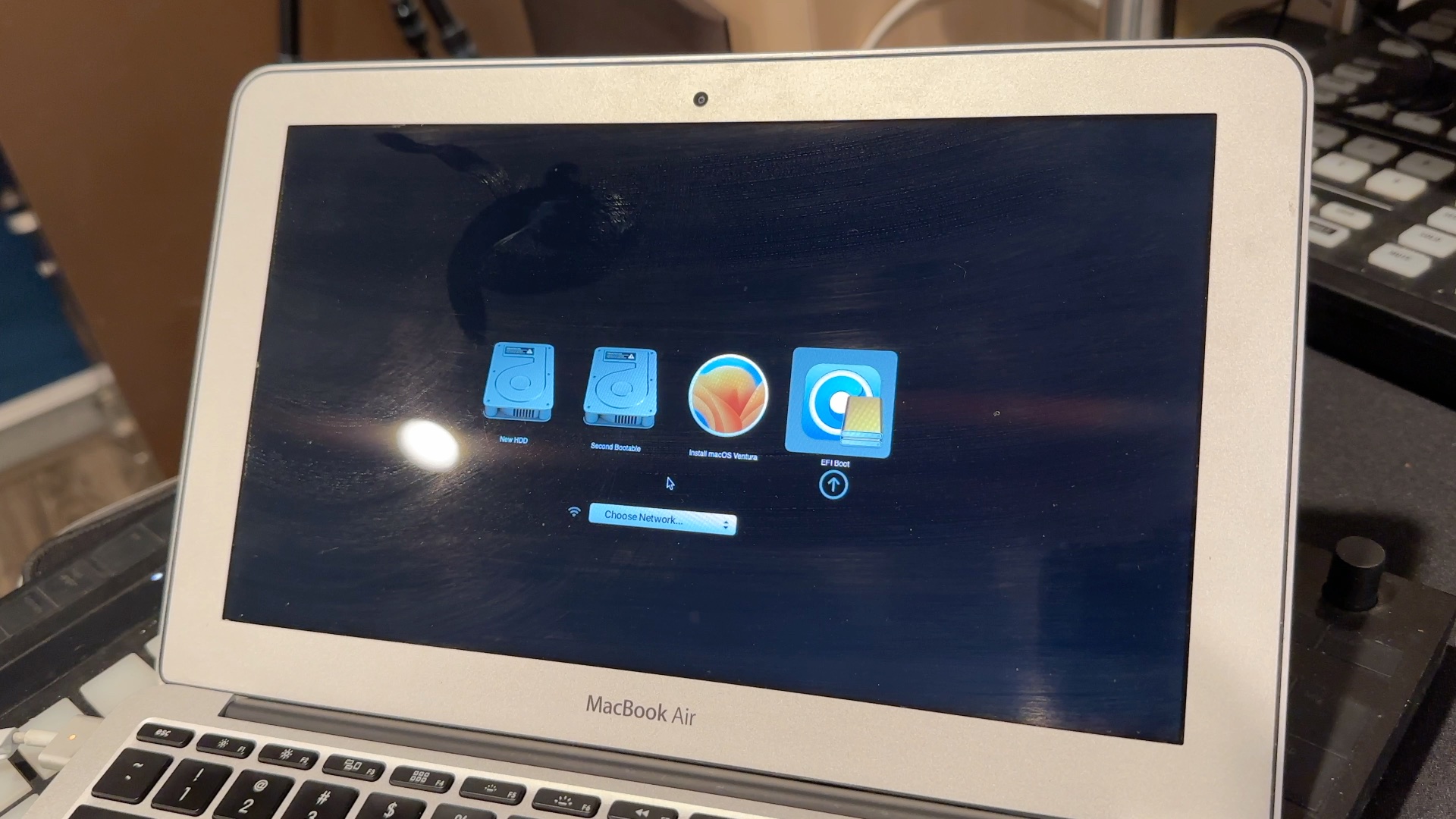

You can reboot now or later. When you reboot, you will need to hold down the option key on your computer and then select the OpenCore EFI partition. This will be the icon with the OpenCore logo behind it.

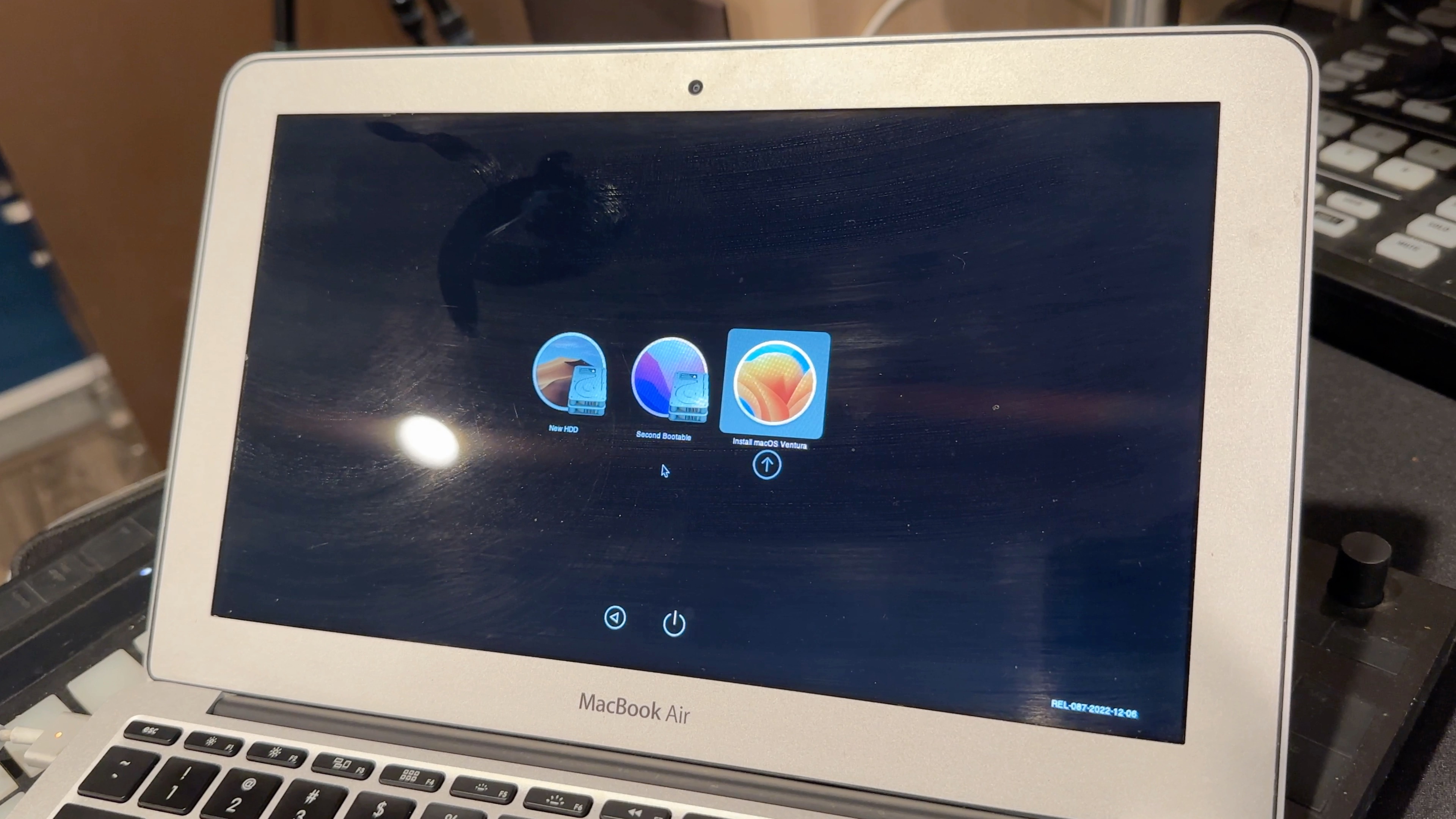

Step 9) Select OpenCore from the bootpicker and then the installer

Once you've selected the OpenCore EFI you'll be taken to another boot picker. Select the installer. At this point, you have booted into OpenCore, and thus all the configured hacks are loaded in that will enable your Mac to be able to install macOS.

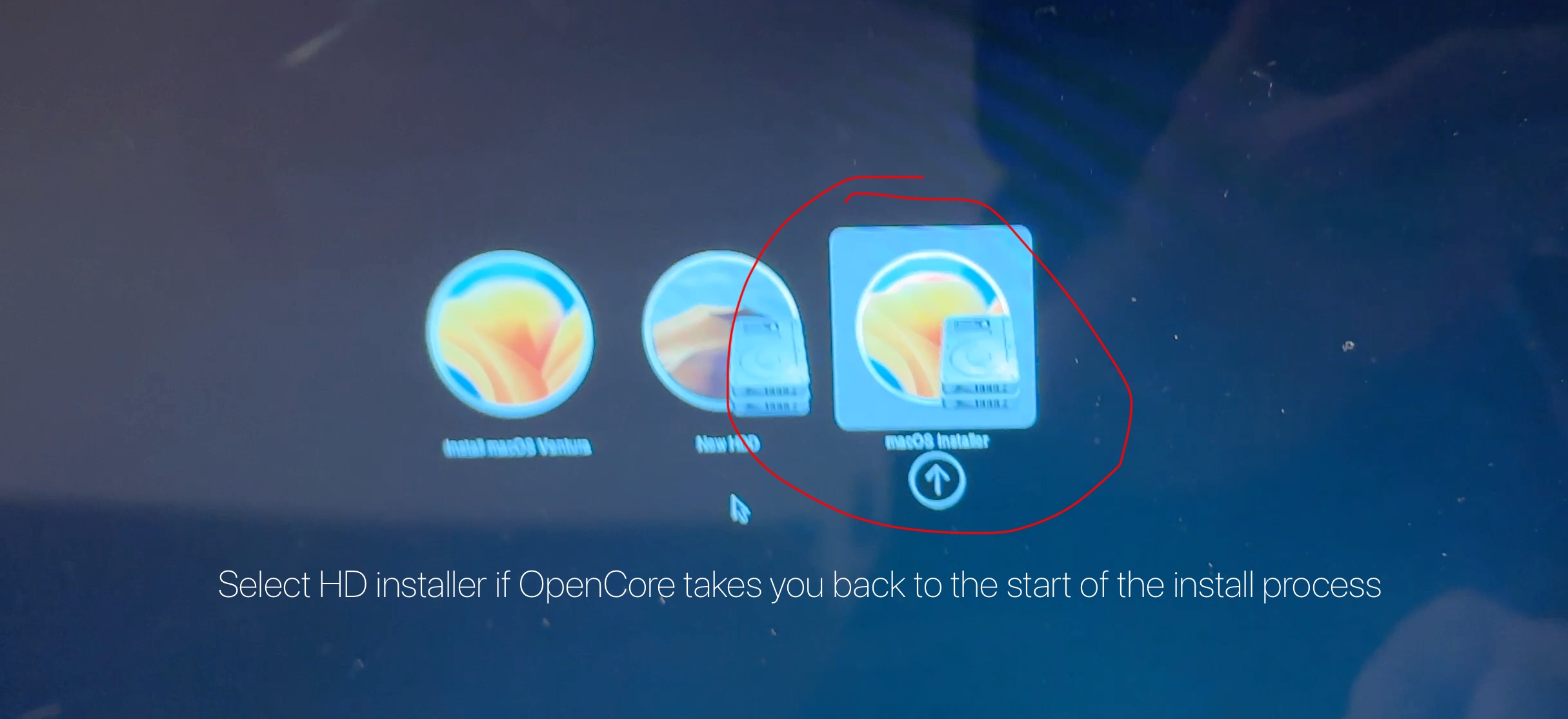

Step through the installer as normal. With modern macOS installs, the installer will need to reboot several times. It may reboot and bring you back to the beginning of the install process. If this happens, you'll need to restart your computer and from the OpenCore boot picker, select the incomplete install and not the USB installer. This will resume your install.

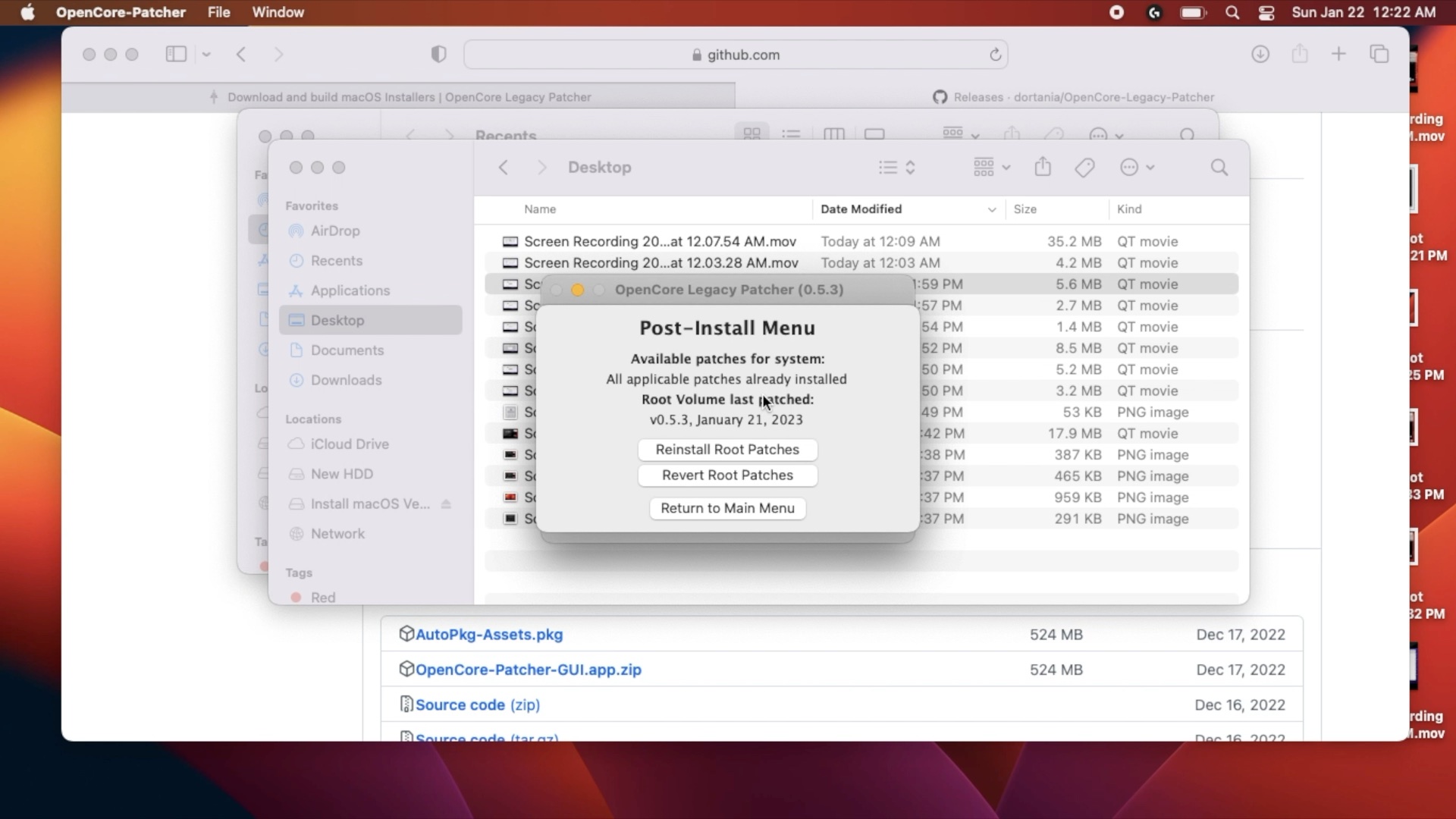

Step 10) Post Install

Finish setting up your Mac. Run OpenCore Legacy Patcher one last time and confirm that post-install modifications have been installed.

More OpenCore Content!

I installed OpenCore onto my Mac Pro 3,1 using OpenCore Legacy Patcher and made a video about my adventure.

-

macOS Activation Lock Vulnerability discovered

iCloud with Find My Mac offers the ability to lock your Mac if it's lost or stolen, placing it into a state known as "Activation Lock". Activation Lock should prevent anyone from accessing the device without first entering a PIN or password, as it presents a lock screen before the computer boots. This isn't nearly as powerful as the iOS version due to the lack of a persistent internet connection: a stolen Mac will likely not be online. However, if the thief logs in to the Mac and joins a WiFi access point, or the Mac connects to a known one, the Mac would then forcibly reboot and lock itself, leaving it in a protected state. This vulnerability was discovered on Reddit, although it failed to gain much traction, as the initial report required a bit of sleuthing to fully understand and the discoverer was a bit frustrated after Apple dismissed the bug report they submitted.

It will even lock a user out of booting into recovery mode (this differs slightly on Apple silicon). However, you can bypass this on certain Macs; thus far, only Intel Macs seem to be affected. The original discoverer reported using a MacBook Pro 2019 (specs unknown), and I confirmed it on a MacBook 2017.

It requires the following steps:

- Lock a Mac using iCloud's Find My (the Mac must have Find My enabled and must connect to the internet after the lock has been initiated).

- Wait for the Mac to reboot to the Activation Lock screen. Then reboot the Mac into recovery mode by holding Command + R.

- Reboot the Mac again. It will now get back to the login screen, defeating the Activation Lock.

I personally confirmed this on a MacBook 2017 (12 inch) multiple times, but was unable to replicate it on a MacBook Pro M1 Max. The affected Mac specs are as follows:

- MacBook 2017 (MacBook10,1), Core i5, 8 GB RAM

- OS version: macOS 13.0 Ventura

- Firmware Version: 499.40.2.0.0

- OS Loader Version: 564.40.4~27

- SMC Version: 2.42f13

-

Was Snow Leopard 10.6 the greatest OS X release? Demystifying a legend

This is the adapted script from my video "Was Snow Leopard 10.6 greatest macOS release ever? An OS X essay", as I know many people (often myself included) prefer written versions. This version departs from the original script to better accommodate the written word.

Intro

If you were to ask a group of long-time Mac users what the best operating system Apple has ever released is, the answer would likely be nearly unanimous.

Mac OS X 10.6 Snow Leopard. It's one of the most loved products Apple has ever released, renowned even a solid dozen-plus years later as the king of Mac OS. Many long-time users consider Snow Leopard the high watermark of Mac OS.

9to5Mac wrote an article titled "The Myth, The Legend: How Snow Leopard became synonymous with reliability." The article is a nice attempt to contextualize Snow Leopard in the greater narrative of OS X, but it falls a bit flat. It makes some decent points, and I don't want to take away from those, but I think I have a better explanation for why Snow Leopard was so dearly loved. Plus, I have my own opinions about which release of OS X/macOS is the greatest.

A Brief OS X History

Mac OS X started its life with a lot of promise and not a lot to show for it. (I recommend both Ars Technica's OS X 10.0 and OS X 10.1 reviews.) Yes, it was a brand new operating system built on Darwin, whose BSD layer drew heavily from FreeBSD, but 10.0 and 10.1 were buggy, slow, and lacking critical software that most users depended on, and the hardware running it didn't do it many favors. To illustrate the incompleteness of early OS X, the Public Beta installer didn't include a disk utility to format a hard drive so you could install the OS; you had to do this in Mac OS 9 first.

Native application ports were few and far between in the early days of OS X. Many of the early applications relied on the Carbon framework, which served as an intermediate way to port Mac OS 9 applications to OS X with less friction than a fully native port to Cocoa. That's assuming they ran in OS X at all.

It wasn't until 10.2 Jaguar that OS X started to come into its own. Jaguar saw the introduction of journaling for HFS+, MPEG-4, Address Book, Bonjour for networking, Quartz Extreme, iCal, and iChat. Quick aside: I can't stress enough what a big deal it was when Apple released Quartz Extreme in 10.2, which used the GPU to accelerate the UI. This was huge, as the Aqua user interface burned through CPU cycles when live-resizing and dragging windows.

By 2003, 10.3 Panther was the first version of OS X I used almost exclusively; previously, I would dual boot between Mac OS 9 and OS X. My guess is this was the same experience for many other long-time Mac users. It had less to do with new features like Font Book, FileVault, Exposé, a much faster Preview, and better stability, and more to do with the fact that most major applications had a Cocoa or Carbon version that could run in OS X without the Classic environment, and that Apple had started shifting to OS X-only computers like the G5.

Then one of the biggest rumors came to fruition. In 2006, 10.4.4 Tiger became the first release of x86 OS X, starting with the iMac Core Duo. This came on the heels of Tiger's original release, which introduced some core technologies that are still with us, such as Spotlight (which replaced Sherlock), 64-bit support, Core Image, and Core Audio, plus important improvements like QuickTime 7. The Intel transition also introduced a strategy we'd recognize today: Rosetta, a compatibility layer that translates PowerPC binaries to x86.

Mac OS X 10.5 Leopard really brought OS X into the modern era, as it had Core Animation, Boot Camp, Time Machine, Spaces, full UNIX compliance, app sandboxing, and app code signing. The UI was maturing, and the security features were rolling in.

Snow Leopard

However, despite the significant changes in the previous releases, 10.6 is the one that hangs above the rest. 10.6 wasn't devoid of features. Released in 2009, it introduced multitouch support for trackpads, better Boot Camp support, improvements to Time Machine, Grand Central Dispatch for better multicore performance, OpenCL, and the much-needed rewrite of many core applications to 64-bit. And while these features are great, they aren't the reasons why people love this damn OS so much.

That is because there's one absolutely huge asterisk I have not mentioned yet, and almost no one seems to remember.

Snow Leopard dropped PowerPC support. PowerPC had been Apple's CPU of choice since 1994. So remember the first Intel iMac, the dual-core Core Duo? It was released on January 10, 2006. I don't think I'm making this point clear enough, so let me explain. At Snow Leopard's release in August 2009, the absolute oldest computer it could ever be installed on was less than four years old. The previous iMac G5 had a single-core CPU, whereas the iMac Core Duo was dual-core. Plus, the performance gap between PowerPC and x86 was wide. Secondly, Apple only shipped the Core Duo Macs for mere months. The new MacBook lineup shipped with a Core Duo in May, and by November, it already had the new, much faster 64-bit Core 2 Duo. Oh yeah, Intel was making meaningful gains during this era. Snow Leopard never had to support shitty hardware for its day.

In 2009 alone, Apple refreshed its MacBook, MacBook Pro, MacBook Air, Mac mini, iMac, Mac Pro, and Xserve. Yep, that was the entire lineup. Between 2006 and 2010, this was basically normal: Apple would refresh nearly its entire lineup each year, sans a few outliers like the MacBook Air (introduced in 2008), the Mac mini, and the Xserve, which saw less frequent updates. So chances are, if you used Snow Leopard, it was on very new hardware.

It's easy to see why many Mac users also consider this the high watermark for Apple and the Macintosh platform. It's hard to overstate this, but the perception of Apple during this era was completely different.

While Apple was doing very well, it wasn't the world's richest company yet. In 2009, the iPhone was only two years old, and the iPad was still a full year out. This is why I argue that a lot of the clout Snow Leopard still commands has to do with the hardware it was running on.

A top-of-the-line Quad-Core (dual CPU/dual core) G5 could barely play back 1080p without dropping frames, and then two years later, a 2008 Mac Pro could play back multiple streams of 1080p video without dropping a single damn frame. The biggest single hardware upgrade I've ever experienced in my time using a computer was going from a 2004 Dual CPU G5 to a 2008 Mac Pro. A lowly MacBook could play HD video. The differences in everyday performance were stark. The x86 Macs even booted faster.

Here's something for the youngins: it's easy to forget that OS updates used to cost money, to the sweet tune of $129. Snow Leopard dropped the price to $29. I have to give 9to5Mac credit, as this point is a good one. Many users probably went straight from Tiger to Snow Leopard, skipping Leopard. Windows Vista had been driving users away in droves. Many first-time Mac buyers skipped Windows 7, which was released to manufacturing shortly before Snow Leopard shipped (the public would not get it until October), and went straight to Apple. First impressions matter, and Snow Leopard was a fantastic one to have, especially coming from the frustrations of the often maligned Vista.

Sadly, Snow Leopard was the last version of OS X to support Rosetta, which is why some users stuck with Snow Leopard 10.6, resisting the tides of change. Rosetta was an optional download and allowed users to run much of the earlier PowerPC OS X software at surprisingly usable speeds. In what would become a recurring theme, Apple, unlike Microsoft, was completely willing to abandon the old without extending an olive branch in the form of optional legacy support. Notably, it's been speculated that Apple did not renew a software license to continue using Rosetta 1.

Lastly, as previously mentioned, Snow Leopard represents a time when the Mac was still at the center of the Apple universe. The iPod lineup was still going strong but tied to iTunes. The iPhone 3GS was blowing the minds of consumers, journalists, and pretty much everyone who touched one after the introduction of the App Store, but it was still a tethered experience that required a computer to set up. The iPhone wouldn't become untethered until iOS 5 in 2011. At the heart of it all were OS X and iTunes.

Also, for many cultish Mac acolytes, their savior, Steve Jobs, was still alive. Even today, it's still a trope to hear Mac users lament, "It wouldn't have happened if Steve were still alive." This was a time of reverence, as our modern cynicism hadn't yet manifested. Social media in the late 2000s was nascent, the darker side of smartphone addiction hadn't appeared, and Amazon and other large online retailers were only just starting to gut Main Street America.

I'm certain that Steve's image would have slowly ebbed away, as the pervasive influence of big tech has turned the public image of once-prodigies like Mark Zuckerberg, Jeff Bezos, and Elon Musk into that of megalomaniacs: hopelessly out-of-touch and ruthless businessmen.

The end of the cats

Apple's 10.7 and 10.8 weren't total duds, but they had some early woes. 10.7 had a few bizarre installer issues like the CUI CUI CUI error, and 10.8 had permission issues that prevented Time Machine restores (among other Time Machine problems), much to the chagrin of troubled users. 10.7 Lion brought a lot of mainstays like Auto Save, autocorrect, emoji, push notifications (finally replacing Growl), FaceTime, AirDrop, iCloud, and more multitouch gestures. However, two of these were negatives. The way Apple first introduced Auto Save was confusing and felt dumbed down, and 10.7 also had to inherit the iCloud debacle, which added to its buggy perception. 10.8 Mountain Lion lacked many of the big features that OS X users were used to seeing in previous releases. Part of this was because OS X was maturing, but it also felt like Apple was devoting all its resources to the iPhone and iOS. It didn't help that many of 10.8's big features were lifted from iOS: things like Notification Center, Game Center, Messages, AirPlay Mirroring, and Gatekeeper felt like more iOSification of OS X.

One of the great ironies here is that macOS was actually getting faster. The legendary speed of Snow Leopard doesn't translate much into real-world performance. CNet showed that Lion outperformed Snow Leopard.

Mavericks

But now it's time we finally talk about the true king of OS X releases, the one and only, severely underrated Mavericks. It was the culmination of both Lions, and with few headline features, it focused squarely on performance. It felt like a hardware upgrade had shipped in a software update, and it was the first Mac OS X release that was free! This meant anyone could update without paying a damn cent.

It didn't feature any radical UI changes beyond the much-needed tabbed Finder and the removal of some of the skeuomorphism found in Contacts, Notes, and Calendar, but it did bring the UI into a glorious new world of resolution independence, not just for Retina MacBook owners but for everybody, including 4K display owners. Big things happened under the hood. Timer coalescing was introduced, a massive win for power saving in the MacBook lines, but the much bigger win was virtual memory compression. Instead of immediately paging inactive memory out to disk, Mavericks compresses it in RAM using lossless compression. In short, this means you can make much better use of the RAM you have, and daily use, especially multitasking, requires less RAM. It also reduces swapping, as less data has to be written to disk, which means less time spent on transfers and less power usage. Compression runs in parallel across cores, so multicore machines benefit most, and that, in turn, helps battery life. Virtual memory compression is the gift that keeps on giving. It pairs nicely with App Nap, which was also introduced in Mavericks! App Nap puts apps you're not using to sleep so they aren't consuming system resources, which improves performance and, you might have guessed, saves battery life!
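To make the idea of compressing memory concrete, here is a toy sketch in Python. It is an illustration only: macOS uses its own fast in-kernel compressor (reportedly WKdm in Mavericks), not zlib, and the 4 KiB page size, the `store_page`/`load_page` helpers, and the sample pages are assumptions. What it shows is that typical memory pages (zero-filled or repetitive data) shrink dramatically and decompress back exactly, while incompressible pages are better left as they are.

```python
import os
import zlib

PAGE_SIZE = 4096  # assumed 4 KiB page for illustration

def store_page(page: bytes):
    """Keep a page compressed only if that actually saves space."""
    blob = zlib.compress(page, 1)  # level 1 favors speed; memory compression must be fast
    if len(blob) < len(page):
        return ("compressed", blob)
    return ("raw", page)

def load_page(entry) -> bytes:
    """Return the original page bytes from a stored entry."""
    kind, data = entry
    return zlib.decompress(data) if kind == "compressed" else data

pages = {
    "zero-filled": bytes(PAGE_SIZE),
    "repetitive": (b"Snow Leopard vs. Mavericks " * 200)[:PAGE_SIZE],
    "random": os.urandom(PAGE_SIZE),
}

stored = {label: store_page(page) for label, page in pages.items()}
for label, entry in stored.items():
    assert load_page(entry) == pages[label]  # lossless round trip
    print(f"{label:12} {entry[0]:10} {len(entry[1]):5} bytes (was {PAGE_SIZE})")
```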

Mavericks also introduced smaller features like centralized task scheduling, which made more mindful use of the battery, for example, by not running Spotlight indexing when not plugged into a wall. Ars Technica measured an incredible 3 hours of extra battery life on a MacBook Air running Mavericks compared with OS X 10.8. I highly recommend reading the entire review.
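The centralized scheduling idea can be modeled in a few lines. This is a hypothetical sketch, not Apple's API or implementation; the `BackgroundTask` and `PowerAwareScheduler` classes and the task names are invented for illustration. Background work is tagged as deferrable or not, deferrable work waits while the Mac is on battery, and everything postponed runs once it's plugged in.

```python
from dataclasses import dataclass, field

@dataclass
class BackgroundTask:
    name: str
    deferrable: bool  # True for work that can wait, like re-indexing

@dataclass
class PowerAwareScheduler:
    on_ac_power: bool
    ran: list = field(default_factory=list)
    deferred: list = field(default_factory=list)

    def submit(self, task: BackgroundTask) -> None:
        # Deferrable work waits while on battery; everything else runs now.
        if task.deferrable and not self.on_ac_power:
            self.deferred.append(task)
        else:
            self.ran.append(task.name)

    def set_power(self, on_ac_power: bool) -> None:
        self.on_ac_power = on_ac_power
        if on_ac_power:
            # Plugged in: run everything that was postponed, in submission order.
            self.ran.extend(task.name for task in self.deferred)
            self.deferred.clear()

scheduler = PowerAwareScheduler(on_ac_power=False)
scheduler.submit(BackgroundTask("spotlight-index", deferrable=True))
scheduler.submit(BackgroundTask("calendar-alarm", deferrable=False))
print(scheduler.ran)  # ['calendar-alarm']
scheduler.set_power(True)
print(scheduler.ran)  # ['calendar-alarm', 'spotlight-index']
```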

Mavericks also gets bonus points for being stable from the get-go; after two rocky releases, it deserves kudos for that. Oh, and for my Mac Pro homies? It was also the first OS X release to support more than 96 GB of RAM. I feel so strongly that Mavericks is the best macOS release because, as a user, all these changes just happened. You didn't have to know a damn thing about them. It was a huge performance boost, was more stable, got you more battery life, and unlocked the world of resolution independence. Everything just gelled with Mavericks. Let's not forget this upgrade was free. Like Snow Leopard, a lot of its perception and legacy has to do with the OS that followed it.

Mavericks was followed by what I'd consider the worst release of x86 macOS ever, 10.10 Yosemite. It was an absolutely terrible overcorrection on the UI and had one of the most brutal bugs I've seen, resulting in the worst networking performance I've ever experienced on a modern OS. At least the networking eventually got fixed, unlike the Jony Ive flat UI.

The next California releases

After Yosemite came what I'd consider a successful string of OS releases: Sierra, High Sierra, and Mojave were all improvements, one after the other. They also cleared some pretty big technical hurdles, like switching from HFS+ to APFS. We finally saw a replacement for OpenGL in the form of Metal, the UI was fixed, and the OS was fairly stable.

Apple also tightened hardware requirements, axing support for many older models. This popularized patching scripts that enabled support for older hardware: Pike's script and dosdude1's patchers allowed older Macs to continue aging gracefully into this era despite Apple's wishes.

Of this string of releases, 10.13 High Sierra is often one of the more loved, like Snow Leopard. Because Mojave dropped support for a significant number of computers by not offering Metal drivers for old GPUs, High Sierra became the end of the road for many users until they upgraded. I encountered this as an end user, as I had invested in an Nvidia GeForce 1060 without realizing that, roughly two years later, Apple would petulantly block the release of all future Nvidia macOS drivers. A year after that, when it was apparent Apple would not reverse course, I purchased an AMD Vega 56. High Sierra was not a bad release to be stuck on, as I found it rock solid and snappy on an aging Mac Pro.

What didn't happen during this string was any revolutionary change. Meanwhile, Windows, after some missteps like Windows 8 and 8.1, radically improved with the release of Windows 10 and gained a lot of ground on macOS. Its UI might still have been mind-boggling (why the fuck are there still Control Panels?), but on the same hardware, Windows was the clear performance winner over macOS in cross-platform benchmarks. It also boasts something Apple is terrible at: legacy support. Windows 10 and 11 can run apps written for Windows 98 via compatibility layers.

A 2006 Mac Pro is forever locked out of modern macOS releases, but fear not: it will install Windows 10 from an unmodified Windows 10 DVD, and once installed, it runs surprisingly well. Windows 11 can even be hacked to run on a 2006 Mac Pro. And during this dry spell for the Mac, Windows even gained proper Bash/Zsh support via the Windows Subsystem for Linux, which runs Ubuntu as a virtual layer. It is convoluted, but at least common tools and environments like Git and Node can now run near natively.

Windows also beat macOS to a few features it shouldn't have, such as 10-bit color and HDR, even though Apple had clearly aced resolution independence. Apple has dragged its feet in some really strange places. It wasn't until very recently that Core Audio finally supported surround sound in a sane manner (if you can find any software to use with it), whereas Windows has had this for 15 years. It wasn't until 10.15 Catalina that Apple added the DRM support streaming services required for higher-resolution video, despite iOS devices having it for years. Meanwhile, Catalina was a step back, with its security overcorrections, its removal of 32-bit support (which turned out to be a controlled burn clearing the way for ARM), and not nearly enough meaningful updates. In a better world, Apple would have let x86 die with the ability to run 32-bit code. We know where Microsoft would have landed on this because it already has: it finally stopped developing a 32-bit version of Windows but has made no moves to drop 32-bit binary support. In fact, Windows 11 on ARM supports Rosetta-like translation for both 32-bit and 64-bit x86 applications.

The walls of the gated community

We cannot judge macOS releases in a vacuum, so we must call out the narrowing gap between Windows and macOS. Windows also has a distinct advantage on older hardware, thanks to drivers and longer-term API support. Apple doesn't make it easy on developers because it constantly changes requirements. Or worse, Apple will actively block the release of drivers, as in the case of Nvidia.

Being a Mac user often feels like living in a gated community with an overbearing homeowners association.

Before I end on a downer for macOS: Big Sur and Monterey run seamlessly on both x86 and ARM, which still can't be said about Windows 11. Microsoft is working on its answer, and it's only a matter of time before Windows on ARM SoCs catches up.

Whatever your concerns may be about the hardware, the software transition to Apple Silicon has been nothing short of amazing. Big Sur on an M1 did not feel like a first gen product, and Apple always has its eye on consumers. I hope Apple takes its professional-class users more seriously in the future. Apple Silicon has been exciting for its raw performance but offers nothing in the way of user serviceability, and an upgradable professional computer shouldn't cost $6000.

As a professional, I want the best from both Apple and Microsoft. I feel I shouldn't have to make the compromise between long-term usability and support versus a superior user experience, and why do I feel this way? We had it in the past.

Ultimately, I think when most people say Snow Leopard is the best operating system Apple has put out, or when I say it's 10.9 Mavericks, we're saying that we want an Apple that is more focused on the Mac platform and that meets our needs, not its own.

The Apple Silicon era thus far has been impressive, albeit imperfect. Without even the ability to swap out the parts most likely to die, like the RAM or, more importantly, SSDs, Apple Silicon is a cynical product, meant to be disposable technology, destined for eWaste bins when Apple decides they no longer are willing to support said computers.

Apple's long-term support has been spotty, especially during its architecture transitions. The difference this time is that we won't be able to service the machines long after Apple stops supporting them. If planned obsolescence is our gated community's walls, they keep getting closer to our house.

Bonus content

This article is not anti-Snow Leopard; in fact, I'm quite the fan. A while back, I took the time to upscale and hand-correct all of the Mac OS X Snow Leopard 10.6 Nature and Abstract backgrounds to 5K, free to download for use on your Mac or iOS device. Enjoy!

-

Radeon RX 6600 XT and 6800 for the classic Mac Pros (4,1/5,1)?

Update: Syncretic has done it again! You can download the patched ROM from MacRumors for AMD RX 6600, RX 6800, and RX 6900 XT cards.

Original article below.

It has been nearly a year since I wrote about the end of the classic Mac Pro after selling my classic Mac Pros for a 2019, and yet here we are: OpenCore 0.7.9 can run macOS 12.3.1 with very good results.

The big news comes from MacVidCards.eu and AMD's 6000-series GPUs. MacVidCards.eu is an affiliate of MacVidCards.com, but I'm not sure of the business relationship. MacVidCards.com is a service that flashes GPUs with custom firmware that is Mac EFI compatible.

In my excitement and haste to post a video, I incorrectly stated that it's an RX 6800 XT rather than an RX 6800.

The Mac Pro's EFI implementation predates UEFI, the Unified Extensible Firmware Interface, which replaced BIOS on PCs. Apple's implementation uses the Universal Graphics Adapter (UGA) protocol, whereas the more modern UEFI replaced UGA with the Graphics Output Protocol (GOP). Thus, a GPU with a standard UEFI (GOP) ROM will not output video on a classic Mac Pro until macOS loads its drivers. This meant that for years, Mac users who bought any sort of non-OEM GPU had no boot screen until OpenCore came along. OpenCore is a boot loader, meaning it launches before the operating system and can perform functions before the OS is loaded. It can inject the low-level driver support, thus giving classic Mac Pro users a boot screen, among many other features.

MacVidCards.com offered an alternative for aftermarket GPU upgrades: it would flash GPUs with its custom hacked ROMs for a fee. MacVidCards.eu had some business arrangement with MacVidCards.com, as MacVidCards.com didn't ship to Europe. MacVidCards.eu is now selling flashed 6600 XTs and 6800 XTs for classic Mac Pros, with screenshots to back up the claim. I'm inclined to believe these are real, and here's why.

Syncretic, of SurPlus fame, had a look at the ROMs found on AMD's 6000-series GPUs and postulated that the problem was bad code in the ROM. During initialization, the ROM checked for UEFI HII (Human Interface Infrastructure) protocols but had no error handling. Apple's EFI implementation does not support UEFI HII, so the ROM on the card would look for these protocols and fail to get a value back. When the GPU hit that unexpected null state, it would hang, interrupting the boot process.

Syncretic theorized that patching this error in the GPU's ROM would allow the boot sequence to continue, so the GPU could be used.

My guess is that MacVidCards.eu figured out how to do this and is now selling these GPUs. MacVidCards.com, interestingly, is not. I have to stress that I haven't had any firm confirmation that these are real, but I'd wager they are.

First, it's coming from a reliable source. The MacVidCards group(s) have shipped working EFI-hacked GPUs for years. Even if MacVidCards.com in the US has a negative reputation for customer service, plenty of people can attest that its products work.

Second, thanks to community research, we know (at least part of) the scope of the problem with these GPUs. It's not an insurmountable fix.

Third and finally, the benchmarks pass the "sniff test." Metal scores for an RX 6800 in a classic Mac Pro are about 6% lower than for the same GPU in a 2019 Mac Pro. This checks out, as running a PCIe 3.0 GPU on PCIe 2.0 generally incurs only about a 5% hit; GPUs aren't as bandwidth-intensive as most people assume. Side note: this will likely change with technologies like DirectStorage in Windows, where the GPU can bypass the CPU when accessing NVMe storage, but for now, there's not a huge advantage to faster PCIe buses for GPUs.
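To put that roughly 6% gap in perspective, here is the raw link-bandwidth arithmetic for an x16 slot, using the published PCIe 2.0 (5 GT/s, 8b/10b encoding) and PCIe 3.0 (8 GT/s, 128b/130b encoding) signalling rates. These are per-direction figures and only account for line encoding, not other protocol overhead.

$$
\begin{aligned}
\text{PCIe 2.0 x16} &= 16 \times 5\,\text{GT/s} \times \tfrac{8}{10} \div 8\,\tfrac{\text{bit}}{\text{byte}} = 8\,\text{GB/s} \\
\text{PCIe 3.0 x16} &= 16 \times 8\,\text{GT/s} \times \tfrac{128}{130} \div 8\,\tfrac{\text{bit}}{\text{byte}} \approx 15.75\,\text{GB/s}
\end{aligned}
$$

So the classic Mac Pro's slot offers roughly half the link bandwidth, yet the reported Metal gap is only about 6%, which is consistent with the point that GPU workloads rarely saturate the bus today.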

While this is currently only available in Europe, this news should excite all Mac Pro owners, as it means there are a few more drops left in the tank for the classic Mac Pros. Perhaps we will see a community solution for the ROM now that we know it's possible.

-

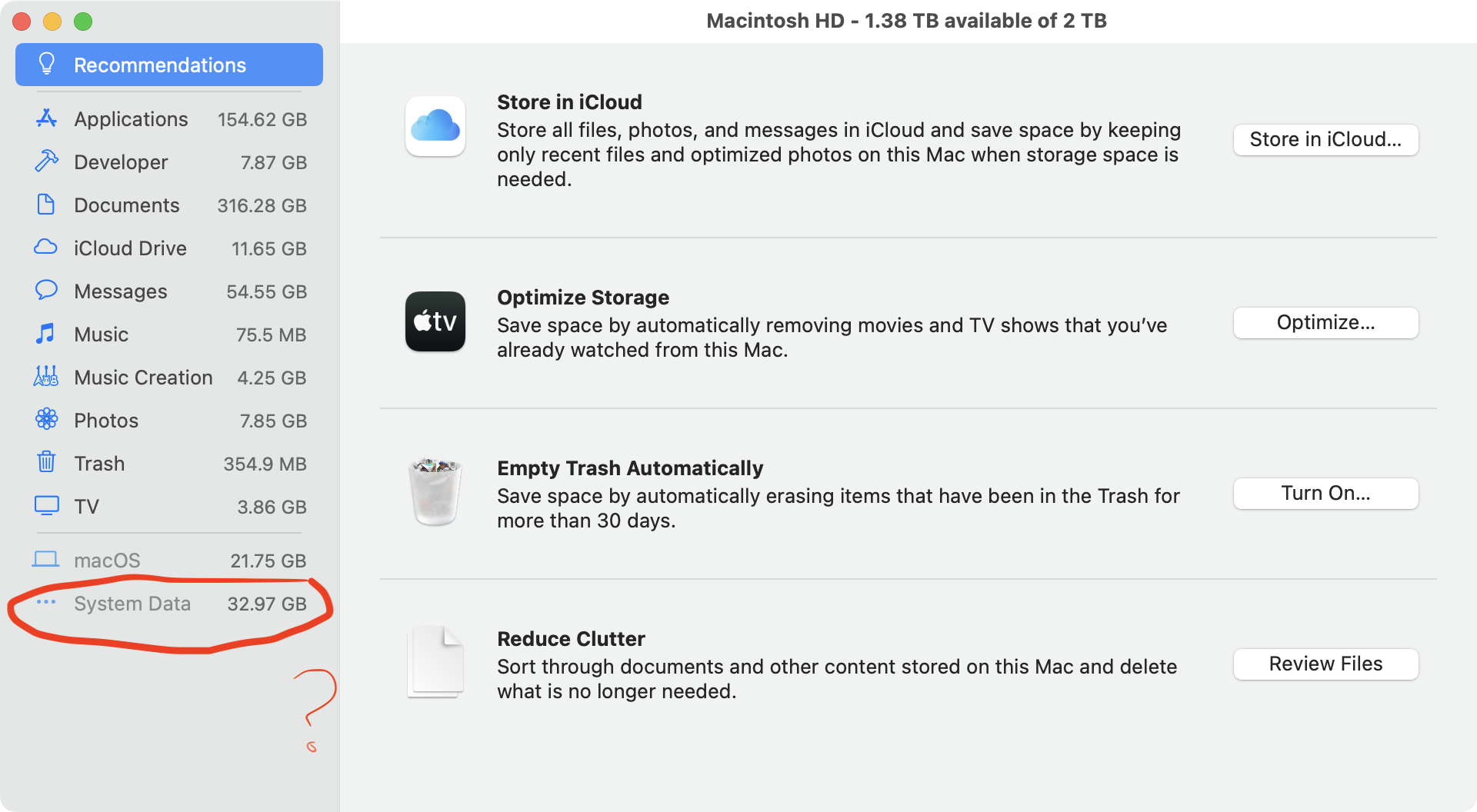

Reclaiming storage/space from 'System Data' in macOS: A tutorial on understanding the System Data usage.

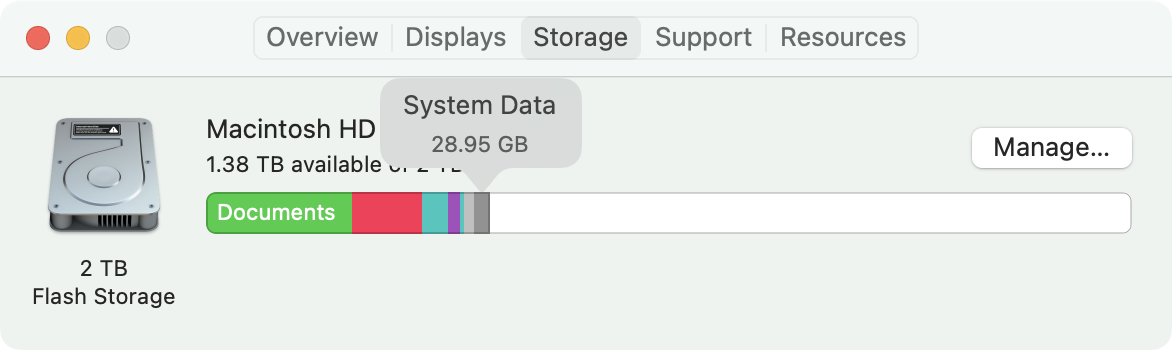

macOS is both pretty great and pretty bad at communicating what is taking up storage on one's Mac, and how. Most users are probably familiar with About This Mac -> Storage; clicking on Manage gives a more detailed view. The one point of contention is "System Data," as it's ominous and nebulous.

Below is a video that walks through strategies for reclaiming space from System Data; it's recommended as a companion to this blog post.

You can't just delete System Data... or can you?

I frequently see Reddit posts like "Can someone please explain how to get rid of the "sYsTeM dAtA" this?" or the hyper-verbose "Why do I have 130 gigs of system data 💀 (and how do I get rid of it cause a normal mac barely has like a 20 gigs or so of system memory) (I checked the usual culprit i.e. editing cache data but thats not it this time and I can't figure it out)".

This isn't because these individuals are incapable; rather, Apple does not clearly communicate what is happening, nor does it give you any meaningful course of action. One user might have "System Data" that is only 10 GB and another might have 250 GB. Why this difference is so large, or why it exists at all, is not explained.

What is System Data

System Data is the tally of the contents of the following:

- /Library

- /System

- ~/Library

- /usr

- Invisible files in the ~/ directory

All of these can be managed by the user, with /System being the outlier. This is confusing, as there are at least two Libraries on your computer, and more if you have multiple users on a single computer. Hint: The tilde (~) indicates the home directory of the user; this is a *nix convention that macOS carried over.

- /System - This is where macOS itself resides. Under modern macOS, it lives on a separate, read-only volume that the user can't modify. You need it to run macOS, and Apple protects its users from tampering with /System.

- /Library - This is the global Library accessible to all users. Things like fonts, audio plugins, support libraries for applications (such as the Adobe CC suite), and assets for Final Cut Pro end up in this folder. Audio plugins end up in /Library/Audio, fonts go into /Library/Fonts, and the bulk of application libraries go into /Library/Application Support.

- ~/Library - This is hidden by default (more on this in a minute), but it uses a structure very similar to /Library, with a large number of files landing in ~/Library/Application Support. Things like Apple Messages, Apple Photos libraries, Xcode Simulators, CrossOver bottles (games), Docker containers, and Steam games end up within ~/Library/Application Support or elsewhere in ~/Library/.

- /usr - This is where CLI utilities installed by Homebrew and other applications end up.
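For a rough terminal-side tally of these locations, a minimal POSIX shell sketch like the one below can help. It is read-only, and tally_paths is a purely illustrative name, not a macOS command. du's numbers won't exactly match the Storage pane, since APFS snapshots, clones, and purgeable space are accounted for differently.

```sh
#!/bin/sh
# Read-only tally of folders that feed "System Data".
# Assumption: du reports allocated size in 1024-byte units (-k); the
# Storage pane counts APFS snapshots, clones, and purgeable space differently.

# tally_paths <path>... : print "<KB><TAB><path>" for each path, largest first.
tally_paths() {
  for p in "$@"; do
    # Skip locations that don't exist on this machine.
    [ -e "$p" ] || continue
    # -s: one total per path; -k: kilobytes; hide permission errors.
    du -sk "$p" 2>/dev/null
  done | sort -rn
}
```

For example, tally_paths /Library "$HOME/Library" /usr prints one line per location. /System is left out of that example because it can't be managed anyway, and on modern macOS walking it with du can also descend into /System/Volumes/Data, where the rest of your files live. Folders you lack permission to read are silently skipped, so totals may come out low.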

Generally, as you install various applications and utilities over time, they also install items into the Libraries, and those items accumulate. A fresh install of macOS will have very little "System Data". An old install, after many years, can eat up a fair amount, depending on the types of applications and how frequently they are installed. Deleting an application via the Finder will not automatically remove these items, which creates orphaned files. Official uninstallers do a much better job, and applications like App Cleaner attempt to remove files associated with an application, but neither is 100% effective. In some cases, official uninstallers from reputable companies, like Adobe, purposely do not uninstall everything.

Unfortunately, this requires intervention on the user's part, which we will cover.
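One way to start that intervention is to search the usual Library subfolders for anything named after an app you've already removed. The sketch below assumes leftovers carry the app's name or bundle identifier (common, but not guaranteed), and find_leftovers is a hypothetical helper. It only lists candidates, so review each hit before deleting anything.

```sh
#!/bin/sh
# List likely leftovers of a deleted app. Nothing is deleted.
# Assumption: leftover items are named after the app or its bundle ID.

# find_leftovers <library-dir> <name-or-bundle-id>
find_leftovers() {
  lib=$1
  pattern=$2
  # Subfolders where apps commonly write per-app data.
  for sub in "Application Support" Caches Containers Preferences Logs; do
    [ -d "$lib/$sub" ] || continue
    # Direct children only, matched case-insensitively against the pattern.
    find "$lib/$sub" -mindepth 1 -maxdepth 1 -iname "*$pattern*" 2>/dev/null
  done
}
```

For example, find_leftovers "$HOME/Library" Steam, then repeat with /Library to catch system-wide leftovers.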

Displaying the Library folder in the User directory

In the OS X days, ~/Library (the Library folder found in /Users/your-user-name/) was a visible folder that you could easily poke around in. In modern macOS, it is hidden, which is both good and bad. It's good for the basic user, who probably shouldn't be manipulating it, but bad for anyone with an intermediate level of familiarity with the underpinnings of their Mac, as it obscures where storage is going behind the scenes.

There are still multiple ways to view the contents of ~/Library. The easiest route is to go to your user directory and hit Command + Shift + . (period) to display hidden folders. Another method is to choose Go > Go to Folder... in the Finder and type in ~/Library. You can also make the Library permanently visible, either by opening your user directory in the Finder, choosing View > Show View Options, and checking "Show Library Folder", or by running the following command in the Terminal:

chflags nohidden ~/Library

Calculating Folder Sizes

Still to this day, one of the advantages of macOS is the ability to calculate folder sizes from the Finder's list view. To enable it, choose "Show View Options" under View (or use the keyboard shortcut Command J) and check "Calculate all sizes." Calculating folder sizes will take time, depending on how many files are inside a folder.

Sorting folders by size makes it easy to spot where the largest folders reside.
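The same largest-first view is available from the Terminal. The largest_children function below is a hypothetical helper, not a built-in command; it sizes each item directly inside a folder with du (in kilobytes, so figures can differ slightly from the Finder's) and sorts the results.

```sh
#!/bin/sh
# Terminal counterpart to "Calculate all sizes" + sorting by size.
# Assumption: du's kilobyte figures are close enough to the Finder's.

# largest_children <dir> [count] : the <count> largest items in <dir>.
largest_children() {
  dir=$1
  count=${2:-10}
  for entry in "$dir"/*; do
    # An unmatched glob stays literal; skip it.
    [ -e "$entry" ] || continue
    du -sk "$entry" 2>/dev/null
  done | sort -rn | head -n "$count"
}
```

For example, largest_children "$HOME/Library" 15, then drill down with largest_children "$HOME/Library/Application Support".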

Managing the ~/Library

The most common place to reclaim storage is ~/Library.

Deleting items from ~/Library is tricky, as it holds important files whose removal could break applications, along with a few valuable system files. There's no hard-and-fast rule. For example, deleting a Steam game via the Finder from ~/Library/Application Support/Steam/steamapps is safe, but deleting the entire Steam directory will cause issues. The best advice is to tread lightly. Generally (but not always), items that land in ~/Library can be managed elsewhere. For example, Steam games can be removed from within Steam, and the Apple Messages cache can be controlled via the Messages app by clearing out data over a month old.

A short but incomplete list of common data hogs in ~/Library:

- ~/Library/Messages - Apple Messages can creep up in size with the number of large media files now typically shared among friends and family. Every lousy GIF sent to you by an aunt gets cached in /Messages. Use Apple Messages to get a handle on your Messages.

- ~/Library/Containers - These hold the sandboxed data of macOS apps, generally those from the Mac App Store, and sometimes they get orphaned. For example, if you install NBA 2K21 Arcade Edition from the Mac App Store, it'll install the application in your Applications folder and a 4 GB file within /Containers. If you delete the app by dragging it from your Applications folder to the Trash in the Finder, you will not delete the data within the container and will need to do this manually.

- ~/Library/Containers/Docker - Docker is a requirement in many developer toolchains, and its containers/images are downloaded into the /Containers/Docker folder; the CLI utility is the best way to manage these.

- ~/Library/Containers/UTM - UTM is a popular QEMU-based emulator for creating virtual machines running other operating systems. By default, UTM virtual machines install into /Containers/UTM. Virtual machines are often multiple GBs each, so this can be a place to reclaim a lot of space.

- ~/Library/Containers/com.apple.mail/Data/Library/Logs - Log files for Apple Mail. In Mail, select Window > Connection Doctor and uncheck Log Connection Activity. (Credit to JeremyAndrewErwin)

- ~/Library/Mobile Documents - This is the iCloud Drive folder. The easiest way to manage it is to go to Apple ID in System Preferences and select Options under iCloud Drive.

- ~/Library/Developer - This is where Xcode installs its simulator environments. These can be managed within Xcode under Preferences -> Components, and caches can be cleared from Storage "Manage" in About This Mac.

- ~/Library/Photos - This contains the Apple Photos library, and photo management can be done via the Photos app. The entire Photos library can be uploaded to iCloud (assuming you have a large enough iCloud subscription).

- ~/Library/Caches - Caches are application-specific temporary data. Depending on the application, these can be deleted with few repercussions. Generally, applications provide ways to manage their own caches. Deleting them is often a temporary gain, as using an application will cause it to create new cache files as needed. It's still worth doing every so often: as you add, remove, and upgrade applications, you may end up with orphaned caches. This is best thought of as a spring-cleaning activity rather than daily or even weekly maintenance. The same can be done with ~/Library/Logs, as some applications occasionally eat up hundreds of MBs or even GBs in log files.

- ~/Library/ScreenRecordings - These are screen captures made by QuickTime. QuickTime doesn't provide a smart way to manage these, and they are best deleted via the Finder.

- ~/Library/Application Support - This is where the bulk of applications install user-specific data.

- ~/Library/Application Support/Steam - Steam is a popular storefront for games that also provides community features, like in-game chat and user profiles, alongside its massive catalog of videogames. Steam provides ways to delete games via its user interface, but games can also be found and deleted in the /steamapps folder.

- ~/Library/Application Support/OpenEmu - OpenEmu takes the interesting approach of stashing games in the Application Support directory (as do a few emulators). Games can be managed from the UI, but they can also be deleted from Game Library/roms, and artwork from Game Library/artwork.

- ~/Library/Application Support/com.splice 2.Splice - The popular subscription-based sample library app, Splice, stores its cache within Application Support instead of ~/Library/Caches. It can be dumped.

- ~/Library/Application Support/RetroArch - RetroArch has a habit of stashing quite a bit of resources in /Application Support, but this directory should only be deleted when removing the entire emulator.

- ~/Library/Application Support/Devonthink - DEVONthink, the document organization software, can be a data hog. (Credit to JeremyAndrewErwin)

- ~/Library/Application Support/PFU - ScanSnap scratch files; these can be safely removed. (Credit to JeremyAndrewErwin)
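To check the entries above in one pass, here is a read-only sketch (report_hogs is an illustrative name) that prints the size of each listed folder that exists, largest first. It assumes the standard locations from the list; your apps may store data elsewhere, and Terminal may need Full Disk Access for protected folders such as Messages, otherwise those totals can come out low.

```sh
#!/bin/sh
# Size up the common data hogs from the list, largest first. Read-only.
# Assumption: relative paths mirror the list; apps may use other locations.

# report_hogs <library-dir>
report_hogs() {
  lib=$1
  # One relative path per line so names with spaces survive intact.
  printf '%s\n' \
    "Messages" \
    "Containers" \
    "Mobile Documents" \
    "Developer" \
    "Photos" \
    "Caches" \
    "Logs" \
    "ScreenRecordings" \
    "Application Support/Steam/steamapps" \
    "Application Support/OpenEmu" \
    "Application Support/RetroArch" |
  while IFS= read -r rel; do
    # Only report folders that actually exist on this Mac.
    [ -e "$lib/$rel" ] || continue
    du -sk "$lib/$rel" 2>/dev/null
  done | sort -rn
}
```

Run it as report_hogs "$HOME/Library". Note that Containers and Application Support subfolders overlap with their parents, so the lines are not meant to be summed.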

In summary, viewing the contents of your

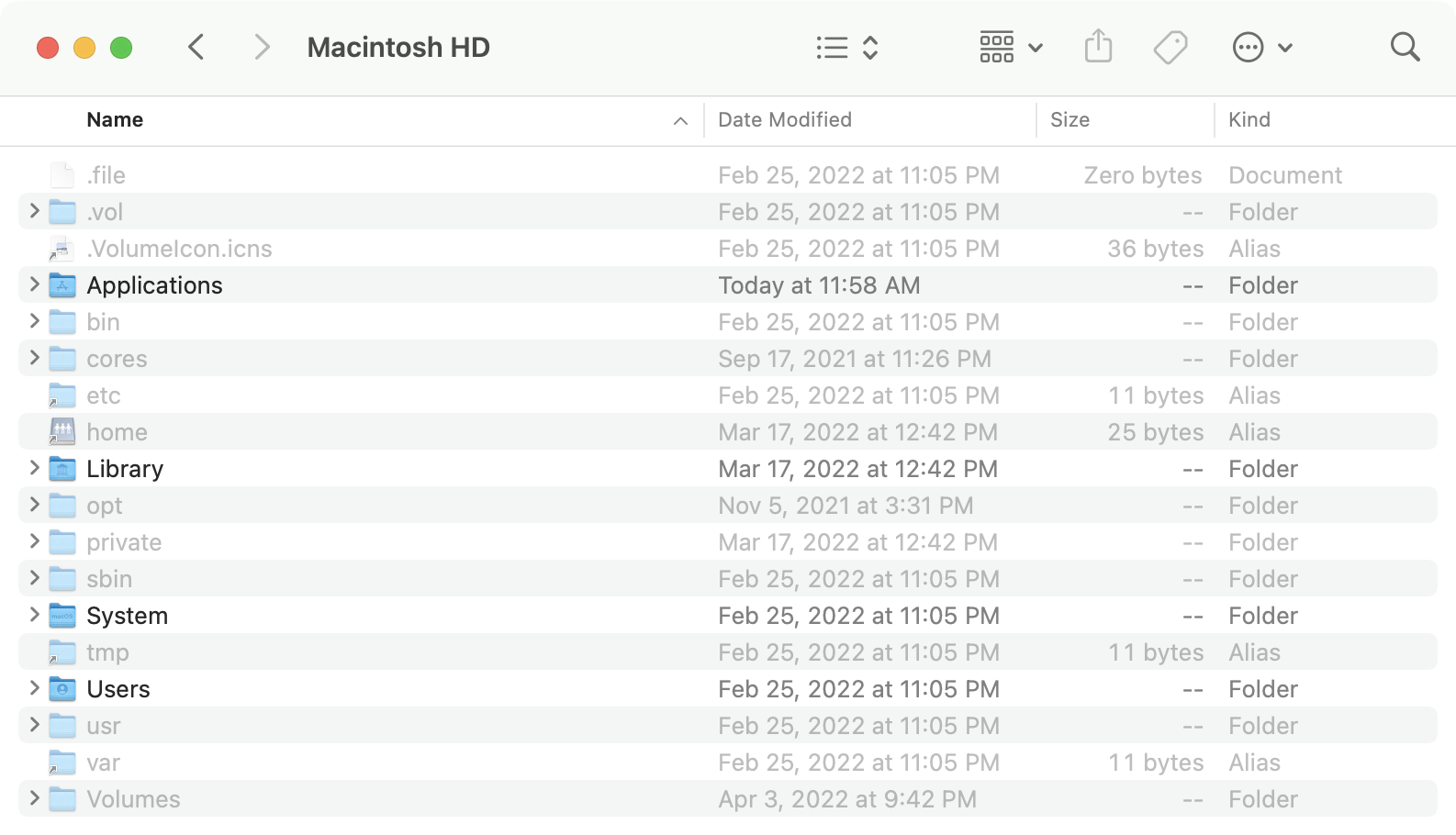

~/Librarygives you an idea of where your data is going. Once you have established what is taking up space, you can then check said application to see if you can delete or remove packages/support files/items from that application.The Scourge of invisible folders

Toggling hidden files is only a keyboard shortcut away: in the Finder, hit Command + Shift + . (period).

macOS has a surprising amount of invisible folders. Most are located at the root of the hard drive. These are (mostly) related to the *nix underpinnings of macOS and are essential for proper operation.

This includes bin, cores, etc, opt, private, sbin, tmp, usr, var and Volumes. Your user directory (~/) has many more. As a general rule, any there that start with a . (period) are created by applications; the only OS-related one should be .Trash, which is where your Trash lives.

Homebrew users may find that they've installed a significant number of utilities into /usr, and it's highly recommended that you use Homebrew to remove undesired packages. These items probably won't be very large for the average user, but for developers, various versions of Node, Ruby gems, and VS Code files can end up sapping hundreds of MBs, if not GBs. I had an issue with Node v14 installing improperly on my M1 Max and eating 8 GB per Node v14 version.
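To see which hidden items in your home directory are the heavy ones (old Node versions, gem caches, and the like), a sketch along these lines lists dot-prefixed entries by size. hidden_items is a made-up helper name, and the script only reports; removal is still best done through the tool that created the files.

```sh
#!/bin/sh
# List hidden (dot-prefixed) items directly inside a directory, largest first.
# Read-only; assumes du/find behave the same on macOS (BSD) and Linux (GNU).

# hidden_items <home-dir>
hidden_items() {
  # Direct children only; -name '.*' matches dotfiles and dot-folders.
  # "-exec ... {} +" batches many paths into a single du call.
  find "$1" -mindepth 1 -maxdepth 1 -name '.*' -exec du -sk {} + 2>/dev/null |
    sort -rn
}
```

Run it as hidden_items "$HOME"; a large .Trash entry simply means the Trash needs emptying.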

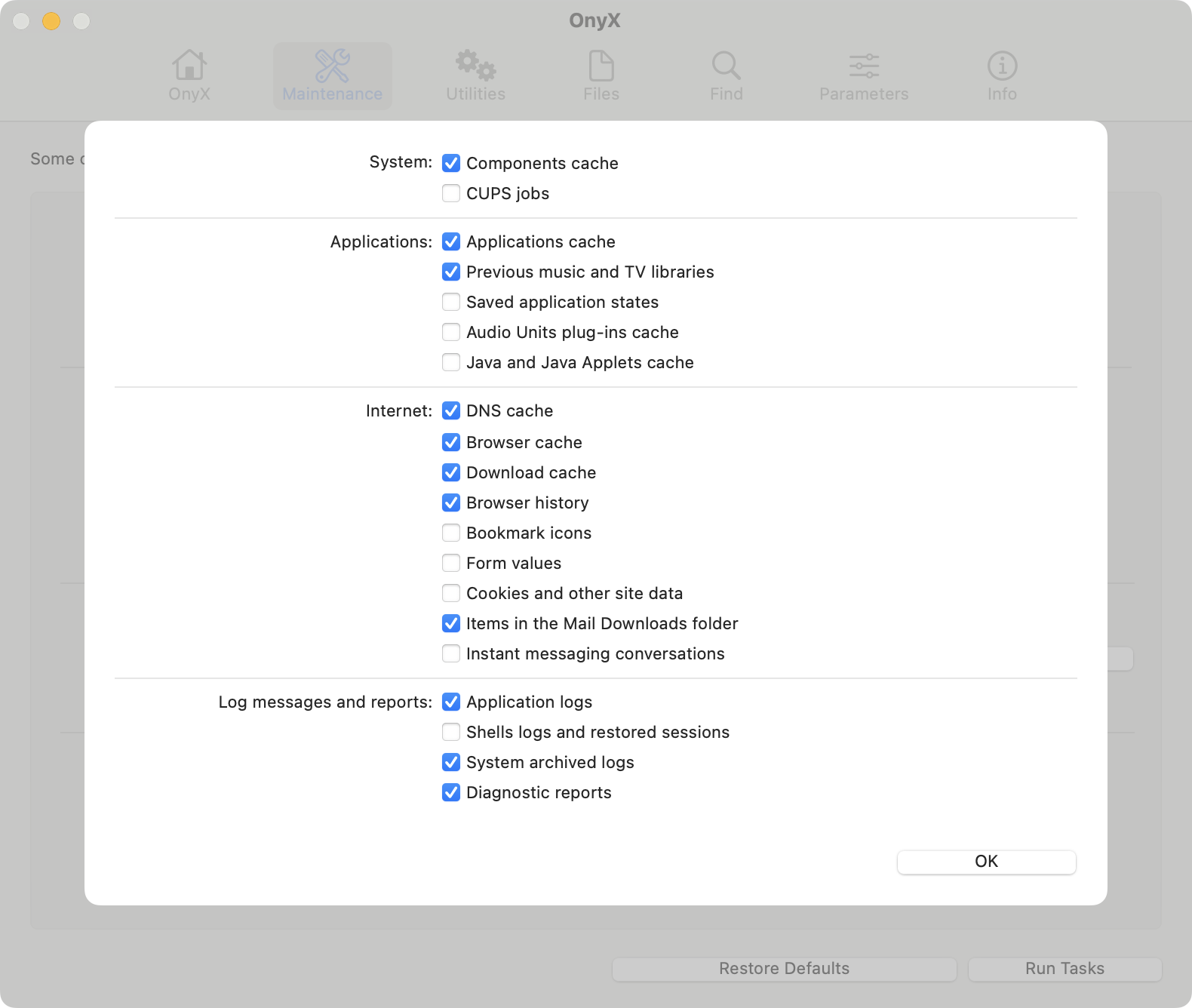

Utilities

There are a lot of not-so-great "disk cleaner" utilities that claim to help you grapple with disk storage. I've linked two tried-and-true, free, open-source utilities that have been around for over a decade. These aren't the only valid utilities, but both help you understand macOS better and, of course, are free.

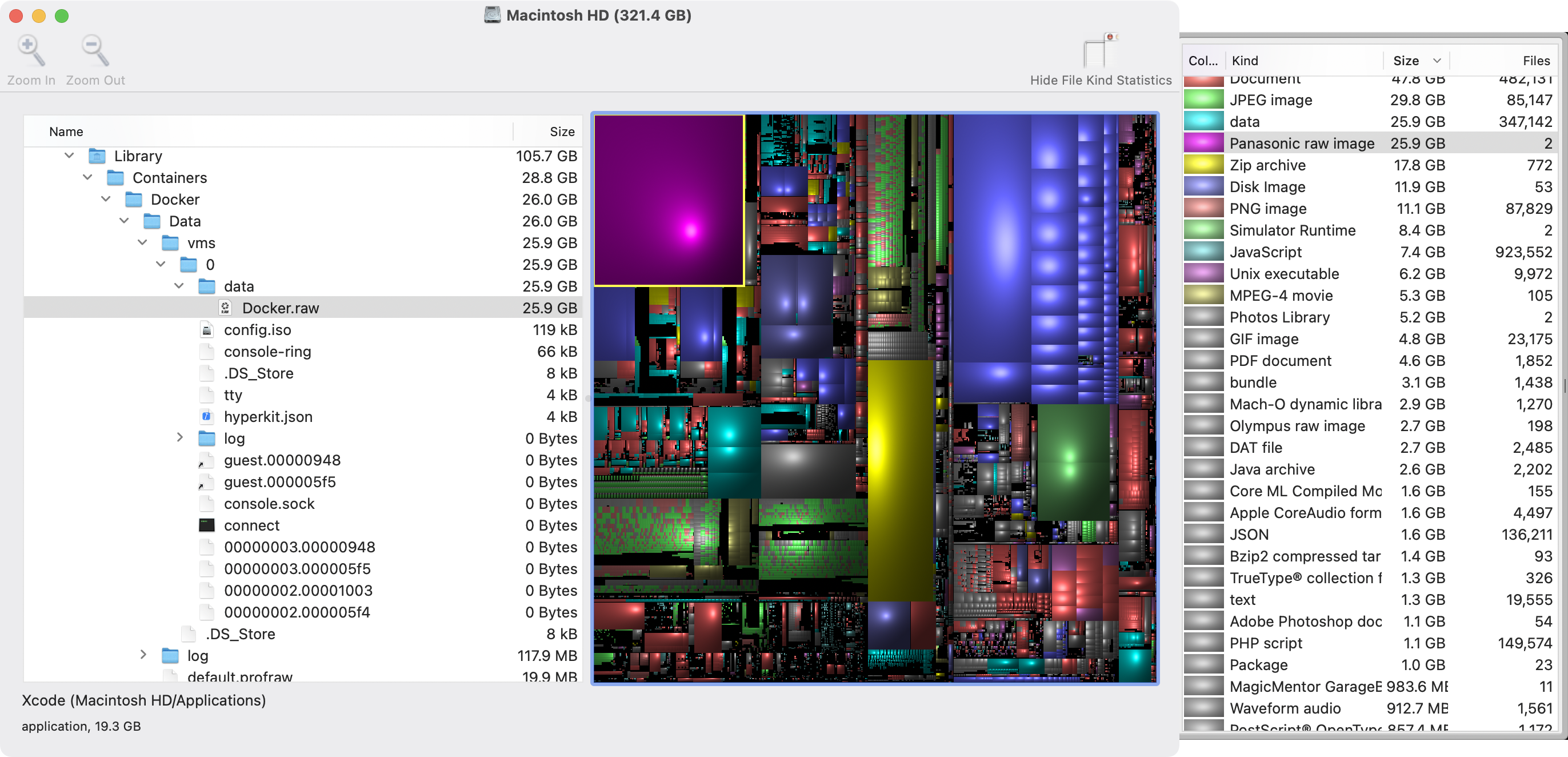

Disk Inventory X

The old standby, Disk Inventory X, still works under macOS 12 Monterey but requires right-clicking it and choosing Open to bypass the security alert. Disk Inventory X scans your entire Mac, similarly to macOS's built-in storage utility, but lets you more quickly see what's taking up space in a Finder-like experience.