-

An (albeit flawed) counterpoint to the M1

PC World published the article "Apple M1 vs. Ryzen 5000: MacBook Pro and Asus ROG Flow X13, compared." While the comparison leans entirely on the GPUs found in x86 laptops, that's also the point: those GPUs are what x86 laptops ship with.

Thus far, my biggest concern with my M1 is the GPU. I can edit 4K video, and it's fast as long as I stay on the happy path of Final Cut Pro X. It's easy to scoff at the PC World article for not including web browsing benchmarks, compile times, and other CPU-bound tasks, but the fact remains that Apple's fight for total performance supremacy will be much tougher on the GPU front.

-

You've been programmed to believe conspiracy theories

It's been a while since I've linked a single article, but I absolutely loved the Popular Mechanics article, "How You've Been Conditioned to Love Conspiracy Theories."

"In addition to xenophobia, O’Leary fell victim to “proportionality bias,” the logical fallacy that believes the cause of an event should feel as important as its impact. Proportionality bias lies behind many of the most popular conspiracy theories. For instance, it feels disproportionate that JFK, the so-called most powerful man in the world, could have been assassinated by one disturbed individual, or that Princess Diana,—a powerful, famous, real-world princess—was killed in a car accident."

I wish I could say I had "proportionality bias" in my lexicon prior to this, as I certainly intuitively understood that many conspiracies stem from a desire to assert order in a chaotic world and are an artifact of the "tiger in the brush" scenario. It stipulates that it was more evolutionarily advantageous to spot false patterns than none at all. If you believed there was a tiger in the brush, then regardless of whether there actually was a tiger in the brush, you'd still be rewarded with continued existence. Thus, evolution tolerates, if not rewards, a baseline paranoia and an overactive imagination. It's this same process that allows many to find meaning in the noise, from the infamous Virgin Mary in peanut butter to believing that 9/11 was an inside job.

About the only thing missing is that, from my (albeit somewhat limited) observations, conspiracies act as currency among the indoctrinated, which is its own chaotic agent, and generally any rebuttal is taken as further evidence that the conspiracy runs just that much deeper. In many ways, conspiracies have filled the void as Americans become increasingly less religious, although clearly no more logical.

After a year of being stuck, tethered to social media, the claws of conspiracies have turned family members into born-again believers, and we're all the worse for it.

-

If you're wondering what the next x86 Mac Pro specs will be...

Largely ignored by the media outside of a few tech journals, the 3rd Gen Intel Xeon Scalable Processors were announced. Operating under the assumption that Bloomberg's reporting is accurate, given that it correctly predicted the latest Apple event, we can expect at least one more iteration of x86 Mac Pros alongside Apple Silicon Mac Pros. Many Mac Pro enthusiasts lamented the Mac Pro's lack of PCIe 4.0, even if there isn't a massive point to it beyond future-proofing. There was also a bit of a disconnect: Intel has not had a PCIe 4.0 chipset until very recently, so Mac users were expecting the impossible out of Intel. GPUs simply do not benefit much from PCIe 4.0, as they don't need the extra bandwidth (AMD has shipped x8 PCIe 4.0 GPUs, which is equivalent to x16 PCIe 3.0), and there's barely a speed penalty for running the latest GPUs on PCIe 2.0. That leaves SSDs as mostly the sole component that benefits from PCIe 4.0, although I'd argue two things:

- While not ideal, ASM2428 chipsets have allowed NVMe SSDs (based on the x4 standard) more bandwidth by addressing more lanes. Currently, in the PCIe 2.0 realm, users can achieve PCIe 3.0 speeds using an x8 or x16 slot (as well as run multiple SSDs on motherboards that do not support bifurcation). It's likely we'll see similar chipsets that let PCIe 3.0 cards address more lanes to attain PCIe 4.0 speeds for SSDs.

- Latency, random read/write performance, and caching mechanisms will have more perceivable benefits for daily use than increased bandwidth at this point, considering 3000 MB/s is only going to be a limiting speed in extreme transfers.
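The lane arithmetic behind those claims can be sketched in a few lines. This is a rough calculation, not a benchmark: the per-lane transfer rates and encoding overheads are the published PCIe figures, while the function name and table are my own for illustration.

```python
# Per-lane transfer rate (GT/s) and line-encoding efficiency per PCIe generation.
PCIE_GENERATIONS = {
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
    4: (16.0, 128 / 130),  # 128b/130b encoding
}


def link_bandwidth_gb_s(generation, lanes):
    """Theoretical one-direction bandwidth of a PCIe link in GB/s."""
    transfer_rate_gt_s, efficiency = PCIE_GENERATIONS[generation]
    per_lane_gb_s = transfer_rate_gt_s * efficiency / 8  # bits to bytes
    return per_lane_gb_s * lanes


if __name__ == "__main__":
    for generation, lanes in [(4, 8), (3, 16), (3, 4), (2, 8), (2, 16)]:
        bandwidth = link_bandwidth_gb_s(generation, lanes)
        print(f"PCIe {generation}.0 x{lanes}: {bandwidth:.2f} GB/s")
```

Under these numbers, a PCIe 4.0 x8 link matches a PCIe 3.0 x16 link, and a PCIe 2.0 x8 slot has slightly more theoretical headroom than the PCIe 3.0 x4 link an NVMe SSD expects.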

The interesting specs

So what will the new Mac Pro specs look like?

- 10 nm, "up to 40 cores per processor" - This is using the Sunny Cove instead of the Cypress Cove variant, which is 14 nm.

- "46% more performance" - As of late, Intel has taken to dubious stats, and it is sad to see. Considering they're moving from a 28-core to a 40-core maximum, roughly 43% more cores, this isn't a surprising or impressive claim.

- "8 channels of DDR4-3200" - Up from the six channels on the previous generation of Xeons.

- "64 lanes of PCIe Gen4 per socket" - There are a lot of ways that PCIe lanes can be handled, but this is a beastly amount of bandwidth, and with bandwidth switching, certainly enough for workstations (servers are a different beast). Each CPU can negotiate a theoretical 128 GB/s or so of PCIe throughput in each direction (64 lanes at roughly 2 GB/s apiece), although that figure is approximate.

It's pretty easy to extrapolate that we're going to see a PCIe 4.0 Mac Pro with up to 40 cores and roughly the same max-memory cap that'll still have the blistering prices we've seen before. I don't expect Apple to expend much energy redesigning the case, but we'll (likely) see the final x86 Mac Pro set a very high bar for Apple Silicon to follow. Still, the consensus is mostly that users want PCIe plus upgradable memory and storage to continue.
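For anyone who wants to check the spec-sheet math, here is a minimal sketch. It assumes the standard PCIe 4.0 figures (16 GT/s per lane, 128b/130b encoding); the helper names are hypothetical.

```python
GEN4_GT_PER_S = 16.0             # PCIe 4.0 raw transfer rate per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def gen4_bandwidth_gb_s(lanes):
    """Theoretical one-direction PCIe 4.0 bandwidth in GB/s for a lane count."""
    return GEN4_GT_PER_S * ENCODING_EFFICIENCY / 8 * lanes


def percent_increase(old, new):
    """Relative growth from old to new, as a percentage."""
    return (new - old) / old * 100


if __name__ == "__main__":
    print(f"64 lanes of Gen4: {gen4_bandwidth_gb_s(64):.2f} GB/s per direction")
    print(f"28 -> 40 cores: {percent_increase(28, 40):.1f}% more cores")
```

The encoding overhead is why the result lands a little under the round 128 GB/s number, and the core-count growth alone accounts for most of Intel's headline performance claim.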

-

Foolproof way to update the 2010 - 2012 Mac Pro 5,1 to the 144.0.0.0.0 firmware

Updating the firmware on a Mac Pro isn't difficult, but it is possible to "miss" firmware upgrades. This guide is for anyone looking to get to the latest (and most likely last) firmware released for the Mac Pro 5,1s, without having to install Mojave 10.14.x, or if you already have installed Mojave, or are looking to install Mojave. On my first try, my firmware was stuck at 138.0.0.0.0 even when running Mojave 10.14.6. Updating the firmware adds key functionality to the Mac Pro 5,1s, most notably native NVMe M.2 boot support. To learn more about firmware and the Mac Pro 5,1s, see the Firmware Upgrades section of my Mac Pro Upgrade Guide.

These aren't the only instructions on the web; the thread "MP5,1: What you have to do to upgrade to Mojave (BootROM upgrade instructions thread)" also covers firmware upgrading. However, this is the method I've found most reliable for users who are having firmware troubles.

Step 0: Remove unsupported GPUs

The biggest change for macOS Mojave is the deprecation of OpenGL and OpenCL. OpenGL has been a thorn in Apple's side for quite some time, as it's been nearly dead for years. Vulkan, the OpenGL successor, wasn't quite ready for primetime when Apple originally created Metal for iOS, and thus Apple decided to port Metal to macOS. Despite the annoyance of having to meet the requirements, it was a necessary evil. Mojave will not install if you have a GPU that doesn't support Metal.

Note: some users are reporting they had to remove all PCIe cards sans their storage controller (SATA card) and GPU to install the firmware update. I did not. If you encounter issues, try removing additional PCIe cards.

Step 1: Have a 10.13 drive

Unfortunately, this is the biggest pain if you've already updated. You'll need a separate volume to boot into 10.13. Amazon and Newegg each have 120 GB SSDs for under $20 USD if you need a temporary drive to install macOS 10.13 on (the upside is you can buy a USB case and turn it into a very fast USB 3.0 drive afterward, or return it). You can get old versions of macOS via the Mac App Store, or by using DosDude1's installer if you can't access them there. If you have no intention of upgrading to Mojave, or already have it installed, don't worry. We won't be installing Mojave.

Step 2: Boot 10.13

The next step is pretty straightforward: boot into your install of macOS 10.13 if you haven't already.

Step 3: Download 10.14.6 Combined installer

Fortunately, firmware flashing does not require updating in a particular order. I went from 138.0.0.0.0 to 144.0.0.0.0 without any problems. There are several avenues for this, including the Mac App Store, but when I used the Mac App Store route, I didn't get the combined OS installer (the Mojave installer plus all the updates to Mojave). The easiest way to obtain the final combined installer for Mojave is to use DosDude1's patcher. Much like before, download DosDude1's patcher, this time the one for 10.14, even though we have supported hardware.

Clarification: You do not need to use the DosDude1 patcher, as you can grab the update via the App Store or other sources, but I found this easier. Apparently this link was posted on MacRumors, and a few posters didn't read the full instructions and suggested that I was advocating using DosDude1's patcher on the OS. I am not. The Mac Pro 4,1/5,1 does not need DosDude1's patch, so do not run the patcher on Mojave. The patcher just happens to be extremely reliable about fetching the correct version, and skips the hassle of the App Store.

- Go to the DosDude1 Mojave patcher page and download it.

- Launch the patcher.

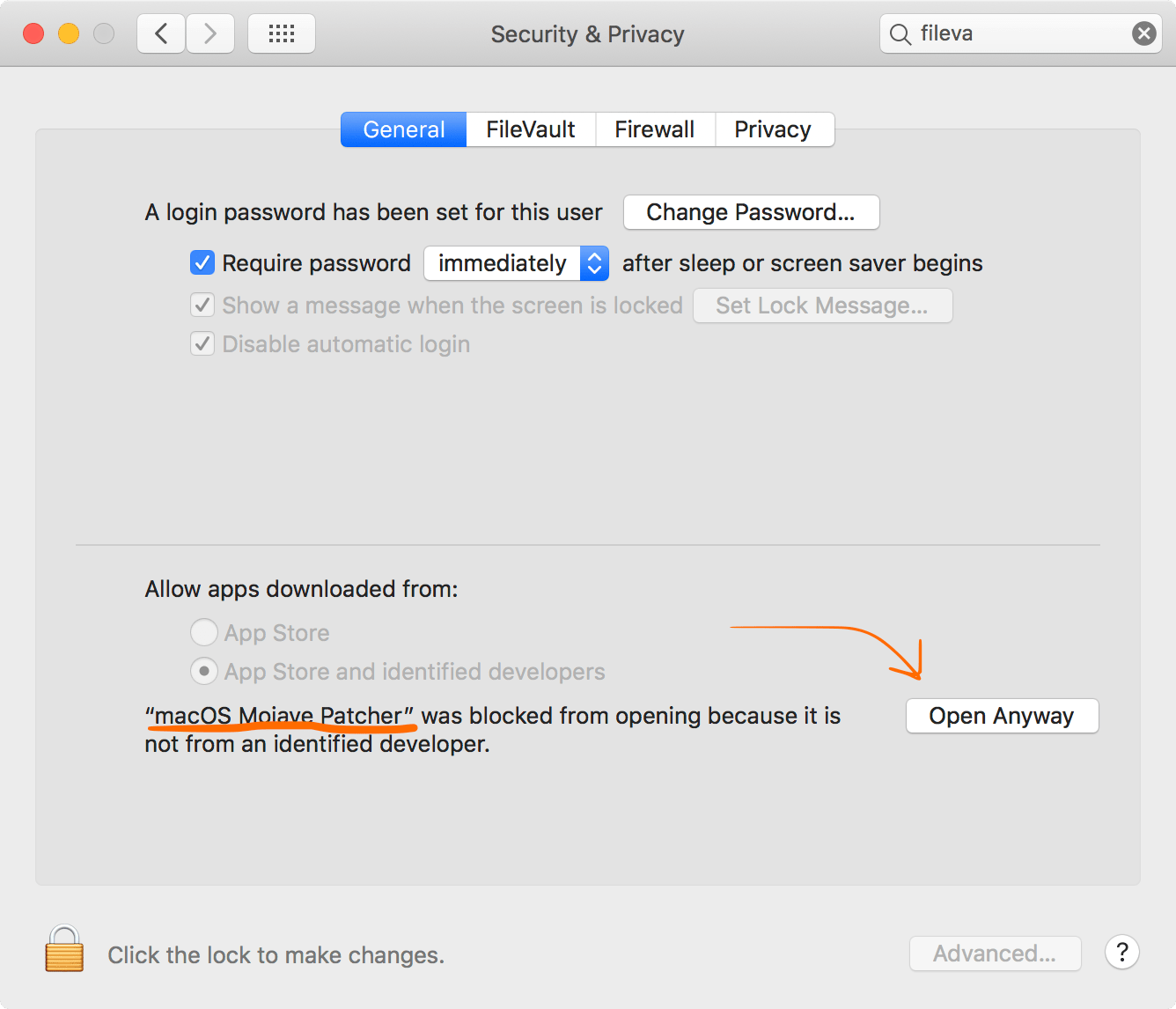

Depending on your security settings, your Mac may warn that the app is from an unverified developer. Go to System Preferences, Security & Privacy (General), and allow the app to open.

You'll be bugged one last time.

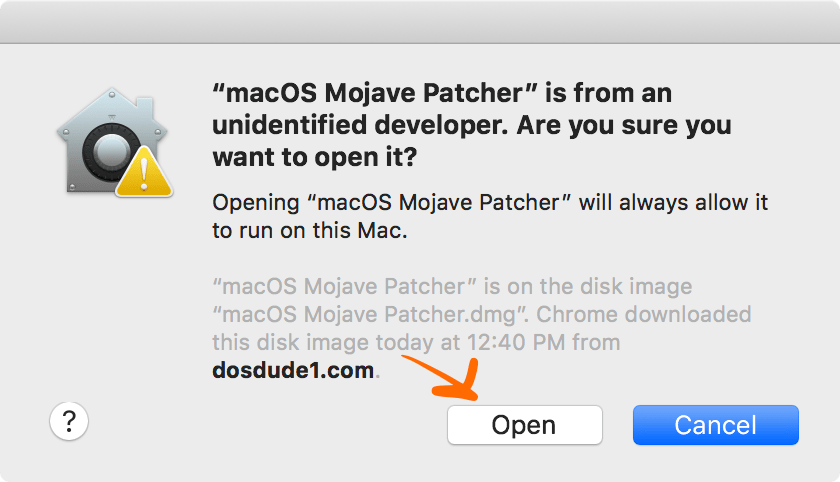

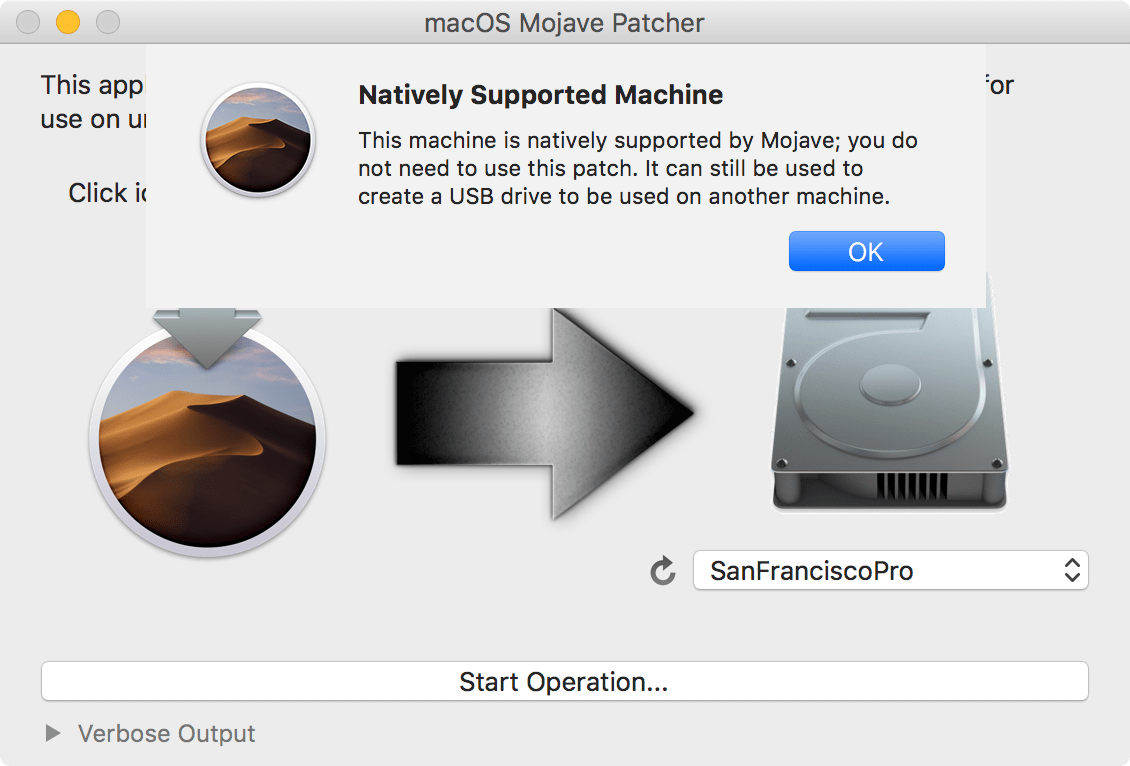

The patcher should warn you that you are on supported hardware.

This is fine; ignore the message. Within the patcher, select the option to download Mojave from the Tools menu.

- Once the download has completed, quit DOSdude1's patcher.

Step 4: Launch the installer and click shut down

The installer should bring up a message about firmware and a shutdown message. This will not start the Mojave installer, only the firmware.

Step 5: Boot the Mac

Using the instructions in the previous image, press and hold the power button until it blinks. If you do not have an EFI-enabled GPU (see more about EFI in my Mac Pro Upgrade Guide), you will not see any video output.

I trimmed down the video, as it took about 15 seconds of holding before the button flashed. After the button flashed, the internal speaker emitted a long lo-fi "boop" sound.

Step 6: Verify

Go to About This Mac, and click System Report. On the first screen, look for the "Boot ROM" text. This should list your firmware version. From here, you can continue using 10.13.6, upgrade, or boot to your 10.14 volume.

The 144.0.0.0.0 firmware works with any version of macOS your Mac Pro supports.
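If you'd rather check from the terminal, the same information is available from `system_profiler SPHardwareDataType`. The sketch below assumes the field is labeled "Boot ROM Version" (as it is in the System Report on this era of macOS) and that you have Python 3 installed; the function names are mine, not part of any Apple tooling.

```python
import re
import subprocess

TARGET_VERSION = "144.0.0.0.0"


def read_hardware_report():
    """Return the text of the hardware overview (only works on macOS)."""
    result = subprocess.run(
        ["system_profiler", "SPHardwareDataType"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def parse_boot_rom(report):
    """Extract the Boot ROM version string from a hardware report, if present."""
    match = re.search(r"Boot ROM Version:\s*(\S+)", report)
    return match.group(1) if match else None


def is_up_to_date(report, target=TARGET_VERSION):
    """True when the report shows the target firmware version."""
    return parse_boot_rom(report) == target
```

On the Mac Pro itself, `is_up_to_date(read_hardware_report())` should come back true once the flash has succeeded.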

Updated: November 13th, 2019 MacRumors feedback

Updated: November 4th, 2019 based on Feedback from Mac Pro Users user group on Facebook.

-

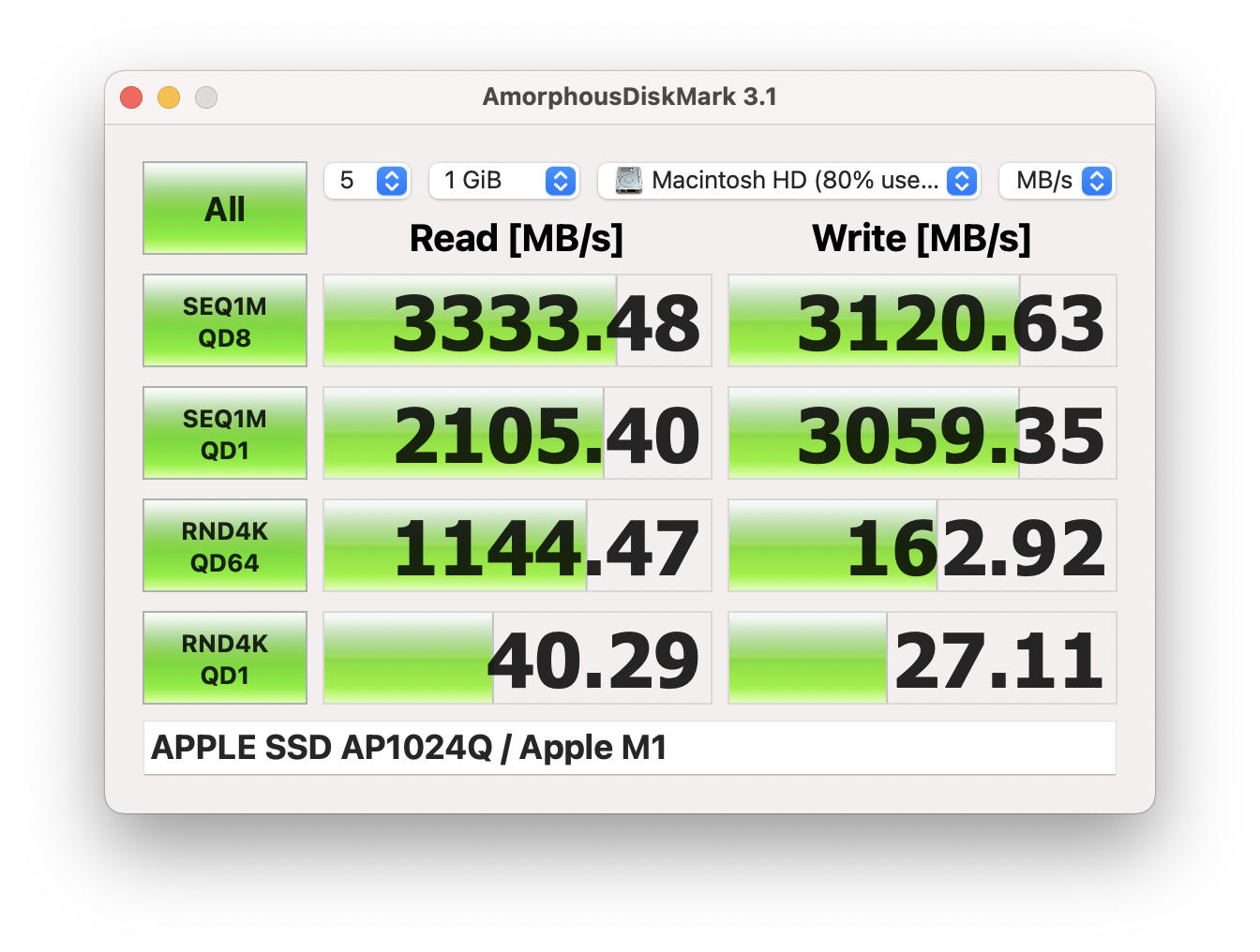

AmorphousDiskMark is CrystalDiskMark for macOS; let's all stop using BlackMagic Disk Speed Test and AJA Disk Test

Benchmarking a MacBook Air M1's SSD.

A while back, I made a video about USB-C and the classic Mac Pro but lamented yet again the terrible state of benchmarking on macOS. The first commenter on Facebook pointed out that we finally have a good disk benchmark utility: AmorphousDiskMark. While it isn't a direct port, it's heavily inspired by the famed and loved Windows utility, CrystalDiskMark.

So why am I always complaining about BlackMagic Disk Speed Test?

BlackMagic's Disk Speed Test only tests one thing: continuous throughput. This is useful but only measures one aspect of an SSD and doesn't necessarily mimic how most disk interactions occur. Random read and write tests are as important, if not more so, as many SSDs can deliver fast maximum continuous reads and writes but much less so for small random data blocks. CrystalDiskMark tests random reads and writes both as queued requests and as single requests. The default queue depth is pretty high for the test. Usually, an OS wouldn't have that deep of a queue, but the Q1T1 test does mimic a singular request. Also, CrystalDiskMark measures IOPS (input/output operations per second), which is related to throughput but counts completed operations rather than bytes moved.
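To make the sequential-versus-random distinction concrete, here is a toy sketch that reads the same small scratch file in order and then in shuffled 4 KiB blocks. It is an illustration of the access patterns only: the file is tiny and the reads will mostly hit the OS cache, so the numbers say nothing about your actual SSD. All names and sizes are my own assumptions.

```python
import os
import random
import tempfile
import time

BLOCK_SIZE = 4096            # 4 KiB, a common block size for random tests
FILE_SIZE = 8 * 1024 * 1024  # 8 MiB scratch file; real tools use far larger ones


def iops_from_throughput(mb_per_s, block_size=BLOCK_SIZE):
    """Convert throughput in MB/s into operations per second at a block size."""
    return mb_per_s * 1_000_000 / block_size


def timed_reads(path, offsets):
    """Read one block at each offset and return the achieved IOPS."""
    with open(path, "rb", buffering=0) as handle:
        start = time.perf_counter()
        for offset in offsets:
            handle.seek(offset)
            handle.read(BLOCK_SIZE)
        elapsed = time.perf_counter() - start
    return len(offsets) / elapsed


def compare_access_patterns():
    """Time in-order and shuffled block reads over a temporary file."""
    with tempfile.NamedTemporaryFile(delete=False) as scratch:
        scratch.write(os.urandom(FILE_SIZE))
        path = scratch.name
    try:
        sequential = list(range(0, FILE_SIZE, BLOCK_SIZE))
        shuffled = sequential[:]
        random.shuffle(shuffled)
        return {
            "sequential_iops": timed_reads(path, sequential),
            "random_iops": timed_reads(path, shuffled),
        }
    finally:
        os.remove(path)
```

The `iops_from_throughput` helper shows why the two figures are related but not interchangeable: the same MB/s number implies very different operation counts depending on the block size.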

Better but not perfect

There's plenty of aspects that aren't covered, such as latency, burst performance, power consumed, and mixed random read/writes, but this is a massive step in the right direction for gauging SSD performance on macOS. Oh yeah, and it's free.

Let's retire BlackMagic's Disk Speed Test and embrace AmorphousDiskMark.

-

Gulp 4.x and .Kit work flow

CodeKit has its own very simple templating language, .kit, that's actually quite useful when you're not looking to spin up something like PHP or Python, or something in the Pug/Jade vein (the latter two I've never been able to commit to). The documentation is straightforward. You can find it here, but passing off a project with a CodeKit dependency probably isn't viable in many situations. Also, Gulp 4.0 is much easier to read and much more pleasant to work with. You'll need, of course, a current-ish version of Node to run this.

Rather than post a git repository, I've created a GitHub Gist for anyone looking to make .kit files work with Gulp. It features a really basic setup for compiling .kit, Sass, and JS, and uses BrowserSync for both streaming CSS changes and live reloads after JS/Kit changes. The readme has the default directory structure. All the compiled files land in a /dist directory, which is likely something you'd ignore in your .gitignore.

The Tasks

- gulp - runs all the compiling processes once

- gulp watch - launches a BrowserSync server with live compiling/processing

Apologies to anyone using RSS as the embed won't work, but you can view the gist on GitHub.

-

Oculus Quest 2 vs classic Mac Pro 5,1 (2010/2012)

The Mac Pro 5,1, despite its age, still can run many modern GPUs, so it shouldn't be a big surprise that the Mac Pro can use VR headsets for gaming (under Windows 10). The Oculus Quest 2 is probably the most attractive option as it's inexpensive at $300, includes VR controllers, now supports higher refresh rates/hand controls, and functions as a stand-alone game console. There are a few considerations that you need to be aware of.

- Windows 10

- Oculus Link Software

- Steam VR

- SteamVR Performance Test: Before taking the plunge, you can test your computer to see if it is VR capable in Windows using the free SteamVR Performance test software.

- USB 3.0 card that provides enough bandwidth: Cards with one controller for four ports may not provide enough bandwidth. The Oculus Link software will continue to work (with warnings), but you will experience blurred visuals due to higher video compression.

- Proper USB-C cable: This might seem trivial, but USB-C cables are not created equal. Many USB-C cables support the charging spec but run at USB 2.0 speeds. Finding the correct cable is a huge issue. Oculus recommends its own $80 cable or the Anker Powerline+ USB C to USB 3.0 Cable.

- Active USB extension cable: Signals degrade over longer cable runs. Active USB cables draw power from the USB bus to amplify the signal so data rates can be maintained. I personally used the CableCreation Active USB Extension Cable, but there are other options.

- GPU: Higher-end = better. I have a Radeon VII, but the Radeon 5700 XT is an equally viable option. The Vega 56/64/FE are also decent. Some people have posted usable results with the RX 580. If/when macOS is updated to support later-model AMD GPUs, those will be the preferred option.
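To see why the card and the cable matter so much, here's a back-of-the-envelope sketch. The 480 Mbit/s and 5 Gbit/s signaling rates are the nominal USB 2.0 and USB 3.0 figures; the even-split model for a shared controller is a simplifying assumption of mine, and real-world throughput will be lower than any of these numbers.

```python
USB2_MBIT_S = 480        # nominal USB 2.0 signaling rate
USB3_GEN1_MBIT_S = 5000  # nominal USB 3.0 (5 Gbit/s) signaling rate


def worst_case_share(controller_mbit_s, busy_ports):
    """Bandwidth per port if every busy port splits one controller evenly."""
    return controller_mbit_s / busy_ports


def speed_ratio(fast_mbit_s, slow_mbit_s):
    """How many times faster one nominal rate is than another."""
    return fast_mbit_s / slow_mbit_s


if __name__ == "__main__":
    share = worst_case_share(USB3_GEN1_MBIT_S, 4)
    print(f"Four busy ports on one controller: {share:.0f} Mbit/s each")
    ratio = speed_ratio(USB3_GEN1_MBIT_S, USB2_MBIT_S)
    print(f"USB 3.0 vs a USB 2.0-only cable: about {ratio:.1f}x")
```

A charge-only USB-C cable drops the headset to roughly a tenth of the nominal link rate, which is why the Link software falls back to heavier video compression.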

Personal Thoughts

Tethered VR feels like a big step back from untethered, and regardless, VR still feels like it's in its infancy/gimmicky. This is partly because of the low resolution, and I imagine even when we get to 120 Hz and 8K per eye, it'll still feel low resolution. The Quest 2 works pretty well and is easy enough to set up, but with the space considerations, cost, and limited titles, it's hardly a must-have. I tried Skyrim VR, and I have to say, I found navigating the world too nauseating to really bother trying to play it at length. The nausea effect differs person-to-person, and I seem to experience it worse than the average person. Games that do not feature 1:1 real-world movement are almost a no-go.

One of the most exciting features of VR is the ability to exercise while gaming. Boxing games are a huge workout. I found myself hitting 170 BPM while dancing around and swinging wild haymakers. When VR gets better, I can easily imagine many VR gamers being relatively fit.

-

Apple 2020 Report card

Every year Jason Snell puts together a round table of opinions from Mac pundits called the Apple Report Card. I particularly look forward to it as I inevitably play the game "How would I score it?" I probably should never be asked by anyone as a panelist for something like this as I'm much too dour.

Mac: 3.5

The M1 is wildly exciting. I've written about it as I'm an early adopter, but there are some curious limitations that make it inappropriate for many mid-tier users: a 16 GB RAM ceiling, lack of eGPU support, and no support for more than two displays. These are all things certain to be addressed (except maybe eGPUs), but it does look like the death of user serviceability. The iMac will be the big canary in the coal mine for the Mac's future.

A computer is nothing without its OS, and Big Sur wasn't nearly as bad as Catalina, but I'd rather see a focus on "when it's done" than yearly OS releases.

The rumors for the Mac laptops sound amazing. Apple no longer feeling the need to wage a holy crusade against ports, or for thinness at the expense of everything else, gave us in 2019 both the Mac Pro 2019 (even if it's absurd) and the MacBook Pro 15 inch 2019. With the rumored MagSafe and SD card slots, the Mac laptop will be at a new all-time high, besting the 2015 MacBook Pro as the best design/feature set. If I can get a 13 inch MacBook Pro with 4 USB-C ports, an SD card slot, one more external display, MagSafe, and more RAM in 2021, I'm selling off my M1 Air and dropping serious coin, as this has been my dream, and it may finally be the first time I have a laptop as my primary computer in my entire life. That said, this is in the future and not today, and while the Apple Silicon Macs are very impressive, it's not enough to offset the software shortcomings. Also, for the millions of Intel Mac owners, I worry about long-tail support.

Desktops? Much less so. Apple's GPU performance is scattershot, and a desktop without the ability to upgrade its RAM or GPU sounds horrible.

Phone: 3.75

The return to hard edges is wonderful, but Apple doesn't offer a Pro mini, so yet again, I ended up with a larger phone than I want. As someone who lives an active life, I'd value something even easier to tuck away, but I also want the latest and greatest. There seems to be no compromise. I still miss the headphone jack, and I worry Apple will go portless to no one's benefit. I have accessories that rely on the iPhone's port beyond just charging, data, and audio, such as the Shure MV88.

Also, my iPhone XS had syncing issues with iOS 14, which were never resolved no matter what I tried until I received my iPhone 12. My mom had the same exact issue. The Apple support pages are flooded with people with this issue. If you're not on the receiving end of this, I'm sure you'd score it higher, but it is pretty frustrating that I was hit with some wacky battery issues and syncing in 2020.

The Lightning port needs to go. It's a USB-C world now. The Lightning port is one Apple standard that I begrudgingly admit was a good idea for its time, as it was a much more pleasant experience than mini/micro USB. Now we have USB-C. The Lightning cable standard should have died two years ago.

It still feels utterly suicidal to go caseless on an iPhone. I rolled caseless on the iPhone 4 and 5 without breaking my phone. The iPhone 6 was a different story. While I didn't expect to feel the speed bump from my XS, I did, but I'd have traded it for longer battery life and durability.

In what's bound to be my most controversial take, iOS 14 beyond its privacy features, did not impress me. iOS 14 is still a mess. App organization is still the most tedious experience on mobile. The dashboards feel more gimmicky than useful. I relegated the few I like to the feed instead of anywhere on my screen.

iPad: 3.5?

It was kind of a stagnant year for the iPad, although I still wonder if the iPad Pro will be able to run macOS or a modified version of it when using a keyboard and mouse combo.

I'm not a tablet person, as it's too compromised, and the iPad Pro struggles to really be a pro device outside of its beautiful drawing capabilities. I constantly wonder, "Who wants to make these sorts of compromises?" Perhaps someone whose life is majority writing, but for everyone from creative professionals to developers, the iPad is still a dead end.

Apple also still has the bizarreness of a product line split between the Lightning port (kill it already) and USB-C (embrace it).

Despite my naysaying, the iPad still remains the best tablet by miles, and I'd be lying if I said I didn't want one for Sidecar so I could draw. When I had an iPad 2, I found that I really didn't ever do anything beyond consumption and simplistic games, and to this day, it still seems best suited for this. The limitations found on the iPhone are tolerable due to the constraints, but nearing a laptop's size, the iPad still feels like a "big iPhone". There's certainly appeal in that, but the iPad Pro feels more like a high-end consumer device than anything professional beyond its beautiful Pencil. Plenty of people love this product, and I do not. Perhaps we'll see the convergence of iPadOS with macOS, with the ability to launch macOS apps on the Pro.

Wearables: 3

Watch: 4, Headphones: 1.5

I still hate that Apple has a virtual monopoly on headphones for its devices by locking other headphone makers out of reading text messages and not licensing its pairing chipsets. The AirPods remain one of the most mediocre-sounding headphones on the market. I have a pair of the Powerbeats, which I use a lot more out of convenience than out of actually loving the product. Killing the headphone port meant switching to a lesser Bluetooth experience, and Apple knew it and thus served up a way to de-suckify Bluetooth without letting anyone else have it. If I sound jaded, I most certainly am, as it's the Apple tax at its worst: Apple actively blocks people from having a superior experience. I bought a pair of the AirPods Pro and returned them, as they sounded worse than the Powerbeats and worse than my JVC Marshmallows. I was stunned, as I figured I'd be buying into moderately better audio quality. As a product, they're exceptionally well designed. As headphones, they're bad. The AirPods Pro are only incredible if you're willing to ignore that, on a level playing field, the AirPods would be just "eh".

The AirPods Max is a product that'll certainly have its proponents, and the wireless over-the-ear noise-canceling Bluetooth market is filled with mediocrity. I've tried and returned the Momentum 3s and the Sony WH-1000XM4. Thus far, I've yet to find over-the-ear headphones that justify the money. Being called the best-sounding wireless noise-canceling headphones is a low bar to clear, as wired, non-noise-canceling headphones will best them at 1/5th the price of the AirPods Max. Apple likely has the best pair, but the price is absurd for the quality, as it suggests to an audio connoisseur such as myself the truly high end, which it's clearly not. Then the case? Oy, bafflingly bad, and to top it off, they're heavy and not IPX rated for working out. These are office headphones in a world without offices. If they had been priced even at $400, or truly matched less expensive wired headphones in their price class, or if Apple allowed others access to its proprietary pairing, perhaps I wouldn't be as bitter. These very much feel like a product waiting for a revision.

The Apple Watch is in a class of its own. As a personal health device, it's unmatched. The blood oxygen sensor isn't medically accurate which makes it more novelty than anything else. It's kept me active during covid, with my 820 calorie a day goal. I also pretty much use my watch minimally outside of exercise or while driving but I still love it even if I use only about 3-4 apps on it. I'd be inclined to give the Watch a 4.5 if it weren't for the syncing issues that plagued it.

The embargo of custom watch faces remains one of the pain points. The iPhone received (mediocre) customization, so why not the Watch? The number of analog watch faces flummoxes me as it feels silly to me on a digital face. Custom faces would be nice for me.

Apple TV: 0.5

Overpriced. Bad remote. No updates in 3+ years. Yeah, there's the Apple Health app, but that is a tough pill to swallow. I haven't owned an Apple TV since the Apple TV 2, so I can't comment on the OS improvements or lack thereof. The remote is still trash. If Apple wants to convince anyone it's serious about gaming on the Apple TV, then it should come with a remote.

Services: 4

iCloud is pretty good. I mean really, it is. Apple News is more expensive than it needs to be, and Apple Arcade turned out to be just OK. Apple TV had a few pretty good TV shows that I enjoyed at no cost with my new iPhone. I really do want Apple to encrypt my iCloud backups, though.

Apple News' design is still pretty bad but the rest of it is fine to good.

Hardware Reliability: 3.5

Now that we're past the butterfly keyboard, the self-inflicted wounds are over. The iPhones still feel built to break, which is funny as the Apple Watch does not.

I saw plenty of issues in the wild this year, like display issues, but the MacBook Air M1 feels like a mature product despite being a first-gen product.

Software Quality: 3

I'm at the avant-garde, so I tend to find the hard edges, being a web developer/UX engineer, which means a large toolset that spans everything: development tools (Docker, WebStorm, VS Code, Xcode, Android Studio, Homebrew, Node, Ruby, Git, React, Webpack, Gulp), visual design (Sketch, Pixelmator Pro, Photoshop, Adobe XD, Figma, Axure, Illustrator), video (Final Cut Pro, Premiere Pro, Motion, Compressor, After Effects), and audio (Cubase, Ableton, Logic, too many plugins).

Catalina was utter chaos. Big Sur is much better, although prone to nebulous errors when trying to install or run certain applications like the infuriating "app doesn't have permissions," which requires, at best, chmodding or, worse, self-signing code. I can generally navigate these issues, but I also operate outside of most people, and to require my skillset to just get things to go seems absurd.

As locked down as Catalina was and tossing out legacy like it was going out of style, Big Sur seems content with the field burning and doesn't require much more land. I have many thoughts on Apple's out with the old as Windows 10 can still run on 2006 Mac Pros and run applications written for Windows 98.

Big Sur has been hell to pay, as Serato still doesn't have a solution for Big Sur. Adobe still doesn't really have workable solutions for some of its apps on the M1. Apple seems content to punch developers of both macOS and iOS in the face repeatedly.

Big Sur works extremely well on M1s and is a mild improvement over Catalina, which is all kinds of impressive.

iOS 14 was moving in the right direction but still doesn't address how terrible app organization is on iOS or how bad folders are.

That said, the meaningful updates to Logic Pro and, to a lesser extent, Final Cut Pro were very welcome. Logic represents the best deal in music production, hands down. It can score a blockbuster movie or record, mix, and master an album, and is now encroaching on Ableton. Final Cut Pro X still isn't for Hollywood and has some annoying features like the librarying as opposed to per-project assets, but it's a pleasure to use. Motion needs more TLC, as it's essentially the same app it was eight years ago. Motion is very good but desperately needs more. Also, the educational bundle at $199 for FCPX, Logic, Motion, Compressor, and MainStage is the best deal in software today.

I still wish Apple would reboot Aperture with tight integration with Photos or at least make a "Photos Pro" app.

Developer Relations: 2

Xcode is still a monstrosity. They gave devs a break by cutting the commission to 15% on the first $1,000,000, which is wonderful. Wildly changing the OS in ways that break app support is frustrating just from the perspective of a user.

Social/Societal Impact: 3

Pledging money to help fight racism was nice, and while problematic for free speech, de-platforming Parler was another win for common decency.

Trying to force app transparency for privacy, and also causing the cold war with Facebook to become hot, suggests Apple is on the correct side of history. Without Apple, who would champion privacy in Silicon Valley? It seems like no one else.

Still, iCloud isn't encrypted, and developer relations aren't great, which mars a solid year with Apple dancing the 2020 shuffle without blundering hard, and Tim Cook was out in front to talk race (even if stifled) in a way none of the other big tech firms were willing to do. It's not perfect, but engagement counts for something in a year where the fabric of reality has been politicized.

Closing:

Apple's software is the weakest link for Apple, and the feeling of macOS as a walled garden still persists even if it's more the hardware. After years of Windows playing catch-up and offering better performance and longer-tail support, it's nice to see Apple toss out a beautiful transition to M1, but it also leaves a lot of asterisks around the legacy hardware and upgradability. It feels like the iOSfication of the hardware across the board. What was once only acceptable for the MacBook Air is now standard for all Macs.

2021 is going to be one hell of a year for the Mac. The renewed focus on the Mac makes me happy, as my professional and creative expression is entirely tied to OS X's legacy as the mix of power and polished ease of use. I buy Apple computers for macOS entirely.

-

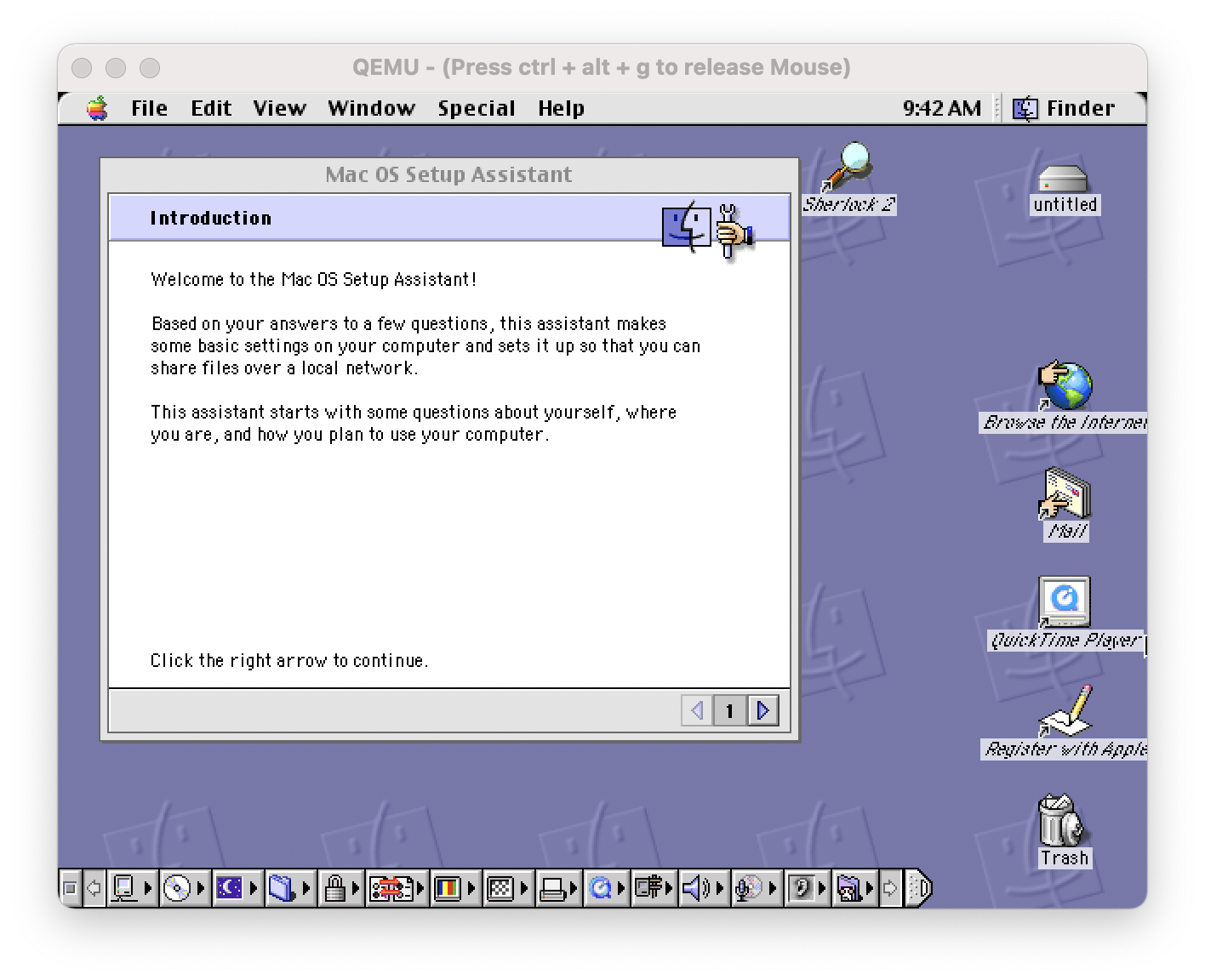

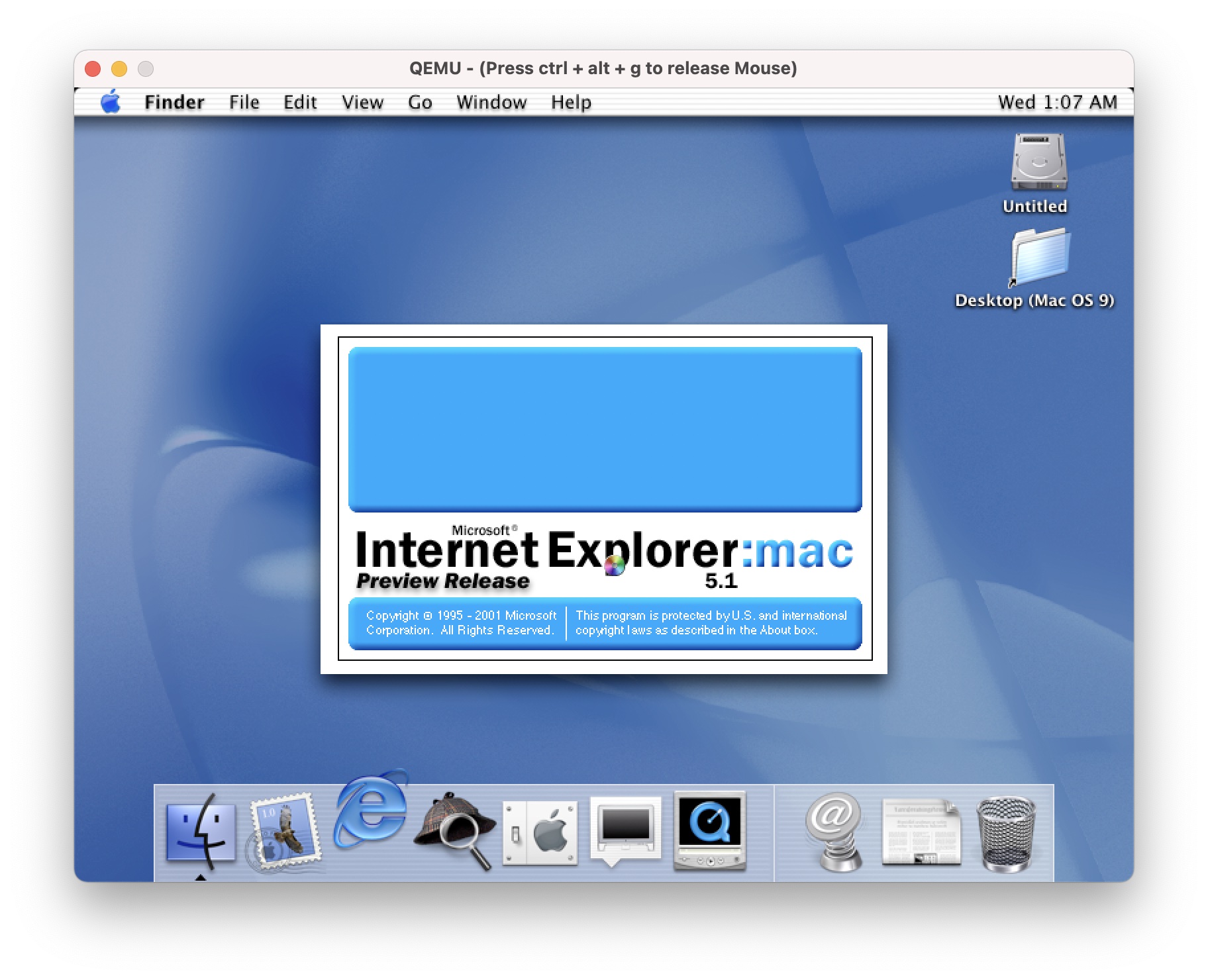

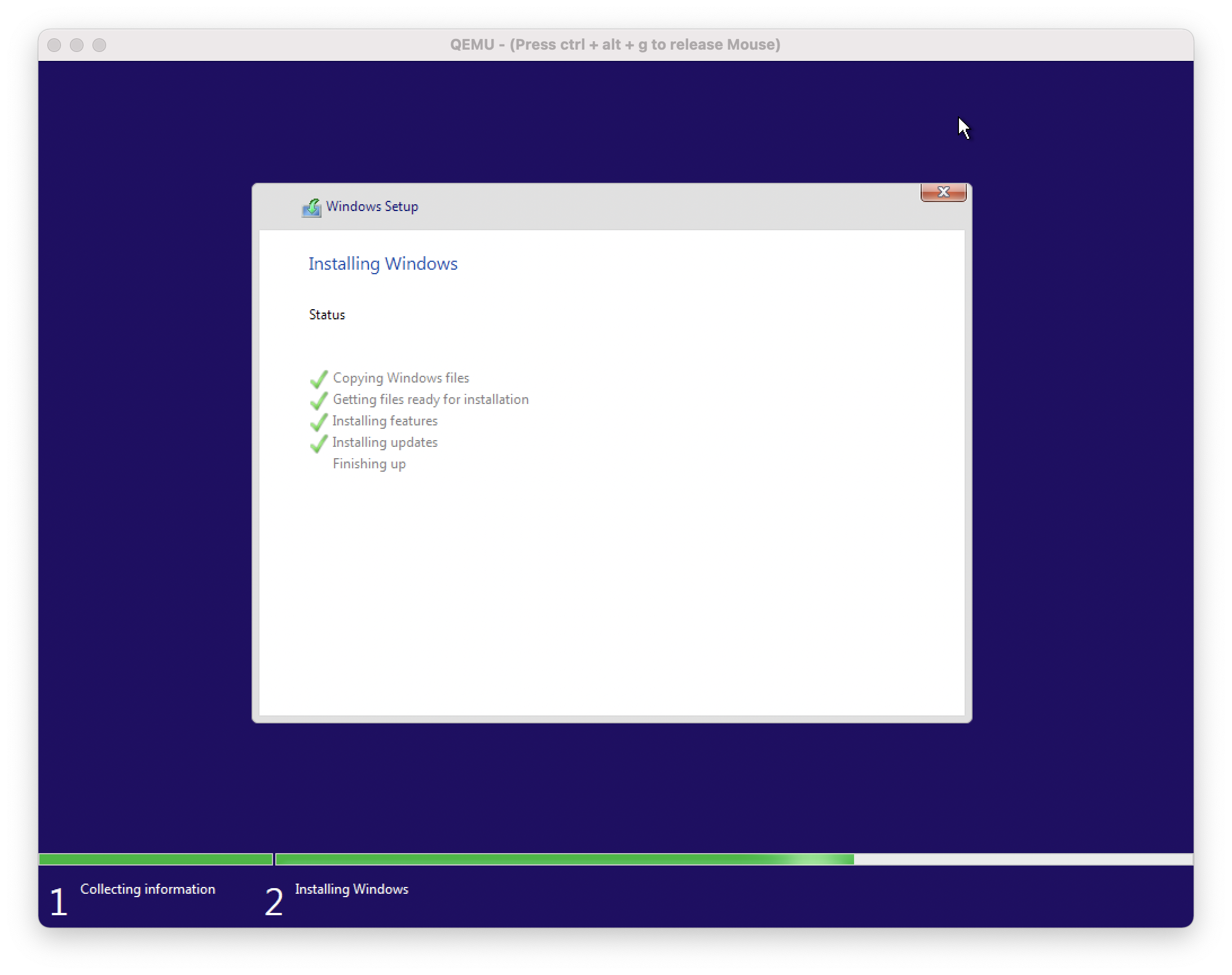

Running Mac OS 9 and Mac OS X 10.0 - 10.4 on Apple Silicon (M1) & Intel via QEMU

The info in this guide is still valid, but I've written a new one about QEMU Screamer, which emulates Mac OS 9 - OS X 10.14 with sound.

QEMU is an open-source emulator for virtualizing computers. Unlike VMware, it's able to both virtualize CPUs and emulate various CPU instruction sets. It's pretty powerful, free, and has a macOS port. There are alternate versions and different ways to install it. Still, in this example, I'm using Homebrew, a package manager for macOS/OS X that allows you to install and manage software easily via the CLI.

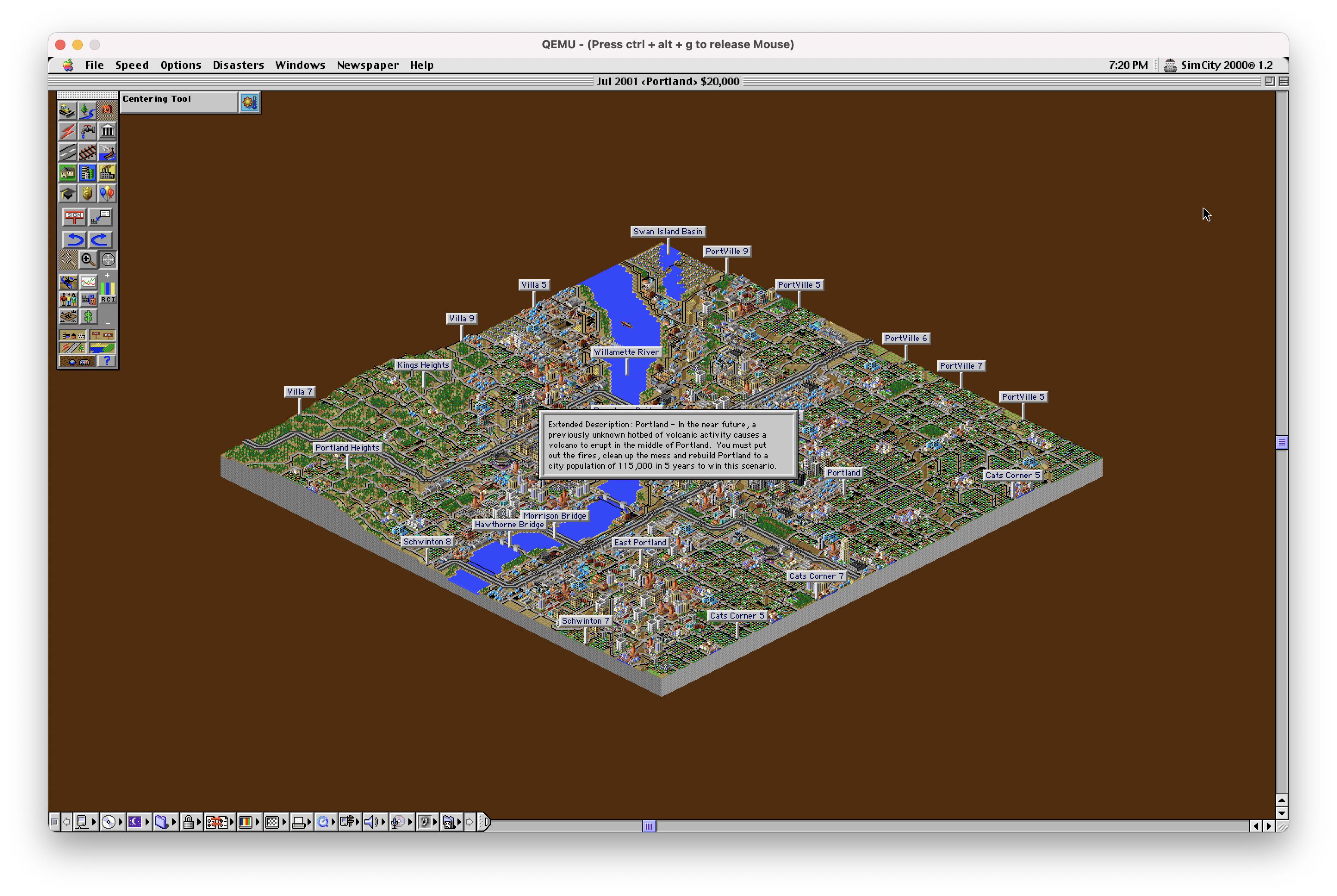

Now, this post wouldn't be very exciting if I tried this on my Mac Pro, but I decided to try it on my MacBook M1. Thus far, the community has succeeded in getting QEMU to install the ARM version of Windows, so I decided to take the sillier path and get PPC and x86 working on Apple Silicon. I encountered very little resistance, which surprised me, as I haven't seen/read anyone trying this route. It's surprisingly usable, but the usefulness is going to be limited. I was able to play Sim City 2000 on Mac OS 9.2 at a fairly high resolution. For the sake of brevity, I'm going to skip over installing Homebrew on an Apple M1, but you'll want to use the arch -x86_64 method, which requires prepending it to each command. I've gotten OS X 10.0 and nearly gotten Windows 10 working on my M1.

12/19/21 Update

When I originally wrote this guide, there wasn't a native version of QEMU for Apple Silicon. I've updated this guide so it is now correct.

Included below are the instructions for both Apple Silicon and Intel Macs.

Requirements

- Basic understanding of the terminal in OS X/macOS

- Apple Silicon (M1) or Intel Mac

- Xcode

- xcode-select (CLI Tools), installed with: xcode-select --install

- Homebrew

Step 1: Install QEMU

Now that there's a universal binary for QEMU for x86 and Apple Silicon, we can install it using the same commands on both architectures. Yay!

brew install qemu

Step 2: Create a disk image

You can specify a path in front of the image name, but I just used the default path. The size is 2G, which equals 2 GB. You can get away with much less for OS 9. If you'd like more space, change the size of the simulated HDD.

qemu-img create -f qcow2 myimage.img 2G

Step 3: Launching the emulated computer and the tricky part: Formatting the HDD

Now that we have a blank hard disk image, we're ready to go.
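One caution before launching: as far as I can tell, running the qemu-img create command from Step 2 a second time against the same filename will replace the image, installed OS and all. A small guard avoids that. This is a sketch under that assumption; `create_image` is a hypothetical helper name, and the 2G default simply mirrors the size used above.

```sh
# Create a qcow2 image only when the file does not exist yet,
# so re-running a setup script does not clobber an installed system.
create_image() {
    image=$1
    size=${2:-2G}   # default mirrors the 2G used in Step 2
    if [ -e "$image" ]; then
        echo "keeping existing $image"
    else
        qemu-img create -f qcow2 "$image" "$size"
    fi
}

# Example, run by hand:
#   create_image myimage.img 2G
```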

qemu-system-ppc -L pc-bios -boot d -M mac99 -m 512 -hda myimage.img -cdrom path/to/disk/image

Let's break this down so it's not just magic. The first command is the QEMU core emulator. You can use things like a 64-bit x86 CPU, qemu-system-x86_64, or a 32-bit CPU, qemu-system-i386, but we're emulating a PPC, so we are using qemu-system-ppc.

Next, we're declaring the PC BIOS directory with -L pc-bios. I'm unsure if this is necessary, as it seems to be the default even in Mac QEMU. After that, the -boot flag declares the boot drive. For those who remember the days of yore, C is the default drive for PCs and D is the default for the CD-ROM, and QEMU keeps the PC convention even when emulating a Mac. It's weird, I know. -M is the machine model flag. It's pretty esoteric, but QEMU uses OpenBIOS, and mac99 is the model for Core99-based Power Macs such as the G4 (the Beige G3 is a separate model, g3beige). The lowercase -m is memory, expressed in megabytes, but you can use 1G or 2G for 1 or 2 gigabytes, just like the size given to qemu-img. -hda is the disk image we're using. Finally, -cdrom is the installer image.

Step 3.5: Special considerations between operating systems

I discovered that OS X 10.0's installer has a significant flaw: It doesn't have a disk utility. The disk images are blank disks and thus have no file system. If you want to run OS X 10.0, you'll need to first launch an installer that can format HFS, like OS 9 or later versions of OS X, run the disk utility, format the image, and then exit the emulator. The process would look like this:

qemu-system-ppc -L pc-bios -boot d -M mac99 -m 512 -hda myimage.img -cdrom path/to/disk/macosx10.1 or macOS9

Then format the drive from the utility and quit the emulator (control-c in the terminal window).

qemu-system-ppc -L pc-bios -boot d -M mac99 -m 512 -hda myimage.img -cdrom path/to/disk/macosx10.0

Tiger and Leopard require USB emulation, so you'll need to add -device flags for a USB keyboard and a USB mouse. Both also seem to like a few extra -prom-env flags.

qemu-system-ppc -L pc-bios -boot d -M mac99 -cpu G4 -m 512 -hda myimage -cdrom /path/to/disk -device usb-kbd -device usb-mouse -prom-env 'auto-boot?=true' -no-reboot -prom-env 'vga-ndrv?=true' -prom-env 'boot-args=-v'

I can get PowerPC Leopard to boot, but it crashed twice during installs.

Step 4: After the installer finishes

You will end up seeing a failed boot screen after the installer finishes. This is normal. Either quit the QEMU instance or use control-c in the terminal to close it. Now that it's installed, we want to boot off the internal drive.

qemu-system-ppc -L pc-bios -boot c -M mac99 -m 512 -hda myimage.img

Mac OS 9 seems to do slightly better when adding via=pmu and specifying the graphics mode.

qemu-system-ppc -L pc-bios -boot c -M mac99,via=pmu -g 1024x768x32 -m 512 -hda os9.img

Step 5: Mounting disk images

There's not a lot to do with an OS without software. You can mount plenty of disk image formats:

qemu-system-ppc -L pc-bios -boot c -M mac99 -m 512 -hda myimage.img -cdrom path/to/disk
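The commands in this guide differ mainly in two places: -boot d with a CD image while installing, and -boot c (CD image optional) once the OS is on the disk. A tiny wrapper keeps that straight. This is only a sketch: `ppc_cmd` is a made-up name, it hard-codes the mac99 machine and 512 MB used above, it prints the command rather than running it so you can inspect it first, and it does not handle paths containing spaces.

```sh
# Build and print the qemu-system-ppc command line used in this guide.
#   install: boot from the CD image (-boot d); a CD/installer path is required
#   run:     boot from the hard disk image (-boot c); a CD path is optional
ppc_cmd() {
    mode=$1
    hda=$2
    cd=${3:-}
    case $mode in
        install) boot=d ;;
        run)     boot=c ;;
        *)
            echo "usage: ppc_cmd install|run <disk-image> [cd-image]" >&2
            return 2
            ;;
    esac
    if [ "$mode" = install ] && [ -z "$cd" ]; then
        echo "install mode needs a CD or installer image" >&2
        return 2
    fi
    cmd="qemu-system-ppc -L pc-bios -boot $boot -M mac99 -m 512 -hda $hda"
    if [ -n "$cd" ]; then
        cmd="$cmd -cdrom $cd"
    fi
    echo "$cmd"
}
```

For example, `ppc_cmd run myimage.img path/to/disk` prints the Step 5 command above, and `ppc_cmd install myimage.img path/to/disk/image` prints the Step 3 one.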

Step 6: Multi CD-ROM installs or swapping disk images

Older applications and OS installers require multiple disk images. Swapping them can be done via the console inside QEMU.

In the QEMU window, press:

- Control-Alt-2 to bring up the console

change ide1-cd0 /path/to/image

- Control-Alt-1 to bring back the GUI

Bonus round: Trying for x86-64 Windows 10

Thus far, my Windows 10 experiment has been a lot less successful. I've gotten through the installer (it's unbearably slow), but it seems to hang on booting. It looks very feasible, though. I might have better luck using the 32-bit version of Windows.

qemu-system-x86_64 -L pc-bios -boot d -m 2048 -hda myimage.img -cdrom Win10_20H2_v2_English_x64.iso

QEMU with Sound

Check my guide on QEMU screamer which emulates Mac OS 9 - OS X 10.14 with sound.

</section>

-

A Threat To Democracy Part II.5

I feel compelled yet again to post about politics after doing some weekend reading.

A Reuters photographer on the scene said he heard at least three different rioters say they wanted to find and hang Pence, who supported certifying the results of the election. - "It was supposed to be so much worse", The Atlantic

Some of the MAGA mob rioters who stormed the US Capitol smeared their own feces throughout the building and left brown 'footprints' in their wake. - "MAGA mob rioters smeared their own feces in US Capitol", Dailymail.com

There's a weird schism where reality has been politicized. There's a non-trivial number of people who believe that top Democrats run a "secret" child-trafficking ring, that liberals "hate America," that even moderates like Biden are somehow both communists and socialists, that wide-scale voter fraud was committed, that COVID-19 is a hoax, and that Obama was a secret Muslim or born abroad. These beliefs led people to storm our nation's Capitol. It's a literal shit-stain on democracy as we know it.

-

Beyerdynamic DT-770 / DT-880 / DT-990 Removable Cable Mod

I have a confession to make. I'm absolutely terrible with a soldering iron. When the cable on my 600 Ohm DT-990s went bad, I wasn't able to fix it and knew I was more likely to damage my headphones than fix them. It's been a long-standing complaint that the high-end Beyerdynamic design has a hardwired cable, whereas many other high-end makes have detachable cables.

I tried looking locally and called a few audio shops, but none did repairs. Then I discovered a guy on Etsy who specialized in a detachable cable modification. I forgot about it until December, when I was on Etsy doing the bulk of my Christmas shopping, and decided to take the $75 plunge as it'd not only fix but improve my favorite pair of headphones. I could write quite a bit about my thoughts on the DT-990s, but the fact is if you're interested in this, you already own a pair.

I also did a video version of this blog post.

I have to say, I'm genuinely impressed with the headphones. Matt of Demevalos was responsive, even during the holidays when surely he had better things to be doing, and the turnaround wasn't bad considering the massive logjam that is the USPS amid the holiday rush and defunding.

As far as changes to the headphones' quality, there's no introduced noise or degradation to the signal, though granted, my headphones had been out of commission for months. They're as good as I remember. They're the same crisp, slightly overly bright, articulate headphones with tightly coiled, deeply responsive bass.

Demevalos also does balanced cables for the DT-770s, DT-880s, and DT-990s and the 1770s/1990s. Although it's a bit spendy for correcting a design failure on Beyerdynamic's end, I have to say I highly recommend this mod.

-

A Threat To Democracy Part II

As much as I want to ditch my cynicism and write something unrelated to current events, the actions that stunned the world yesterday seemed all too predictable. There were some truly amazing photos, like the humorous, self-paradoxical woman waving a flag of herself waving a flag, or the blood-boiling traitor flag being paraded through the Capitol.

The greater irony is these actions were agitated by Donald Trump and Rudy Giuliani for months, if not years. I'm unsure how we proceed from here as we've politicized reality. Nearly as quickly, despite all the evidence, the right wing blamed Antifa and Black Lives Matter, and when the political affiliations of the arrested rioters are inevitably revealed, they'll claim either a cover-up or some deeper conspiracy. At least there's some humor to be found in the irony that their anti-mask beliefs are making it easy for them to be identified.

If you had asked me 4.5 years ago about my biggest concerns, it'd have been something like "healthcare access and reform" or "the environment". It's now been reduced to protecting democracy, hardening institutions of voting, and free and fair elections. The Democrats better not squander their chance to harden the guardrails of democracy.

I still earnestly believe we can deescalate a little, and dial down the tone over the next four years, but it won't be easy.

-

CyberPunk 2077 is now playable on a classic Mac Pro

When CyberPunk 2077 launched, it required AVX, a set of CPU instructions not found on any classic Mac Pro. Clever hackers removed the requirement for the Steam and GOG distributions. In a show of how fast things move, while I was writing this blog post, version 1.05 was released, which removes the AVX requirement from CyberPunk, effectively mooting the tutorial I had planned.

CyberPunk 2077 surprisingly runs fine on older hardware as long as you have a beefy GPU. Due to limited macOS support for GPUs, the best GPUs supported by both macOS and Windows 10 for gaming currently are the Radeon VII and 5700 XT, neither of which packs enough muscle to play at 4k with maximum settings. That said, nearly maxed 1440p is totally achievable (I only disabled motion blur as, for some reason, I find it bothers my eyes, and lowered texture filtering and volumetric shading to high). If you lower settings further, higher resolutions are available. Like most games, the limiting factor is the GPU. Users with lesser GPUs like the RX 580 should expect acceptable framerates at 1080p with reduced settings.

Step 1: Update your GPU drivers to the latest

AMD released a GPU driver update specifically for CyberPunk 2077, version 20.12.1 (or greater). It can be downloaded via the AMD control panel in Windows or via AMD's website.

If you don't, the game will likely crash after the character creation screen.

Step 2: Make sure you're running CyberPunk 1.05 or later

Next, make sure you've updated before starting. Otherwise, the game will generally crash after the prologue.

In my limited testing, the game runs perfectly fine on my Mac Pro without any (additional) glitches.

-

Life with the MacBook Air M1 from a web developer

Last week I received my MacBook Air (16 GB/1 TB). It's the first brand new computer I've bought personally in 12 years, the last being the 2008 Mac Pro. I've had a slew of work-provided 15-inch MacBook Pros, 2013/2015/2017 (current), and my main desktop is still a 12-core 2010 Mac Pro with a Radeon VII, 3 SSDs, and 96 GB of RAM, so these are going to be my comparison points. I've seen many benchmarks but less about what it's like to be a developer with one.

It's fast in very unconventional ways, resizing the resolution doesn't even flash, and it wakes instantly and unlocks fast in a way my work MacBook Pro 15 inch 2017 doesn't. It's also fanless, which is just outright incredible. It's, of course, dead silent. The last computer I had that didn't have a CPU fan was a G4.

I have to mention the keyboard. It's really ridiculous that we should have to talk about keyboards at all on a luxury brand like Apple. For years Apple had the best laptop keyboards of any make, then from 2016-2019 changed to the dreaded butterfly keyboard (which caused horror stories of individual keys failing) and added the horrible Touch Bar as an additional insult. I would have paid extra not to have the Touch Bar. The MacBook Pro has the Touch Bar (along with slightly better mics and speakers and a fan, and it is larger). The MacBook Air does not. The typing experience is much better than my 2017 MacBook Pro and reminds me of my 2013 and 2015 MacBook Pros.

Development is a bit clunky. Homebrew requires prepending everything with arch -x86_64 to use the Intel binaries, as Homebrew is barely alpha for Apple Silicon. NodeJS is Intel. NVM is Intel. Ruby via Homebrew is Intel (Ruby comes preinstalled, but if you want to use pain-free global packages, you'll be using the Homebrew version). Git is Intel. Webpack is Intel. Android Studio is Intel, but the emulator is ARM native. WebStorm is Intel. There are a few, like BBEdit and VSCode, that have dual binaries. It feels fast, but due to the number of Rosetta 2 binaries, not nearly as fast as it could. Speedy, but not so much more than my 2017. I've yet to try Docker as it was just released.

Firefox on the M1 is as fast as I've ever seen for time-to-paint and JS execution. It's really fast. It didn't even blink when I loaded up a TweenMax animation I made with a complex SVG with thousands of polygons that kicked my 2017 into leaf blower mode. The M1 absolutely shines surfing the web as it's incredibly fast. It's the fastest I've experienced. I do not say this lightly, as a significant part of my professional life has been coding web pages/web apps to render quickly.
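Going back to the arch -x86_64 prefix mentioned for Homebrew: typing it in front of every command gets old quickly, so a pair of shell functions can take the edge off. This is a rough sketch rather than a recommended setup. The names `intel` and `ibrew` are invented, the DRY_RUN switch exists only so the result can be inspected without running anything, and it assumes the Intel copy of Homebrew lives at /usr/local/bin/brew.

```sh
# Prefix a command with `arch -x86_64` so it runs under Rosetta 2.
# With DRY_RUN=1 the command is printed instead of executed.
intel() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "arch -x86_64 $*"
    else
        arch -x86_64 "$@"
    fi
}

# Assumption: the Intel Homebrew install is under /usr/local.
ibrew() {
    intel /usr/local/bin/brew "$@"
}

# Examples, run by hand on an M1:
#   ibrew install node
#   intel npm install
```

Dropping these into a shell profile keeps the Intel toolchain one short word away until native builds arrive.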

Apple Motion performance is a bit of a grab bag. It certainly does well for an ultra-light laptop, but if you're working with big ass PSDs, multi-video clips, and so on? The RAM and middling GPU performance show, whereas codec mashing is pretty impressive. The M1 with Pixelmator's ML functions smokes my MacBook Pro badly. 16 GB of RAM is still 16 GB of RAM. Rendering to RAM is still as constrained as on my MacBook Pro.

Unified Architecture

The M1 isn't just a CPU, as it includes the GPU, the neural network cores, an image signal processor (ISP), an NVMe storage controller, Thunderbolt 4 controllers, and a secure enclave coprocessor. The M1 is an SoC. The CPU itself has four high-performance Firestorm cores and four energy-efficient Icestorm cores. Rather than paraphrase, it's best to quote Wikipedia for the following:

The high-performance cores have 192 KB of L1 instruction cache and 128 KB of L1 data cache and share a 12 MB L2 cache; the energy-efficient cores have a 128 KB L1 instruction cache, 64 KB L1 data cache, and a shared 4 MB L2 cache. The Icestorm "E cluster" has a frequency of 0.6–2.064 GHz and a maximum power consumption of 1.3 W. The Firestorm "P cluster" has a frequency of 0.6–3.204 GHz and a maximum power consumption of 13.8 W - Wikipedia: Apple M1

We've come a long way in dynamic frequency scaling since the Pentium M (one of the first widely available CPUs with the ability to adjust its frequency). Dynamic frequency scaling allows a CPU to change its own clock speed based on how much stress the CPU is under in order to save power. Every Intel Mac made has this ability. The M1's CPU clock speed ranges from 600 MHz - 3.2 GHz in the high-performance cores and 600 MHz to 2.064 GHz in the low power cores. It's quite a range.

The hype about the M1's RAM being different is misrepresented. Unified memory means the GPU and CPU share the same memory, reducing the latency of caching data into RAM to then be transferred to VRAM. Both the GPU and CPU have access to the same pool, thus speeding up the process. This comes at the cost of not having a separate VRAM buffer, which can be used independently for parallel processing/render buffers, as well as the more "typical" functions like caching textures. Unified memory architecture isn't new, as game consoles have used it for decades to great benefit. It's one of the reasons why a seemingly underspecced console like the Xbox 360, with only 512 MB of RAM, was able to effortlessly produce HD graphics in 2005 below the cost of a single GeForce FX 5950 Ultra. I suspect a good deal of users overestimate their RAM usage, as memory pressure in modern macOS is far more indicative of RAM performance than memory usage. macOS since 10.9 Mavericks uses RAM compression, which the M1 architecture fully embraces. Also, modern OSes intelligently cache less-accessed memory spaces to a disk buffer. Combine the previous with the speed of NVMe SSDs, and virtual memory isn't nearly the speed hit it used to be. While I'll routinely stress my MacBook Pro 2017 with its 16 GB of RAM, it usually does pretty well with a large suite of utilities (I'd like more, of course, as it'd speed things up). In the era of M1 Macs, RAM is just as important as it ever was regardless of what MacWorld says, as it's now doubling as your VRAM too.

I can certainly see a future where none of the Macs feature upgradable RAM thanks to unified memory (unless engineering allows otherwise). This feels especially problematic at the pro level. A Mac Pro 2009 can use 128 GB of RAM, and Apple has only produced a grand total of 4 computers since then that can use more than 64 GB of RAM (the Mac Pro 2010, 2013, and 2019 and the iMac Pro). There's certainly going to be some resistance to paying upfront, especially when some edge-case users are thinking hundreds of gigabytes, if not a terabyte, of RAM. The benefits might be outweighed by the sticker shock of a machine that's performance-locked, as there will be no RAM or GPU upgrades. Whereas on the PC side of things, you'll be able to offset the cost by waiting to upgrade. Sure, it might not be as fast out of the box, but in two years, you won't need to buy a new computer to achieve modern GPU performance. This alone places Apple Silicon's speed advantage at an economic disadvantage.

The question is, "How will this calculate?" The only way this math makes sense is when buying computers that already face this issue, like laptops (where upgrade options are sparse if available at all), or if you believe Apple will be so far ahead of the curve that the issue becomes moot. Apple already drove the VFX world and a large chunk of the video world away en masse with its double fault of the tandem releases of the 2013 Mac Pro and Final Cut Pro X, and later a childish feud with Nvidia. While I doubt it'll be nearly as dramatic a shift, I can see a future where Hollywood further pivots from Apple. Hopefully, Apple will allow discrete GPUs along with its own GPU cores, as Nvidia and AMD are wildly ahead of Apple and not slowing down for it to catch up.

Back to the M1, the video output is pretty disappointing as it can only drive one external monitor. The GPU does what it needs to do and works well in video editing and codec mashing but less so elsewhere. I imagine that this is probably enough for most people, but I am not most users. I used two 4k monitors (32-inch + 43-inch) plus my 15-inch display on my MacBook Pro for work. Fortunately, I knew this limitation going in, but it is pretty pitiful considering the Intel MacBook Pro 13 can drive two 4k Monitors at 60 Hz, and the 2019 MacBook Pro can do four 4k displays at 60 Hz. The GPU is pretty weak sauce for gaming. It's better than the Intel integrated GPUs, which isn't exactly something to brag about.

A few Mac publications gleefully posted that the M1's GPU performance is that of an Nvidia GeForce GTX 1050 Ti, an ultra-budget GPU released in 2016 with an MSRP of $139 at launch. They also compare it to the RX 560 and mention that it requires 75 W. That's true for the desktop version, but it has a mobile version that does not draw 75 watts. It is found in the MacBook Pro 2017s and can drive three external monitors, and incidentally will produce higher framerates in games in Windows than the M1 can in macOS. All that nerdage aside, the 13-inch Apple laptops have never had dedicated GPUs, so this is a welcome upgrade but not an eyebrow-raising one, and it is strangely limited with external displays.

Battery revolution?

That 16-hour battery life? Ha, no. Not when doing development. It seems like I could make it longer than the 2-3 hours my 2017 does, but more like 4-5 hours. I imagine when we see more M1 binaries, this will improve, but the constant disk swapping for memory is always going to be a battery cost. Right now, a bulk of the toolchain is Intel. I assume this is a bigger battery hit. I also noticed my battery being zapped when trying SourceTree. Perhaps this is a temporary state thanks to the x86 emulation, but ARM's supposed battery magic seems less exciting if your eyes have wandered to the PC market as of late. PC makers are routinely advertising 15-hour batteries at lower price points than the MacBook Air and, surprisingly, at similar weights. I cannot attest to how accurate they are, but it is surprising, combing Costco, to see solid laptops at $800 with 512 GB NVMe SSDs and 16 GB of RAM with late-gen Intel or the newly minted AMD chipsets, all with the battery life associated with the M1, at nearly half the price of the M1 with the same RAM/storage. ARM isn't going to be the extreme battery life extension that some had hoped, but the M1 firmly puts it at the top of the pack in laptops.

Yes, you can still install whatever apps you like

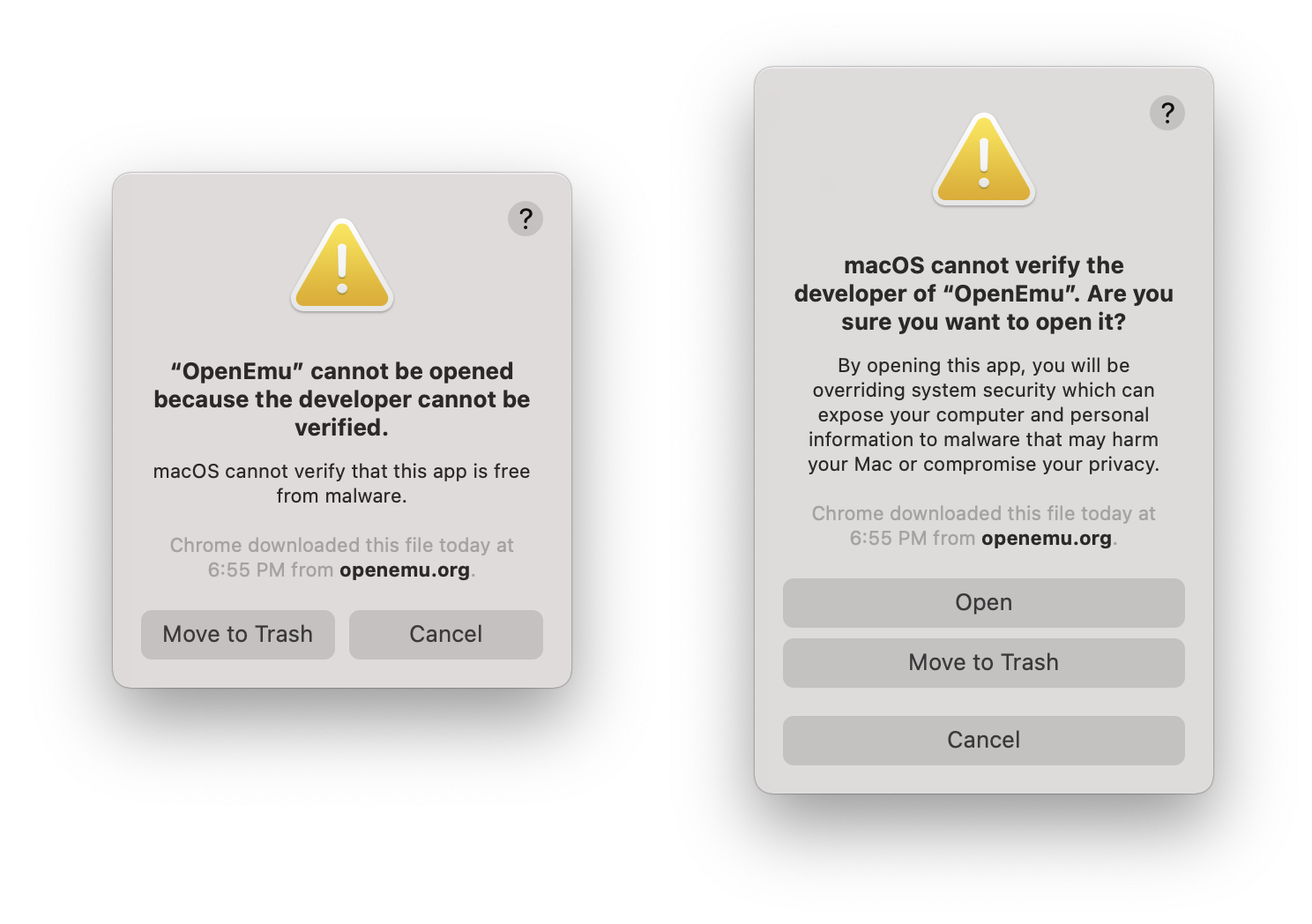

There's a lot of misinformation about the M1 and Apple lock boxing it. I can assure you it's not what you think it is. See the screenshot below.

Left: the warning users will receive without right-clicking open. Right: warning message when right-clicking/option clicking bypass

Not condoning it, but if you really fear the inability to run illicit software, yes, you can do that, even Apple binaries. I've also run unsigned x86 code. SourceTree and OpenEmu both gave me spooky warnings, but I was able to run them after right-clicking. The fear-mongering is just that.

So how does it compare to my Mac Pro? Mostly faster with just a few asterisks. Even my 2017 MacBook Pro is often quicker in many tasks since a lot of web development (javascript) is so single core tied. Anything Node uses a single core per instance. The Mac Pro, though, still is my preferred box, but as my first new computer purchased for myself (I've had a flurry of work 15 inch MacBook Pros: 2013, 2015, 2017) since my 2008 Mac Pro, it's a damn fine computer.

iOS Applications and macOS

Running iOS apps is next to worthless. I thought it'd be nice to have a dedicated HBO Max app, but you cannot scale the app windows. When I tried to download Slack just to compare iOS vs. the shitty Electron app, I couldn't find it. It turns out it's listed as incompatible. Same went for Gmail. You need to pull the IPA off your iPhone or iPad with a utility like iMazing. Annoyingly, I do not have an iPad, so I cannot compare Slack's experience via Electron vs. native. The use cases seem pretty narrow, but perhaps with some hardware that is iOS-only via Bluetooth, it'd work via the laptop. Apple's love and affection often seem too focused on the iPad Pro. It's a device that's not terribly compelling to most professionals, as everything in its workflow is a compromise, and it functions as a spiffy niche device. However, the possibility of dual-booting to macOS, or at least entering a "Finder" app when a keyboard with a trackpad is plugged in, means Apple could, in theory, make the iPad Pro a Mac. I'd be very surprised if Apple isn't playing with this idea. To date, I haven't seen this intuitive leap made by anyone other than me, but I cannot be the only one. I also can see the other side of the coin, where Apple fears cannibalizing the near-non-existent pro app market on the iPad.

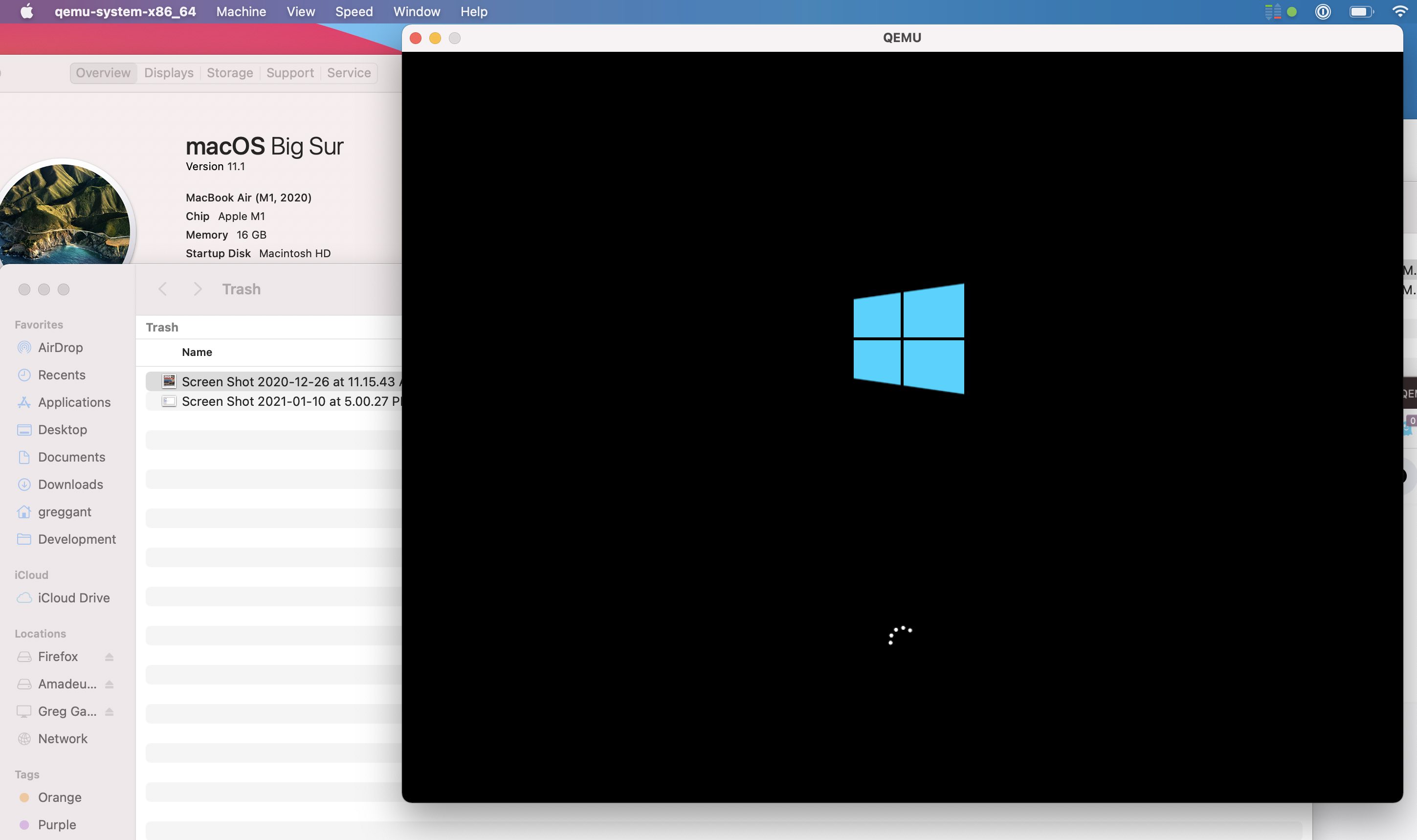

What about Windows?

Windows does have an ARM build, with a Rosetta-like translation layer that currently only supports Win32 binaries (not 64-bit). That will certainly change as Microsoft has the technical chops. Apple's official policy has been "Really up to Microsoft." I highly recommend reading the linked article. Thus far, there isn't a way to run Windows natively, but you can run Windows virtually via QEMU. The performance is impressive.

I can't say if Redmond will move to make Windows ARM run on the Apple Silicon, but Apple doesn't seem like it'll be adversarial. There's still a question if Windows will include proper drivers for the ML engine (or if Apple would be game to at least provide support) and GPU but it seems promising. Fingers crossed, we'll see a return to dual-booting Macs or at least ultra-high quality offerings from either VMware or Parallels.

Final Thoughts

For most people, this is the laptop you want unless you're a professional. I would not have bought this as my primary work computer due to the software hurdles of being an early adopter and some of the hardware limitations (lack of video out beyond a single display, and only 16 GB of RAM). I don't regret it, as I was heavily considering buying a MacBook Air for travel last year when we could do such things, but we're gonna see some monstrous CPU performance when the M1X (or M2) hits the shelves in the MacBook 16 inch. That said, there's a bit of an asterisk. It's unclear if we'll see nearly the same year-over-year gains once Apple ratchets up its core count. Right now, we see the whiplash of a new architecture. Apple's year-over-year performance gains on the iPhone have been breakneck, so there's no reason to assume the Macs won't be the same. I expect Apple to be the leader in portable performance, but desktops? Not really. AMD has Zen 3 due out in 2021 with bold claims of a 50% improvement in performance per watt, and then in early 2022, Zen 4, again boasting another 50% improvement in performance per watt on a 5nm die, with PCIe 5.0 bringing a 16x PCIe slot to 64 gigabytes per second (512 Gbps). AMD already has, today in 2020, 64-core, 128-thread Epyc CPUs. Brute force has its merits too.

If I sound a bit negative, over the years I've learned that my deep-seated skepticism generally doubles as the closest thing I have to intuition. The Apple MacBook Air M1 is impressive, especially in the class of laptops without dedicated discrete GPUs. Its single-core performance is so good (as is Rosetta 2) that running x86 code is often faster than on Intel Macs, and native applications are even faster. macOS feels less pokey on an M1. Thanks to years of optimizations in the iOS arena for things like JavaScript, Apple Silicon is incredibly fast. In some ways, it's the fastest computer Apple makes, which is mind-boggling. In others? Not nearly as much so. Most pros probably should hold off, as too many tools are x86, or using less-stable nightly builds, or, worse, not working (audio apps are hit especially hard). Everyday users, though, will find the M1 incredibly zippy, especially if coming from several-year-old computers.

Apple's willingness to throw caution to the wind and continually kill long-term support is the gift and the curse. Windows 10 can run many Windows 98 applications in an emulation layer. Windows 10 only recently stopped distributing a 32-bit version of its OS. Apple won't even support 32-bit binaries. MS's support now ranges in the decades for a considerable amount of software and hardware. In some facets, MS makes Apple look silly. The supposedly bloated Windows 10 is faster than macOS in many tasks (macOS is usually the bottom rung when compared against Windows or Linux), but it's also marred by a maddening UI that has two control panels, an absurdity when it comes to *nix flows (but hey, it has Ubuntu that can run as a virtualized kernel). Meanwhile, Apple has gone 68k -> PPC -> x86 -> ARM with the Mac platform, and through two OSes (as Mac OS 7-9 and OS X/macOS are effectively two separate operating systems). Apple isn't above criticism, but betting against Apple is foolish.

Due to the architecture of the current M1s, I think we'll see computers with real quirks. It's quite possible we'll see 27-inch 5k iMacs capable of editing 8k video but unable to play games maxed out even at 2560 x 1440 or connect to more than two external displays, let alone with the latest features like ray tracing. Typical GPU tasks like codec mashing and machine learning/TensorFlow will fly on Apple Silicon, but once it leaves the happy path for tasks like digital noise reduction (DNR), gaming, and 3D rendering, it will be vastly behind AMD/Nvidia. ARM doesn't implicitly mean non-modular, SoC only, as we've already seen. For the portable class of computing, Apple Silicon looks like it'll be unmatched (and expensive). Brute force vs. efficiency will be the story of x86 versus Apple Silicon versus ARM, and I suspect there won't always be a clear winner.

Welcome to the next decade of computing.

-

Blog Upgrade

This is my first post from the first new computer, a MacBook Air M1, that I have bought personally since 2008, when I bought a Mac Pro. I've owned a flurry of work laptops, 15-inch MacBook Pros from 2013, 2015, and currently a 2017, and I bought a 2010 Mac Pro a few years back. I haven't had my own personal laptop since 2013.

I finally updated to Jekyll 4, which was annoying as I had to upgrade pagination. I think I've finally hit a place where I've outgrown Jekyll for blogging software. There are some new static site generators that look a bit nicer, as I still write my posts by hand in HTML as if it were 1994. I can write HTML in my sleep (and probably have), but it detracts from writing. If there's any general weirdness on the blog, it's due to the upgrade, even if I'm still content with my hyper-minimalist presentation.

Update: what a pain in the ass Jekyll is. The old parser for Markdown isn't supported, but the majority of my posts are actually HTML. My previous parser literally spit out whatever was HTML content, whereas this one processes the HTML. This doesn't do anything besides cause a lot of problems if you have any HTML errors, like a missing " or an improperly closed tag. Thus, a few pages were borked, requiring me to go through and try to find the HTML misrenders. I think I got almost all of them, but I think my days with Jekyll are coming to an end. I started this blog on Tumblr (mistake) and moved to something I could control in 2016, as it was clear that not owning the platform I was on was a negative, and I completely misunderstood Tumblr by actually blogging on it. In hindsight, it was absolutely the correct move, as a closed platform meant I was subject to the whims of the owners, and even better, the move has meant I've had much better SEO even if my SEO strategy is entirely anti-SEO beyond accessibility.

I've never gotten my FTP process to automate, so I still use FileZilla, and I write in HTML. Newer frameworks exist. Right now I'm leaning towards a React-based system that renders a static site, as long as it can be used even when JS is disabled and adds very little in payload (my blog is insanely lightweight, with a payload of 11k of CSS, 90k of JS (mostly jQuery, time to move on there), and roughly 10k of HTML for many posts).

-

iPhone 12 Pro & iOS 14 reactions

In the past, I've recorded my thoughts when I've purchased a new phone with my iPhone XS & iOS 12 impressions and Initial Reactions to the iPhone 7. It's interesting to see any predictions I make either come true (3rd camera lens) or burn in flames (force touch).

Since there are far better reviews on the web of the iPhone than what you'll find here, I've instead opted to record just my initial reactions after 2+ weeks.

- Getting my phone past first boot took roughly a half hour, but getting all my applications and photo previews took pretty much the entire day. Ugh.

- I still miss the headphone jack.

- At first, MagSafe seemed cool, but seeing the real-world applications, I've yet to purchase a single accessory that uses it. I don't see myself doing that any time soon.

- The Lightning port needs to die in this USB-C world. It served its purpose as a big usability upgrade over the other USB formats of its time, but USB-C solves the same problem and is widely used by everyone, including Apple. It feels more and more like a cash grab.

- The iPhone 12 Pro needs a mini version. I'd love that. The iPhone 5 remains the greatest iPhone design for size/weight to screen.

- The iPhone 12's hard edges are so much better. It's a return to the iPhone 4 and 5. I imagine we'd seesaw between round and hard edges in 5-year cycles until the iPhone's death as a way to shift the look and feel between generations.

- Apple's premium phones feel fragile still. It's less so than the XS, but a case feels required.

- There's a noticeable increase in sharpness that I didn't expect over the iPhone XS. Photos look as good as ever but still not as sharp as any DSLR with even mediocre glass. It's all about the physical surface area of the sensor to capture those photons.

- The iPhone 12 Pro cameras' big improvements over the 11 seem to come from software, almost in spite of the hardware.

- I didn't expect to notice the speed increase over the XS, as even with my iPhone 7, the performance had hit "fast enough." A lot of it appears to be the 6 GB of RAM. Apps rarely reload. I wish they'd just ripped the bandaid off and gone with 8 GB for a bit more future proofing, but I suppose they need something to upgrade in 2 years. While I'll certainly be wrong, in the iPhone's constricted form factor, I doubt we'll be asking for 16 GB of RAM in 2022.

- The CPU upgrade shows in exporting vids. It's noticeably faster than my XS.

- The wide-angle is pretty useful. I wish we'd finally get a quad-lens setup with 0.5x/1x/2x/3x, as that'd be 13mm, 26mm, 52mm, and 78mm, or perhaps 0.5x/1x/2.5x/5x for 13mm, 26mm, 65mm, and 130mm, as that'd cover the bases for standard photography. Reviewers complaining about the lack of a zoom increase are missing the fact that a tiny phone with a huge optical zoom stretches the limits of handheld photography. A 2x is far more useful, as 52mm is close to the standby 50mm considered the "human" lens (although eyes are not cameras). A 78mm would be near the photojournalist's 85mm "portrait" lens, which does wonders outdoors with its compressed Z axis.

- Night mode works fairly well but doesn't work miracles. It's a massive improvement over my XS and bound to be improved upon each generation (mostly through software).
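
The focal-length math in the lens wish list is just a multiplier applied to the main lens. Here's a minimal sketch, assuming the 1x lens is the 26mm (35mm-equivalent) figure used above; the function name is my own invention for illustration. Note that 78mm works out to a 3x multiplier.

```python
BASE_FOCAL_MM = 26  # assumed 35mm-equivalent focal length of the 1x lens


def equivalent_focal_lengths(multipliers, base_mm=BASE_FOCAL_MM):
    """Convert zoom multipliers (0.5x, 2x, ...) into equivalent focal lengths in mm."""
    return [round(m * base_mm) for m in multipliers]


print(equivalent_focal_lengths([0.5, 1, 2, 3]))    # [13, 26, 52, 78]
print(equivalent_focal_lengths([0.5, 1, 2.5, 5]))  # [13, 26, 65, 130]
```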

iOS 14

- iOS 14 was problematic for health data and required a reinstall on the phone to work properly with my XS. Stupid.

- Widgets are overhyped, but users love them apparently. I'm not big into customizing my phone. I run it in dark mode and reduced motion to conserve battery life, and I have the same lock screen as the iPhone 5 and the same background as my iPhone 7. Widgets are best used on the widgets screen. Polluting my home screen doesn't do much for me. There's not a lot of data I need every single time I look at my phone. On the widgets screen, I've added the battery life.

- I wanted smart folders but this implementation? It sucks.

- App organization is still god awful. Folders are artificially small. They do not have custom icons, and they can't do folders in folders. Also, why can't we vertically scroll on-screen like Android? iOS 14 failed to deliver much meaningful change.

- Am I alone in thinking that Memoji is tacky? I'm probably an island of one here.

- Small Siri is nice, but I liked seeing what my phone interpreted my voice commands as in real time instead of waiting and seeing.

- Picture-in-picture mode is probably great on an iPad, but it doesn't feel very meaningful on my phone.

- Translate looks cool, but I can't travel now because, y'know...

- Apple sign-on is great.

Things I was wrong about

- FaceID: It's overall an improvement over Touch ID and better than I thought it'd be... but now we all wear masks.

- I wasn't willing to ditch the larger phone format with dual cameras. Now I want three. If they add a fourth, I'll want that too.

- I regretted not getting 512 GB of storage.

-

The day after

Pictured: friendly debate with people from my home town. I hearted this post. This is gonna be a really awkward Thanksgiving for a lot of Americans and I'm thankful my immediate family is who they are.

This made me chuckle, as my heart is filled mostly with irony, but we have 70 million voters who potentially share this world view. Even thinking fractionally, that's a frighteningly large number of people who are "angry, spoiled, racially resentful, aggrieved, and willing to die rather than ever admit that they were wrong". I can't say I was above gloating, just a little, after repeated attacks on the democratic process from a failed real estate agent $1,000,000,000 in debt. My team won (better than it felt), but we have a lot of work to do.

I don't hold any greater hope that we can fix everything or convert many to our egalitarian outlook: a worldview that places more value on assisting those in need, the environment, science and education. Just maybe we can deescalate a little, and dial down the tone over the next four years. Outside of the hottest-take-wins Twitterati warriors of this world, I think the vast majority of people, here and abroad, would appreciate a little more civility... and a little less conspiracy.

-

All .dev URLs return Unknown Host Error in macOS (OS X)

So... I discovered a strange problem: any website using a .dev suffix would give me an unknown host error. First I figured it was my DNS, so I tried swapping servers, clearing the DNS cache, and using my VPN, none of which worked. Pinging any .dev URL returned absurdly low response times, suggesting that something, somewhere, was routing all requests to localhost/127.0.0.1.

Checking my /etc/hosts was a bust, as were Apache's vhosts. After about a half hour of reading I found the following thread, Pinging test.dev after Laravel Valet install returns "Unknown Host". Posters mentioned the dnsmasq utility. To fix the problem, users used brew (the OS X package manager) to reinstall/restart it. Being semi-familiar with brew, I ran brew's list command and found that I indeed had it installed. I tried the prescribed method, which didn't work, but I felt like I was on the right path.

Not giving up, I dug up the dnsmasq.conf file located in /usr/local/etc and noticed a single entry, address=/.dev/127.0.0.1. I deleted it and after a reboot, everything was back to normal.
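
If you'd rather not eyeball the config, the check can be scripted. This is only a rough sketch, assuming the offending line follows dnsmasq's address=/domain/ip shape like the one I found; the function name and the sample text are made up for illustration, and you'd feed it the contents of your own dnsmasq.conf.

```python
def find_loopback_wildcards(conf_text):
    """Return (line_number, domain) for each address=/domain/127.x.x.x entry."""
    hits = []
    for number, raw in enumerate(conf_text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # ignore comments and whitespace
        if not line.startswith("address="):
            continue
        parts = line[len("address="):].split("/")
        # Expected shape after splitting: ['', '.dev', '127.0.0.1']
        if len(parts) >= 3 and parts[0] == "" and parts[-1].startswith("127."):
            for domain in parts[1:-1]:
                hits.append((number, domain))
    return hits


# Hypothetical config contents, mirroring the single entry described above.
sample = "# local dev\naddress=/.dev/127.0.0.1\nlisten-address=127.0.0.1\n"
print(find_loopback_wildcards(sample))  # [(2, '.dev')]
```

Any domain it reports is being answered locally by dnsmasq instead of by real DNS, which is exactly the symptom I was chasing.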

-

CME Xkey 37 LE Review

Earlier this year my old reliable first edition Korg MicroKey 37 died, after 7 years of service. I've bought multiple MIDI keyboards, including an 88-key hammer-action weighted keyboard which I quickly discovered was far too big for my use case. Since then, I've favored the 37-key for my occasional music production, mostly for the size and for the selection, as there happen to be a few more options at the 37 size than at the more practical 49 keys.

With 2020 being what it is, I've revisited my audio studio setup and decided to buckle down and actually learn to play the keyboard. I had bought the current microKey only to discover... I hate slim-width keys while trying to actually play anything.

The search for the ideal 37 key

Searching for high-end small keyboards is a bit of an oxymoron. Here are my observations:

- The most expensive keyboards in the 49-and-below form factor generally pack in "gee-whiz" features: pads, knobs and sliders. These are nice but drastically get away from my "small-as-possible" ideal. I also have an Ableton Push 2 and a NI Maschine MK II, which are far more capable in the pad/knob department.

- Weighted/hammer-action keyboards simply do not exist below 61 keys. There are a few 49-key semi-weighted keyboards like the Akai MPK249, which falls into the "gee-whiz" style and certainly isn't small. 37-key semi-weighted keyboards are pretty much nonexistent.

- Most of the keyboards designed for travel use slim keys.

This meant I had to reset my expectations. Once I did, I zeroed in on the CME Xkey 37.

Low Travel / High quality

The Xkey is its own beast in a number of ways. First off, it has an aluminum chassis, which immediately sets it apart. After years of cheap MIDI keyboards, it doesn't feel frail but rather substantial. Next up is the lack of a standard pitch-bend/mod wheel. Instead, they're replaced with touch-sensitive pads. Also, it lacks a sustain pedal input, instead featuring a sustain button, which is a mild disappointment but not a fail like the original Korg MicroKey 37, which did not feature a sustain pedal at all. All of these choices are in the name of space saving, as mod wheels and pitch bends take up space, as does the 1/4 inch cable jack required for a sustain pedal.

Lastly, there are the keys... The big pivot is that the Xkey's keys are low travel, akin to a laptop. Pressing them hard results in the "clack" more associated with an alphanumeric keyboard than a musical keyboard. They're still velocity sensitive and, surprisingly, feature polyphonic aftertouch. Keyboards that feature aftertouch continue to record the pressure of a key press, but usually as a single global value. Polyphonic aftertouch records the pressure per key.


-

Drupal 8 Upgrade Fails with Fatal error: ClassFinderInterface not found

Upgrades for Drupal 8 can sometimes go south rather badly and result in an error such as this:

Fatal error: Interface 'Doctrine\Common\Reflection\ClassFinderInterface' not found in /var/www/html/core/lib/Drupal/Component/Annotation/Reflection/MockFileFinder.php on line 14

I'm not a PHP guy, so it took a while for me to unearth the cause, as the documentation is thin and the solutions vague. Googling it will suggest that the composer version is to blame, but adding the following to composer.json probably won't work; it didn't for me:

"doctrine/common":">2.8"

I went on a wild multi-day adventure to solve this, and the fix is actually pretty simple, as it has to do with composer, the dependency manager for PHP. Rather than recount my frustrations with updating composer in my docker setup, I'll skip to the goods.

So here's the fix:

Note: If using docker, you'll need to docker exec bash into the container running PHP.

- Merge in the Drupal updates from the Drupal repository

- Delete the /vendor directory and the composer.lock file

- Run composer self-update

- Optional: add "doctrine/common":">2.8" to your composer.json. This may/may not be required

- Run composer update to update all of Drupal's dependencies.
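
The delete step is the one that's easy to fumble by hand, so here's a rough sketch of it as a small script. It assumes the project root holds the vendor directory and composer.lock as described above; the function name and the command list are hypothetical and purely for illustration. It only clears the stale state, and the composer commands still need to be run afterwards.

```python
import shutil
from pathlib import Path


def reset_composer_state(project_dir):
    """Delete vendor/ and composer.lock under project_dir; return what was removed."""
    root = Path(project_dir)
    removed = []
    vendor = root / "vendor"
    if vendor.is_dir():
        shutil.rmtree(vendor)
        removed.append("vendor")
    lock = root / "composer.lock"
    if lock.is_file():
        lock.unlink()
        removed.append("composer.lock")
    return removed


# Commands from the steps above, to run in the project root afterwards.
FOLLOW_UP_COMMANDS = [
    ["composer", "self-update"],
    ["composer", "update"],
]
```

Calling reset_composer_state on the Drupal root (inside the PHP container if you're using docker) returns a list of what it actually deleted, so running it twice is harmless.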

Troubleshooting

Problem

Running composer update results in the long error message:

[ErrorException] "continue" targeting switch is equivalent to "break". Did you mean to use "continue 2"?

or it results in:

Fatal error: Uncaught Error: Class ‘Drupal\Composer\Plugin\Scaffold\Operations\AbstractOperation’ not found in /var/www/html/vendor/drupal/core-composer-scaffold/Operations/ReplaceOp.php:15

Solution

Delete the /vendor and composer.lock