Life with the MacBook Air M1 from a web developer

Last week I received my MacBook Air (16 GB/1 TB). It's the first brand-new computer I've bought personally in 12 years, the last being the 2008 Mac Pro. I've had a slew of work-provided 15-inch MacBook Pros (2013, 2015, and my current 2017), and my main desktop is still a 12-core 2010 Mac Pro with a Radeon VII, three SSDs, and 96 GB of RAM, so these are going to be my comparison points. I've seen many benchmarks but far less about what it's like to be a developer with one.

It's fast in very unconventional ways: changing the resolution doesn't even flash, and it wakes instantly and unlocks fast in a way my work 15-inch 2017 MacBook Pro doesn't. It's also fanless, which is just outright incredible, and so, of course, it's dead silent. The last computer I had that didn't have a CPU fan was a G4.

I have to mention the keyboard. It's really ridiculous that we should have to talk about keyboards at all with a luxury brand like Apple. For years Apple had the best laptop keyboards of any make, then from 2016 to 2019 it switched to the dreaded butterfly keyboard (which caused horror stories of individual keys failing) and added the horrible Touch Bar as an additional insult. I would have paid extra not to have the Touch Bar. The MacBook Pro has the Touch Bar (along with slightly better mics and speakers, a fan, and a larger size). The MacBook Air does not. The typing experience is much better than on my 2017 MacBook Pro and reminds me of my 2013 and 2015 MacBook Pros.

Development is a bit clunky. Homebrew requires prepending everything with arch -x86_64 to use the Intel binaries, as Homebrew is barely alpha for Apple Silicon. Node.js is Intel. NVM is Intel. Ruby via Homebrew is Intel (Ruby comes preinstalled, but if you want pain-free global packages, you'll be using the Homebrew version). Git is Intel. Webpack is Intel. Android Studio is Intel, but the emulator is ARM native. WebStorm is Intel. There are a few, like BBEdit and VS Code, that have dual binaries. It feels fast, but with this many binaries running through Rosetta 2, not nearly as fast as it could be: speedy, but not much more so than my 2017. I've yet to try Docker as it was just released.
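For what it's worth, here is a minimal sketch of how that arch -x86_64 prefixing could be wrapped in a script so you don't have to remember it. The helper names `with_rosetta` and `as_shell` are made up for illustration, and it assumes a natively running Python reports `arm64` from `platform.machine()` on Apple Silicon (under Rosetta it reports `x86_64`, in which case no prefix is needed).

```python
import platform
import shlex


def with_rosetta(cmd, machine=None, system=None):
    """Prefix cmd with `arch -x86_64` when running natively on an Apple Silicon Mac.

    `machine` and `system` default to what the current interpreter reports;
    they are parameters only so the logic can be exercised on any machine.
    """
    machine = machine or platform.machine()
    system = system or platform.system()
    if system == "Darwin" and machine == "arm64":
        # Native ARM process on macOS: ask `arch` to launch the Intel slice.
        return ["arch", "-x86_64", *cmd]
    # Intel Mac, Rosetta-translated process, or not macOS: run as-is.
    return list(cmd)


def as_shell(cmd):
    """Render an argument list as a copy-pasteable shell command."""
    return shlex.join(cmd)
```

Something like `as_shell(with_rosetta(["brew", "install", "node"]))` gives you the command to paste, or you can hand the list straight to `subprocess.run`. Once Homebrew and friends ship native builds, the helper simply becomes unnecessary.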

Firefox on the M1 is as fast as I've ever seen in time-to-paint and JS execution. It didn't even blink when I loaded up a TweenMax animation I made with a complex SVG containing thousands of polygons, one that kicked my 2017 into leaf-blower mode. The M1 absolutely shines surfing the web. It's the fastest I've experienced. I do not say this lightly, as a significant part of my professional life has been spent coding web pages/web apps to render quickly.

Apple Motion performance is a bit of a grab bag. It certainly does well for an ultra-light laptop, but if you're working with big-ass PSDs, multiple video clips, and so on? The RAM and middling GPU performance show, whereas codec mashing is pretty impressive. The M1 with Pixelmator's ML functions smokes my MacBook Pro badly. Still, 16 GB of RAM is 16 GB of RAM: rendering to RAM is just as constrained as on my MacBook Pro.

Unified Architecture

The M1 isn't just a CPU: it also includes the GPU, the neural network cores, an image signal processor (ISP), an NVMe storage controller, Thunderbolt 4 controllers, and a Secure Enclave coprocessor. The M1 is an SoC. The CPU itself has four high-performance Firestorm cores and four energy-efficient Icestorm cores. Rather than paraphrase, it's best to quote Wikipedia for the following:

"The high-performance cores have 192 KB of L1 instruction cache and 128 KB of L1 data cache and share a 12 MB L2 cache; the energy-efficient cores have a 128 KB L1 instruction cache, 64 KB L1 data cache, and a shared 4 MB L2 cache. The Icestorm "E cluster" has a frequency of 0.6–2.064 GHz and a maximum power consumption of 1.3 W. The Firestorm "P cluster" has a frequency of 0.6–3.204 GHz and a maximum power consumption of 13.8 W." (Wikipedia: Apple M1)

We've come a long way in dynamic frequency scaling since the Pentium M (one of the first widely available CPUs with the ability to adjust its frequency). Dynamic frequency scaling lets a CPU change its own clock speed based on how much load it is under in order to save power. Every Intel Mac ever made has this ability. The M1's CPU clock speed ranges from 600 MHz to 3.204 GHz in the high-performance cores and from 600 MHz to 2.064 GHz in the low-power cores. It's quite a range.
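To put "quite a range" into numbers, here is a quick back-of-the-envelope sketch using only the Wikipedia figures quoted above. The dictionary layout and function names are mine, not anything Apple publishes, and the power figures are per-cluster maximums, so treat the comparison as rough.

```python
# Clock and power figures as quoted from Wikipedia's Apple M1 article.
CLUSTERS = {
    "Firestorm (P)": {"min_ghz": 0.6, "max_ghz": 3.204, "max_watts": 13.8},
    "Icestorm (E)": {"min_ghz": 0.6, "max_ghz": 2.064, "max_watts": 1.3},
}


def scaling_ratio(cluster):
    """How many times faster the top clock is than the idle clock."""
    return cluster["max_ghz"] / cluster["min_ghz"]


def power_ratio(clusters=CLUSTERS):
    """Peak power of the P cluster relative to the E cluster."""
    return clusters["Firestorm (P)"]["max_watts"] / clusters["Icestorm (E)"]["max_watts"]


def summarize(clusters=CLUSTERS):
    """One human-readable line per cluster."""
    return [
        f"{name}: {scaling_ratio(c):.2f}x clock range, up to {c['max_watts']} W"
        for name, c in clusters.items()
    ]
```

By these numbers the performance cores can scale their clock a bit over five-fold, and the performance cluster at full tilt draws roughly ten times what the efficiency cluster does, which is the whole point of having both.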

The hype train about RAM somehow being different on the M1 misrepresents things. Unified memory means the GPU and CPU share the same memory, which removes the latency of staging data in RAM and then transferring it to VRAM. Both the GPU and CPU have access to the same pool, which speeds up the process. This comes at the cost of not having a separate VRAM buffer, which can be used independently for parallel processing/render buffers, as well as for more "typical" functions like caching textures. Unified memory architecture isn't new, as game consoles have used it to great benefit for decades. It's one of the reasons why a seemingly underspecced console like the Xbox 360, with only 512 MB of RAM, was able to effortlessly produce HD graphics in 2005 for less than the cost of a single GeForce FX 5950 Ultra.

I suspect a good deal of users overestimate their RAM needs, as memory pressure in modern macOS is far more indicative of RAM performance than raw memory usage. macOS has used RAM compression since 10.9 Mavericks, which the M1 architecture fully embraces. Also, modern OSes intelligently page less-accessed memory out to disk. Combine that with the speed of NVMe SSDs, and virtual memory isn't nearly the speed hit it used to be. While I routinely stress my 2017 MacBook Pro and its 16 GB of RAM, it usually does pretty well with a large suite of utilities (I'd like more, of course, as it'd speed things up). In the era of M1 Macs, RAM is just as important as it ever was, regardless of what Macworld says, as it's now doubling as your VRAM too.
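If you want to see how much the compressor is actually doing on your own machine rather than take my word for it, here is a rough sketch that parses the text output of macOS's `vm_stat` command. The function names are hypothetical, and it assumes the "Pages stored in compressor" and "Pages occupied by compressor" labels that recent macOS versions print; if your version words them differently, the lookups fall back to zero.

```python
import re

GIB = 2 ** 30


def parse_vm_stat(text):
    """Parse vm_stat text into (page_size_bytes, {label: page_count})."""
    size_match = re.search(r"page size of (\d+) bytes", text)
    if size_match is None:
        raise ValueError("no page size found; is this vm_stat output?")
    page_size = int(size_match.group(1))
    counters = {}
    for line in text.splitlines():
        # Lines look like `Pages free:      12345.` (some labels are quoted).
        m = re.match(r'^"?(.+?)"?:\s+(\d+)\.?\s*$', line)
        if m:
            counters[m.group(1)] = int(m.group(2))
    return page_size, counters


def compressor_summary(text):
    """Estimate how much memory the compressor holds and how well it packs it."""
    page_size, counters = parse_vm_stat(text)
    stored = counters.get("Pages stored in compressor", 0)      # uncompressed size
    occupied = counters.get("Pages occupied by compressor", 0)  # physical RAM used
    return {
        "stored_gib": stored * page_size / GIB,
        "occupied_gib": occupied * page_size / GIB,
        "ratio": stored / occupied if occupied else 0.0,
    }
```

On a Mac you would feed it something like `subprocess.run(["vm_stat"], capture_output=True, text=True).stdout`. A high ratio with little swapping is the "memory pressure is fine" case; a large stored figure plus heavy swap activity is when 16 GB starts to hurt.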

I can certainly see a future where none of the Macs feature upgradable RAM thanks to unified memory (unless engineering allows otherwise). This feels especially problematic at the pro level: a Mac Pro 2009 can use 128 GB of RAM, and Apple has only produced a grand total of four computers since then that can use more than 64 GB of RAM (the Mac Pro 2010, 2013, and 2019, and the iMac Pro). There's certainly going to be some resistance to paying upfront, especially when some edge-case users are thinking of hundreds of gigabytes, if not a terabyte, of RAM. The benefits might be outweighed by the sticker shock of a machine that's performance-locked, as there will be no RAM or GPU upgrades. On the PC side of things, by contrast, you'll be able to offset the cost by waiting to upgrade. Sure, it might not be as fast out of the box, but in two years, you won't need to buy a new computer to achieve modern GPU performance. This alone places Apple Silicon, for all its speed advantage, at an economic disadvantage.

The question is, "How will this calculate?" The only way this math makes sense is when buying computers that already face this issue, like laptops (where upgrade options are sparse if available at all), or if you believe Apple will be so far ahead of the curve that the issue becomes moot. Apple already drove VFX and a large chunk of the video world away en masse with its double fault of the tandem releases of the 2013 Mac Pro and Final Cut Pro X, and later a childish feud with Nvidia. While I doubt it'll be nearly as dramatic a shift, I can see a future where Hollywood further pivots away from Apple. Hopefully, Apple will allow discrete GPUs alongside its own GPU cores, as Nvidia and AMD are wildly ahead of Apple and not slowing down for it to catch up.

Back to the M1: the video output is pretty disappointing, as it can only drive one external monitor. The GPU does what it needs to do and works well in video editing and codec mashing but less so elsewhere. I imagine that this is probably enough for most people, but I am not most users. I used two 4K monitors (32-inch + 43-inch) plus the 15-inch display on my MacBook Pro for work. Fortunately, I knew about this limitation going in, but it is pretty pitiful considering the Intel 13-inch MacBook Pro can drive two 4K monitors at 60 Hz, and the 2019 MacBook Pro can do four 4K displays at 60 Hz. The GPU is pretty weak sauce for gaming. It's better than the Intel integrated GPUs, which isn't exactly something to brag about.

A few Mac publications gleefully posted that the M1's GPU performance matches that of an Nvidia GeForce GTX 1050 Ti, a budget GPU released in 2016 with an MSRP of $139 at launch. They also compare it to the RX 560 and mention that it requires 75 W. That's true for the desktop version of the RX 560, but it has a mobile version that does not draw 75 watts. That one is found in the 2017 MacBook Pros, can drive three external monitors, and incidentally will produce higher framerates in games on Windows than the M1 can on macOS. All that nerdage aside, the 13-inch Apple laptops have never had dedicated GPUs, so this is a welcome upgrade, but not an eyebrow-raising one, and it's strangely limited with external displays.

Battery revolution?

That 16-hour battery life? Ha, no. Not when doing development. It seems like I can get more than the 2-3 hours my 2017 manages, but it's more like 4-5 hours. I imagine this will improve when we see more M1 binaries, but the constant disk swapping for memory is always going to be a battery cost. Right now, the bulk of the toolchain is Intel, and I assume this is a bigger battery hit. I also noticed my battery being zapped when trying SourceTree. Perhaps this is a temporary state thanks to the x86 emulation, but ARM's supposed battery magic seems less exciting if your eyes have wandered to the PC market as of late. PC makers are routinely advertising 15-hour batteries at lower price points than the MacBook Air and, surprisingly, at similar weights. I cannot attest to how accurate those claims are, but it is surprising, combing Costco, to see solid laptops at $800 with 512 GB NVMe SSDs and 16 GB of RAM with late-gen Intel or the newly minted AMD chipsets, all with the battery life associated with the M1, at nearly half the price of the M1 with the same RAM/storage. ARM isn't going to be the extreme battery life extension that some had hoped for, but the M1 firmly puts the Air at the top of the pack in laptops.

Yes, you can still install whatever apps you like

There's a lot of misinformation about the M1 and Apple lock boxing it. I can assure you it's not what you think it is. See the screenshot below.

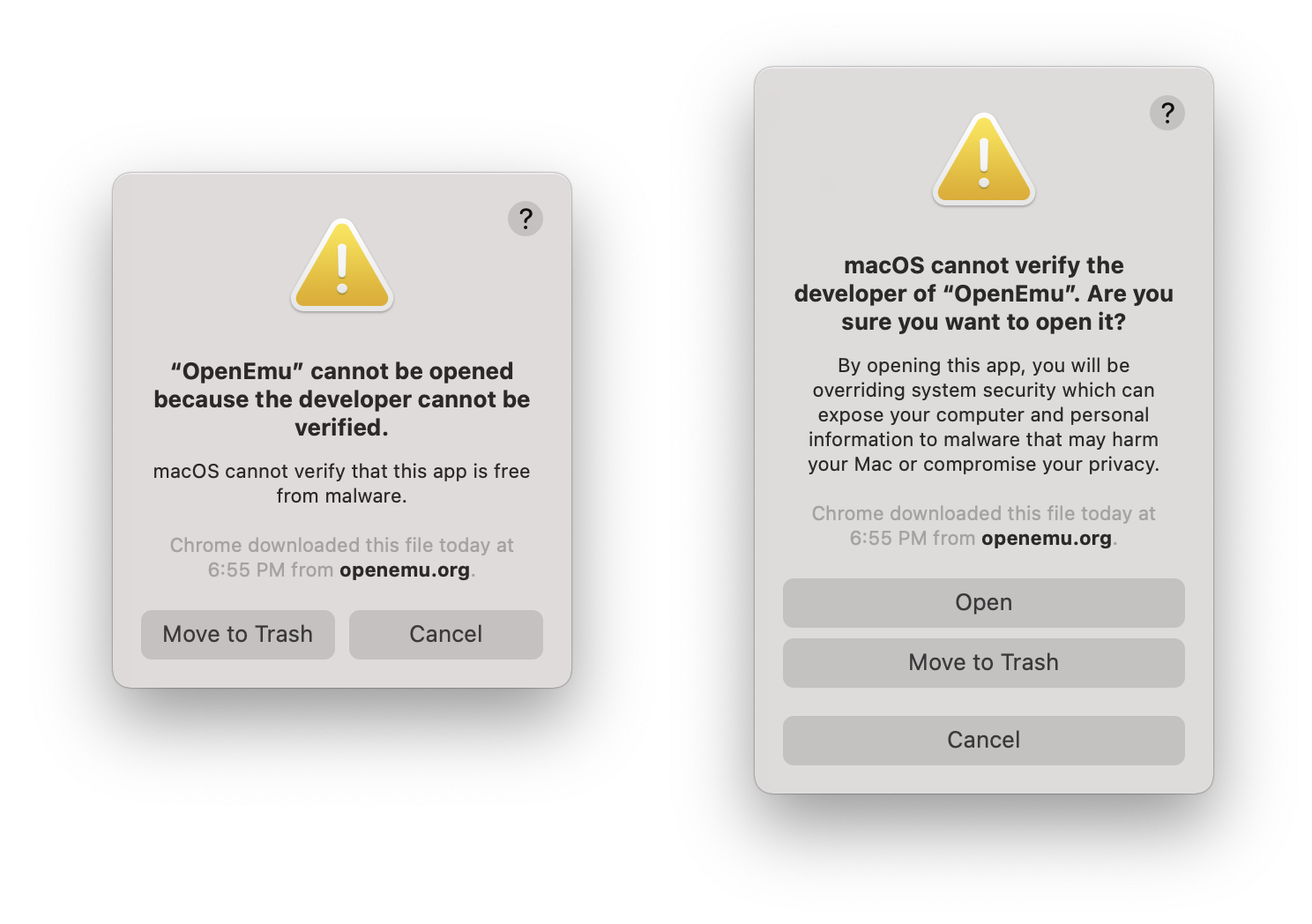

Left: the warning users will receive without right-clicking to open. Right: the warning message when bypassing via right-click/Control-click.

Not condoning it, but if you really fear the inability to run illicit software, yes, you can do that, even Apple binaries. I've also run unsigned x86 code. SourceTree and OpenEmu both gave me spooky warnings, but I was able to run them after right-clicking. The fear-mongering is just that.

So how does it compare to my Mac Pro? Mostly faster, with just a few asterisks. Even my 2017 MacBook Pro is often quicker than the Mac Pro in many tasks, since a lot of web development (JavaScript) is so single-core bound. Anything Node uses a single core per instance. The Mac Pro is still my preferred box, though, but as my first new computer purchased for myself since my 2008 Mac Pro (I've had a flurry of work 15-inch MacBook Pros: 2013, 2015, 2017), it's a damn fine computer.

iOS Applications and macOS

Running iOS apps is next to worthless. I thought it'd be nice to have a dedicated HBO Max app, but you cannot scale the app windows. When I tried to download Slack just to compare iOS vs. the shitty Electron app, I couldn't find it. It turns out it's listed as incompatible. The same went for Gmail. You need to pull the IPA off your iPhone or iPad with a utility like iMazing. Annoyingly, I do not have an iPad, so I cannot compare Slack's experience via Electron vs. native. The use cases seem pretty narrow, but perhaps hardware that's iOS-only via Bluetooth would work via the laptop. Apple's love and affection often seem too focused on the iPad Pro. It's a device that's not terribly compelling to most professionals, as everything in its workflow is a compromise, and it functions as a spiffy niche device. However, the possibility of dual-booting to macOS, or at least entering a "Finder" app when a keyboard with a trackpad is plugged in, means Apple could, in theory, make the iPad Pro a Mac. I'd be very surprised if Apple isn't playing with this idea. To date, I haven't seen this intuitive leap made by anyone other than me, but I cannot be the only one. I can also see the other side of the coin, where Apple fears cannibalizing the near-non-existent pro app market on the iPad.

What about Windows?

Windows does have an ARM build, with a Rosetta-like translation layer that currently only supports 32-bit x86 binaries (not 64-bit). That will certainly change, as Microsoft has the technical chops. Apple's official policy has been "Really up to Microsoft." I highly recommend reading the linked article. Thus far, there isn't a way to run Windows natively, but you can run Windows virtualized via QEMU. The performance is impressive.

I can't say if Redmond will move to make Windows on ARM run on Apple Silicon, but Apple doesn't seem like it'll be adversarial. There's still a question of whether Windows will include proper drivers for the ML engine and GPU (or whether Apple would be game to at least provide support), but it seems promising. Fingers crossed, we'll see a return to dual-booting Macs or at least ultra-high-quality offerings from either VMware or Parallels.

Final Thoughts

For most people, this is the laptop you want unless you're a professional. I would not have bought this as my primary work computer due to the software hurdles of being an early adopter and some of the hardware limitations (lack of video out beyond a single display, and only 16 GB of RAM). I don't regret it, as I was heavily considering buying a MacBook Air for travel last year when we could do such things, but we're gonna see some monstrous CPU performance when the M1X (or M2) hits the shelves in the 16-inch MacBook Pro. That said, there's a bit of an asterisk. It's unclear if we'll see nearly the same year-over-year gains once Apple ratchets up its core count. Right now, we're seeing the whiplash of a new architecture. Apple's year-over-year performance gains on the iPhone have been breakneck, so there's no reason to assume the Macs won't be the same. I expect Apple to be the leader in portable performance, but desktops? Not really. AMD has Zen 3 due out in 2021 with bold claims of a 50% improvement in performance per watt, and then in early 2022, Zen 4, again boasting another 50% improvement in performance per watt on a 5nm die, with PCIe 5.0 bringing an x16 PCIe slot to 64 GB/s (512 Gbps). AMD already has, today in 2020, 64-core (128-thread) Epyc CPUs. Brute force has its merits too.
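The PCIe figure is easy to sanity-check. Below is a small sketch of the arithmetic, assuming PCIe 5.0's 32 GT/s per lane and 128b/130b line encoding; the function name is just for illustration.

```python
def pcie_bandwidth(gt_per_s, lanes, encoding=(128, 130)):
    """Return (raw Gbit/s, usable GB/s) for one direction of a PCIe link.

    gt_per_s: transfer rate per lane in GT/s (32 for PCIe 5.0, 16 for 4.0)
    lanes:    link width, e.g. 16 for an x16 slot
    encoding: (payload bits, total bits) of the line code, 128b/130b here
    """
    payload_bits, total_bits = encoding
    raw_gbit = gt_per_s * lanes                      # bits on the wire
    usable_gbit = raw_gbit * payload_bits / total_bits
    return raw_gbit, usable_gbit / 8                 # 8 bits per byte


# PCIe 5.0 x16: 512 Gbit/s raw, about 63 GB/s usable per direction.
raw, usable = pcie_bandwidth(32, 16)
```

So the 512 Gbps number is the raw signaling rate, and the "64 Gigabytes" is that divided by eight; after encoding overhead it's closer to 63 GB/s in each direction, which is still double what PCIe 4.0 offers.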

If I sound a bit negative, it's because over the years I've learned that my deep-seated skepticism generally doubles as the closest thing I have to intuition. The Apple MacBook Air M1 is impressive, especially in the class of laptops without discrete GPUs. Its single-core performance is so good (as is Rosetta 2) that running x86 code on it is often faster than on Intel Macs, and native applications are even faster. macOS feels less pokey on an M1. Thanks to years of optimizations in the iOS arena for things like JavaScript, Apple Silicon is incredibly fast. In some ways, it's the fastest computer Apple makes, which is mind-boggling. In others? Not nearly as much so. Most pros should probably hold off, as too many tools are x86, or using less-stable nightly builds, or, worse, not working (audio apps are hit especially hard). Everyday users, though, will find the M1 incredibly zippy, especially if coming from several-year-old computers.

Apple's willingness to throw caution to the wind and continually kill long-term support is the gift and the curse. Windows 10 can run many Windows 98 applications in an emulation layer. Windows 10 only just stopped distributing a 32-bit version of its OS. Apple won't even support 32-bit binaries. MS's support now ranges into the decades for a considerable amount of software and hardware. In some facets, MS makes Apple look silly. The supposedly bloated Windows 10 is faster than macOS in many tasks (macOS is usually the bottom rung when compared against Windows or Linux), but it's also marred by a maddening UI that has two control panels, and it's an absurdity when it comes to *nix flows (but hey, it has Ubuntu that can run as a virtualized kernel). Meanwhile, Apple has gone 68k -> PPC -> x86 -> ARM with the Mac platform, across two OSes (as Mac OS 7-9 and OS X/macOS are effectively two separate operating systems). Apple isn't above criticism, but betting against Apple is foolish.

Due to the architecture of the current M1s, I think we'll see computers with real quirks. It's quite possible we'll see 27-inch 5K iMacs capable of editing 8K video but unable to play games maxed out even at 2560 x 1440, let alone with the latest features like ray tracing, or to connect to more than two external displays. Typical GPU tasks like codec mashing and machine learning/TensorFlow will fly on Apple Silicon, but once you leave the happy path for tasks like digital noise reduction (DNR), gaming, and 3D rendering, it will be vastly behind AMD/Nvidia. ARM doesn't implicitly mean non-modular, SoC-only, as we've already seen. For the portable class of computing, Apple Silicon looks like it'll be unmatched (and expensive). Brute force vs. efficiency will be the story of x86 versus Apple Silicon versus ARM, and I suspect there won't always be a clear winner.

Welcome to the next decade of computing.