Visual CSS Regression Testing 101 for Front End Developers

I recently went to a talk on Visual Regression Testing by Micah Godbolt, who forked Grunt PhantomCSS. Micah has been leading the charge under the banner of "Front End Architect" and is also an advocate for regression testing. I have been compiling my notes for my company, Emerge Interactive, and figured they'd make for a great blog post. Introductions aside, let's begin.

What is Visual CSS Regression Testing?

Visual CSS Regression Testing (often shortened to CSS Regression Testing or Regression Testing) is a set of automated tests that compare visual differences on websites. It's an automated game of "Spot the Differences", where your computer uses a web browser to render a page or a portion of a page and highlights all the differences it finds between two sources. Visual CSS regression testing requires a fair amount of technical know-how, so I'll try to distill this into common-folk language, but with a few assumptions: you're familiar with at least the concept of terminals/command consoles, you've heard of Grunt or Gulp, and you have an intermediate understanding of the fundamentals of front end development.

Some designers/developers may be familiar with visual difference testing using programs like Kaleidoscope, GitHub's Image Viewer or ImageMagick. These tools allow users to compare two images and use a variety of views to compare the visual differences, including A/B swiping, onion skinning, and highlighting changed areas. These tools are useful but require manual operation.

Both Kaleidoscope and GitHub can only be used on pre-existing files, meaning you cannot set up the tools to take screenshots, automatically run a visual comparison, and give it a "pass" or "fail". (Note: Kaleidoscope does have KSdiff, a CLI tool that can be used to automate some functionality.)

Enter Automated Visual CSS Testing

The obvious next step is to ask, "What if you could run automated tests?" and then assign a difference tolerance that decides whether a comparison gets a pass or fail grade. ImageMagick can already run visual comparisons from the command line. It was only a matter of time before clever developers used it to create smarter testing.
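
To make the tolerance idea concrete, here is a minimal sketch in plain JavaScript. It is not how ImageMagick or any of the tools in this post work internally; the function names, the `channelTolerance` and `maxMismatchPercent` options, and the flat RGBA array format are all assumptions made for illustration.

```javascript
// Hypothetical helpers, not the API of ImageMagick or any tool named in this post.
// Images are flat arrays of RGBA bytes, four values per pixel.
function mismatchPercentage(baseline, candidate, channelTolerance) {
  if (baseline.length !== candidate.length) {
    throw new Error("Images must have the same dimensions");
  }
  const pixelCount = baseline.length / 4;
  let changedPixels = 0;
  for (let i = 0; i < baseline.length; i += 4) {
    let changed = false;
    // A pixel counts as changed if any channel differs by more than the tolerance.
    for (let c = 0; c < 4; c++) {
      if (Math.abs(baseline[i + c] - candidate[i + c]) > channelTolerance) {
        changed = true;
      }
    }
    if (changed) changedPixels++;
  }
  return (changedPixels / pixelCount) * 100;
}

// Turns the mismatch percentage into a pass or fail grade.
function grade(baseline, candidate, options) {
  const mismatch = mismatchPercentage(baseline, candidate, options.channelTolerance);
  return { mismatch: mismatch, pass: mismatch <= options.maxMismatchPercent };
}

// Two 2x2 images; one pixel changes from white to red.
const white = [255, 255, 255, 255];
const red = [255, 0, 0, 255];
const goldMaster = [].concat(white, white, white, white);
const staging = [].concat(white, red, white, white);

const gradeResult = grade(goldMaster, staging, { channelTolerance: 8, maxMismatchPercent: 5 });
console.log(gradeResult); // { mismatch: 25, pass: false }
```

One changed pixel out of four is a 25% mismatch, which is over the 5% allowance, so the comparison fails. Loosening `maxMismatchPercent` is what lets a test shrug off small rendering noise.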

While some web services have offered automated visual difference testing, there hasn't been an easy way to roll it into a development workflow until recently.

Combined with headless web browsers (browsers without a graphical user interface, or GUI) such as PhantomJS (WebKit) and SlimerJS (Gecko), visual tests can be rolled into Grunt or Gulp tasks or even triggered on Jenkins builds. A developer can get immediate feedback on the implications of a code change.

Headless web browsers are insanely quick and efficient. Despite the name, PhantomJS is not WebKit ported to JavaScript. PhantomJS is actually platform-specific compiled native code. The "JS" in PhantomJS is the JavaScript API that allows the browser to be controlled externally. Because it runs natively and without a GUI, the browser can spin up, render the page, and capture a screenshot in much less time than a GUI browser would need.

Fun fact: In OS X, you can double-click the PhantomJS binary, and it will launch in its own terminal window. Don't expect too much! Without a GUI, you're limited to the JS console.

Comparative vs Baseline: The Philosophies of Regression Testing

There are currently two types of visual regression tests: Comparative and Baseline. Each has its own use cases, pros, and cons, and each has its own code library, Wraith and PhantomCSS respectively, firmly rooted in its philosophy.

It's important to understand that neither philosophy is the correct one, and both strategies can be used in conjunction. Also, not every project will benefit from visual regression testing, as it requires the assumption that at some point a "Correct" state has been attained and signed off as "Correct" or "Gold".

Visual regression testing is only truly useful once a "finalized" version of a page or component has been developed to be used as the reference. So, while you are actively developing, it will not assist in creating new components or pages beyond catching style changes that unintentionally affect other elements. Also, design practices that include style guides greatly benefit both philosophies. If you aren't developing style guides for sites, consider this a wake-up call. You should start developing style guides even if you do not start with visual regression tests today, as it will aid you tomorrow. Check out A List Apart's "Creating Style Guides" for more information.

Lastly, visual regression tests do take time to set up and are most likely best suited for projects that will be on a service agreement or will be updated and/or maintained. Involved tests may not have much payoff for one-off sites for short campaigns.

Visual regression tests are for:

- Maintaining agreed-upon visual standards for pages or components of a site.

- Making sure that new code pushes do not break the agreed-upon standard.

Visual regression tests are not:

- For building pages/widgets that are still in active development, other than making sure they do not break finalized pages/components.

- Ideal for one-off sites that have a short life span. Visual regression tests are not without benefit there but may not be necessary.

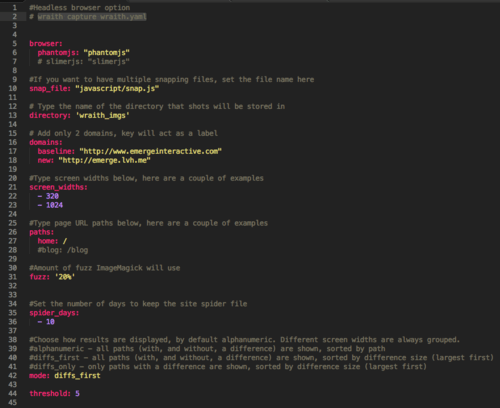

Comparative (Wraith)

Comparative visual testing requires a "correct" or "gold master" version of a website to be used as the source to test against. Comparative takes a full-screen image and highlights the changed areas. However, due to the fluid nature of the web, new images, text, and so forth will be seen as changes. It also doesn't take into account pseudo-states like hovers and offscreen menus. It breaks down further when page length changes: content such as footers gets offset, so everything below the point where the length changed will be highlighted as a change.
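
The page-length problem is easy to see in a toy model. The sketch below is an illustration only, assuming each string stands in for one horizontal band of a rendered page; `changedRows` is a hypothetical helper, not part of Wraith.

```javascript
// Hypothetical helper: compares two "pages" band by band at the same vertical position.
function changedRows(source, staging) {
  const height = Math.max(source.length, staging.length);
  const changed = [];
  for (let y = 0; y < height; y++) {
    if (source[y] !== staging[y]) changed.push(y);
  }
  return changed;
}

const sourcePage = ["header", "hero", "article", "sidebar", "footer"];
// Staging adds a single banner under the header; nothing else was touched.
const stagingPage = ["header", "banner", "hero", "article", "sidebar", "footer"];

const shiftedRows = changedRows(sourcePage, stagingPage);
console.log(shiftedRows); // rows 1 through 5 are all flagged
```

Only one band was added, yet every band below it is reported as different, because a position-by-position comparison has no notion of content having moved down.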

In short, a comparative screenshot is like taking a command+shift+3 & spacebar of a window (PrintScreen for the Windows crowd) of a website from Server A (Source) and Server B (Staging/Feature Branch) and highlighting all the changes.

This doesn't mean that comparative tests are not useful. A comparative test is quick to set up and could easily be pointed at a style guide and run as a daily automated test to make sure code pushes haven't affected the style guide's base styles at various breakpoints. Because the content of a style guide rarely changes, the test can easily be run as pass or fail.
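
As a rough sketch of what such a daily run has to cover, the snippet below pairs every style guide path with every breakpoint for both servers. It is not Wraith's configuration format; `planComparisons`, the example.com domains, the paths, and the widths are all made up for illustration.

```javascript
// Hypothetical planner: one screenshot pair per path and breakpoint.
function planComparisons(domains, paths, widths) {
  const jobs = [];
  paths.forEach(function (path) {
    widths.forEach(function (width) {
      jobs.push({
        width: width,
        source: domains.source + path,   // Server A (Source)
        staging: domains.staging + path  // Server B (Staging/Feature Branch)
      });
    });
  });
  return jobs;
}

const comparisonJobs = planComparisons(
  { source: "https://www.example.com", staging: "https://staging.example.com" },
  ["/styleguide/", "/styleguide/forms/"],
  [320, 768, 1280]
);
console.log(comparisonJobs.length); // 6
```

Two paths at three widths already mean six screenshot pairs, which is why a small, stable target like a style guide is a comfortable place to start.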

Pros

- Fast

- Easy setup (Uses YAML)

Cons

- Cannot "ignore" content areas

- Not very useful on pages that have changing areas

- Tests will report any areas affected by vertical positioning as "different” below an offending area.

- Does not test for pseudo-states and UI interactions

Baseline (PhantomCSS)

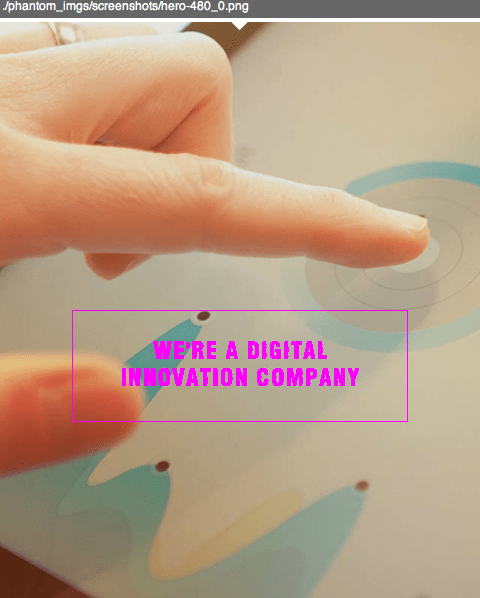

Baseline tests are quite a bit different from comparative tests. Baseline testing targets individual elements, such as UI elements, and can be scripted using JS and jQuery to trigger UI interactions.

Baseline uses a "Gold Master" or signed-off screenshot as its basis and compares new screenshots against this image. Tests can be defined in a host of ways, from screen sizes to states, each run individually. It's best to think of comparative vs baseline as Command-Shift-3 vs Command-Shift-4 (the entire page vs a selected area).
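
The baseline workflow can be sketched as a small in-memory model. This is not PhantomCSS's API; `createBaselineStore`, `testKey`, and the string "screenshots" are hypothetical stand-ins, and a real setup would store PNG files and use a proper image comparison.

```javascript
// Hypothetical in-memory store; a real setup would keep PNG files on disk or in Git.
function createBaselineStore() {
  const goldMasters = new Map();
  return {
    // First run records the baseline; later runs are compared against it.
    check: function (key, screenshot, compare) {
      if (!goldMasters.has(key)) {
        goldMasters.set(key, screenshot);
        return "baseline-created";
      }
      return compare(goldMasters.get(key), screenshot) ? "pass" : "fail";
    },
    // Signing off on an intentional change replaces the gold master.
    approve: function (key, screenshot) {
      goldMasters.set(key, screenshot);
    }
  };
}

// One test per component, state, and screen width.
function testKey(component, state, width) {
  return component + "--" + state + "--" + width;
}

const baselineStore = createBaselineStore();
const sameImage = function (a, b) { return a === b; }; // stand-in for a real image comparison
const navKey = testKey("main-nav", "hover", 320);

const firstRun = baselineStore.check(navKey, "nav-pixels-v1", sameImage);
const secondRun = baselineStore.check(navKey, "nav-pixels-v1", sameImage);
const thirdRun = baselineStore.check(navKey, "nav-pixels-v2", sameImage);
console.log(firstRun, secondRun, thirdRun); // baseline-created pass fail
```

Keying each gold master by component, state, and width is what makes the approach granular: a failing hover state at 320 pixels says exactly where to look.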

Due to the modular nature of Baseline testing, tests can be written with a host of patterns, often pairing up with Grunt to perform batch tests of modules on pages.

However, due to the more precise nature of the baseline, it requires a much larger setup and is much more complex.

Pros

- Granular

- Works with a wide variety of workflows

- Can capture UI interactions

Cons

- Tests take much more time to set up

- Initial setup is confusing (PhantomJS 2.0 currently isn't officially supported by Casper, which requires hacking)

- Slower to test

- UI interactions must be manually coded

It's all about workflows

Since visual regression tests can be baked into Grunt / Gulp tasks and Jenkins builds, deciding on usage patterns is key. In a completely componentized site, every component could keep its "Gold master" PNG and the JS for its test alongside it in the Git repository, and the Grunt task could pull in the list of tests for each component. Jenkins builds could be automated to run Wraith against a style guide and report if the test fails, with links to the offending screenshots.

PhantomCSS can also be used with live products/websites. PhantomCSS tests can use jQuery to write in filler content to avoid PhantomCSS reporting differences on constantly changing elements. These are simply a few hypothetical use cases, and there are plenty more.
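
Here is a minimal sketch of the filler-content idea. It uses the standard DOM calls `querySelectorAll` and `textContent` rather than jQuery, and it is not taken from PhantomCSS; `fillDynamicContent`, `fakeDocument`, and the selectors are hypothetical. In a real test, `root` would be the page's `document`.

```javascript
// Hypothetical helper: overwrites the text of volatile elements with fixed
// filler so two screenshots of a live page stay comparable.
function fillDynamicContent(root, fillers) {
  let replaced = 0;
  Object.keys(fillers).forEach(function (selector) {
    // Array.prototype.forEach.call works on NodeLists and plain arrays alike.
    Array.prototype.forEach.call(root.querySelectorAll(selector), function (element) {
      element.textContent = fillers[selector];
      replaced++;
    });
  });
  return replaced;
}

// Minimal stand-in for `document` so the sketch also runs outside a browser.
function fakeDocument(elementsBySelector) {
  return {
    querySelectorAll: function (selector) {
      return elementsBySelector[selector] || [];
    }
  };
}

const headline = { textContent: "Breaking: something new every hour" };
const timestamp = { textContent: "3 minutes ago" };
const livePage = fakeDocument({ ".headline": [headline], ".timestamp": [timestamp] });

const replacedCount = fillDynamicContent(livePage, {
  ".headline": "Filler headline",
  ".timestamp": "Filler time",
  ".not-on-page": "unused"
});
console.log(replacedCount, headline.textContent, timestamp.textContent);
```

After the call, both volatile elements hold the same text on every run, so any difference the screenshot comparison reports comes from styling rather than from fresh content.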

Technologies involved

I've already mentioned most of the technologies that visual regression testing requires in order to work.

Both Wraith and PhantomCSS use headless web browsers like PhantomJS / SlimerJS to render the pages and capture the screenshots. Wraith uses ImageMagick to compare the screenshots and render the differences, while PhantomCSS relies on Resemble.js for its comparisons and on another library, CasperJS, to interact with PhantomJS.

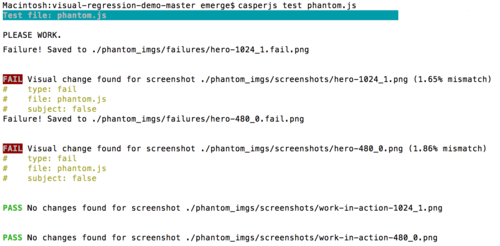

Installing everything takes time. Currently, Brew installs PhantomJS 2.0 by default, which isn't compatible out of the box with CasperJS and PhantomCSS. I wasted a significant amount of time trying to get 2.0 to work. I was able to get PhantomCSS to run with PhantomJS 2.0, but it hung after taking screenshots.

After a lot of trial and error, I followed the recommendation of Kevin Vanzandberghe: I uninstalled PhantomJS 2.0 and downloaded version 1.9.8 from PhantomJS's website. Only then was I able to get PhantomCSS to work.

More to come

As of writing this, I've managed to get both utilities up and running. I created dummy branches of my company's website to use as a playground and purposely broke tests as a proof of concept. I've yet to fully integrate these into any real-world projects, but I will without question.

My plan is to write a blog post about how to install Wraith and PhantomCSS on a new computer, as I'll inevitably be installing both libraries on my Mac Pro. Update: Step-by-step guide on how to install PhantomCSS.

I'll be revisiting visual testing in future posts and covering how I integrate it into my workflow.

I hope all this is useful as a primer for visual regression testing.